NVIDIA’s Q2 FY26 earnings call underscored once again that the company is not just a GPU vendor but an AI infrastructure company supporting the buildout of AI Factories. With revenue hitting $46.7B for the quarter and data center sales accelerating, the product portfolio is expanding so quickly that even seasoned observers struggle to keep the names straight. To help make sense of the landscape, we’ve compiled a cheat sheet mapping NVIDIA’s sprawling platforms, where they sit in the roadmap, and how much revenue they’re driving.

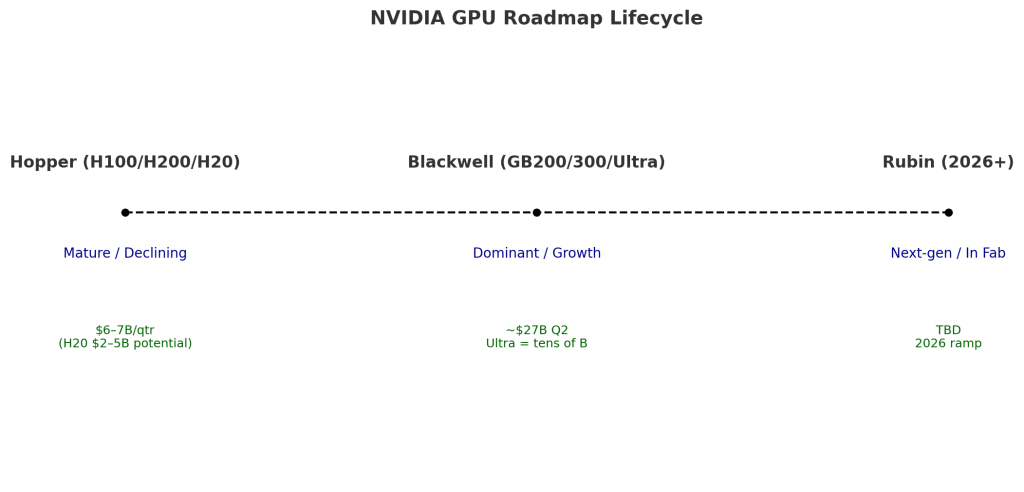

At a high level, our research indicates NVIDIA is orchestrating a three-layered stack comprising: 1) compute (Hopper, Blackwell, Rubin); 2) networking (NVLink, InfiniBand, Spectrum-X); and 3) full-stack systems (RTX PRO, Jetson, Omniverse). Each generation is released on an annual cadence, accelerating traditional Moore’s Law progressions, maximizing perf-per-watt improvements and directly converting those gains into customer revenue.

Blackwell: The New Engine of Growth

The Blackwell architecture has become the benchmark for training, inference and reasoning workloads. According to NVIDIA, the GB200 and newly ramping GB300 racks are shipping at scale, with hyperscalers and model builders such as OpenAI, Meta, and Mistral already deploying them for large model training and inference. NVIDIA stated in its earnings call that Blackwell Ultra generated “tens of billions” in Q2 revenue, and analysts estimate the broader Blackwell family contributed roughly $27B of the $46.7B top line.

Key to the architecture is NVLink 72, which allows entire racks to behave as unified computers. According to NVIDIA, performance per watt is an order of magnitude higher than Hopper’s, and the company is pushing NVFP4 precision to drive 50x efficiency in inference. Note: NVFP4 is a specialized 4-bit floating-point format designed for the Blackwell GPU architecture to accelerate AI inference and training. Its primary purpose is to dramatically reduce memory footprint and boost performance while maintaining a high level of accuracy in large language models.

Hopper: Still Relevant, but Aging

While Hopper is now a legacy platform, it remains in demand. The H100 and H200 are sold out according to the company, and the H20 – built for restricted markets like China – delivered $650M in Q2 revenue, with the potential for $2B–$5B more in Q3 if licensing to China proceeds (although China has indicated reticence to take these older products). NVIDIA’s ability to monetize Hopper alongside Blackwell highlights the breadth of workloads that still run on CUDA-optimized systems.

Rubin: The Next Step Up

Rubin is already in the fab according to NVIDIA and on track for 2026 volume. It consists of six chips: the Vera CPU, Rubin GPU, CX9 SuperNIC, NVLink 144 scale-up switch, Spectrum-X Ethernet, and a silicon photonics processor. This will be NVIDIA’s third-generation NVLink rack-scale supercomputer. Management remains tight-lipped about performance but teased “a whole bunch of new ideas” to be unveiled at GTC.

Networking: The Hidden Growth Engine

Networking is increasingly as critical as compute, as we recently explained in this Special Breaking Analysis. In Q2, NVIDIA posted $7.3B in networking revenue, up 98% year-on-year. Spectrum-X Ethernet is now running at a more than $10B annualized clip, InfiniBand (despite claims of its demise) nearly doubled sequentially, and the newly announced Spectrum-XGS aims to connect multiple AI factories into giga-scale super factories.
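To put the networking figures above in context, the short sketch below backs out the implied year-ago revenue from the reported 98% growth and converts the Spectrum-X annualized run rate into a quarterly floor. The helper name `implied_prior` is ours, and dividing the "more than $10B" annualized figure evenly by four is a simplifying assumption, not a disclosed number.

```python
def implied_prior(current_b: float, yoy_growth_pct: float) -> float:
    """Back out the year-ago figure from a current value and its YoY growth."""
    return current_b / (1 + yoy_growth_pct / 100)


# Figures quoted in the article, in billions of USD.
networking_q2_b = 7.3
networking_yoy_pct = 98

# Implied networking revenue in the year-ago quarter.
prior_b = implied_prior(networking_q2_b, networking_yoy_pct)

# Assumption: an annualized run rate is spread evenly over four quarters.
# The article says "more than $10B", so this is a lower bound.
spectrum_x_quarterly_floor_b = 10.0 / 4
spectrum_x_share = spectrum_x_quarterly_floor_b / networking_q2_b

print(f"Implied year-ago networking revenue: ${prior_b:.2f}B")
print(f"Spectrum-X quarterly floor: ${spectrum_x_quarterly_floor_b:.1f}B "
      f"({spectrum_x_share:.0%} of Q2 networking revenue)")
```

Under these assumptions, networking revenue a year ago would have been roughly $3.7B, and Spectrum-X alone would account for at least about a third of the current quarter’s networking revenue.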

NVLink remains the scale-up linchpin, enabling memory bandwidth amplification essential for reasoning AI. Together, these interconnects turn racks of GPUs into unified AI factories — a capability no competitor has matched.

Enterprise and Edge: RTX PRO, Workstations, and Jetson

NVIDIA’s clear intent is to extend beyond hyperscale into enterprise and edge AI (e.g. factories and robotics). RTX PRO servers, air-cooled PCIe systems for enterprise IT environments, are now in full production and adopted by ~90 firms according to NVIDIA. The company cited Hitachi, Lilly, and Disney as examples. Analysts expect this line to scale into the multibillion-dollar range.

On the robotics front, Jetson Thor is in-market, delivering what the company claims is an order-of-magnitude boost over Orin, NVIDIA’s previous embedded System-on-Chip (SoC). Adoption appears to be broad, spanning Amazon Robotics, Agility Robotics, Boston Dynamics, Caterpillar, and Meta. Moreover, NVIDIA’s Omniverse with Cosmos supports its digital twin strategy in partnership with Siemens and leading robotics firms.

Consumer and Vertical Markets

Gaming revenue, the company’s legacy, hit $4.3B (+49% YoY), powered by GeForce RTX 50-series GPUs and the expansion of GeForce NOW. Professional visualization reached $601M, while automotive jumped to $586M, driven by shipments of Drive Thor.

Sovereign AI and the Macro Play

Beyond individual products, sovereign AI emerged as a major theme this quarter. NVIDIA is working with the EU on a 20B euro initiative to build 20 AI factories, including five gigafactories. In the UK, Isambard-AI was unveiled as the nation’s most powerful AI supercomputer. Sovereign AI revenue is projected to exceed $20B this year, doubling YoY.

Quantifying the Product Revenue Contribution
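As a rough way to put the figures quoted throughout this article on a common footing, the sketch below expresses each one as a share of the $46.7B quarterly total. It uses only numbers cited above; the Blackwell figure is an analyst estimate, the categories may overlap (for example, rack-scale systems include networking content), and they are not expected to sum to the total. The variable and function names are ours.

```python
TOTAL_Q2_REVENUE_B = 46.7  # total quarterly revenue, in billions of USD

# Figures quoted in this article, in billions of USD.
reported_b = {
    "Blackwell family (analyst estimate)": 27.0,
    "Networking": 7.3,
    "Gaming": 4.3,
    "H20 (Hopper, restricted markets)": 0.65,
    "Professional visualization": 0.601,
    "Automotive": 0.586,
}


def share_of_total(amount_b: float, total_b: float = TOTAL_Q2_REVENUE_B) -> float:
    """Return amount_b as a percentage of total_b."""
    return 100 * amount_b / total_b


shares = {name: share_of_total(value) for name, value in reported_b.items()}

# Print the lines from largest to smallest share.
for name, pct in sorted(shares.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:<38} ${reported_b[name]:>6.2f}B  {pct:5.1f}%")
```

On these inputs, the estimated Blackwell contribution works out to a little under 58% of the quarter, with networking near 16% and gaming near 9%.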

Bottom Line

NVIDIA’s portfolio is sprawling, but the strategy is highly coherent. With an annual GPU cadence, integrated networking, full-stack systems, and a software moat, we believe the company is well-positioned through the decade. Blackwell is setting records, Rubin is waiting in the wings, and enterprise AI, sovereign AI, and robotics represent the next frontier. In our view, NVIDIA has successfully evolved from a chipmaker for gaming into the default supplier of AI factories, a position that, if sustained, is consistent with the $3–4 trillion AI infrastructure buildout Jensen Huang predicts by decade’s end. Of note, this figure meaningfully exceeds our recent $2.8 trillion cumulative AI data center forecast for 2025–2030. We intend to revisit our assumptions based on these new claims and will report back.