AI Adoption Grows, But Production Remains the Bottleneck

As of 2025, roughly 42% of enterprises report actively deploying AI, yet many remain stuck in pilot or experimental phases.

In this episode of AppDevANGLE, I spoke with Keerti Melkote, CEO of Anyscale, about why AI adoption is accelerating but production readiness continues to lag. The conversation focused on the emergence of AI-native infrastructure, the challenges of scaling AI workloads efficiently, and why the next competitive advantage will come from operationalizing AI, not just building it.

Our discussion explored how AI is driving a new compute stack, why GPU utilization and cost efficiency are becoming critical, and how open, unified infrastructure models are shaping the next phase of enterprise AI.

AI Represents a New Generational Shift in Infrastructure

AI is not just another workload; it represents a fundamental architectural shift, similar to previous transitions like the internet and cloud computing.

Melkote framed this as a generational evolution: “If you look at the internet… there was a stack… the LAMP stack… then came the cloud… built around microservices and containers… AI is a similar generational shift and it will drive its own compute stack.”

This shift is driven by the nature of AI workloads themselves. Unlike traditional cloud-native applications, AI systems require:

- Fine-grained resource orchestration

- Accelerator-aware infrastructure (GPUs, specialized hardware)

- Dynamic scaling across training, inference, and agent workflows

As a result, enterprises can no longer rely solely on cloud-native architectures designed for microservices. AI demands a new foundation.

The Real Challenge Is Not Building AI; It’s Running It

While most organizations can now prototype AI systems, scaling them into production remains the primary challenge. “Now as AI goes into production… the investments become real… the ROI becomes real,” Melkote explained. The problem is not model capability; it is operational complexity.

AI workloads are fragmented across multiple domains:

- Data processing pipelines

- Model training and fine-tuning

- Inference systems

- Agentic workflows

Each of these often runs on separate infrastructure stacks, creating silos that slow development and increase cost. “The world of AI is very siloed… and organizations are not able to move as fast as they want to move,” Melkote said.

This fragmentation introduces two major constraints: slower iteration velocity and higher infrastructure costs.

GPU Utilization Becomes the New Efficiency Battleground

As AI adoption grows, GPU usage is becoming one of the most critical (and expensive) factors in enterprise infrastructure. “Siloed nature fundamentally leads you to disconnected pools of resources… and those disconnected pools are only active when that silo is active,” Melkote noted.

This leads to significant inefficiencies:

- GPUs sitting idle between training cycles

- Underutilized inference resources during off-peak periods

- Dedicated infrastructure that cannot be shared across workloads

The result is rising costs and reduced ROI from AI investments. The solution is not simply adding more infrastructure; it is optimizing what already exists through shared, unified resource models.
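To make the utilization argument concrete, here is a minimal sketch of the arithmetic, not a measurement from any real deployment. The hourly GPU demand figures and the names `Workload`, `siloed_capacity`, `shared_capacity`, and `utilization` are illustrative assumptions. It compares sizing each silo for its own peak with sizing one shared pool for the combined peak.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Workload:
    name: str
    hourly_demand: List[int]  # GPUs needed in each hour (hypothetical numbers)


def siloed_capacity(workloads: List[Workload]) -> int:
    """Each silo is provisioned for its own peak demand."""
    return sum(max(w.hourly_demand) for w in workloads)


def shared_capacity(workloads: List[Workload]) -> int:
    """One shared pool is provisioned for the peak of the combined demand."""
    hours = len(workloads[0].hourly_demand)
    return max(sum(w.hourly_demand[h] for w in workloads) for h in range(hours))


def utilization(workloads: List[Workload], capacity: int) -> float:
    """GPU-hours actually used divided by GPU-hours provisioned."""
    hours = len(workloads[0].hourly_demand)
    used = sum(sum(w.hourly_demand) for w in workloads)
    return used / (capacity * hours)


# Assumed demand: training is busy early, inference peaks later.
training = Workload("training", [8, 8, 0, 0])
inference = Workload("inference", [2, 2, 6, 6])
workloads = [training, inference]

siloed = siloed_capacity(workloads)   # 8 + 6 = 14 GPUs
shared = shared_capacity(workloads)   # max(10, 10, 6, 6) = 10 GPUs

print(f"siloed: {siloed} GPUs, {utilization(workloads, siloed):.0%} utilized")
print(f"shared: {shared} GPUs, {utilization(workloads, shared):.0%} utilized")
```

With these assumed numbers, the siloed layout needs 14 GPUs at about 57% utilization, while the shared pool serves the same demand with 10 GPUs at 80%. The gap comes entirely from the peaks not coinciding; if both workloads peaked in the same hour, sharing would save nothing.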

Unifying AI Workloads Unlocks Speed and Cost Efficiency

To address these inefficiencies, enterprises need to move toward unified infrastructure that can dynamically allocate resources across workloads. “How do you interplay and mix GPUs across training and inference? You can only do that when you have something unifying between the two,” Melkote explained.

This approach enables:

- Better GPU utilization across different workloads

- Faster iteration cycles for developers

- Reduced infrastructure waste and energy consumption

It also reflects a broader shift toward treating AI infrastructure as a shared, dynamic system rather than isolated environments.
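As a rough illustration of what dynamic allocation across training and inference could look like, the sketch below splits one GPU pool between the two on each scheduling tick. The policy (inference is served first, with a small floor reserved for training) and the function name `allocate` are assumptions made for this example; the interview does not describe a specific scheduling algorithm, and this is not how any particular product works.

```python
from typing import Dict


def allocate(total_gpus: int, inference_demand: int, training_demand: int,
             min_training: int = 0) -> Dict[str, int]:
    """Split one shared GPU pool between inference and training.

    Assumed policy: latency-sensitive inference is served first, but up to
    `min_training` GPUs stay reserved so training jobs are never fully starved.
    """
    # Reserve only what training can actually use.
    reserved = min(min_training, training_demand, total_gpus)
    inference = max(min(inference_demand, total_gpus - reserved), 0)
    # Training takes whatever inference left over, up to its demand.
    training = min(training_demand, total_gpus - inference)
    idle = total_gpus - inference - training
    return {"inference": inference, "training": training, "idle": idle}


# Off-peak: inference needs little, so training absorbs the spare GPUs.
print(allocate(total_gpus=10, inference_demand=2, training_demand=8))
# Peak traffic: inference grows, training shrinks to its reserved floor.
print(allocate(total_gpus=10, inference_demand=12, training_demand=8, min_training=2))
```

In a siloed setup, the off-peak case would leave inference GPUs idle while training waits for capacity. A real scheduler would also have to handle preemption, checkpointing, and the differences between accelerator types, all of which this sketch ignores.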

Open Source and Ecosystem Collaboration Drive Innovation

Another key theme is the role of openness in accelerating AI adoption. “Being open is the fastest way to move… it allows you to not feel locked into a single vendor architecture,” Melkote said.

Open source plays a critical role by:

- Enabling collaboration across the ecosystem

- Reducing vendor lock-in

- Accelerating innovation through community contributions

Melkote pointed to what he described as an emerging AI-native stack, one that brings together technologies such as PyTorch, Ray, and Kubernetes, as an example of how the ecosystem is evolving. This mirrors previous industry shifts, where open ecosystems set the pace of innovation.

2026 Will Be Defined by Custom Enterprise Models

Looking ahead, the next phase of AI adoption will move beyond using general-purpose models toward building custom, enterprise-specific models. “I see 2026 emerging as the year of custom models,” Melkote said.

This shift is driven by a simple reality:

- Enterprise data is unique

- General models are not trained on proprietary data

- Differentiation requires customization

Enterprises will increasingly:

- Fine-tune open models

- Build domain-specific AI systems

- Develop models tailored to their own business processes

This marks a transition from consuming AI to owning and shaping it.

Analyst Take

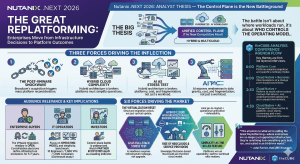

AI is forcing a fundamental rethink of infrastructure. The industry has spent years optimizing cloud-native architectures for microservices, containers, and stateless workloads. AI breaks those assumptions. It introduces new requirements around compute, orchestration, and efficiency, particularly in how GPUs and accelerators are used.

What emerges is a new model:

- AI infrastructure must be unified, not siloed

- Compute must be dynamic, not statically allocated

- Efficiency is measured by utilization, not capacity

- Open ecosystems will outpace proprietary stacks

The most important shift is this: AI is no longer just a model problem; it is an infrastructure problem. Organizations that focus only on building models will struggle to scale. Those that invest in unified, efficient, and AI-native infrastructure will be the ones that turn experimentation into production and production into competitive advantage.

The next phase of AI adoption will not be defined by who has access to models, but by who can run them efficiently, reliably, and at scale.