A Four-Act Architectural Framework for Trusted Enterprise Digital Labor

Next Gen AI Agents Research Summary—While 45% of enterprise leaders are actively planning to deliver next-generation AI agents, the vast majority of deployments remain limited to isolated conversational pilots that fail to deliver scalable business value. This operational stagnation is driven by “The Chatbot Trap”—a strategic misstep where organizations mistake conversational fluency for true enterprise cognition and accountable digital labor. Enterprises must pivot away from model selection and focus on full-stack architectural maturity to enable the next gen of AI agents. This requires a definitive, Four-Act Layered Architecture that systematically binds foundational LLMs with deterministic Knowledge Graphs, adaptive Contextual Spaces, and Persistent Agent Memory.


Executive Brief

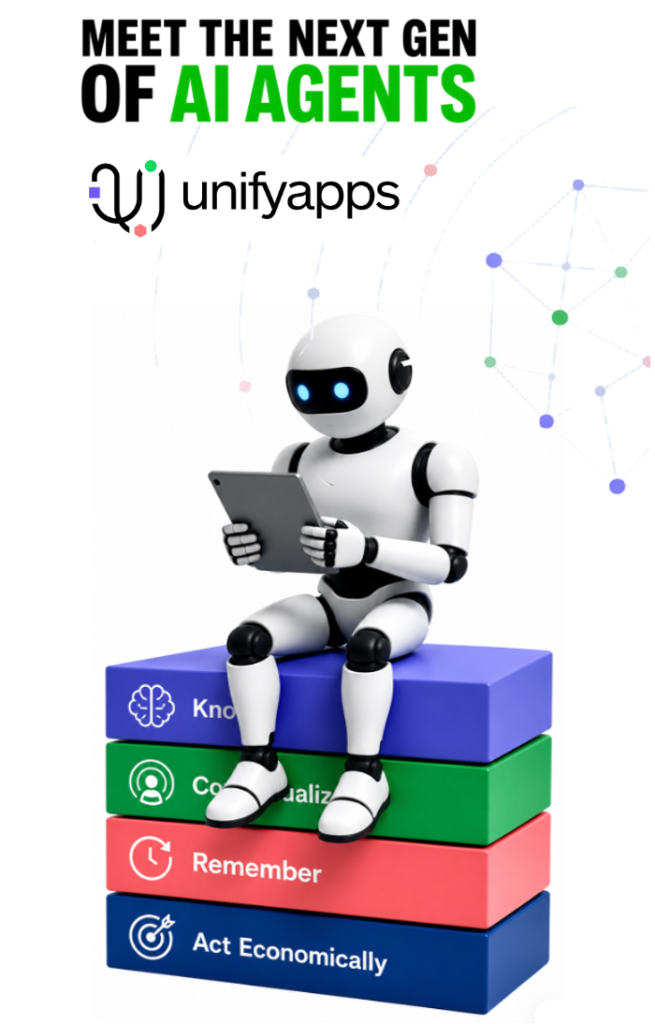

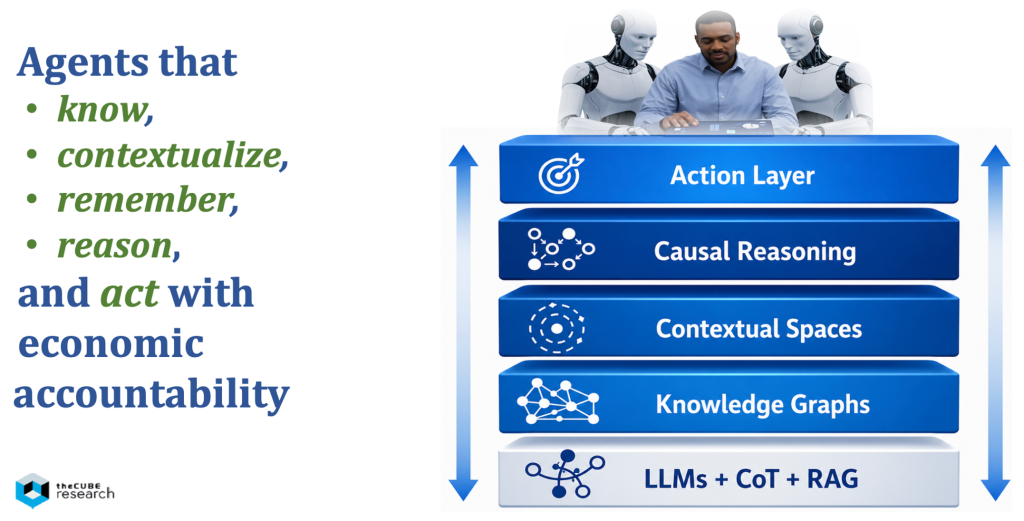

The enterprise AI market is at an architectural inflection point. The first wave, centered on LLM fluency and answer generation, is giving way to AI agents that know, contextualize, remember, reason, and act with economic accountability. This paper explores the emerging architectural progression from LLMs to the full-stack, layered architectures required to deliver trusted digital labor at enterprise scale. In that context, we highlight why UnifyApps represents a leading case study in what is rapidly emerging as the next frontier of enterprise AI.

Key Takeaways:

- The Chatbot Era is ending: The brief argues that enterprise AI is moving beyond assistants and copilots toward workflow-embedded digital workers capable of accountable knowledge work.

- A four-act architecture defines next-generation enterprise AI: Competitive advantage will increasingly come from systematically layering fluency, knowledge, context, and memory into an integrated, economically sustainable platform for digital labor powered by the next gen of AI agents.

- Knowledge and Context are the true differentiators: The paper emphasizes that Knowledge Graphs provide structural truth, while Contextual Spaces create situational awareness, transforming AI from generalized prediction into domain-specific operational intelligence.

- Persistent memory closes the architecture gap: UnifyApps’ framing of synthesized memory positions AI agents to evolve from stateless assistants into continuously learning digital coworkers, preserving enterprise continuity across workflows and time, creating a compounding intelligence effect.

- AI FinOps is a strategic necessity: As AI moves into execution, token intelligence, governance, and measurable ROI become critical. Enterprises must govern AI not only technically, but economically.

The deeper implication is clear: model access will commoditize, but architectural maturity will not. The winners in enterprise AI will be those who build systems that move beyond talking, toward AI that knows, contextualizes, remembers, reasons, and acts: the next gen of AI agents. UnifyApps is setting the pace with an emerging architectural blueprint for that future, one that promises to deliver trusted, economically disciplined digital labor rather than the diminishing value of conversational assistants.

Overcoming the Chatbot Trap

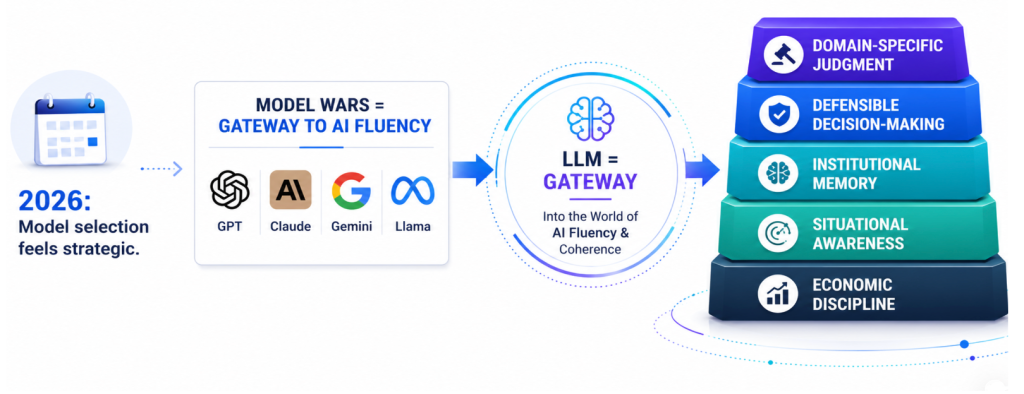

The enterprise market is currently experiencing what may eventually be remembered as the “Browser Wars” phase of AI, an early yet strategically incomplete period in which enterprises entered AI through the LLM front door and often mistook model selection for a transformation strategy. Much like the 1990s fixation on Netscape versus Internet Explorer, today’s enterprise AI conversations are disproportionately centered on GPT, Claude, Gemini, and Llama, as though choosing the best model alone will determine competitive advantage.

But history suggests this is the wrong layer to obsess over. LLMs, like browsers, will ultimately become gateways, not differentiators. The winners will be those who build architectures, workflow systems, and scalable business models around them, specific to their unique domains, knowledge, and operational context.

Enterprise AI is now following a similar trajectory. Large language models (LLMs) are extraordinary probabilistic engines, but they are increasingly proving insufficient as standalone enterprise systems. They generate language through statistical prediction, but production-grade agentic AI requires more than linguistic fluency.

This is the first major enterprise lesson of the generative AI era. For most, it is now becoming painfully clear: LLM-only architectures fail at production scale. McKinsey research suggests generic LLMs alone can produce dangerously high error rates when operating without domain grounding, governance, or contextual constraints, especially in highly regulated, domain-specific use cases. The issue is not that LLMs lack value. It is that language prediction alone is not enterprise cognition.

The enterprise architecture of agency demands an array of new attributes:

- domain-specific judgment

- defensible decision-making

- institutional memory

- situational awareness

- economic discipline

This is the core barrier between AI that converses and AI that works. Without these foundational layers, enterprises are not deploying agentic AI; they are deploying probabilistic chatbots that merely talk and automate repetitive tasks.

The winners, however, will rapidly evolve toward AI agents capable of executing knowledge work: diagnosing, planning, governing, and making decisions. This is the real strategic frontier because knowledge work depends on knowledge and contextual reasoning. It requires AI systems that understand not just what information exists, but why it matters under specific business conditions, rules, consequences, and standards of economic and legal trustworthiness.

This is what we define as The Chatbot Trap: organizations confuse coherent conversation with enterprise-ready digital labor. They deploy assistants, copilots, or conversational wrappers and assume fluency equals transformation. The result is experimentation without scalable operational redesign and stagnant ROI. Escaping the chatbot trap requires a focus on enabling the next gen of AI agents.

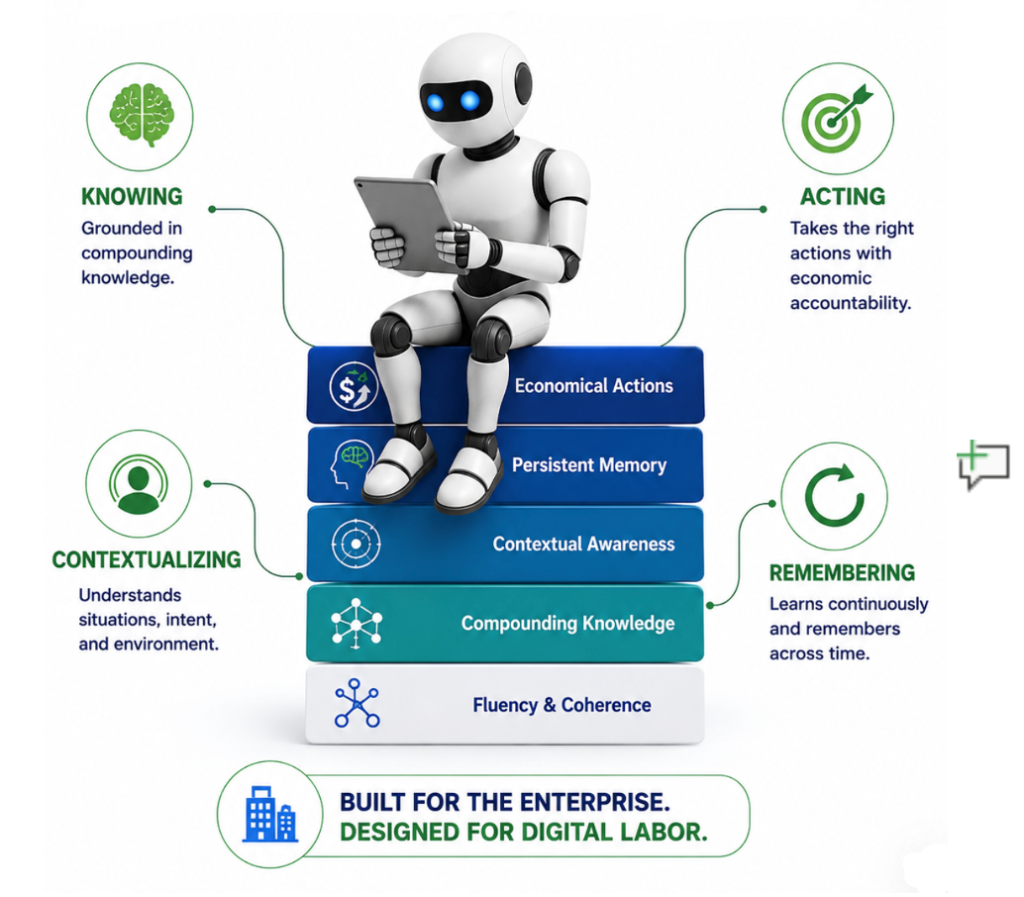

Meet the Next Gen of Agentic Architectures

Enterprise AI is now entering its second major architectural transition designed to enable the next generation of AI agents. The first era was defined by conversational fluency: organizations adopted LLMs, Chain-of-Thought prompting, and Retrieval-Augmented Generation (RAG) to create smarter chatbots, copilots, and assistants. This was an essential first step, but it also exposed a hard truth about the enterprise: language generation alone does not create production-grade digital labor. It creates gateways to intelligence, not accountable systems of work.

Enterprises must now evolve through a four-act architectural progression, with each layer building the structural foundations for AI agents capable of executing trusted knowledge work and delivering higher ROI.

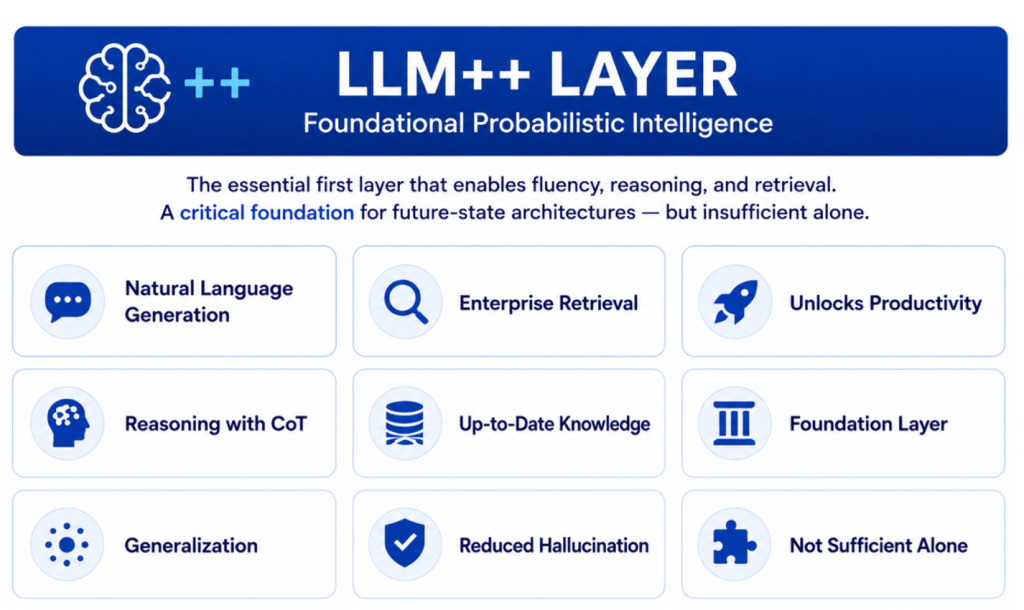

ACT 1: LLM++ | From Fluency to Foundational Utility

The first wave of enterprise AI centered on probabilistic intelligence, and this foundational layer remains critically important. LLMs, enhanced by Chain-of-Thought (CoT) and Retrieval-Augmented Generation (RAG), gave organizations unprecedented capabilities to generate language, summarize information, retrieve content, and improve productivity on tasks. This LLM++ stack represents a vital first step because it dramatically improves fluency, retrieval grounding, and accessibility to enterprise knowledge. For many organizations, establishing this layer is now table stakes. It is the gateway to modern AI capability and a necessary foundation for everything that follows.

However, while essential, it is insufficient on its own. LLM++ architectures create stronger language systems, but they do not yet create enterprise-grade cognition. LLMs alone are exceptional at prediction, but prediction is not judgment. Even when augmented by CoT and RAG, they can retrieve, synthesize, and communicate, but they do not inherently understand enterprise structure, institutional logic, policy boundaries, or business consequences. In practical terms, this first act gives enterprises AI that can talk better, search better, and assist better, but not AI that can reliably reason within operational realities.

This distinction is crucial because many organizations risk mistaking foundational capability for future-state architecture. LLM++ is not the destination. It is the launchpad. Enterprises must absolutely build this layer well, but they must also recognize that scalable digital labor requires additional architectural layers beyond probabilistic intelligence. Without those next layers, organizations may improve productivity, but they will struggle to create trusted, context-aware, domain-specific agents capable of accountable knowledge work at scale.

ACT 2: Knowledge Graph | Creating Enterprise Cognition

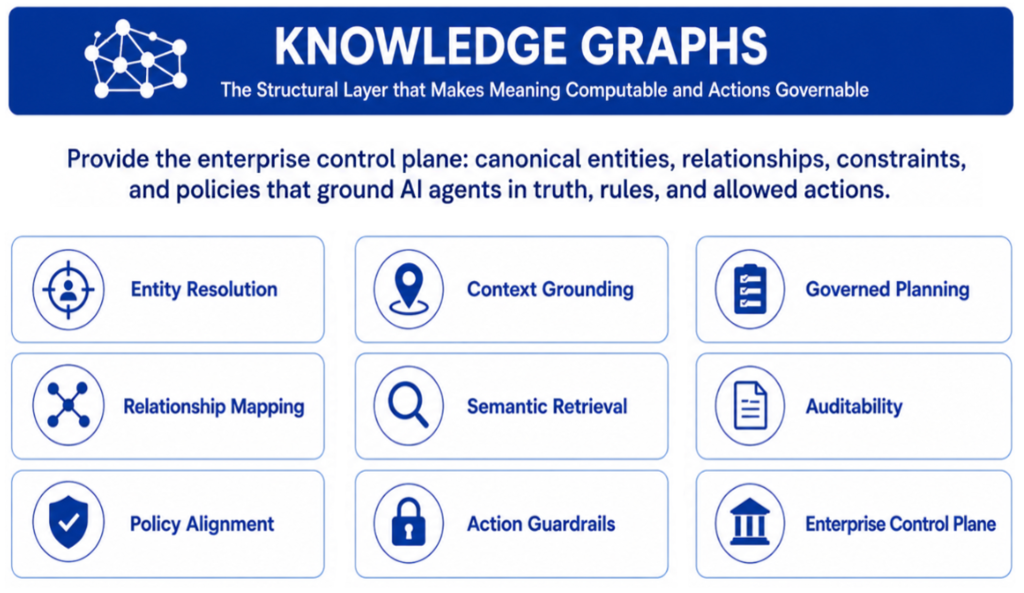

The next layer is structural intelligence. Knowledge graphs provide the deterministic substrate that LLMs inherently lack by making enterprise meaning computable, governable, and operational. They transform fragmented data into a structured enterprise control plane by organizing canonical entities, relationships, constraints, business definitions, policies, and permissible tool pathways into a system of shared understanding.

This is where the next generation of AI agents begins to evolve from generalized language prediction toward grounded cognition and governed execution. Knowledge graphs establish the structural layer that enables AI agents to resolve what something is, understand how it connects to everything else, retrieve relevant meaning, and act safely within boundaries.

Extending LLMs with knowledge graphs helps AI agents answer the foundational questions required for knowledge-based digital labor use cases, such as:

- What is this? (entity resolution)

- How is it connected? (relationship mapping)

- What context matters? (semantic retrieval)

- What rules and constraints? (applied policy)

- Who can do what? (permissions)

- What actions are allowed? (guardrails)

- Can decisions be traced? (auditability)

In practical terms, knowledge graphs serve as the enterprise’s semantic and governance backbone. They provide semantic coherence across systems, align business definitions across silos, ground agents in truth, and enforce enterprise control through policy-aware planning. This dramatically improves precision, context grounding, compliance, traceability, and safe execution, transforming AI agents from probabilistic assistants into more reliable digital workers.
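The questions listed above can be illustrated with a minimal sketch. It assumes a toy in-memory graph; the class `KnowledgeGraph`, its method names, and the invoice and supplier identifiers are all hypothetical and are not drawn from any vendor's product. It shows entity resolution, relationship mapping, a permission guardrail, and an audit trail in a few lines.

```python
from dataclasses import dataclass, field


@dataclass
class KnowledgeGraph:
    """Toy in-memory graph (hypothetical): typed entities, labeled edges, role permissions."""
    entities: dict = field(default_factory=dict)     # entity id -> entity type
    edges: set = field(default_factory=set)          # (subject, relation, object) triples
    permissions: dict = field(default_factory=dict)  # role -> set of allowed actions
    audit_log: list = field(default_factory=list)    # (role, action, target, allowed)

    def add_entity(self, entity_id, entity_type):
        self.entities[entity_id] = entity_type

    def relate(self, subject, relation, obj):
        self.edges.add((subject, relation, obj))

    def neighbors(self, entity_id):
        """Relationship mapping: outgoing edges of an entity, sorted for stable output."""
        return sorted((r, o) for s, r, o in self.edges if s == entity_id)

    def allow(self, role, action):
        self.permissions.setdefault(role, set()).add(action)

    def authorize(self, role, action, target):
        """Guardrail check; every decision is appended to the audit log."""
        allowed = target in self.entities and action in self.permissions.get(role, set())
        self.audit_log.append((role, action, target, allowed))
        return allowed


kg = KnowledgeGraph()
kg.add_entity("invoice-1001", "Invoice")
kg.add_entity("supplier-acme", "Supplier")
kg.relate("invoice-1001", "issued_by", "supplier-acme")
kg.allow("ap_agent", "approve_invoice")

print(kg.entities["invoice-1001"])                                   # what is this?
print(kg.neighbors("invoice-1001"))                                  # how is it connected?
print(kg.authorize("ap_agent", "approve_invoice", "invoice-1001"))   # action in policy
print(kg.authorize("ap_agent", "delete_supplier", "supplier-acme"))  # action outside policy
print(kg.audit_log)                                                  # can decisions be traced?
```

A production knowledge graph would typically sit in a graph database with a governed ontology; the point of the sketch is only that identity, relationships, permissions, and traceability are explicit data rather than model predictions.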

Just as importantly, knowledge graphs serve as a foundational orchestration layer for agentic workflows, enabling agents, tools, and systems to operate from a shared understanding of entities, goals, permissions, and process dependencies. This shared semantic control plane allows multiple agents to coordinate actions, exchange context, sequence workflows, and execute within governed boundaries, enabling scalable, interoperable digital labor across increasingly complex enterprise environments.

However, knowledge graphs alone primarily define structural truth, control, and guardrails. They excel at making facts, relationships, and allowable actions explicit, but they remain largely static in determining situational priority. They can tell an AI what is true, what is connected, and what is permissible, but not always what is most important now. In other words, knowledge graphs establish meaning and governance, but additional layers are required to dynamically prioritize relevance, consequences, and adaptive decision-making in changing environments.

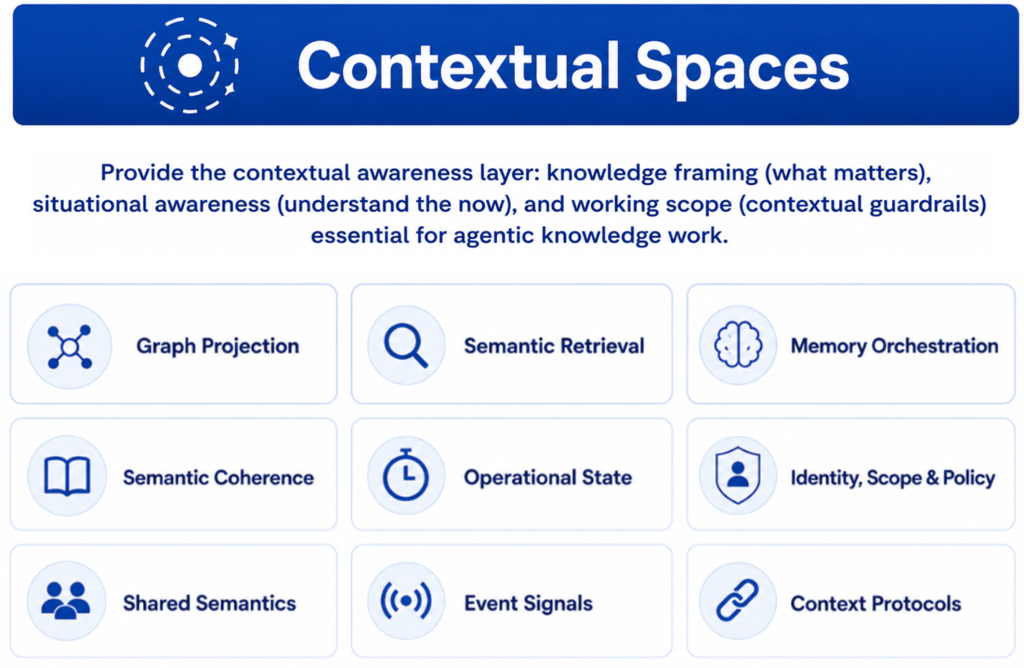

ACT 3: Contextual Spaces | Transforming Knowledge into Situational Intelligence

The implementation of what we call “Contextual Spaces” is where enterprise agency architectures become significantly more powerful. Contextual spaces serve as the situational and adaptive intelligence layer between knowledge graphs and more advanced reasoning systems. If knowledge graphs define the enterprise, contextual spaces interpret the enterprise in motion. They do not simply retrieve facts; they construct dynamic, role-aware operational environments that continuously shape agents’ perception, prioritization, and action.

Contextual spaces dynamically frame what matters in specific moments by combining graph projection, semantic retrieval, operational state, event streams, memory orchestration, identity, temporal awareness, and role-based policy into an active decisioning fabric. Drawing on the emerging construction of contextual space architectures, this layer functions more like a continuously updated cognitive workspace than a search layer, selectively assembling the right knowledge, constraints, workflows, and experiential memory for the task at hand. It transforms static enterprise knowledge into domain-specific situational awareness by creating bounded, purpose-built “working contexts” that adapt as business conditions, objectives, and surrounding signals change.

This layer answers more advanced enterprise questions:

- What matters now?

- What is the current operational state?

- What context is relevant to this role?

- What should be prioritized in this state?

- What constraints/risks must shape action?

- How should context evolve as goals, events, risks, and roles change?

In effect, contextual spaces convert enterprise knowledge into operational intelligence. They create dynamic scopes for agents, enabling them to reason and act within identity, policy, memory, collaboration, and contextual boundaries while maintaining continuity across sessions, workflows, and environments. This is a profound leap because knowledge work is rarely about knowing everything. It is about constructing the right bounded awareness for a specific moment and continuously recalibrating it as conditions evolve.
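One way to picture a bounded "working context" is as a filter over enterprise facts by role, task, and freshness. The sketch below assumes that simplification; the `Fact` type, the `build_context` function, the logical timestamps, and the sample orders are hypothetical illustrations, not a description of any specific platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fact:
    subject: str
    statement: str
    visible_to: frozenset  # roles allowed to see this fact
    updated_at: int        # logical timestamp, e.g. hours since an arbitrary start


def build_context(facts, role, task_subjects, now, max_age, limit=3):
    """Keep facts that are visible to the role, about the task, and fresh; newest first."""
    candidates = [
        f for f in facts
        if role in f.visible_to
        and f.subject in task_subjects
        and now - f.updated_at <= max_age
    ]
    candidates.sort(key=lambda f: f.updated_at, reverse=True)
    return candidates[:limit]


facts = [
    Fact("order-77", "Shipment delayed at port", frozenset({"ops", "support"}), 98),
    Fact("order-77", "Margin on order is 4%", frozenset({"finance"}), 97),
    Fact("order-77", "Customer opened a ticket", frozenset({"support"}), 99),
    Fact("order-12", "Delivered on time", frozenset({"ops", "support"}), 99),
    Fact("order-77", "Original ETA confirmed", frozenset({"ops", "support"}), 10),
]

context = build_context(facts, role="support", task_subjects={"order-77"}, now=100, max_age=24)
for fact in context:
    print(fact.statement)
```

The same fact base yields a different context for an `ops` or `finance` role, which is the essence of role-aware scoping: the knowledge does not change, but what is surfaced for the moment does.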

Together, knowledge graphs and contextual spaces transform generic language systems into operationally grounded decision engines. Knowledge graphs provide structural truth. Contextual spaces provide adaptive relevance. Combined, they establish the cognitive architecture required for trusted enterprise agency, where AI systems do not merely know the business but can interpret, navigate, and act within it intelligently. This is the true Architecture of Agency.

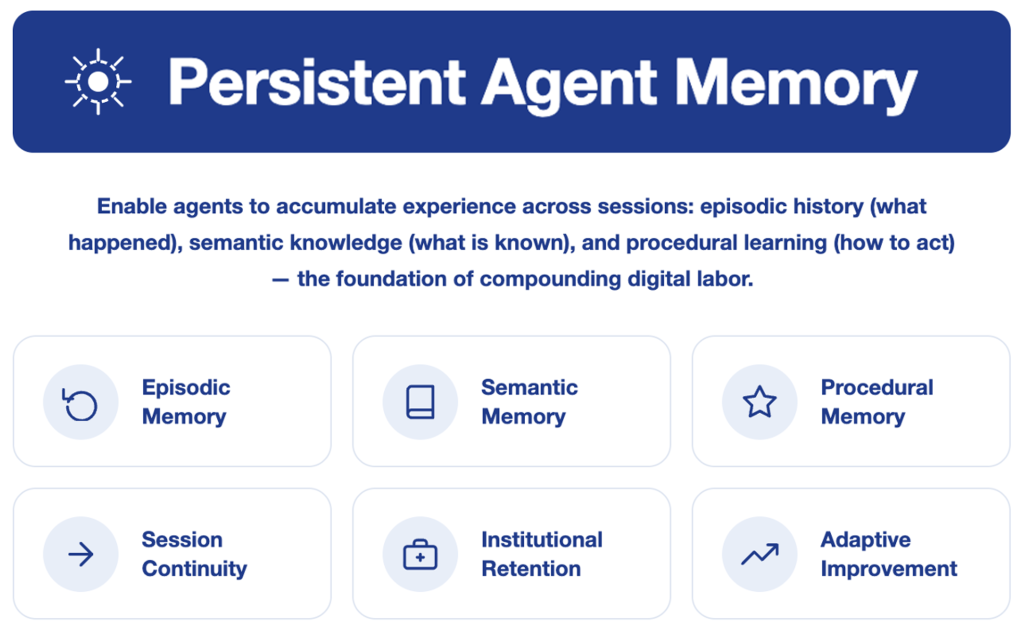

ACT 4: Persistent Agent Memory | From Episodic Assistance to Compounding Intelligence

Traditional chatbots and probabilistic LLMs are largely stateless. They are “forgetful assistants,” useful in isolated interactions but incapable of accumulating enterprise intelligence over time. This is a major barrier to scalable digital labor because real knowledge workers do not restart every day without memory. A senior analyst who joined your firm a decade ago brings institutional context, decision-making history, and relationships that compound in value. Stateless AI cannot replicate that compounding.

Persistent agent memory changes this entirely. By preserving facts, decisions, outcomes, and procedural experience in external storage that survives beyond any single session, enterprises create AI systems that continuously accumulate intelligence, maintain continuity across workflows, and improve with each interaction.

The field has converged on a three-type taxonomy that mirrors how human memory actually works. Episodic memory captures what happened — prior decisions, completed tasks, outcomes, and event sequences, so agents can reference last quarter’s context rather than treating every engagement as a first encounter. Semantic memory stores what the agent knows as standing facts: organizational structures, domain expertise, business rules, and accumulated understanding of how this specific enterprise operates. Procedural memory encodes how to do things — learned workflows, resolution patterns, escalation logic, and the tacit operational knowledge experienced employees carry but rarely document.
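The three memory types can be sketched as three stores that outlive a session. The example below is a minimal illustration that assumes a local JSON file as the external storage; the `AgentMemory` class, its method names, and the supplier data are hypothetical, and a real deployment would more likely use a database or vector store.

```python
import json
import os
import tempfile


class AgentMemory:
    """Toy persistent memory (hypothetical): three stores saved to a JSON file."""

    def __init__(self, path):
        self.path = path
        self.state = {"episodic": [], "semantic": {}, "procedural": {}}
        if os.path.exists(path):
            # A later session reloads whatever an earlier session saved.
            with open(path, encoding="utf-8") as fh:
                self.state = json.load(fh)

    def record_episode(self, entity, event, outcome):
        """Episodic: what happened and how it turned out."""
        self.state["episodic"].append({"entity": entity, "event": event, "outcome": outcome})

    def learn_fact(self, key, value):
        """Semantic: a standing fact the agent knows."""
        self.state["semantic"][key] = value

    def learn_procedure(self, name, steps):
        """Procedural: an ordered list of steps for doing something."""
        self.state["procedural"][name] = list(steps)

    def episodes_for(self, entity):
        return [e for e in self.state["episodic"] if e["entity"] == entity]

    def save(self):
        with open(self.path, "w", encoding="utf-8") as fh:
            json.dump(self.state, fh)


memory_path = os.path.join(tempfile.mkdtemp(), "agent_memory.json")

# Session 1: the agent works a case and saves what it learned.
session_one = AgentMemory(memory_path)
session_one.record_episode("supplier-acme", "late delivery dispute", "credit issued")
session_one.learn_fact("supplier-acme.payment_terms", "net 45")
session_one.learn_procedure("dispute_resolution", ["verify PO", "check receipt", "escalate"])
session_one.save()

# Session 2: a fresh object reads the same file and starts with that history.
session_two = AgentMemory(memory_path)
print(session_two.episodes_for("supplier-acme"))
print(session_two.state["semantic"]["supplier-acme.payment_terms"])
print(session_two.state["procedural"]["dispute_resolution"])
```

The design choice worth noting is that persistence lives outside the model: the second session knows about the dispute only because the state was written to storage and reloaded.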

This layer answers the questions that require agentic continuity:

- What has this agent learned from prior interactions with an entity?

- What outcomes occurred last time, and how should they inform action now?

- What institutional knowledge has accumulated about this entity?

- What procedural patterns have proven most effective?

- What decisions or constraints made previously must carry forward?

- How should agent behavior adapt based on accumulated experience?

Collectively, answering these questions delivers three compounding operational outcomes: session continuity that eliminates context loss across interactions; institutional retention that preserves enterprise knowledge independent of individual entities; and adaptive improvement that makes agents progressively more precise over time.

The strategic implication is direct. Knowledge graphs and contextual spaces give agents the intelligence to understand the enterprise environment in the context of an objective. Persistent agent memory gives them continuity to accumulate experience and expertise, and transforms agents from episodic assistants, capable in moments, into agents that deliver the compounding operational intelligence that defines genuine digital labor.

UnifyApps: Leading the Strategic Shift

The strategic shift is real, and UnifyApps is a leading example of the four-act progression in action.

The enterprise AI market is moving decisively beyond the first wave of chatbot enthusiasm into a more consequential agentic architectural era. The true progression from conversational AI to digital labor is not defined by model fluency alone, but by the systematic layering of intelligence that transforms AI from something that talks into something that works.

This is where market leadership is increasingly being defined, and where UnifyApps is emerging as a real-world innovator. In fact, it already has several customers building a new generation of AI agents capable of much more than talking: they perform actual knowledge work.

UnifyApps is building what can best be described as an AI-native operating layer for enterprises seeking to move beyond fragmented GenAI experiments into workflow-embedded, enterprise-grade digital labor. Serving global enterprises across operationally complex environments (including Lowe’s, GreyOrange, and BELCORP), the company’s differentiation lies not in simply deploying agents, but in architecting the connective tissue that makes agents enterprise-ready.

As Roland Buolos, VP of Solution Consulting at UnifyApps, put it plainly: It’s now about building AI agents that know, contextualize, remember, and do actual work.

Based on our analysis of their platform and direct conversations with UnifyApps leadership, here is how the company maps to each act of the progression described previously:

Act 1 — Fluency and Coherence:

UnifyApps is explicitly LLM-agnostic, allowing enterprises to bring their own models, select from leading public providers, or deploy custom-trained open-source models on-premise. This is the right architectural starting point, treating the LLM as a preference rather than a proprietary lock-in. Enterprises operating at Fortune 50 scale need exactly this kind of model flexibility as the LLM market continues to rapidly evolve.
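Model-agnostic design in general can be sketched as an agent that depends on a small interface rather than on a specific provider. The example below is a generic illustration only, not UnifyApps' API; `TextModel`, `EchoModel`, `UppercaseModel`, and `Agent` are hypothetical names, and the two stand-in models do no real inference.

```python
from typing import Protocol


class TextModel(Protocol):
    """Hypothetical interface: anything with a complete() method can back an agent."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in for a hosted model; it only echoes the prompt."""

    def complete(self, prompt: str) -> str:
        return f"[echo] {prompt}"


class UppercaseModel:
    """Stand-in for a self-hosted model; it only uppercases the prompt."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


class Agent:
    """The agent's logic is written once against the interface."""

    def __init__(self, model: TextModel):
        self.model = model

    def run(self, task: str) -> str:
        return self.model.complete(f"Task: {task}")


# Swapping the model requires no change to the agent itself.
for model in (EchoModel(), UppercaseModel()):
    print(Agent(model).run("summarize invoice 1001"))
```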

Act 2 — Knowledge:

The platform provides a comprehensive knowledge architecture that spans unstructured sources (documents, web content) and structured enterprise data, with support for advanced retrieval including GraphRAG and Text2SQL query transformation. This moves agents from generic language generation to enterprise-grounded reasoning, a critical capability for clients operating in complex, data-intensive environments such as supply chain, financial services, and insurance. Roland frames the imperative directly:

“These agents need to understand how to reason within our data, our knowledge, and how all of these are interconnected together.”

Act 3 — Contextual Awareness:

UnifyApps has invested meaningfully in the contextual layer, including hallucination controls, content and topic guardrails, role-based access control tied to source systems, Model Context Protocol (MCP) support, and multi-agent orchestration with master agent coordination. This maps directly to what Roland describes as the governance context:

“The number one discussion we’re having now with customers is all about governance. They’ve already built some agents. They have them in production. Now they’re realizing, how can I be able to manage all of that, monitor it, have the deep observability?”

Enterprises cannot scale digital labor without this layer, and UnifyApps is building it with production deployments already in market. This is meaningful differentiation.

Act 4 — Persistent Agent Memory: This is where UnifyApps’ architectural roadmap is most forward-leaning, and where Roland’s framing is most precise. He explicitly named all three memory types that define this act in our conversations:

“You can have factual memory to gain new knowledge as you’re doing the job. You’re going to have episodic memory to remember specific events that happen. And you’re going to have procedural memory, which is to learn new skills and understand new rules.”

Critically, UnifyApps drew the line between session-level context and true persistent memory: agents must remember not just within a single session but across sessions and processes. That distinction (stateless interaction versus compounding institutional intelligence) is exactly the architectural threshold that separates digital assistants from genuine digital labor. As UnifyApps continues to formalize persistent memory as a named architectural capability, its agents will complete their evolution from intelligent assistants to more capable, compounding digital workers.

AI Operating System for the Enterprise

What makes UnifyApps architecturally distinctive is that these four acts are not delivered as separate point solutions but integrated into a single, coherent platform they call Unify AI.

Its architecture is built in deliberate layers that mirror the four-act progression.

The platform is architected as follows:

- At the foundation sits the Universal Data Processing Backplane, a unified data substrate spanning unstructured, structured, and agentic data chassis, ensuring that every agent, workflow, and application draws from a single, governed data foundation rather than fragmented silos.

- Above that, the Universal Knowledge Context layer, built on ontology and knowledge graph, provides the enterprise intelligence fabric that grounds agents in organizational knowledge, not public internet training data. This is where GraphRAG and Text2SQL retrieval operate, translating raw enterprise data into reasoned, relational understanding that agents can actually act on.

- The AI Agent and App Builder Canvas sits above this knowledge layer, providing a composable, visual development environment where Workflow Builder, App Builder, and Agentic AI modules interoperate, each with its own full software development lifecycle, so enterprises can assemble production-grade digital workers without turning AI deployment into an engineering exercise.

What truly separates the UnifyApps AI OS from most agentic platforms, however, is what flanks the core: two universal context pillars that most competitors treat as afterthoughts.

- The Universal Governance Context, spanning model management, observability, compliance, auditing, evaluation, and governance, means that every agent operates within accountable, auditable boundaries from day one, rather than having controls bolted on after a governance crisis.

- The Universal Actionability Context connects agents directly to systems of work, systems of record, and external AI agents, enabling the cross-system orchestration that makes digital labor real rather than merely demonstrative.

Taken together, this architecture reflects something most agentic platforms have not yet built: a platform where fluency, knowledge, context, and memory are not features to be added, but structural layers to be operationalized.

In addition, UnifyApps recognizes that AI trust extends beyond model quality. AI FinOps, token intelligence, and governance are becoming foundational disciplines. In this regard, UnifyApps appears well-aligned with the direction of enterprise AI: balancing cost, control, and performance while embedding AI into real-world work powered by the next generation of AI agents.

For enterprises like Lowe’s, managing Fortune 50-scale invoice and supply chain complexity; GreyOrange, orchestrating autonomous warehouse operations; and PolicyBazaar, personalizing financial products across millions of customers, this is precisely the kind of enterprise-grade foundation that converts AI ambition into measurable digital labor at scale.

The broader implication is significant. As LLM access commoditizes, competitive advantage will shift decisively to those who best architect AI systems that know, contextualize, remember, and act. UnifyApps is positioning itself not as another AI application vendor, but as an enabling platform for next-generation enterprise agents — one that understands the full architectural stack required to make digital labor real, reliable, and economically defensible.

Simply put, this is what an architecture for the next generation of AI agents looks like. And this is precisely why innovators like UnifyApps matter: they are helping move the market beyond AI that talks, toward AI that knows, contextualizes, remembers, and ultimately works.

AnalystANGLE – Our Take

The enterprise AI market is at an architectural inflection point. The first wave, LLM-powered assistants that generate answers, is giving way to agentic systems that know, contextualize, remember, reason, and act with economic accountability. The progression from LLMs and RAG through knowledge graphs, contextual spaces, persistent agent memory, causal reasoning, and a governed action layer represents the full stack of capabilities required to deliver genuine digital labor.

For enterprise leaders, the strategic implication is direct: AI architecture decisions made today will determine competitive position for years ahead. Now is the time to establish a governed, knowledge-grounded AI foundation, before operational complexity makes the stack exponentially harder to rationalize.

For UnifyApps, three recommendations extend the platform’s leadership:

- First, formalize persistent agent memory as a named architectural capability. The foundation is already in place, with three memory types. Making this a visible platform layer with explicit cross-session and cross-agent memory management will close the gap between current capability and the full four-act architecture.

- Second, invest in causal reasoning as the next frontier differentiator. Build on contextual awareness to enable causal intelligence — understanding not just what is happening but why and which intervention will produce the best outcome. This will separate enterprise-grade platforms from commodity agent builders. Partnerships with causal AI specialists or native investment in this layer would position UnifyApps ahead of a capability curve that most competitors have not yet identified.

- Third, evolve the Action Layer into a measurable economic execution layer. UnifyApps already connects agents to systems of work and record. The next step is to instrument the action layer with outcome tracking, ROI attribution, and token-to-value accounting — turning every agent action into a defensible, auditable business event.
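The token-to-value accounting suggested in the third recommendation can be sketched with simple arithmetic. The example assumes per-1,000-token pricing and a business-supplied value estimate per action; the `AgentAction` type, the function names, the prices, and the dollar values are all hypothetical and chosen only to make the calculation easy to follow.

```python
from dataclasses import dataclass


@dataclass
class AgentAction:
    name: str
    input_tokens: int
    output_tokens: int
    value_usd: float  # business value attributed to the outcome (an assumed estimate)


def token_cost(action, input_price_per_1k, output_price_per_1k):
    """Cost of one action in USD, given hypothetical per-1,000-token prices."""
    return (
        (action.input_tokens / 1000) * input_price_per_1k
        + (action.output_tokens / 1000) * output_price_per_1k
    )


def roi(action, input_price_per_1k, output_price_per_1k):
    """Return on token spend: (value - cost) / cost."""
    cost = token_cost(action, input_price_per_1k, output_price_per_1k)
    if cost == 0:
        raise ValueError("cost is zero; ROI is undefined")
    return (action.value_usd - cost) / cost


# Hypothetical action: 2,000 input tokens and 1,000 output tokens,
# priced at $0.50 and $1.50 per 1,000 tokens, with $10 of attributed value.
action = AgentAction("match_invoice_to_po", input_tokens=2000, output_tokens=1000, value_usd=10.0)
cost = token_cost(action, input_price_per_1k=0.5, output_price_per_1k=1.5)
print(f"{action.name}: cost=${cost:.2f}, roi={roi(action, 0.5, 1.5):.1f}x")
```

With these illustrative numbers the action costs $2.50 in tokens and returns 3.0 times that spend net of cost. The hard part in practice is the value attribution, which this sketch treats as a given input.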

The enterprises that will define the next decade of competitive advantage are laying the architectural foundations now to deliver the next gen of AI agents. The vendors that keep pace in the enterprise agentic AI platform market are those that move beyond feature competition and commit to completing the full architectural stack: from LLM fluency through knowledge grounding, contextual awareness, persistent memory, causal reasoning, and governed action.

That is not a product roadmap; it is a platform mandate. UnifyApps shows what that commitment looks like in production, with real enterprise clients and a platform that reflects where this market is heading. Others will need to move quickly and with equal architectural seriousness. The window for half-stack solutions is closing. Enterprises are no longer evaluating AI platforms based on model selection alone — they are evaluating them on whether they can deliver accountable, compounding digital labor at scale.

That is the new competitive threshold, and it will separate the platforms that define this era from those that are displaced by it.

Related Research & Resources:

- Explore UnifyApps: https://www.unifyapps.com

- Dive into Digital Labor Trends: Digital Labor Transformation Index Overview

- Why Causal Reasoning: https://thecuberesearch.com/why-causal-ai-decision-intelligence-2026

- Hear from Industry Experts: Watch the next frontiers of AI
