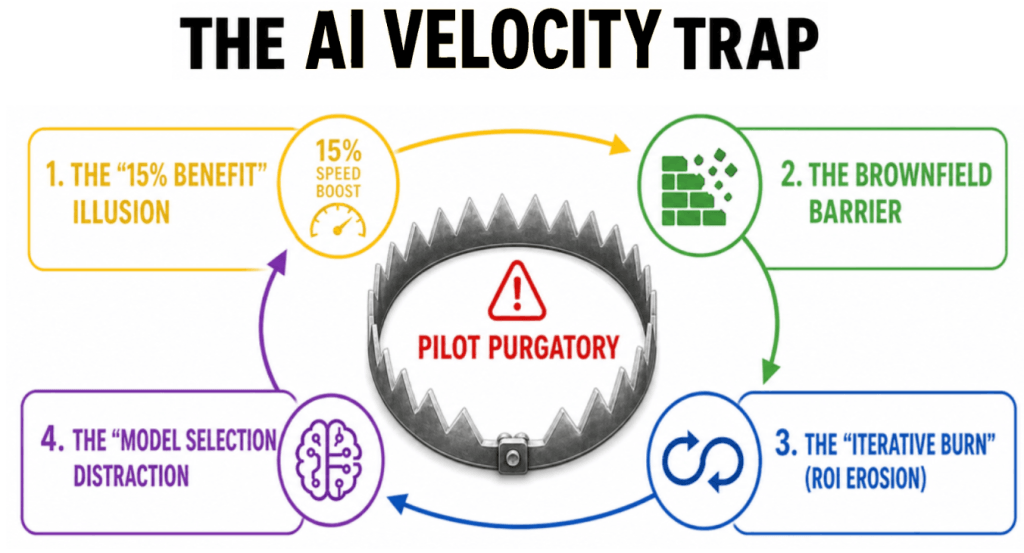

The Engineering Velocity Diagnostic: Why are 85% of enterprise AI initiatives stalling? While 57% of organizations are actively piloting agentic workflows, fewer than 15% have reached productive deployment. This stagnation is caused by the “AI Velocity Trap,” an execution crisis in which an obsession with model selection distracts from the engineering architecture needed to operationalize intelligence. To break the ROI ceiling, enterprises must pivot from model selection to Engineering Velocity, using a layered framework of unified trust, reusable brownfield connectors, and eval-first engineering.


The Paradox of AI Ambition

As of May 2026, the enterprise AI market has transitioned from a phase of “irrational exuberance” to one of “operational industrialization.” Despite historic infrastructure spending, with hyperscalers investing upwards of $675 billion this year, the promised era of transformative ROI remains locked behind a formidable execution barrier.

Recent longitudinal studies from April 2026 reveal a stark reality: while AI tool adoption among enterprise software teams has increased by 65%, actual feature throughput has risen by only 8%. A massive “Proof Gap” has emerged, with 85% of AI pilots currently delivering negligible impact on the P&L.

This indicates that the enterprise AI market has reached a critical inflection point. Despite an unprecedented “boardroom mandate” to integrate generative intelligence, transformative ROI remains elusive for the vast majority of organizations. A recent R Systems data study suggests a staggering disconnect: while approximately 55% to 57% of enterprises are actively engaged in AI pilots, fewer than 2 in 10 have successfully transitioned these experiments into governed, productive deployments.

This disconnect was the central focus of Episode #33 of The Next Frontiers of AI, featuring Nitesh Bansal, CEO of R Systems. During the discussion, Bansal identified the root cause of this stagnation: The AI Velocity Trap. The core thesis is that enterprises are not stalling due to model limitations, but because they lack the Engineering Velocity required to operationalize and trust intelligence across complex “brownfield” environments.

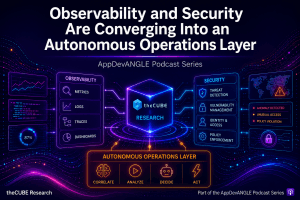

The Strategic Warning: Browser Wars

To understand why “model selection” is a distraction, we must look back to the 1990s “Browser Wars.” In that era, IT leaders believed the most critical strategic decision was to standardize on a specific web browser (Netscape Navigator vs. Internet Explorer).

History ultimately revealed that the browser was merely a gateway to the value of the internet, not the source of that value. The companies that won the e-business era were not those with the “best” browser, but those that built the most robust proprietary harnesses around the internet to deliver unique services and applications: layered architectures that turned a gateway into an engine for enterprise ROI.

In 2026, large language models (LLMs) such as GPT-4, Claude, and Gemini have become the modern equivalent of the web browser: the new gateways to the world of AI. They are powerful entry points, but they are rapidly commoditizing.

- The Commodity Trap: If two competing insurance firms use the same foundational LLM, neither gains a competitive edge. Over time, the differentiators erode and the selection becomes a simple preference, just as choosing a browser is today.

- The Architectural Differentiator: Competitive advantage in the Agentic AI era belongs to the enterprise that builds the most efficient Execution Architecture—the proprietary “layered cake” that allows them to harness these models faster and more securely than their peers, while optimizing for their data, their knowledge, their workflows, their workforce, and their customers.

The enterprise AI market is entering a more mature and revealing phase, much as the internet did during the e-business era. Over the past several years, much of the conversation has centered on foundational models, trust, governance, and the extraordinary promise of generative and agentic AI. Enterprises are investing aggressively, boards are demanding AI strategies, and executives increasingly recognize that AI may define the next era of competitive advantage. Yet beneath this momentum lies a more sobering reality: despite billions in spending and widespread strategic urgency, the vast majority of enterprise AI initiatives still fail to scale to meaningful, repeatable ROI.

Bansal’s thesis is both pragmatic and timely. Enterprises are not primarily stalling because AI models lack capability, nor because trust and governance are unimportant. Rather, they are stalling because trust, governance, and model access alone are insufficient to operationalize AI at enterprise speed.

As Bansal stated directly during the discussion,

“The problem is not the models, it’s an execution crisis.”

This framing is significant because it shifts the conversation from AI aspiration to AI operationalization. It suggests that while trust and governance remain foundational, the larger determinant of enterprise AI success may increasingly be Engineering Velocity: the ability to translate AI capability into governed, scalable business execution across real-world enterprise environments.

This perspective has substantial strategic implications. Enterprises still overly focused on “Which model?” may increasingly miss the more important question: How do we build faster, more governed, more context-rich execution architectures around models? That shift may define the next era of enterprise AI leadership.

In short, trust may get AI approved. Governance may keep it controlled. But engineering velocity may ultimately determine whether it delivers ROI.

The Diagnostic: The 15% Scaler Gap

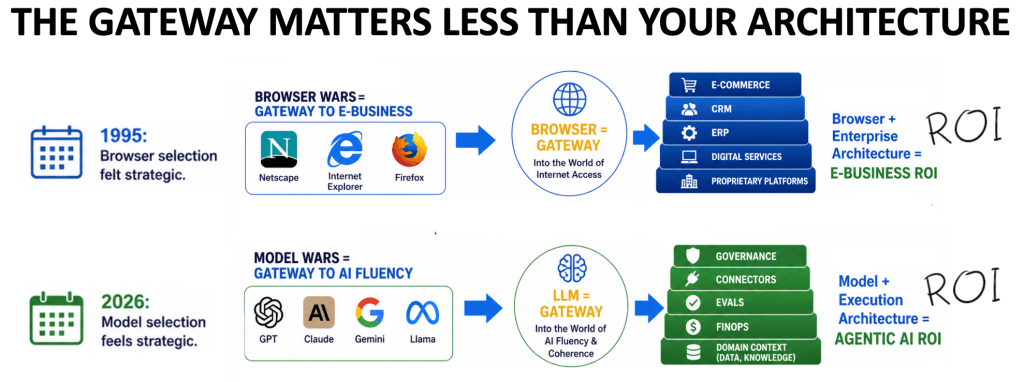

According to the “Agentic AI 2026: A Mid-Market Playbook” report commissioned by R Systems, the enterprise AI market is split into three distinct organizational personas. Understanding where your organization sits is the first step to escaping the Velocity Trap:

- Explorers (29%): Utilizing individual B2C subscriptions. They see minor productivity boosts but zero enterprise impact due to fragmented governance.

- Pilots (57%): Trapped in “Pilot Purgatory.” They run experiments in clean “greenfield” sandboxes but stall as soon as they encounter the complexities of real-world legacy data.

- Scalers (15%): The minority that has achieved task-level autonomy in governed workflows by mastering high-velocity engineering.

The “Velocity Trap” occurs when an organization moves from Explorer to Pilot mode without updating its engineering lifecycle. The time saved writing code is entirely offset by the increased need for manual review and integration, resulting in a “net-zero” ROI.
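The net-zero effect is simple arithmetic. The Python sketch below uses hypothetical hour estimates (not figures from the R Systems study) to show how halving authoring time can be fully absorbed by added review and integration work:

```python
from dataclasses import dataclass


@dataclass
class TaskTimes:
    """Hours spent on one unit of work, such as a single feature."""
    authoring: float    # writing the code
    review: float       # manually reviewing the output
    integration: float  # wiring it into existing (brownfield) systems

    def total(self) -> float:
        return self.authoring + self.review + self.integration


def net_hours_saved(baseline: TaskTimes, with_ai: TaskTimes) -> float:
    """Positive means AI saved time overall; zero or negative signals the Velocity Trap."""
    return baseline.total() - with_ai.total()


# Hypothetical estimates: AI halves authoring time but adds review and integration work.
baseline = TaskTimes(authoring=10.0, review=2.0, integration=4.0)
with_ai = TaskTimes(authoring=5.0, review=5.0, integration=6.0)

print(net_hours_saved(baseline, with_ai))  # 0.0: the authoring gain is fully absorbed
```

Measuring only the authoring column would report a 50% gain; measuring the whole unit of work shows none.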

The AI Velocity Trap: False Promise of ROI

Perhaps the most compelling element of Bansal’s framework is his explanation of why enterprises fall into the trap in the first place.

The trap begins with legitimate gains. Teams often adopt public AI tools and quickly realize 10–20% productivity improvements. This creates optimism and can reinforce the belief that broader transformation is near. But these early gains can be deceptive.

In many cases, these tools operate effectively in isolated or lightweight contexts, but enterprises rarely operate in greenfield environments. They function within brownfield realities: legacy systems, fragmented architectures, regulatory obligations, security requirements, and operational dependencies.

Bansal emphasized this point clearly when he noted that while frontier model providers may promise extraordinary productivity gains, those gains are often most achievable in greenfield scenarios. Enterprise environments, by contrast, require AI to function inside deeply interconnected operational systems.

This is where the illusion breaks down. Without the infrastructure to integrate AI into those systems, enterprises often experience diminishing returns. Tool fatigue replaces optimism. Governance frameworks take time to mature. Security concerns multiply. Token consumption and cost structures become problematic. Prompt engineering becomes iterative and resource-intensive. ROI begins eroding before true scale is reached.

The organization then concludes AI may be overhyped, when in reality, the larger issue was never the model itself. It was the lack of execution architecture around the model.

This is the essence of the AI Velocity Trap: confusing model capability with enterprise readiness.

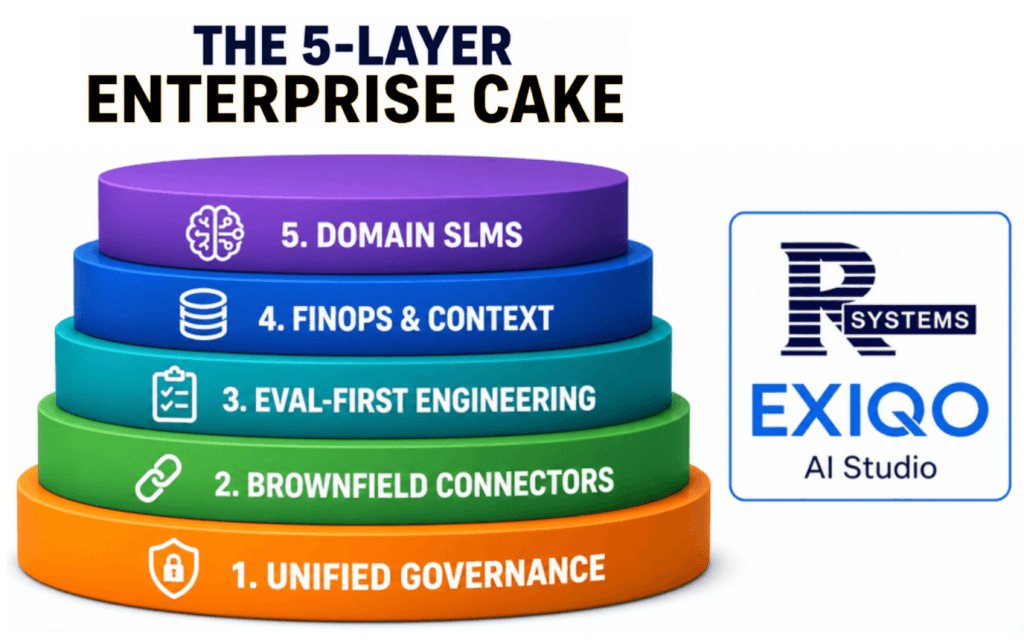

Architectural Blueprint: The Five-Layer “Cake”

To operationalize this philosophy, R Systems introduced what Bansal calls its Five-Layer Enterprise Cake, a framework designed to accelerate AI readiness through reusable infrastructure.

At its core, the framework recognizes that enterprises should not repeatedly rebuild foundational AI operational layers from scratch.

Layer 1: Unified Governance

Security, compliance, auditability, role-based access, and policy frameworks. AI Trust is no longer a compliance check—it is a performance metric. Scalers move from manual stewardship to Declarative Governance.
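As a rough illustration of what “declarative” can mean here, the sketch below expresses access rules as data and evaluates them in one place, with default-deny and an audit trail. The `Policy` and `GovernanceEngine` names, the role labels, and the data classes are hypothetical; this is not R Systems' implementation.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Policy:
    """Hypothetical declarative rule: who may run an agent action, on which data classes."""
    action: str
    allowed_roles: frozenset[str]
    allowed_data_classes: frozenset[str]


@dataclass
class GovernanceEngine:
    policies: dict[str, Policy]
    audit_log: list[tuple[str, str, str, bool]] = field(default_factory=list)

    def authorize(self, role: str, action: str, data_class: str) -> bool:
        policy = self.policies.get(action)
        # Default deny: an action with no declared policy is never allowed.
        allowed = (
            policy is not None
            and role in policy.allowed_roles
            and data_class in policy.allowed_data_classes
        )
        # Every decision, allowed or denied, is recorded for later audit.
        self.audit_log.append((role, action, data_class, allowed))
        return allowed


engine = GovernanceEngine(policies={
    "summarize_invoice": Policy(
        action="summarize_invoice",
        allowed_roles=frozenset({"finance_agent"}),
        allowed_data_classes=frozenset({"internal"}),
    ),
})
print(engine.authorize("finance_agent", "summarize_invoice", "internal"))  # True
print(engine.authorize("finance_agent", "summarize_invoice", "pii"))       # False
print(engine.authorize("support_agent", "refund", "internal"))             # False: no policy declared
```

Because the rules are data, changing who may do what means editing a policy entry rather than touching agent code, and the audit log answers “why was this allowed?” after the fact.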

Layer 2: Brownfield Connectors

Integration into legacy systems, enterprise tools, and existing operational environments. 95% of enterprise value is trapped in legacy environments—20-year-old databases and monolithic ERPs. Scalers use a library of reusable connectors that enable AI agents to instantly “plug and play” with legacy infrastructure, eliminating the need for slow custom integration code.
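One way to picture a reusable connector is a single interface that agents call, with per-system adapters hiding legacy schemas behind canonical field names. The sketch below assumes a `Connector` protocol, a `LegacySqlConnector` adapter, and a `register` helper, all hypothetical, and uses an in-memory SQLite table to stand in for a legacy database.

```python
import sqlite3
from typing import Any, Protocol


class Connector(Protocol):
    """Hypothetical uniform interface every brownfield connector exposes to agents."""
    def fetch(self, entity: str, key: str) -> dict[str, Any]: ...


class LegacySqlConnector:
    """Adapts a legacy SQL schema (cryptic table/column names) to canonical field names."""

    def __init__(self, conn: sqlite3.Connection,
                 mapping: dict[str, tuple[str, str, dict[str, str]]]):
        # mapping: entity -> (legacy_table, legacy_key_column, {legacy_column: canonical_field})
        self.conn = conn
        self.mapping = mapping

    def fetch(self, entity: str, key: str) -> dict[str, Any]:
        table, key_col, columns = self.mapping[entity]
        cols = ", ".join(columns)
        # Identifiers come from trusted mapping config; the lookup value is parameterized.
        row = self.conn.execute(
            f"SELECT {cols} FROM {table} WHERE {key_col} = ?", (key,)
        ).fetchone()
        if row is None:
            raise KeyError(f"{entity} {key!r} not found")
        # Rename legacy columns to canonical fields (dicts preserve insertion order).
        return dict(zip(columns.values(), row))


CONNECTORS: dict[str, Connector] = {}


def register(system: str, connector: Connector) -> None:
    CONNECTORS[system] = connector


# Stand-in for a 20-year-old customer master table.
legacy = sqlite3.connect(":memory:")
legacy.execute("CREATE TABLE CUSTMAST (CUSTNO TEXT, CNAME TEXT, CRLIM REAL)")
legacy.execute("INSERT INTO CUSTMAST VALUES ('C001', 'Acme Corp', 50000.0)")

register("erp", LegacySqlConnector(legacy, {
    "customer": ("CUSTMAST", "CUSTNO",
                 {"CUSTNO": "customer_id", "CNAME": "name", "CRLIM": "credit_limit"}),
}))
print(CONNECTORS["erp"].fetch("customer", "C001"))
# {'customer_id': 'C001', 'name': 'Acme Corp', 'credit_limit': 50000.0}
```

The design choice is that the mapping is configuration, not code: pointing the same adapter at another legacy table means adding a mapping entry rather than writing new integration logic.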

Layer 3: Eval-First Engineering

Prompt libraries, evaluation harnesses, testing methodologies, and reusable agent frameworks. AI output is probabilistic, not deterministic. To solve the “Trust Gap,” Scalers replace manual verification with Agentic Evaluation. Specialized AI agents test the output of other agents against “Gold Standard” datasets, providing auditable decision-points that explain why an agent chose a specific path.
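A minimal eval-first harness might look like the sketch below. It runs an agent against a gold-standard dataset, records a rationale for every verdict, and gates release on a pass-rate threshold. The `exact_field_judge` function is a deterministic stand-in for the evaluator agent described above (in practice that judge could itself be a model); all names and the 0.95 threshold are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalResult:
    case_id: str
    passed: bool
    rationale: str  # auditable explanation of the verdict


# A judge takes (prompt, expected, actual) and returns (passed, rationale).
Judge = Callable[[str, str, str], tuple[bool, str]]


def exact_field_judge(prompt: str, expected: str, actual: str) -> tuple[bool, str]:
    """Deterministic stand-in for an evaluator agent: case-insensitive match after trimming."""
    ok = expected.strip().lower() == actual.strip().lower()
    reason = "matches gold answer" if ok else f"expected {expected!r}, got {actual!r}"
    return ok, reason


def run_evals(agent: Callable[[str], str], gold: list[tuple[str, str, str]],
              judge: Judge) -> list[EvalResult]:
    results = []
    for case_id, prompt, expected in gold:
        actual = agent(prompt)
        passed, rationale = judge(prompt, expected, actual)
        results.append(EvalResult(case_id, passed, rationale))
    return results


def release_gate(results: list[EvalResult], threshold: float = 0.95) -> bool:
    """Ship only if the pass rate on the gold set meets the threshold."""
    pass_rate = sum(r.passed for r in results) / len(results)
    return pass_rate >= threshold


gold = [
    ("c1", "Currency for invoice INV-7?", "USD"),
    ("c2", "Currency for invoice INV-9?", "EUR"),
]


def toy_agent(prompt: str) -> str:
    # Hypothetical agent under test; it gets the second case wrong.
    return "usd" if "INV-7" in prompt else "GBP"


results = run_evals(toy_agent, gold, exact_field_judge)
for r in results:
    print(r.case_id, r.passed, r.rationale)
# c1 True matches gold answer
# c2 False expected 'EUR', got 'GBP'
print(release_gate(results))  # False: pass rate 0.5 is below 0.95
```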

Layer 4: FinOps + Context Engineering

Cost control, token discipline, context optimization, and economic sustainability. Layer 4 is the economic engine of engineering velocity: scaling often fails not because of model performance but because of uncontrolled economics. This layer must also defend against stuffing attacks that artificially inflate token consumption, while shifting financial oversight from broad cloud-spend metrics to operational KPIs such as cost per thought and cost per project.
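The sketch below shows one possible shape for this layer: a ledger that prices each model call, rejects calls whose input exceeds a context cap (a blunt defense against inflated prompts), and reports cost per project and per “thought.” Treating each model call as one thought, the per-token prices, the cap, and all class names are assumptions for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical prices in USD per 1,000 tokens; real rates vary by provider and model.
PRICE_PER_1K = {"input": 0.003, "output": 0.015}


@dataclass
class Call:
    project: str
    input_tokens: int
    output_tokens: int


class TokenBudgetExceeded(Exception):
    pass


class FinOpsLedger:
    def __init__(self, max_input_tokens_per_call: int):
        # Hard cap on context size per call, rejecting prompts padded far beyond need.
        self.max_input = max_input_tokens_per_call
        self.cost_by_project: dict[str, float] = defaultdict(float)
        self.calls_by_project: dict[str, int] = defaultdict(int)

    def record(self, call: Call) -> float:
        if call.input_tokens > self.max_input:
            raise TokenBudgetExceeded(
                f"{call.project}: {call.input_tokens} input tokens > cap {self.max_input}"
            )
        cost = (call.input_tokens / 1000) * PRICE_PER_1K["input"] + (
            call.output_tokens / 1000
        ) * PRICE_PER_1K["output"]
        self.cost_by_project[call.project] += cost
        self.calls_by_project[call.project] += 1
        return cost

    def cost_per_thought(self, project: str) -> float:
        """Average cost of one model call (treated here as one "thought") in a project."""
        calls = self.calls_by_project.get(project, 0)
        return self.cost_by_project.get(project, 0.0) / calls if calls else 0.0


ledger = FinOpsLedger(max_input_tokens_per_call=8000)
ledger.record(Call("invoice-agent", input_tokens=2000, output_tokens=500))
ledger.record(Call("invoice-agent", input_tokens=4000, output_tokens=1000))
print(round(ledger.cost_by_project["invoice-agent"], 4))  # 0.0405
print(round(ledger.cost_per_thought("invoice-agent"), 5))  # 0.02025
```

Tracking spend per project and per call, rather than as one cloud bill, is what makes a runaway agent visible before it erodes ROI.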

Layer 5: Domain-Specific SLMs

Industry, enterprise, and organizational context embedded into AI systems. While general reasoning stays on frontier models, the Execution Layer is moving toward Domain SLMs. These are faster, cheaper, and fine-tuned on an organization’s proprietary knowledge, providing a layer of “Private Intelligence” that competitors cannot replicate.
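A simple way to express the split between frontier models and domain SLMs is a router. The sketch below is hypothetical: the task names, the `needs_open_reasoning` flag, and the model labels are placeholders rather than any specific product's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str
    reason: str


# Hypothetical routine, domain-bound task types handled by a fine-tuned domain SLM.
SLM_TASKS = {"classify_claim", "extract_policy_fields", "map_ledger_code"}


def route(task_type: str, needs_open_reasoning: bool) -> Route:
    """Send routine domain tasks to the domain SLM; escalate open-ended reasoning."""
    if task_type in SLM_TASKS and not needs_open_reasoning:
        return Route("domain-slm", "routine domain task: cheaper, faster, private knowledge")
    return Route("frontier-llm", "general or open-ended reasoning required")


print(route("extract_policy_fields", False).model)  # domain-slm
print(route("extract_policy_fields", True).model)   # frontier-llm
print(route("draft_strategy_memo", False).model)    # frontier-llm
```

Keeping the routing rule explicit makes the cost and privacy trade-off reviewable, and lets more task types move to the SLM as it is validated.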

This layered model reframes AI not as a tool-deployment exercise but as an enterprise systems architecture challenge. Its strategic importance lies in reuse. By reusing governance layers, connectors, evaluation systems, and domain frameworks, organizations can materially accelerate implementation speed while reducing the friction that often destroys ROI.

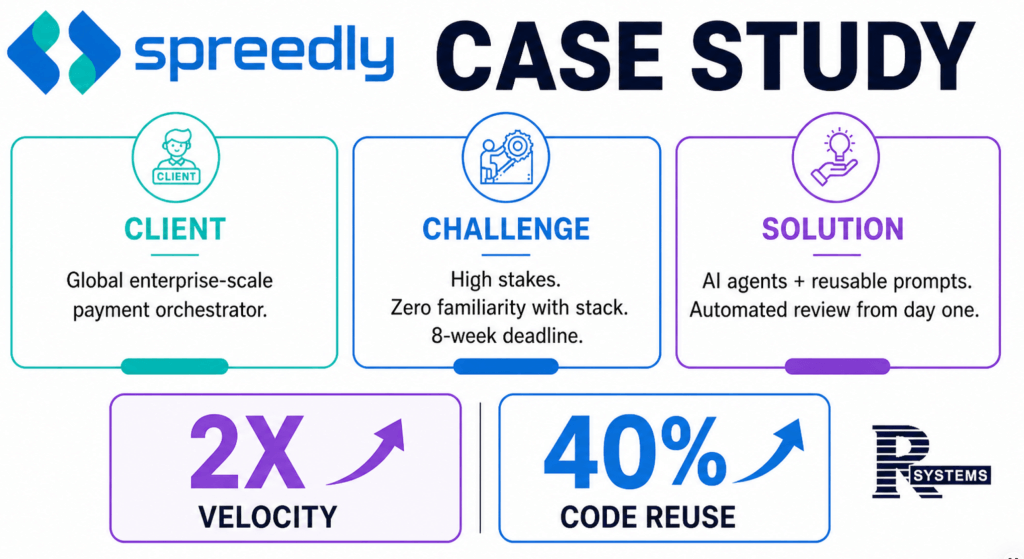

Empirical Proof: Elevated ROI at Spreedly

To validate this framework, we analyzed the results of a recent initiative for Spreedly, a global payment orchestration platform, which faced a significant modernization challenge: migrating 45 complex operational reports from proprietary systems to an open-source dashboard architecture.

Under conventional development assumptions, the roadmap was estimated at roughly 16 months, reflecting the scale, complexity, and integration burden of the project.

R Systems compressed that timeline to approximately 9 weeks by applying an AI-first execution architecture built around preconfigured governance frameworks, reusable brownfield connectors, agent templates, eval-first engineering, and disciplined human oversight.

The outcome was not simply faster delivery. It was a structural reduction in execution drag.

The project reportedly achieved:

- 2x Engineering Velocity: Doubling the speed of the software development lifecycle.

- 40% Code Reuse: Leveraging pre-built assets across the 45 unique reports.
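The headline figures measure different things, which is worth making explicit. Converting the original estimate to weeks (assuming an average of 52/12 weeks per month) gives the calendar compression:

$$
16\ \text{months} \times \frac{52\ \text{weeks}}{12\ \text{months}} \approx 69.3\ \text{weeks},
\qquad
\frac{69.3\ \text{weeks}}{9\ \text{weeks}} \approx 7.7
$$

That roughly 7.7x schedule compression is distinct from the reported 2x engineering-velocity gain, which describes the speed of the development lifecycle itself; the source does not break down how reuse, templates, and preconfigured governance contributed to the difference.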

This result serves as the “Gold Standard” proof that engineering velocity, not model capability, is the new determinant of AI success. The real advantage did not come from AI models alone, but from the layered enterprise architecture surrounding them.

This is precisely what Bansal means by engineering velocity: compressing the time, effort, and operational drag between AI capability and enterprise outcome.

AnalystANGLE: Our Take

The broader significance of this discussion is difficult to overstate. For the past two years, much of enterprise AI strategy has focused on foundation models, trust, governance, and experimentation. Those remain indispensable. But increasingly, they may represent prerequisites rather than differentiators.

The next frontier of AI in the enterprise appears to be execution maturity.

Organizations that define clear objectives, redesign workflows AI-first, embed human oversight, operationalize reusable infrastructure, and move faster through brownfield complexity may establish durable advantages over those still trapped in fragmented experimentation.

This suggests a larger market evolution: The winners of the enterprise AI era may not simply be those with the best models. They may be those with the best execution architectures. As enterprises push toward real-world ROI, the more decisive question may be execution speed.

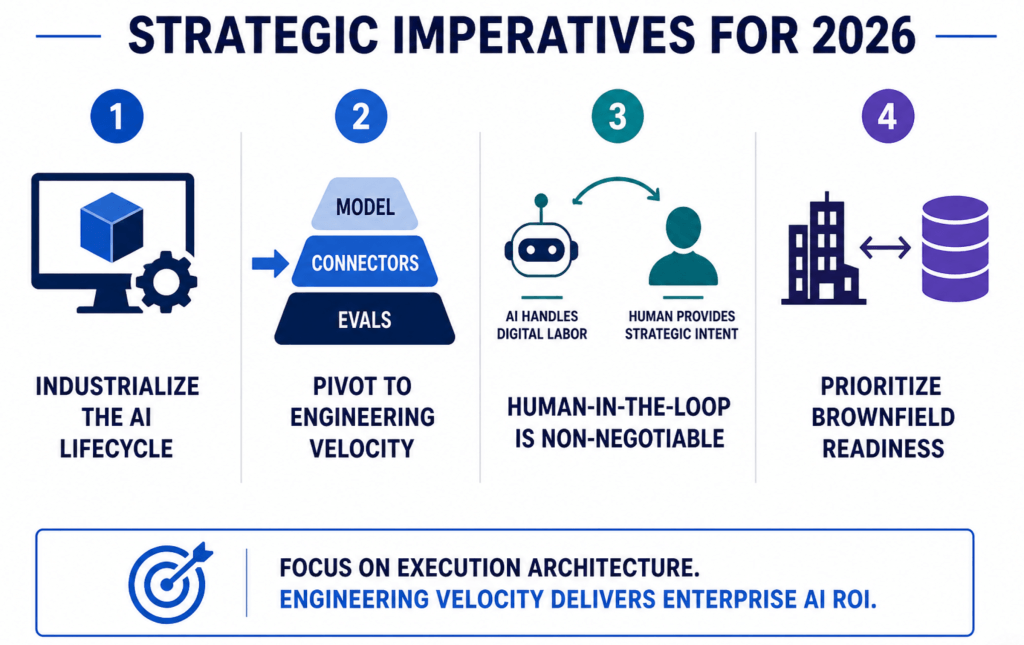

Strategic Recommendations for 2026:

- Industrialize the AI Lifecycle: Invest in an “AI Studio” approach (like EXIQO) to provide reusable assets, connectors, and evaluation frameworks.

- Pivot to Engineering Velocity: Shift procurement focus from the “Gateway” (model) to the “Middle Layer” (connectors and evals).

- Human-in-the-Loop is Non-Negotiable: Velocity does not mean 100% automation. Trust is built when AI handles the “digital labor” while humans provide the “strategic intent.”

- Prioritize Brownfield Readiness: If your AI cannot communicate with your legacy systems on Day 1, your ROI will drop to zero.

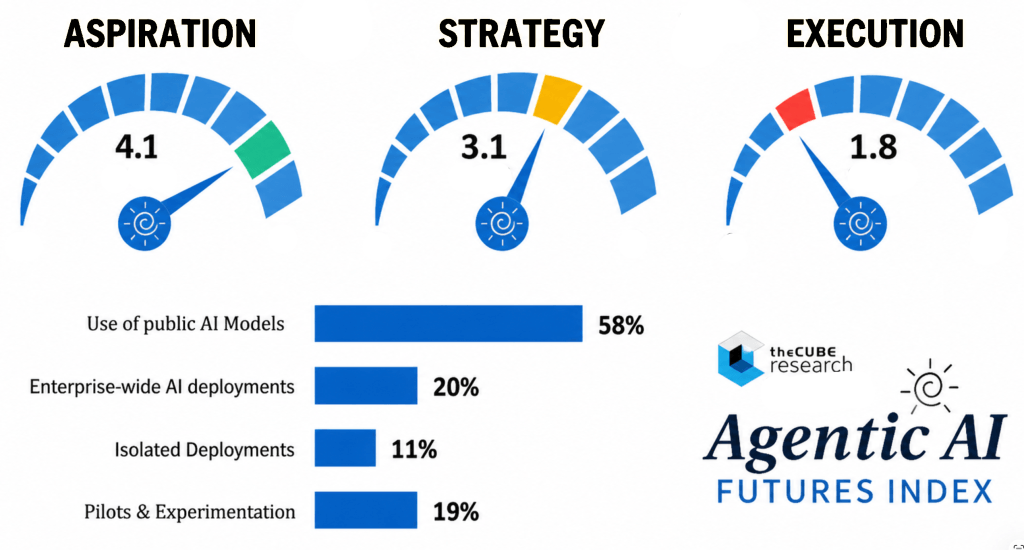

Our Agentic AI Futures Index strongly reinforces these strategic priorities.

The data suggests that while enterprise ambition around AI is high, operational maturity remains uneven. Enterprises scored a robust 4.1 out of 5 on aspiration, reflecting widespread confidence in AI’s transformative potential, and a respectable 3.1 on strategy, indicating that many organizations are actively developing adoption plans. But execution tells a far different story, collapsing to just 1.8 out of 5, exposing a severe operational bottleneck between vision and real-world ROI.

This gap becomes even more revealing when paired with deployment behavior. Nearly 58% of enterprises remain heavily focused on public foundation models such as ChatGPT, Claude, Gemini, and Meta's Llama, while only 20% report enterprise-wide AI deployments. The key takeaway is that the era of AI “exploration” is over.

We have entered the era of AI Industrialization. Organizations that remain obsessed with finding the “perfect model” will remain permanently trapped in Pilot Purgatory. The competitive gap in 2026 is no longer between those who use AI and those who don’t. It is between the 15% of “Scalers” who have mastered Engineering Velocity and the 85% who remain trapped in “Model Obsession.”

As Nitesh Bansal succinctly put it:

“Trust may get AI approved. Governance may keep it controlled. But engineering velocity determines if it delivers ROI.”

Organizations that fail to build this factory architecture today will be left behind as “The Scalers” dominate the next decade of digital labor.

Related Research & Resources:

- Explore the EXIQO AI Studio: https://exiqo.ai

- Dive into the Agentic AI ROI roadmap: Digital Labor Transformation Index Overview

- Continue the Series: Watch our deep dive on the next frontiers of AI
