Contributing Author: David Floyer

Nvidia wants to completely transform enterprise computing by making datacenters run 10X faster at 1/10th the cost. Nvidia’s CEO, Jensen Huang, is crafting a strategy to re-architect today’s on-prem data centers, public clouds and edge computing installations with a vision that leverages the company’s strong position in AI architectures. The keys to this end-to-end strategy include a clarity of vision, massive chip design skills, new Arm-based architectures that integrate memory, processors, I/O and networking; and a compelling software consumption model.

Even if Nvidia is unsuccessful at acquiring Arm, we believe it will still be able to execute on this strategy by actively participating in the Arm ecosystem. However, if its attempts to acquire Arm are successful, we believe it will transform Nvidia from the world’s most valuable chip company into the world’s most valuable supplier of integrated computing architectures.

In this Breaking Analysis we’ll explain why we believe Nvidia is in a strong position to power the world’s computing centers and how it plans to disrupt the grip that x86 architectures have had on the datacenter market for decades. We’ll also share some ETR data that will put AI spending and the competitive dynamics in context.

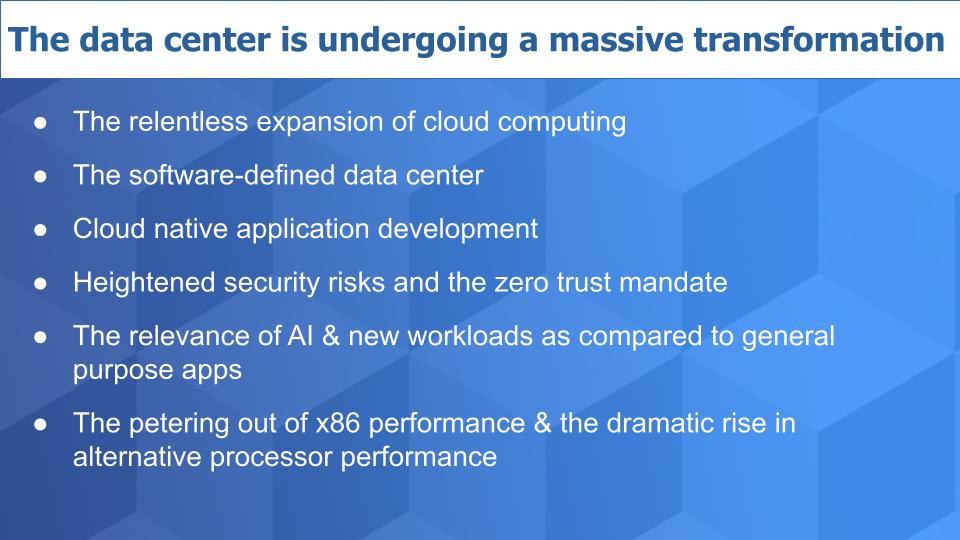

A Datacenter Market in Transition

There are only a handful of mega clouds but there are lots of data centers. Even though the number of data centers worldwide is consolidating, there are still more than 7 million according to IDC. The cloud, like the universe, is expanding at an accelerated pace and millions of data centers are being interconnected via the Internet – the world’s new, (not so) private network. This new cloud is becoming hyper-distributed and run by software.

Open APIs, external applications, sprawling digital supply chains and this expanding cloud increase the threat surface and the vulnerability of the most sensitive information that resides within data centers around the world. Zero trust has gone from buzzword to mandate seemingly overnight.

We’re also seeing AI injected into every application, and it is the technology area we see with the most momentum coming out of the pandemic. We believe the architectures that are powering AI will be the linchpin of Nvidia’s strong entry into the datacenter market.

To wit, we believe this new world will not be solely powered by general purpose x86 processors. Rather, it will be supported by an ecosystem of Arm-based providers that are effecting an unprecedented increase in processor performance, as we’ve been reporting.

Nvidia in our view is sitting in the pole position and is currently the favorite to dominate the next era of computing architecture for global data centers, public clouds as well as the near and far edge.

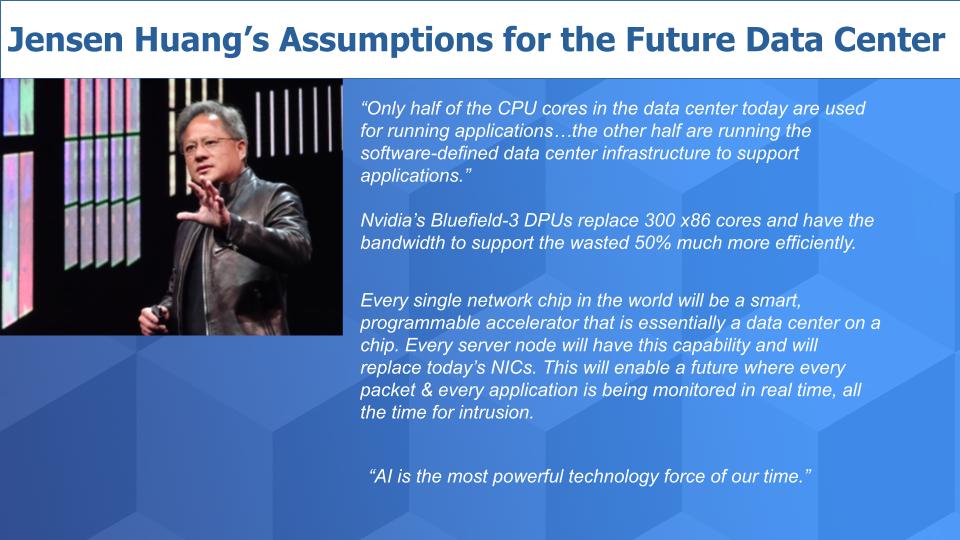

Jensen Huang’s Clarity of Vision

Below is a graphic that underscores some of the fundamental assumptions Nvidia’s CEO is banking on to expand his market. The first is that there’s lots of waste in the data center. He claims that only half the CPU cores deployed in the data center today actually support applications. The other half are processing the infrastructure around the applications that run the software-defined data center. And they’re terribly underutilized.

Nvidia’s Bluefield-3 DPU – data processing unit – was described in a blog post by analyst Zeus Kerravala as a complete mini-server on a card with software defined networking, storage and security acceleration built in. This product has the bandwidth to, according to Nvidia, replace 300 general purpose x86 cores.
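To put the two claims above side by side, here is a back-of-napkin sketch in Python. It takes Jensen’s assertion that roughly half of deployed cores handle infrastructure work and Nvidia’s figure that one Bluefield-3 can replace 300 general purpose x86 cores, and estimates how many DPUs it would take to absorb that infrastructure load. The function name, the fleet size and the assumption that the offload scales linearly are ours for illustration only; they are not Nvidia’s sizing guidance.

```python
import math


def dpus_needed(total_cores: int,
                infra_fraction: float = 0.5,
                cores_per_dpu: int = 300) -> int:
    """Estimate DPUs required to absorb the infrastructure share of a core fleet.

    infra_fraction: share of cores doing infrastructure work (the "half" claim).
    cores_per_dpu:  x86 cores one DPU is claimed to replace (Nvidia's figure).
    Assumes offload scales linearly, which is a simplification.
    """
    infra_cores = total_cores * infra_fraction
    return math.ceil(infra_cores / cores_per_dpu)


# Hypothetical fleet size, chosen only to make the arithmetic easy to follow.
fleet_cores = 60_000
print(f"Cores tied up in infrastructure: {int(fleet_cores * 0.5):,}")
print(f"DPUs needed to offload them:     {dpus_needed(fleet_cores):,}")
```

Under these assumptions, the cores freed by the DPUs become available for applications, which is the essence of the waste argument.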

Jensen believes that every network chip will eventually be intelligent, programmable and capable of this type of acceleration to offload conventional CPUs. He believes that every server node will have this capability and enable every packet and every application to be monitored in real time, all the time, for intrusion. And as servers move to the edge, Bluefield will be included as a core component. He stated a number that there are 25 million servers shipped each year and that’s his target.

That last statement by Jensen is critical in our view. “AI is the most powerful force of our time.” And whether you agree with that or not, it’s relevant because AI is everywhere and Nvidia’s position in AI and the architectures the company is building are the fundamental linchpin of its data center / enterprise strategy.

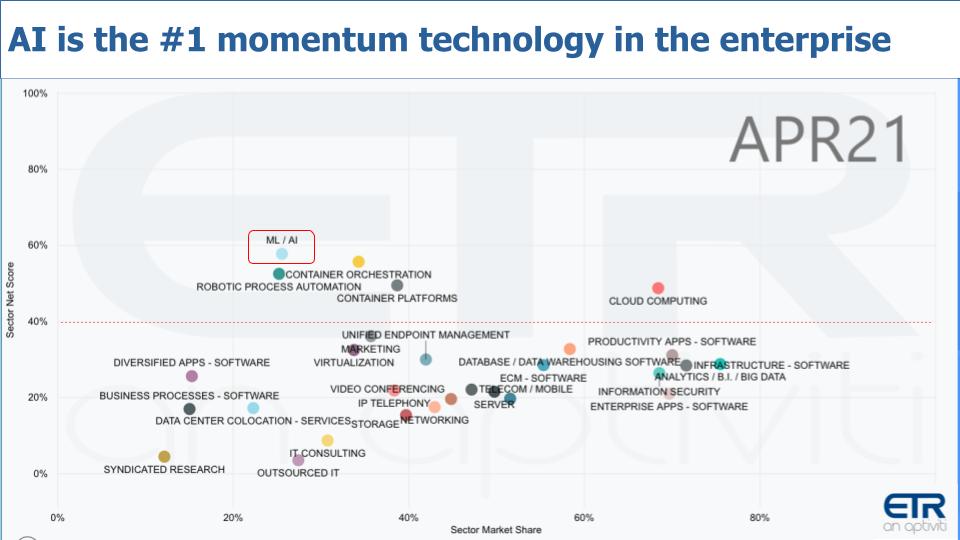

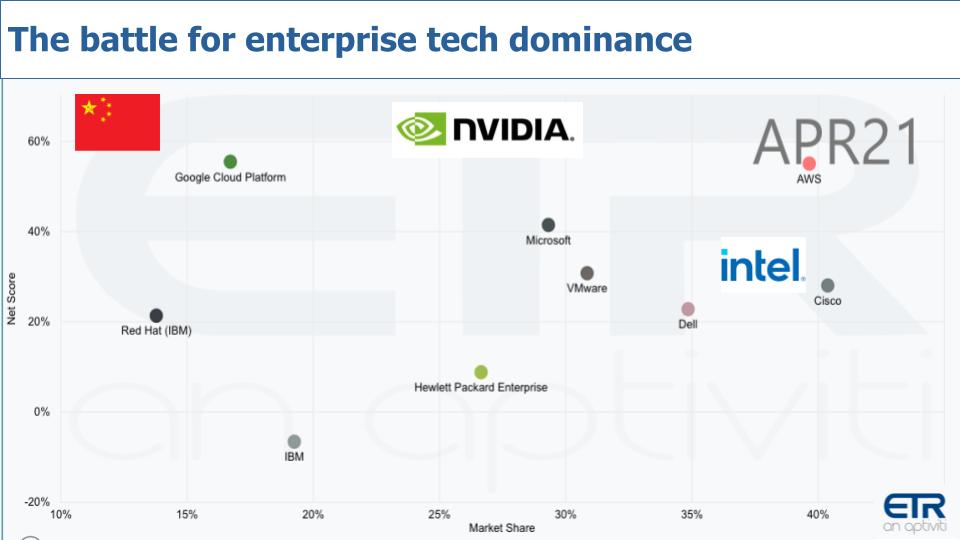

AI Tops the Spending Momentum List

Let’s take a look at the ETR data to see where AI fits on the CIO priority list. Below is a set of data in a view we often like to share. The horizontal axis is Market Share or pervasiveness in the ETR data. But we want to call your attention to the vertical axis, which is Net Score or spending velocity. Exiting the pandemic, we’ve seen AI capture the #1 position in the last two surveys. And we think this dynamic will continue for quite some time as AI becomes a staple of digital transformations and automations. And AI will be infused in every single dot you see on this chart.

The aha is that Nvidia’s architectures are tailor made for AI workloads and virtually every segment in the above graphic will be using Nvidia’s technology.

Workloads are Trending Towards Nvidia’s Wheelhouse

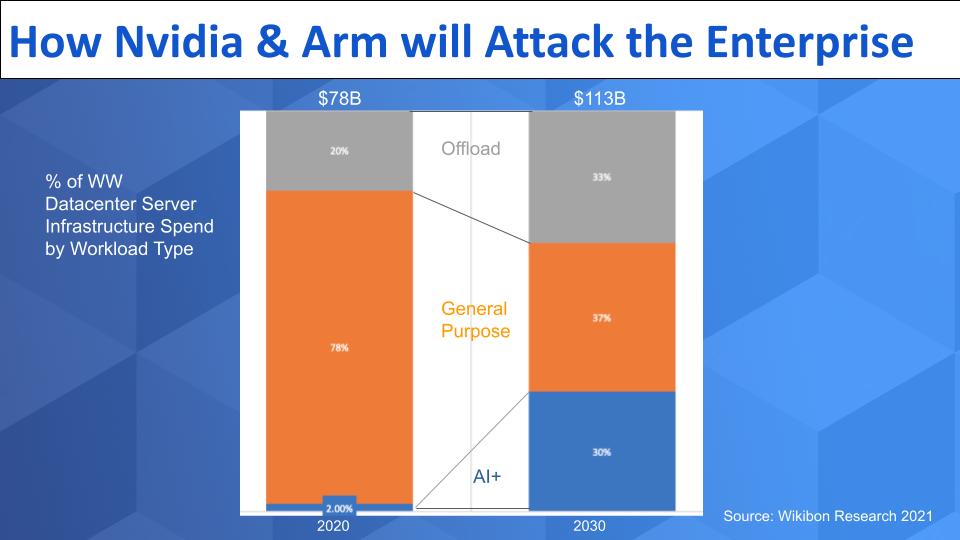

Let’s quantify what this means…and lay out our view of how Nvidia, with the help of Arm, will go after the enterprise market.

In the graphic above we show projections from Wikibon Research that depict the percent of worldwide spending on server infrastructure by workload type. Here are the key points:

- The market last year was around $78B and is expected to approach $115B by the end of the decade – perhaps a conservative figure.

- We’ve split the market into three broad workload categories – the blue is AI and other data-intensive applications that we define here. The orange is general purpose apps like ERP, supply chain, HCM, collaboration – basically think about the applications from Oracle, SAP, Microsoft and hundreds of general purpose applications. The gray is the area that Jensen was talking about as wasted cycles – the offload work for networking and storage and all the software defined management in the data centers around the world.

- Our view is that the general purpose workloads are getting squeezed as investments move toward AI+ workloads and the offload work goes to alternative processors that are embedded into storage and networking solutions. The latter trend reminds us of spinning disk drives. For years, organizations were forced to buy more spindles and underutilize storage just to get better performance. It was wasteful and inefficient and eventually new technologies came along to solve the problem.
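As a rough check on why the $115B figure may be conservative, the growth rate implied by the first bullet can be worked out directly. The sketch below computes the compound annual growth rate from the two market sizes quoted above. The nine-year span is our assumption (reading “last year” as 2020 and “end of the decade” as 2029); the function name is illustrative.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate between two values over a number of years."""
    return (end_value / start_value) ** (1 / years) - 1


MARKET_START_B = 78.0   # server infrastructure market "last year", in $B
MARKET_END_B = 115.0    # projected market at the end of the decade, in $B
YEARS = 9               # assumption: 2020 through 2029

rate = cagr(MARKET_START_B, MARKET_END_B, YEARS)
print(f"Implied annual growth: {rate:.1%}")
```

With these inputs the implied growth works out to a mid-single-digit percentage per year, modest for a market absorbing AI workloads, which is consistent with calling the projection conservative.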

Nvidia, with Arm, in our view is well positioned to attack the offload market and, logically, the AI-based work. But even portions of the orange general purpose segment can go to Arm-based systems. As we’ve reported, for example, companies like AWS and Oracle use Arm-based designs to serve general purpose workloads. Why are they doing that? Cost. Because x86 generally and Intel specifically are not delivering the price/performance and efficiency required to keep up with the demands to reduce data center costs. So these companies are working with ISVs to ensure that general purpose apps run on Arm-based processors with no changes required by the customer.

Thought Exercise – What Would Happen if Intel Didn’t Respond?

If Intel didn’t respond to this obvious dynamic, we think Arm would get 50% of the general purpose workloads by the end of the decade. And with Nvidia, it would dominate the blue AI+ and the gray offload work. Dominate – like capture 90% of the available market.

Now Intel isn’t going to sit back and let that happen. Pat Gelsinger is well aware of this and is moving Intel toward a new strategy that can better manage memory resources and accommodate offload processing and greater programmability by the ecosystem. But Nvidia and Arm are way ahead in the game – as we’ve reported ad nauseam. In addition, Nvidia is increasingly partnering with storage leaders like NetApp, DDN, VAST Data, WekaIO, Pure Storage and others, which we believe will align with its strategy for portions of their portfolio.

Nvidia is no Longer a Gaming Company

Nvidia made its name as a gaming company. Even today, nearly half of its revenue comes from that segment. Ask any gamer what they think of Nvidia and they’ll go on and on about Nvidia’s incredible performance, its amazing drivers, its smoother coloration, cleaner image presentation, superior resource allocation and other features like screen recording capabilities. The only thing they don’t absolutely love is the price – a nice problem to have.

But Nvidia has expanded its TAM by going after enterprise markets. Let’s take a quick look at what Nvidia is doing with parts of its enterprise portfolio that we feel are relevant to this discussion.

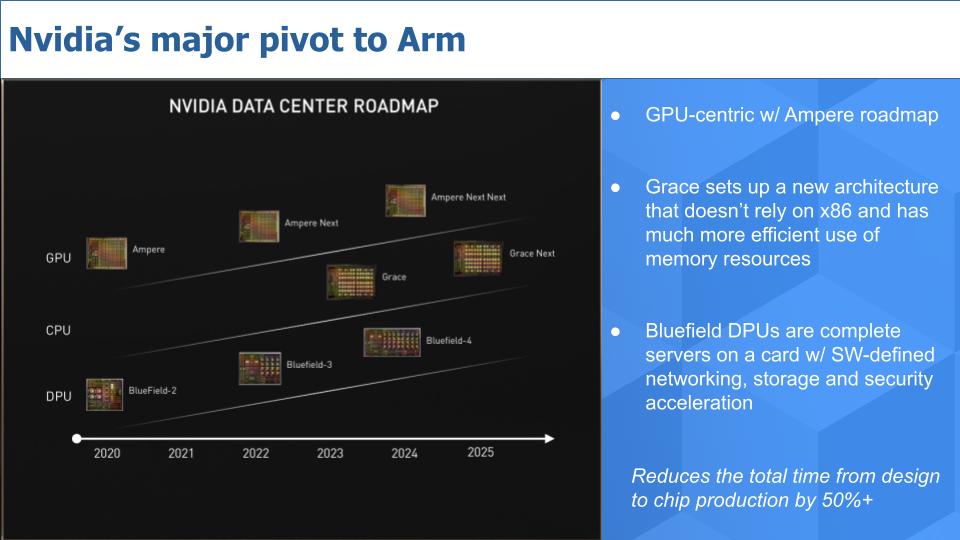

Above is a slide from Nvidia’s investor deck that highlights the company’s 3-chip strategy. Importantly, Nvidia is aggressively moving to Arm-based architectures, which we’ll describe in more detail later. The slide shows on the top line, Nvidia’s Ampere architecture, not to be confused with the company Ampere Computing. Nvidia is taking a GPU-centric approach for obvious reasons, that’s its wheelhouse, but we think over time it may rethink this and diversify more into alternatives like NPUs for cost and flexibility reasons. But we’ll save that for another day.

In the middle line, Nvidia has announced its Grace CPU, in a nod to the famous computer scientist Grace Hopper. Grace is a new architecture that doesn’t rely on x86 and much more efficiently uses memory resources.

And the bottom line shows the roadmap for Nvidia’s Bluefield DPU, which as Zeus Kerravala described, is essentially a complete server on a card.

The last point on the above chart is super important and often overlooked. Moving to Arm will reduce the elapsed time to go from chip design to production by 50%. We’re talking shaving years down to 18 months or less. This will give Nvidia a significant time-to-market advantage in the enterprise.

Doubling Down on AI Workloads & Setting the Edge

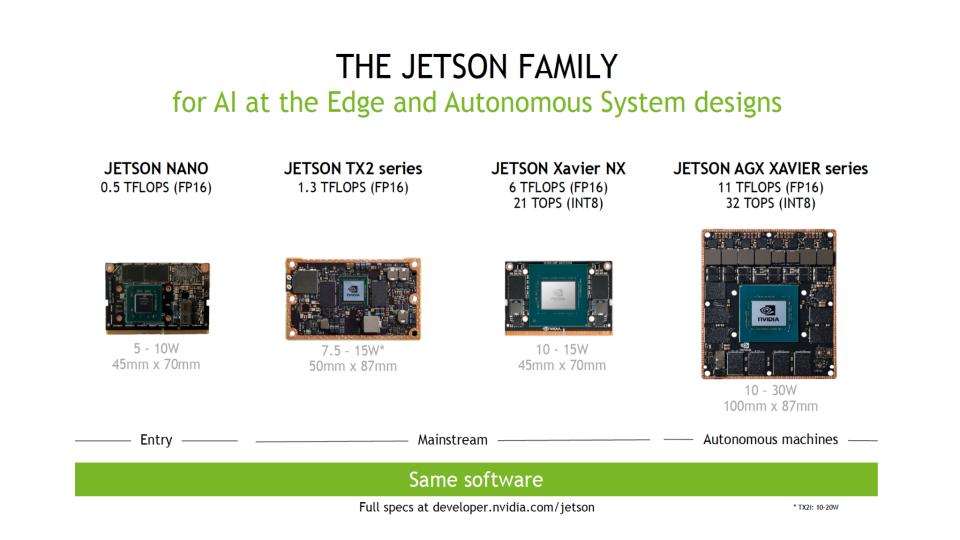

We’re not going to do a deep dive into Nvidia’s enterprise product portfolio. There’s plenty of information on the Web if you’re interested. However, we believe the graphic above highlights some things we think are important as they relate to Nvidia’s end-to-end strategy.

The graphic above shows selected details of Nvidia’s Jetson architecture which is designed to accelerate those AI+ workloads that we showed earlier in the (blue) bar chart. The reason this is important in our view is the same software supports small to very large, including edge systems. We think this type of architecture is well suited for AI inference at the edge as well as core data center applications that use AI. So this is a good example of leveraging an architecture across a wide spectrum of performance and cost that we think will serve Nvidia well.

Specifically as it relates to edge workloads, we believe today’s traditional server vendors are missing the larger opportunity – mainly because it’s currently small and they can’t justify the investment. These players are rightly staying close to their customers and networking with industrial giants to identify ways they can re-orient their existing x86 architectural investments toward what they see as “the edge.” We believe they largely view their edge opportunity as a mini data center or aggregation point for data. They want to provide horizontal infrastructure at scale to take advantage of their operating leverage. And they’re prudently circumspect about going to the “far edge” and getting too deep into specialized applications.

We believe Nvidia and Arm see the bigger picture. When vendors throw out TAM figures that the edge will be trillions of dollars in value, we believe the real opportunity is with real-time AI inferencing deep within the instrumented edge. This will require lots and lots of processing and it won’t look anything like traditional x86 servers. These servers will be space efficient, low power, tightly packaged/embedded, high performance, programmable and super cheap. And that’s where we believe Nvidia and Arm are headed.

Nvidia’s Move to Arm Addresses its Biggest Technical Bottleneck

We want to take a moment to explain why we think the move to Arm-based architectures is so important to Nvidia.

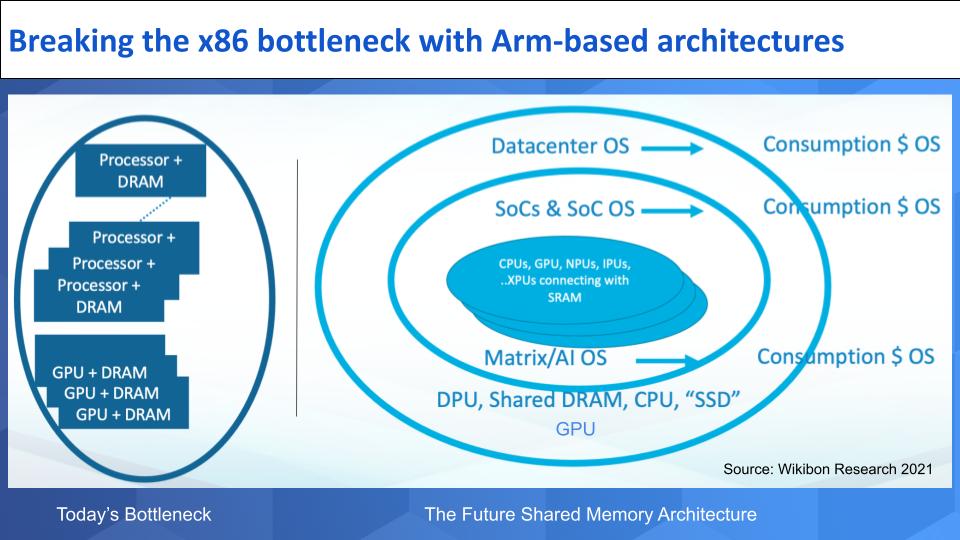

One of the biggest cost challenges for Nvidia today is keeping the GPU utilized. Typical utilization of GPUs is well below 20%. The graphic above is an attempt to explain why.

Imagine that the left hand side of the chart shows racks of traditional compute. It underscores the bottlenecks Nvidia faces. The processor and DRAM are tied together in separate blocks. Imagine there are thousands of cores in a rack. Every time the system needs data that lives in another processor it has to send a request and go retrieve it, which is overhead intensive. Technologies like RoCE are designed to help but they don’t solve the fundamental architectural bottleneck.

Because every GPU, as shown on the left at the bottom, also has its own DRAM, it has to communicate with the processors to get to the desired data – i.e. they can’t communicate with each other efficiently.

The Future Architecture

The right hand side shows where Nvidia is headed. Start in the middle with SoCs, system on chip. CPUs are packed with NPUs, IPUs (image processors)…XPUs (i.e. other alternative processors). These are all connected with SRAM which is a high speed layer – like an L1 cache. The OS for the SoC lives inside and this is where Nvidia has a killer new pricing model.

The company is licensing the consumption of the OS that’s running the system and is effecting a new and really compelling software subscription model that aligns with the way enterprise buyers are increasingly buying software. In theory Nvidia could give away the chips for free and just charge for the software, like the razor blade model.

The outer layer on the right hand side is the DPU and shared DRAM and other resources (e.g. Ampere Computing – the company this time – along with CPUs, SSDs and other resources). These are the processors that will manage the SoCs together.

This design is based on Nvidia’s 3-Chip approach using the Bluefield DPU, leveraging Mellanox (that’s the network). The network enables shared DRAM across the CPUs, which will eventually be all Arm-based. Grace lives inside the SoC and also on the outside layers. And of course the GPU lives inside the SoC in a scaled down version (e.g. a rendering GPU) and we show some GPUs on the outer layer as well for AI workloads – at least in the near term. Eventually we think they may reside solely in the SoC but only time will tell.

So as you can see, Nvidia is making some serious moves by teaming up with Arm and leaning into the Arm ecosystem. This is how it plans to dramatically improve the efficiency of its solutions, reduce its reliance on x86 and support those emerging AI-based workloads we described earlier.

Who Competes for Compute Leadership?

Below is that same XY chart showing Market Share or pervasiveness tracking against Net Score or spending momentum. And we’ve cut the ETR data to capture compute, storage and networking segments for some of the leading players that we feel are vying for compute data center leadership.

AWS is in a very strong position. We believe more than half of its revenue comes from compute so we’re talking about more than $25B on a run rate basis. Huge. The company designs its own silicon and is working with ISVs to run general purpose workloads on Arm-based Graviton chips. Microsoft and Google are big consumers of compute and they sell a lot. Microsoft in particular is likely to continue to work with OEM partners to attack the on-prem data center opportunity but it’s really Intel that is the provider of compute to the likes of HPE, Dell, Cisco and the ODMs which are not shown here.

HPE has historically developed architectures. We hate to bring it up but remember “The Machine?” We realize it’s the butt of many jokes from competitors and HP deserves some heat for all the fanfare and then quietly putting The Machine out to pasture. But HPE has a strong position in HPC, which is AI and data-intensive. And the work it did on new computing architectures and shared memories from its lab experiment might still be kicking around and could come in handy some day in this future we’re describing here. Plus HPE has been known to design its own custom silicon so we would not count them out as an innovator in this race.

Cisco is interesting because it not only has custom silicon designs but its entry into the compute business with UCS a decade ago was notable in that it created a new way to think about integrating data center resources. Cisco invests in architectures and we expect the next generation of UCS will mark another notable milestone in the company’s data center business. As well, the company has serious security chops and has made numerous acquisitions to shore up its position in the data center (e.g. AppD, ThousandEyes, Banzai, Meraki and others).

Dell just had an amazing quarterly earnings report. The company grew top line revenue by around 12% and it wasn’t attributable to an easy compare relative to last year. Dell is simply executing, despite continued softness in the legacy EMC storage business. Laptop demand continues to soar and Dell’s server business is growing again. But we don’t see Dell as an architectural innovator in compute. Rather we think the company will be content to partner with suppliers, whether Intel, Nvidia, Arm-based partners or all of the above. Dell, we predict, will rely on its massive portfolio, excellent supply chain and execution ethos to squeeze out margins by integrating core architectural innovations developed by others. We do expect, however, especially in storage, that the company will tap lower cost alternatives to better service those offload workloads we discussed earlier.

IBM is notable for historical reasons. With its mainframe, IBM created the first great compute monopoly before unwittingly handing it to Intel along with Microsoft. We don’t see IBM aspiring to retake the compute platform mantle it once held with mainframes. Rather Red Hat and the march to hybrid cloud is the path in our view.

The Elephants in the Room – Intel, Nvidia & China, Inc.

Now let’s get down to the big dogs. Intel, Nvidia and China, Inc. China is relevant because of companies like Alibaba, Huawei and the Chinese government’s desire to be self-sufficient in semiconductor technology.

But our premise here is that the trends are favoring Nvidia over Intel in the above picture, hence the relative positioning we chose for the logos. Nvidia is making moves to further position itself for the new workloads in the data center and to compete for Intel’s stronghold. Intel will attempt to remake itself, but it should have initiated the moves Pat Gelsinger is making today five to seven years ago. Intel can’t change that and is simply far behind. It will take the company years to catch up.

Nvidia by the Numbers

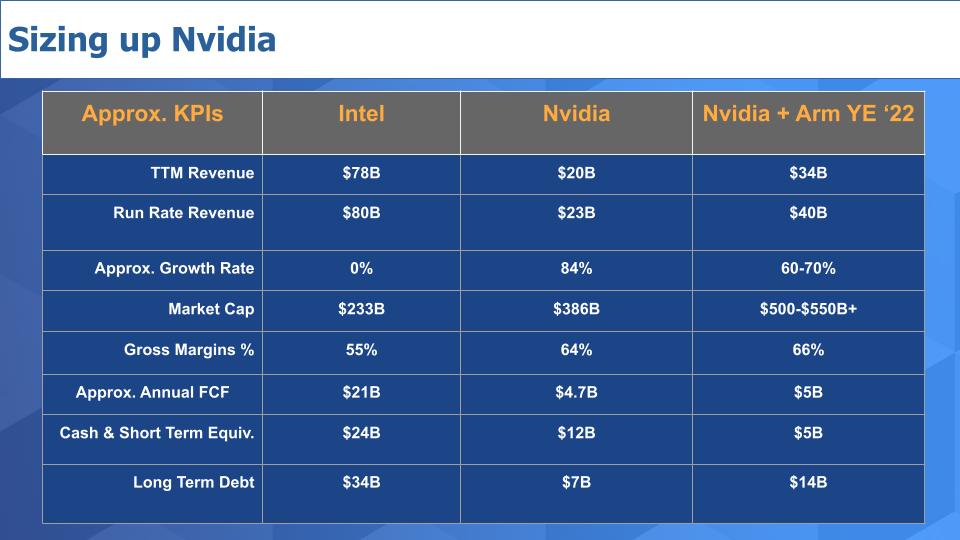

Let’s stay on the Nvidia v Intel comparison for a moment and take a snapshot of the companies’ financials.

Above is a quick and dirty chart we put together with some straightforward KPIs. Some of these figures are approximate or rounded so don’t stress over it too much. But you can see Intel is an ~$80B company – 4X the size of Nvidia. Yet Nvidia’s market value far exceeds that of Intel. Why? Because of the growth line. And in our view it’s justified due to Nvidia’s stronger strategic positioning.

Intel used to be the gross margin king but Nvidia has much higher margins. When it comes to free cash flow, Intel still is dominant. As it pertains to the balance sheet, Intel, especially with its new foundry strategy, is a way more capital intensive business than Nvidia. As Intel starts building out more manufacturing capacity for its foundries it will pressure the company’s cash position.

In the third column we put together a back of napkin pro forma for Nvidia + Arm circa end of 2022. We think they could get to a run rate that is about half the size of Intel’s revenue. And that could propel the company’s market cap to well over ½ a trillion if they get any credit for Arm. The risk is that because the Arm deal is based on cash plus tons of stock it could put pressure on the market capitalization for some time.

Arm has 90% gross margins because it pretty much has a pure license model so it helps gross margin a bit – but Arm is relatively small at around $2B in revenue so it doesn’t move the needle that much. The balance sheet data is a swag. Arm has said it’s not going to take on debt to do the transaction but we haven’t had time to figure out how they do that without taking on some debt so we took a guess as to how we’d approach it with today’s ultra low costs of capital.
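The arithmetic behind these rounded figures can be laid out in a few lines of Python. The sketch below uses only the approximate numbers quoted above (Intel at ~$80B, Nvidia at one quarter of that, Arm at ~$2B with 90% gross margins) to show how far the combined company would need to grow to reach half of Intel’s revenue, and why Arm’s margins help only “a bit.” The Nvidia gross margin of 65% is a placeholder we picked for illustration, not a figure from the chart, and holding Intel’s revenue flat is likewise a simplifying assumption.

```python
INTEL_REVENUE_B = 80.0                    # "~$80B company"
NVIDIA_REVENUE_B = INTEL_REVENUE_B / 4    # Intel is "4X the size of Nvidia"
ARM_REVENUE_B = 2.0                       # "around $2B in revenue"
ARM_GROSS_MARGIN = 0.90                   # "90% gross margins"
NVIDIA_GROSS_MARGIN = 0.65                # placeholder assumption, not from the source


def blended_gross_margin(rev_a: float, margin_a: float,
                         rev_b: float, margin_b: float) -> float:
    """Revenue-weighted gross margin of two businesses combined."""
    return (rev_a * margin_a + rev_b * margin_b) / (rev_a + rev_b)


combined_b = NVIDIA_REVENUE_B + ARM_REVENUE_B
target_b = INTEL_REVENUE_B / 2            # "about half the size of Intel's revenue"
required_growth = target_b / combined_b - 1

blended = blended_gross_margin(NVIDIA_REVENUE_B, NVIDIA_GROSS_MARGIN,
                               ARM_REVENUE_B, ARM_GROSS_MARGIN)

print(f"Combined revenue today: ${combined_b:.0f}B")
print(f"Growth needed to reach ${target_b:.0f}B: {required_growth:.0%}")
print(f"Blended gross margin: {blended:.1%} vs {NVIDIA_GROSS_MARGIN:.0%} standalone")
```

Because Arm is roughly a tenth the size of Nvidia by revenue, even a 90% margin lifts the blended figure by only a couple of points under these inputs.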

The point is given the momentum/growth of Nvidia, its strategic position in AI, its deep engineering aimed at all the right places and potential to unlock huge value with Arm…on paper it looks like the horse to beat if it can execute.

Summarizing Nvidia’s Attack on the Enterprise

The architectures on which Nvidia is building its dominant AI business are evolving. The workload mix and future demand are heading right at these new architectures in our view. Nvidia is well positioned to drive a truck right through the enterprise in our opinion.

The power has shifted from Intel/x86 to the Arm ecosystem and Nvidia is leaning in, whereas Intel has to preserve its current business while recreating itself. This will take time. But Intel potentially has the powerful backing of the U.S. government.

The wildcard is whether Nvidia will be successful in acquiring Arm. Certain factions in the UK and EU are fighting the deal because they don’t want the U.S. dictating to whom Arm can sell technology – e.g. the restrictions placed on Huawei for many suppliers of Arm-based chips. In addition, Nvidia’s competitors like Broadcom, Qualcomm et al. are nervous that if Nvidia gets Arm they’ll be at a competitive disadvantage. And for sure China doesn’t want Nvidia controlling Arm for obvious reasons and it will do what it can to block the deal and/or put handcuffs on how business can be done in China.

We can see a scenario where the U.S. government pressures UK/EU regulators to let the deal go through in exchange for commitments to help fund plants in Europe. AI and semiconductors – you can’t get more strategic than that and we think the U.S. military has every reason to support this deal. In exchange for facilitating the deal, the government pressures Nvidia to feed Intel’s foundry business (along with our previous scenario with Apple). At the same time, governments could impose conditions that secure access to Arm-based technology for Nvidia’s competitors.

We don’t have any inside information as to what’s happening behind the scenes but on its earnings call, Nvidia said they’re working with regulators and are on track to complete the deal in early 2022.

Lots is at stake and there are multiple constituents to serve in this chess game. Strategic national considerations are clashing with calls to break up or limit big tech. Meanwhile, China acts with clarity and certainty. The door is open for Nvidia to grab a big prize in data center markets and, even without owning Arm, we think the company is better positioned than any other firm to serve the future needs of enterprise tech.

Do you have thoughts, data or insights on this and other topics that we cover?

Ways to Keep in Touch

Remember these episodes are all available as podcasts wherever you listen.

Email david.vellante@siliconangle.com | DM @dvellante on Twitter | Comment on our LinkedIn posts.

Also, check out this ETR Tutorial we created, which explains the spending methodology in more detail.