Enterprise buyers are now relying on AI assistants to assess which vendors to shortlist in the autonomous AI database market. As a result, the market is undergoing a structural shift in how vendor solutions are discovered, evaluated, and shortlisted. Buyers no longer rely solely on traditional research methods such as browsing websites, reading analyst reports, and viewing product pages. Instead, they increasingly turn to AI assistants such as ChatGPT, Gemini, Grok, and Claude, which offer market summaries, comparisons, and recommendations before buyers ever visit a vendor’s site.

In this way, AI-mediated buyer journeys are not just changing traffic flows; they are reshaping how market leadership is identified, reinforced, and maintained.

The race is now on because, unlike an advantage in traditional search engine optimization (SEO), an advantage in AI-engine optimization (AEO) can compound over time. When AI engines repeatedly associate certain vendors with a category, highlight them early, and reinforce these connections through repeated retrieval and answer generation, lagging vendors find it harder to catch up.

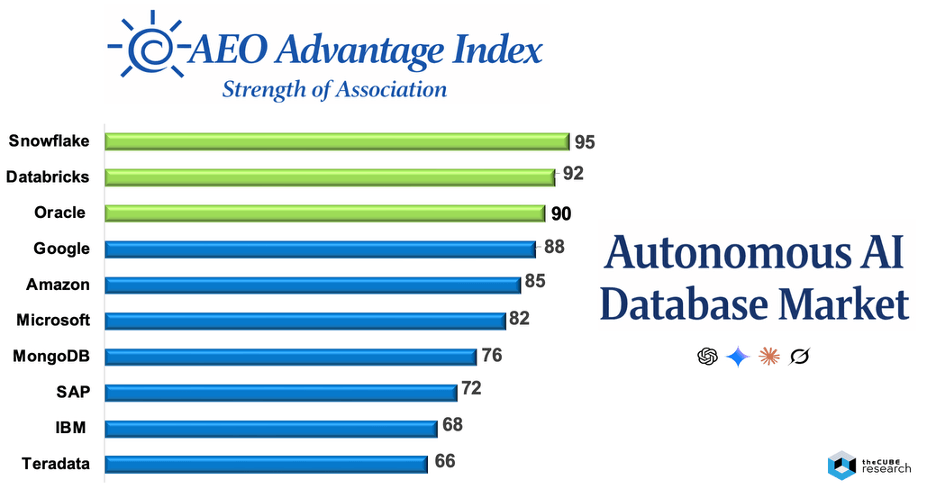

Against that backdrop, we assessed the Autonomous AI Database market through the eyes of AI engines using AEO Advantage Index strength-of-association analysis. The top-line finding is that AI models have already formed a relatively clear mental map of the category. Snowflake, Databricks, Oracle, Google, Amazon, and Microsoft emerge as the vendors most strongly associated with the market, followed by MongoDB, SAP, IBM, and Teradata as credible secondary contenders.

Why AI-Engine Optimization Matters

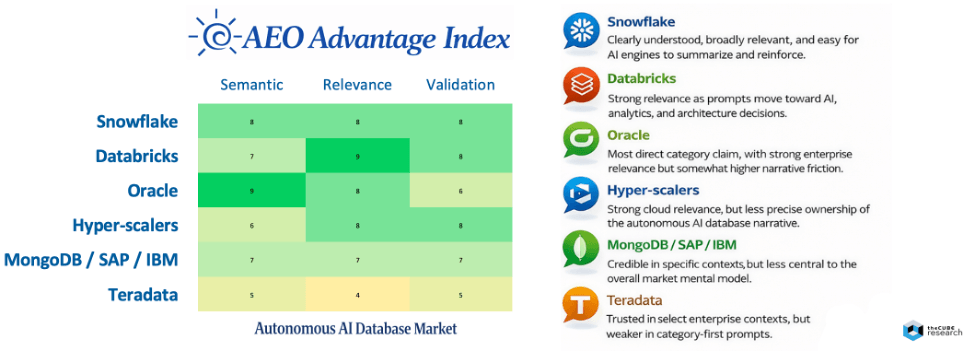

This view should be understood as a map of AI category memory. It reflects which vendors the models most strongly associate with the autonomous AI database market, based on repeated semantic, relevance, and validation signals. It also captures the language of real buyer inquiry and how AI engines interpret and operationalize the visible content corpus in market-facing answers.

Importantly, strength-of-association is not a market share ranking, nor is it a direct measure of visibility, citation, or recommendation rates. Instead, it shows which vendors AI engines have learned to associate most strongly with the category, based on accumulated evidence, buyer-intent prompts, third-party comparisons, and the broader market context.

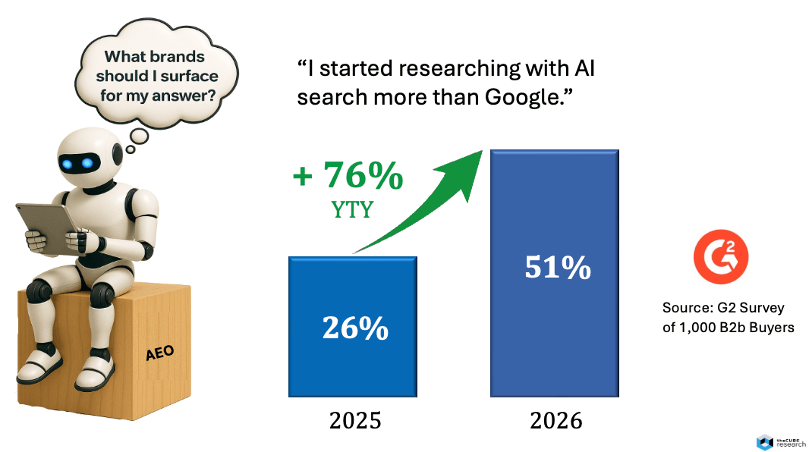

This learned association is important because it affects how the category is defined, which vendors shape the narrative, and what evaluation criteria buyers see first. According to a recent survey of 1,000 B2B buyers by G2, recently highlighted by Tim Sanders, a majority of buyers now start with AI search over Google, up 76% since the same time last year.

This view is further supported by our findings here at theCUBE Research, which indicate that 56% engage in AI-mediated buyer journeys, driving ~120,000 daily answers per product category – such as the autonomous AI database marketplace.

While the strength-of-association scores do not measure market share, product quality, revenue, or execution, they do measure something that is increasingly consequential: the degree to which AI engines have learned to associate a vendor with the autonomous AI database category through accumulated evidence, buyer-intent prompts, category language, third-party comparisons, and ongoing interactions across the open web.

Strength-of-Association

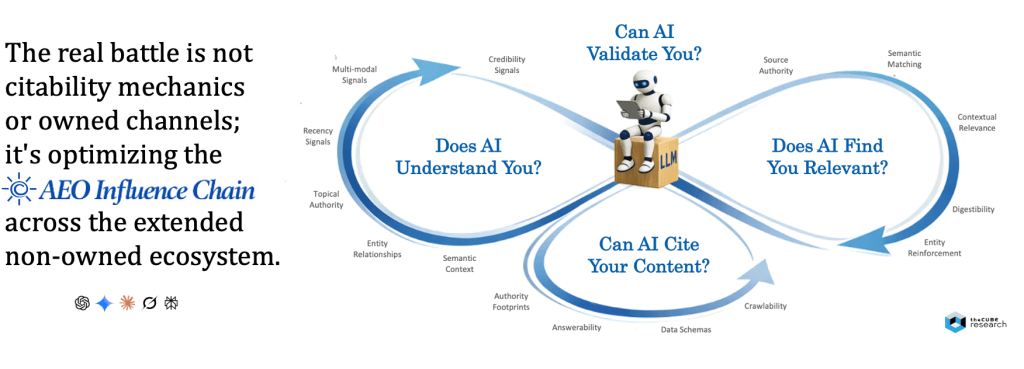

The strength-of-association ranking becomes more useful when viewed through the four dimensions of the AEO influence chain, whose 19 signals shape how AI engines form category memory and buyer guidance: semantic clarity, contextual relevance, citability mechanics, and validation strength. Taken together, these dimensions help explain not only which vendors are most strongly associated with the Autonomous AI Database market, but also why they appear where they do.

At the top of the market, Snowflake, Databricks, and Oracle stand out as the three vendors most likely to anchor the category in the eyes of AI engines, although they appear to arrive there through somewhat different pathways.

- Snowflake (95) leads because AI engines can readily understand who it is, what it offers, and why it matters. Its story is comparatively easy to compress into a reusable narrative around AI data cloud, governed data, and enterprise AI readiness. It also appears to perform well on contextual relevance, as it continues to show up as buyer questions move from broad market discovery to platform comparison, analytics modernization, and AI data strategy. Snowflake also benefits from strong third-party comparison density and external market discussion, which strengthen citability and validation. A potential limitation is that its strength may be driven more by the broad AI data platform narrative than by precise ownership of the autonomous database concept itself.

- Databricks (92) follows closely because it has become highly relevant to the questions buyers ask as they move deeper into AI-driven data architecture decisions. It maps naturally to prompts around data lakehouse strategy, AI workloads, machine learning, open architectures, and data intelligence, which gives it broad contextual relevance across the buyer journey. AI engines also appear to understand clearly why Databricks matters, particularly in modern AI-native and analytics-heavy environments. Its validation strength is reinforced by extensive ecosystem discussion and technical credibility. Its relative weakness is that, like Snowflake, its market strength may sometimes stem from adjacent-category ownership rather than from the most direct claim to the autonomous AI database label.

- Oracle (89) remains a top-tier contender because it may have the most direct semantic connection to the category itself. AI engines can readily understand who Oracle is, what it offers, and why it matters in the autonomous database market because Oracle has long defined that narrative explicitly through its positioning as a self-driving, self-securing, and self-repairing database. Oracle also has strong relevance as buyer questions move into mission-critical workloads, regulated industries, multi-cloud deployment, in-database AI, and enterprise-grade operational resilience. Its challenge appears less about legitimacy and more about friction in narrative and citation. Oracle’s proof points and portfolio breadth are more distributed and PDF-dependent, which may make the story somewhat harder for AI engines to compress, cite, and reuse than the stories of competitors that package a narrower, cleaner market narrative.

The next tier, comprising the hyperscalers Google BigQuery, Amazon Redshift, and Microsoft, appears to benefit from ecosystem scale, cloud credibility, and repeated presence in warehouse and data-platform comparisons. AI engines clearly understand who these vendors are and why they matter in the broader data and analytics landscape, which supports semantic clarity. They also tend to stay relevant as prompts evolve from discovery to cloud standardization, analytics modernization, and enterprise architecture decisions. Their strength is their ongoing contextual presence throughout many buyer journeys. Their limitation is that they are less likely to own the exact autonomous AI database narrative as distinctly as the top three vendors.

Below them, MongoDB, SAP, and IBM appear as credible but more situational contenders. MongoDB likely benefits from strong relevance in developer, document, JSON, and vector-search-related contexts. SAP and IBM retain credibility in enterprise, regulated, and transformation-heavy environments where trust and installed base matter. In each case, AI engines can understand the vendor and its role, but the category connection may be narrower, more contextual, or more workload-specific. These vendors are relevant in parts of the decision journey, but they appear less likely to define the category as a whole.

At the lower end of the current ranking, vendors such as Teradata remain credible in large-scale analytics and complex enterprise environments, but appear less likely to anchor the category in current AI-engine memory. They may still surface when buyer questions move toward specialized performance, analytics at scale, or legacy modernization, but they do not appear to shape the market’s primary mental model in the same way as the leaders.

Viewed this way, association is not simply a reflection of vendor awareness or scale. It reflects which vendors AI engines understand most clearly and continue to see as relevant as buyer questions move deeper into the decision journey. That distinction matters because the strength of association is a critical first step in building an AEO advantage. Before a brand can be cited, recommended, or selected, it must first be understood and linked to the category in the AI engine’s market model. If that association is weak or inconsistent, the vendor is less likely to appear early in category-first prompts or remain present as evaluation deepens.

It also explains why real buyer-intent dynamics matter so much. AI engines form category views from the questions buyers actually ask, the paths those questions take from discovery to selection, and the content available to support those journeys. The strongest vendors are those whose narrative and evidence align with how buyers naturally explore the market. That is what turns market presence into durable strength of association, and ultimately into a more defensible AEO advantage.

Buyer Intent Frames

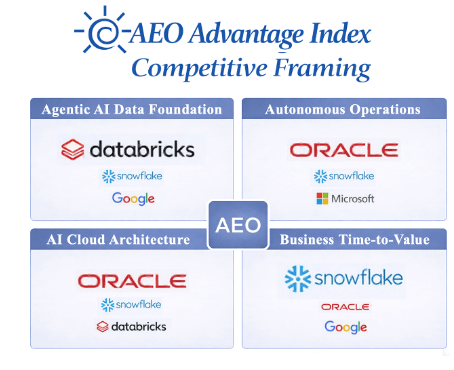

One of the most useful ways to interpret this market is through the primary buyer-intent frames that shape category-first AI prompts. These frames matter because AI-mediated buyer journeys do not begin from a neutral market map. They begin from specific questions about operational burden, AI readiness, architecture, and business value. The vendors that surface most strongly within those frames gain an early advantage in shaping market perception, shortlist formation, and evaluation criteria. In the Autonomous AI Database market, four competitive frames stand out most clearly:

- Agentic AI Data Foundation. When buyers approach the market with the goal of building an AI-ready data foundation for analytics and agents, Databricks stands out most prominently, followed by Snowflake and Google. Databricks leads because its lakehouse, machine learning, and agentic workflow narrative directly connect to prompts about AI-native data architecture. Snowflake is a close second, promoting a strong AI data cloud story focused on governed data access, analytics, and enterprise AI readiness. Google also performs well because BigQuery and its broader AI stack naturally align with cloud-scale analytics and AI integration.

- Autonomous Operations. In prompts centered on reducing operational burden through self-managing infrastructure, Oracle is most strongly associated, followed by Snowflake and Microsoft. Oracle owns the clearest autonomous database narrative through long-standing positioning around self-driving, self-securing, and self-repairing operations. Snowflake also aligns well because of its managed-cloud simplicity and low-friction operations story. Microsoft appears as a credible third option because of its enterprise governance, standardization, and operational reliability across data and cloud environments.

- AI Cloud Architecture. For buyers focused on multi-cloud architecture, scale, sovereignty, and deployment flexibility, Oracle again leads, followed by Snowflake and Databricks. Oracle’s advantage comes from its strong positioning around mission-critical scale, multi-cloud deployment, and regulated-enterprise requirements. Snowflake remains highly relevant because of its cross-cloud, cloud-native data platform narrative. Databricks also ranks highly due to its open architecture, lakehouse flexibility, and modern scale-out design.

- Business Time-to-Value. When the buyer journey begins with productivity, accessibility, and speed to insight, Snowflake leads, followed by Oracle and Google. Snowflake benefits from the cleanest and most accessible narrative around enterprise data, self-service analytics, and rapid time to value. Oracle is highly relevant through Select AI, natural-language access, and integration into enterprise workflows. Google also performs well because it is strongly associated with query simplicity, accessibility of analytics, and AI-assisted data interaction.

Together, these four frames show that the competitive market is not defined by a single narrative. It is shaped by the buyer’s entry point, with Oracle, Snowflake, and Databricks leading from different angles.

Vendor Implications

What stands out in this market view is that the category already has a relatively clear AI-native mental map. Snowflake and Databricks sit at the top of the association range, followed by Oracle, Google, Amazon, and Microsoft, with MongoDB, SAP, IBM, and Teradata forming a credible second tier.

This suggests that AI engines have already formed a recognizable shortlist for category-first prompts around autonomous AI data platforms, AI-ready databases, lakehouse and warehouse modernization, and adjacent concepts such as vector search, multi-cloud data, in-database AI, and agentic analytics.

In other words, the models have already “learned” the category. The strategic issue is now which vendors, solutions, products, and experts enter the consideration list and get elevated into the default set for category-first answers (when the brand is not explicitly referenced).

Four implications matter most for vendors competing in the Autonomous AI Database market.

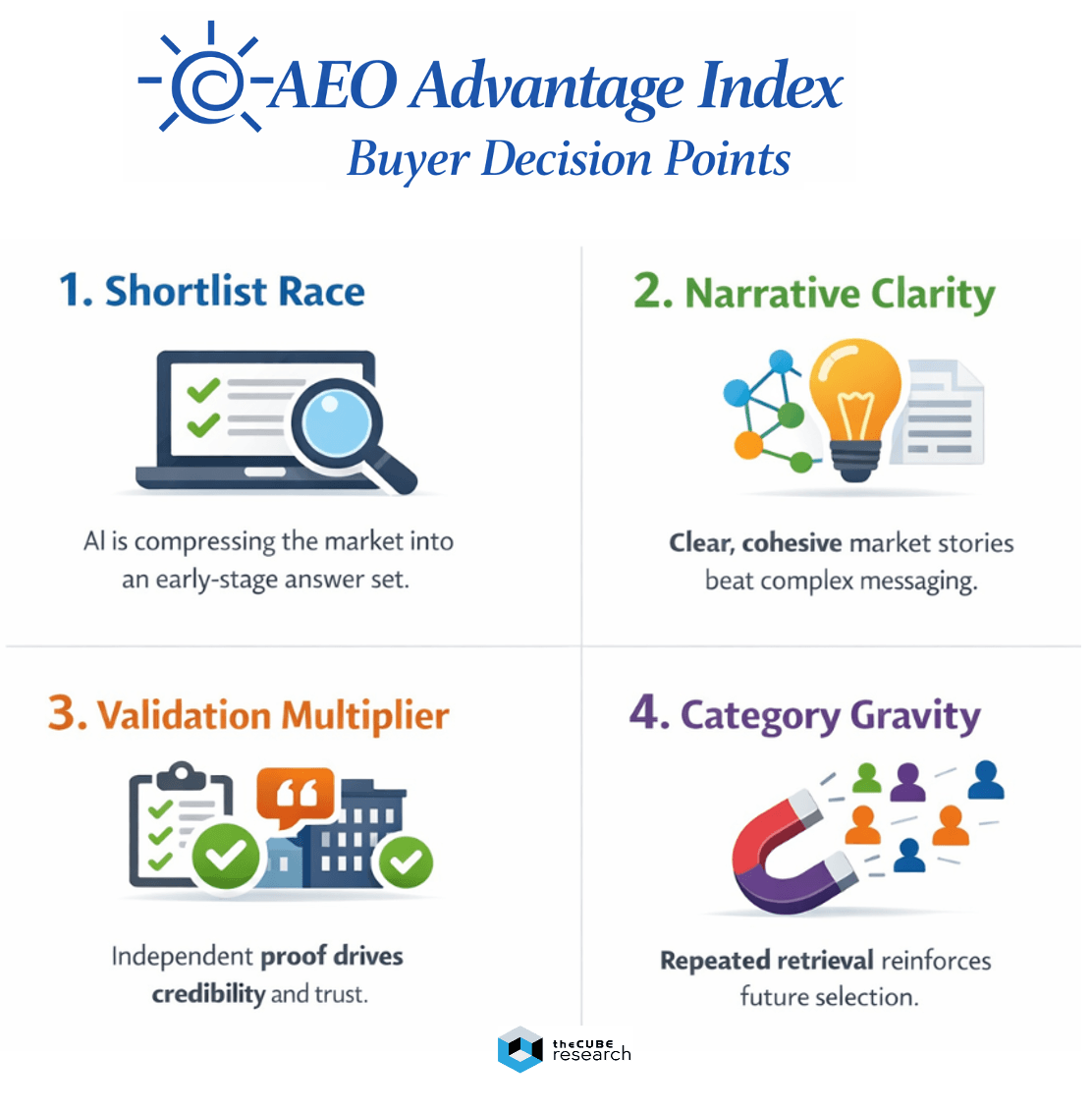

- AI engines are compressing the market into a shortlist race. Strong category association increases the likelihood that a vendor appears in the answer set before detailed evaluation begins. That matters because AI-mediated discovery is increasingly functioning as an early-stage shortlist generator. The answer itself is becoming the new front door to consideration.

- Narrative clarity now matters nearly as much as depth of capability. AI engines reward vendors that can be summarized into a coherent, low-friction market story. Vendors whose positioning, terminology, proof points, and supporting content hang together across the corpus are easier for models to interpret, retrieve, and rank with confidence. In this market, narrative anchors such as autonomous database, AI data cloud, lakehouse, native cloud warehouse, and integrated enterprise stack can create an advantage by reducing ambiguity and token burden for the model. Strength of association should therefore be read not simply as evidence of awareness, but as evidence of narrative learnability.

- Validation is becoming the decisive multiplier. It is not enough for a vendor to describe itself clearly on owned channels. AI engines place disproportionate weight on external signals that reinforce credibility, authority, authenticity, and trust. In practice, that makes the third-party ecosystem strategically central: analyst coverage, customer proof, earned media, partner ecosystems, independent reviews, community references, competitive comparisons, and other non-owned sources. These signals help AI engines determine not just what a vendor claims, but whether those claims appear established, corroborated, and decision-useful. In AI-mediated buying, validation is what converts a self-description into a trusted market position.

- Category gravity compounds over time. Once a vendor becomes a repeated answer for category-first prompts, that repeated retrieval can reinforce future selection. The market starts to operate as a feedback loop. Vendors that surface most often become the vendors buyers ask about more often. That, in turn, drives more comparisons, more discussion density, and more semantic reinforcement around their position in the category. Over time, this can widen the gap between vendors that become AI-native defaults and those still trying to enter the answer set.
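
The compounding dynamic in the last point can be illustrated with a toy model. The sketch below is purely illustrative and is not part of the AEO Advantage Index methodology: the vendor names, starting shares, and the `reinforcement` exponent are all hypothetical assumptions. It assumes that each round of retrieval slightly favors vendors that already surface often, then renormalizes the shares.

```python
def simulate_answer_share(initial, reinforcement=1.2, rounds=10):
    """Toy feedback loop (hypothetical model, not a measured one).

    Each round, every vendor's share of category-first answers is raised
    to a power greater than 1 and then renormalized, so vendors that
    already surface often are favored a little more in the next round.
    """
    shares = dict(initial)
    history = [dict(shares)]
    for _ in range(rounds):
        boosted = {vendor: share ** reinforcement for vendor, share in shares.items()}
        total = sum(boosted.values())
        shares = {vendor: value / total for vendor, value in boosted.items()}
        history.append(dict(shares))
    return history


# Hypothetical starting shares for three unnamed vendors.
start = {"Vendor A": 0.40, "Vendor B": 0.35, "Vendor C": 0.25}
history = simulate_answer_share(start)

gap_start = history[0]["Vendor A"] - history[0]["Vendor C"]
gap_end = history[-1]["Vendor A"] - history[-1]["Vendor C"]
print(f"Leader-to-laggard gap at start: {gap_start:.2f}")
print(f"Leader-to-laggard gap after 10 rounds: {gap_end:.2f}")
```

Under these assumptions, even a modest reinforcement exponent widens the gap between the leading and trailing vendor round after round. With the exponent set to 1.0, the shares stay where they started, which is the no-feedback baseline.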

The broader strategic takeaway is that AEO execution now has to operate on two fronts. Vendors need strong AEO mechanics and hygiene on owned channels, but that is only the foundation. Websites must now be designed not only for human consumption but also for AI engines to crawl, parse, and retrieve content effectively. This requires clearer structure, stronger entity and category signals, improved crawl accessibility, more answer-ready content, and explicit support for search-oriented AI crawlers where appropriate.
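
One practical starting point for crawl accessibility is to check whether a site's robots.txt actually admits the AI crawlers it intends to support. The following is a minimal sketch using Python's standard `urllib.robotparser`. The user-agent tokens listed are assumptions based on commonly published crawler names and should be confirmed against each vendor's crawler documentation; the sample robots.txt and the example.com URL are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Assumed user-agent tokens; confirm against each vendor's crawler documentation.
AI_CRAWLERS = ["GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot"]


def ai_crawler_access(robots_txt: str, url: str) -> dict:
    """Return {user_agent: allowed} for each AI crawler, given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_CRAWLERS}


# Hypothetical robots.txt: blocks one crawler outright, explicitly allows
# another, and applies a default rule to every other agent.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: *
Disallow: /private/
"""

report = ai_crawler_access(SAMPLE_ROBOTS, "https://example.com/products/overview")
for agent, allowed in report.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

A check like this only confirms what the robots.txt file declares. It does not show whether a given crawler honors the file, or whether the page content is structured well enough to be parsed and cited once fetched.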

However, owned-channel optimization alone will not establish a durable strength of association. The bigger competitive challenge is to develop a broad, coherent, and externally validated market presence across the third-party ecosystem that AI engines use to evaluate credibility and trust. AI crawler activity is also increasing rapidly: Cloudflare reported that AI crawlers accounted for 20% of verified bot traffic in 2025. The message is clear: you need to optimize well beyond your owned channels, addressing the entire chain of signals that AI engines rely on to derive answers.

That is the real market shift. In the SEO era, owned-channel optimization helped brands compete for clicks. In the AEO era, owned-channel hygiene is table stakes. Durable strength of association is built when clear, owned content, strong third-party validation, and AI-readable proof reinforce one another across the full ecosystem of AI engines that learn the category.

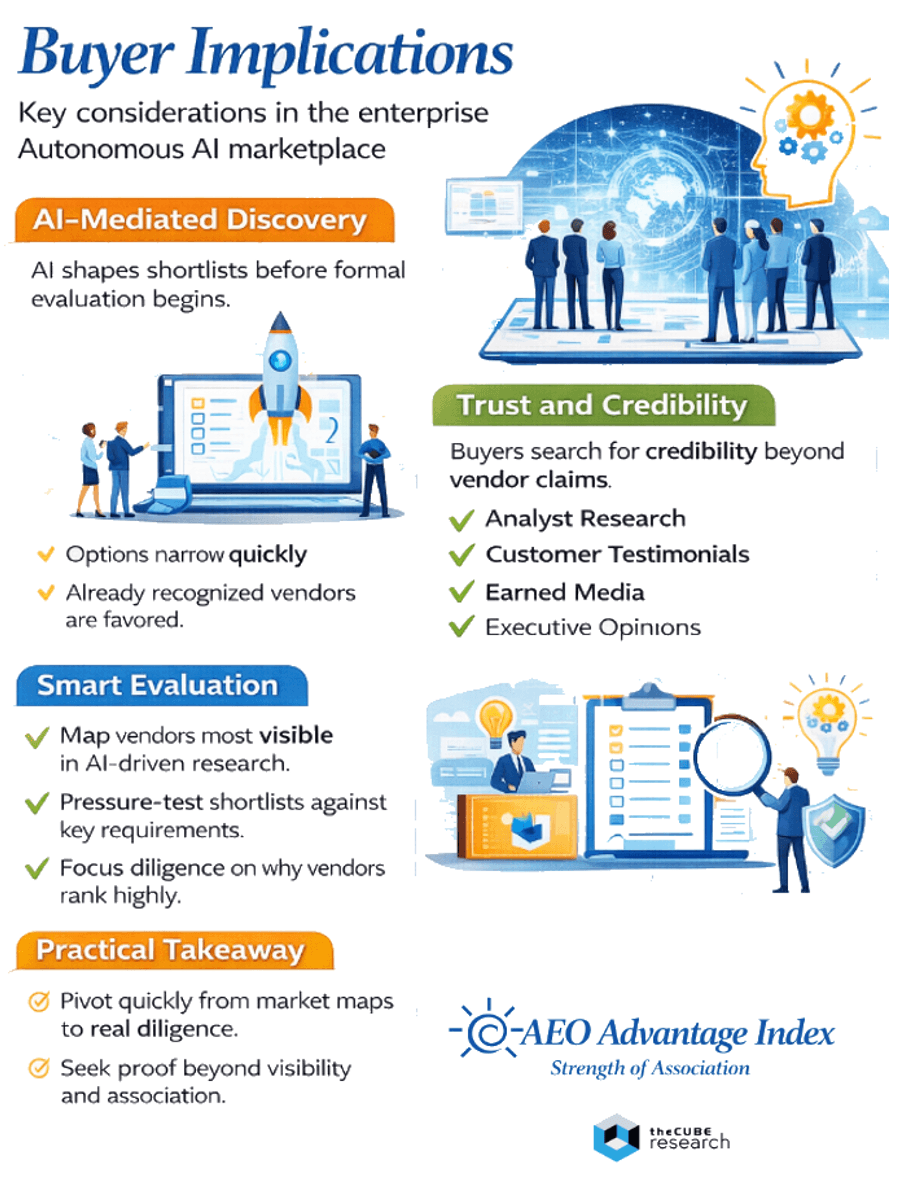

Buyer Implications

For enterprise buyers, this market view is valuable not because it declares a winner, but because it shows how the market is increasingly being experienced through AI-mediated discovery. Different buyer groups enter the category with different priorities, but they increasingly encounter the same dynamic: shortlists are shaped before the formal evaluation process begins. AI engines are shaping how the category is described and what evaluation criteria buyers see. This can quickly narrow the field to the vendors that the engines associate most strongly with the category.

The more critical issue, however, is trust. In AI buying journeys, buyers aren’t just assessing product claims. They are assessing vendor credibility, authority, and authenticity. That’s why independent third-party ecosystems are so important. Analyst opinions, customer stories, practitioner insights, executive perspectives, earned media, and partner references all help determine whether a vendor is perceived as credible. These signals often outweigh what a vendor claims about itself.

Enterprise buyers should view this in three ways:

- Treat it as a map of initial market visibility. If research begins through AI assistants, these vendors are more likely to appear early in responses, comparisons, and synthesized shortlists.

- Distinguish category association from fit-for-purpose suitability. A vendor may be strongly associated with the category overall but still not be the right fit for a given workload, regulatory posture, or operating model. The right next step is not to accept the AI shortlist at face value, but to pressure-test it against specific use cases.

- Use category association to sharpen diligence questions. If a vendor is highly associated, ask why. Is it because the platform is genuinely differentiated for autonomous operation and AI-native workflows? Or is it because the vendor has a stronger ecosystem presence and greater credibility?

Buyers should also seek proof that goes beyond mere association. Insist on evidence of customer outcomes, governance, interoperability, and measurable business value. In an AI-driven market, the vendor most visibly linked to a category is not always the best equipped to deliver the desired outcome.

The practical takeaway is to use AI engines intentionally. Begin with category-level exploration, then quickly shift to persona-specific questions about your needs. The more accurately buyers frame these questions, the more likely they are to move beyond market associations and toward a trusted shortlist.

The Bottom Line

The autonomous AI database market is currently evolving in two arenas at once: the conventional market arena of products, ecosystems, and enterprise sales, and the AI arena of learned association, retrieval preference, and answer-set inclusion. This strength-of-association perspective reflects the second arena.

Its importance is strategic. It reveals which vendors AI engines already connect most strongly to the category before formal evaluation begins. That is not the same as winning the deal, but it increasingly determines who enters the conversation early, who anchors the market narrative, and who is most likely to make the initial shortlist.

In AI-driven buyer journeys, that early advantage compounds. Vendors that are clearly understood, credibly validated, and repeatedly surfaced gain a structural edge in the market experience. In this context, the strength of association is no longer just a branding artifact. It becomes a vital competitive asset.

About the AEO Advantage Index

The AEO Advantage Index is a research-grade diagnostic and decision-making framework that helps organizations understand how AI engines interpret their brand, category position, and competitive relevance. It goes beyond surface-level visibility tracking to uncover what is happening, why it is happening, and how to outperform.

The framework diagnoses the entire AEO influence chain: how effectively AI engines understand who you are, what you offer, and why it matters; how relevant you stay as buyer questions go from discovery to evaluation; how easily your claims and proof can be found and cited; and how strongly external signals of credibility, authority, authenticity, and trust reinforce your market position. It is not just a scoring system. It provides a structured way to identify root causes, benchmark performance, and prioritize actions to build a durable AEO advantage.

Ultimately, the AEO Advantage Index transforms AI-engine behavior into practical insights. The result is not just a score but a collection of diagnostic benchmarks, decision frameworks, and impact-prioritized actions that help brands close the most critical gaps.

In AI-mediated buyer journeys, the issue is no longer just whether your content exists. It is whether AI engines can understand it, trust it, and use it to shape market perception and buyer decisions. To learn more about the AEO Advantage Index and how it can be applied to your brand, market category, or competitive landscape, contact us on LinkedIn or at theCUBE Research.

To better understand how AI engines go about deriving their answers, read AI-engine Optimization (AEO): How to get Cited.

📊 More Research: https://thecuberesearch.com/analysts/scott-hebner/

🔔 Next Frontiers of AI Digest: https://aibizflywheel.substack.com/welcome