This Agentic AI Solutions review examines Hyperscience Hypercell and its positioning at the “inference inflection point,” where enterprise AI success shifts from model capability to the operationalization of trusted, governed inference at scale.

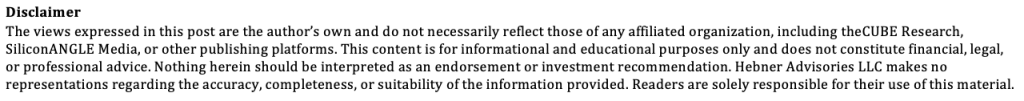

Hyperscience is expanding beyond intelligent document processing to establish a foundational inference-control layer for digital labor, ensuring that unstructured enterprise data is transformed into accurate, auditable, and decision-ready inputs for agentic systems.

Takeaways:

- Hyperscience is not another AI application; it is an architectural control point. The company is positioning Hypercell as the inference-control layer that sits between enterprise data and AI factories, enabling organizations to move from fragmented automation to production-grade, decision-driven digital labor.

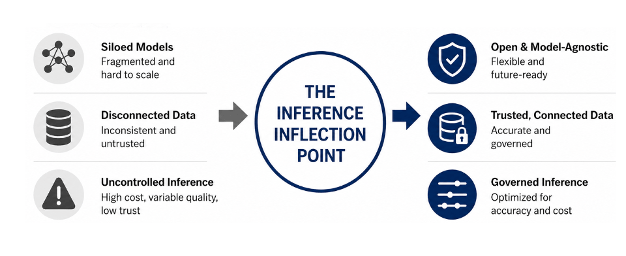

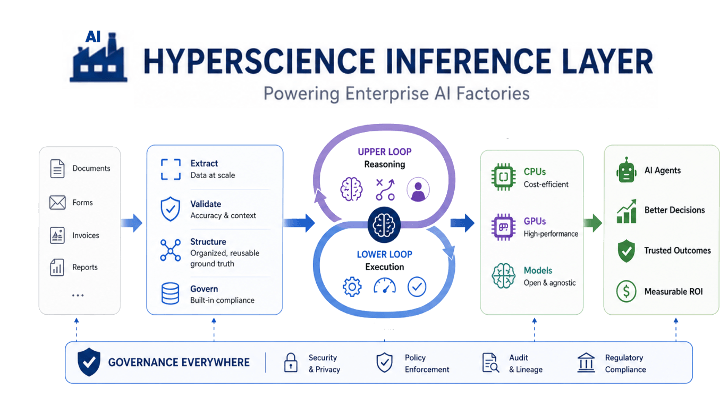

- The shift is from model performance to decision integrity. As enterprises scale agentic AI, the limiting factor is no longer model capability but the quality, governance, and economic efficiency of inference. Hyperscience addresses this by separating high-volume execution from high-value reasoning in the inference pipeline.

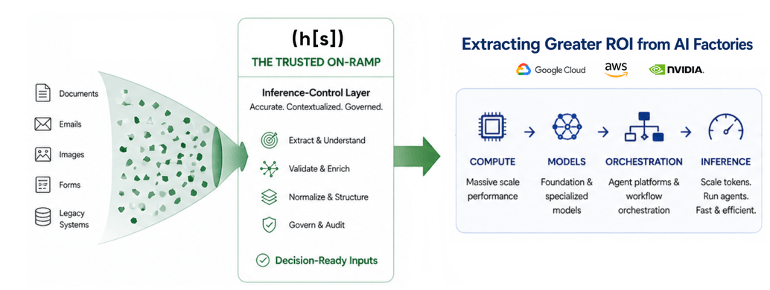

- Differentiation lies in being the “trusted on-ramp” to AI factories. While hyper-scalers and infrastructure providers such as Google, AWS, and NVIDIA focus on compute, models, and orchestration, Hyperscience ensures that what enters those systems is accurate, contextualized, and safe to act on. This makes it a trusted inference layer for AI factories: complementary to them and increasingly essential in enterprise AI architectures.

- The ROI case is grounded in decision economics, not just efficiency. Hyperscience ties value to measurable outcomes such as faster adjudication, reduced error rates, and lower cost per decision. Real-world deployments using Hyperscience Hypercell demonstrate workflow-level impact in high-stakes environments.

- Our AnalystANGLE: Hyperscience addresses the most underdeveloped layer in enterprise AI. In a crowded market where claims outpace proof, its strength lies in linking architecture to measurable outcomes. Buyers will increasingly prioritize vendors that can demonstrate trusted, governed execution at scale. Hyperscience’s success will depend on gaining visibility as the inference-control layer in enterprise architectures.

The Inference Inflection Point

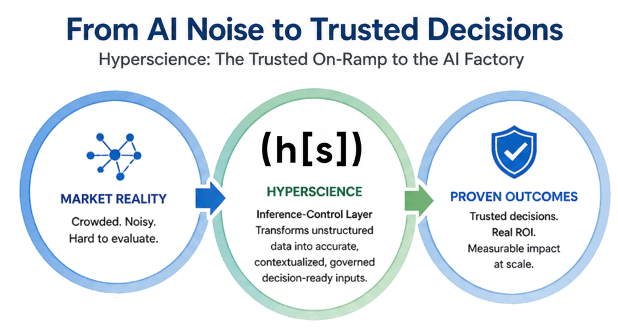

The journey to ROI in the enterprise is entering a new phase. It’s not just about model innovation; it’s about operationalizing inference at scale in key business processes where trust, resiliency, cost control, and decision integrity are now top priorities. The market data underscores how quickly this shift is unfolding. Most companies now run at least some AI agents in production, and that exposes a fundamental truth: the primary constraint to scaling digital labor ROI is not model capability but operational fit.

Against this backdrop, at Google Cloud Next 2026, Hyperscience positioned itself squarely around what it calls the “inference inflection point.” This signals a meaningful evolution: complementing intelligent document processing with foundational AI infrastructure that provides trusted inference for AI factories. Within enterprise architectures, the positioning rests on three elements:

- Dynamic routing of complex document workloads across compute architectures to achieve mission-critical accuracy at the lowest practical cost per transaction.

- An open, model-agnostic framework that gives enterprises hardware and model flexibility without lock-in.

- Delivery of measurable impact (ROI) in highly demanding regulatory environments, with Deep Analysis naming Hypercell for SNAP its 2026 Solution of the Year in the public sector.

That combination of cost governance, architectural optionality, and third-party validation aligns directly with the priorities CFOs, CAIOs, and CIOs now place ahead of raw capability. With 6 in 10 expecting measurable AI ROI improvements, it’s clear that the pilot era is ending. The operating era is beginning.

This shifts the question. Winners will not ask, “Which model should we use?” They will ask, “Can we trust the data, govern the inference, and operationalize decisions?” That is where Hyperscience is placing its bet. The company is building an inference infrastructure for digital labor: a production-grade layer that reads, validates, and governs enterprise data before agents act on it.

Hyperscience’s Hypercell, in effect, becomes the trusted inference layer for agentic AI, sitting between systems of record, AI factories, and agentic workflows. This matters because if data is stale, fragmented, or wrong, agents do not just make mistakes; they scale them. Bad inputs lead to bad decisions, and bad decisions lead to operational, financial, regulatory, and human risks. This is the gap the market has under-addressed.
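The idea of validating data before an agent acts on it can be illustrated with a minimal Python sketch. It is not a description of how Hypercell works; the `ExtractedRecord` type, the `gate` function, the field names, and the thresholds are all hypothetical and chosen only to show the pattern: check completeness, confidence, and freshness, and return the reasons for any refusal so they can be logged for audit.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class ExtractedRecord:
    """Hypothetical output of a document-extraction step."""
    fields: dict            # field name -> extracted value (None if missing)
    confidence: float       # 0.0 to 1.0, as reported by the extractor
    extracted_at: datetime  # timezone-aware timestamp


def gate(record, required, min_confidence, max_age, now):
    """Return (allowed, reasons). The agent may act only when reasons is empty."""
    reasons = []
    missing = [f for f in required if record.fields.get(f) is None]
    if missing:
        reasons.append("missing fields: " + ", ".join(missing))
    if record.confidence < min_confidence:
        reasons.append("low confidence")
    if now - record.extracted_at > max_age:
        reasons.append("stale data")
    return (not reasons, reasons)


# Illustrative values only: a claim record with one required field missing.
now = datetime(2026, 1, 1, tzinfo=timezone.utc)
record = ExtractedRecord(
    fields={"claim_id": "C-1", "amount": None},
    confidence=0.97,
    extracted_at=now - timedelta(days=2),
)
allowed, reasons = gate(
    record,
    required=["claim_id", "amount"],
    min_confidence=0.90,
    max_age=timedelta(days=30),
    now=now,
)
print(allowed, reasons)
```

In this sketch the record is blocked because a required field is missing, and the returned reasons double as a simple audit trail for why the agent was not allowed to proceed.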

That positions Hyperscience as a foundational infrastructure layer within agentic architectures, built for environments where accuracy, auditability, compliance, and economic efficiency are non-negotiable. Operationalizing trusted inference for AI factories is a fundamental tenet of success, and Hyperscience may well hold the key to unlocking greater ROI.

Strategic Implications

Hyperscience is positioning itself at a control point in the emerging agentic enterprise architecture: transforming high-volume enterprise data into governed, decision-ready “ground truth.” This is not an incremental evolution of intelligent document processing; it is a redefinition of inference as an operational discipline. At its core is a shift from model-centric to pipeline-centric architectures.

As enterprises scale AI factories, the constraint shifts upstream. The ability to dynamically orchestrate inference across compute environments, models, and workflows is the key determinant of both cost efficiency and decision integrity. Hyperscience’s approach to “inference layering” and its dual-loop model directly addresses this challenge by separating high-volume execution from high-value reasoning while preserving governance.

This has three strategic implications for enterprises:

- Inference becomes an economic lever: Routing workloads across CPUs, GPUs, and models enables enterprises to optimize the cost per decision, not just the cost per token. This is essential because inference costs climb steeply as the number of agents grows.

- Ground truth becomes a competitive asset: The shift from extracting a handful of fields to hundreds per document highlights a new data bottleneck. Enterprises that can industrialize this layer will outperform those that rely on fragmented or low-fidelity data.

- Governance moves into the runtime: Auditability, compliance, and data validation are no longer post-process controls. They must be embedded directly in the inference pipeline to support regulated, high-stakes workflows and the much-needed trust required to achieve enterprise ROI.
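The difference between cost per token and cost per decision can be made concrete with a small Python sketch. All of it is an illustrative assumption rather than Hyperscience's method: the `Route` type, the route names, the compute prices, the accuracy figures, and the cost of fixing an error are hypothetical. The sketch treats the cost of a decision as compute cost plus the expected cost of remediating a wrong answer, then picks the cheapest route that still meets an accuracy floor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    """A hypothetical inference route (names and numbers are illustrative)."""
    name: str
    compute_cost: float  # dollars of compute per document
    accuracy: float      # fraction of decisions that are correct


def cost_per_decision(route: Route, error_cost: float) -> float:
    """Expected cost of one decision: compute plus expected cost of fixing errors."""
    return route.compute_cost + (1.0 - route.accuracy) * error_cost


def pick_route(routes: list[Route], min_accuracy: float, error_cost: float) -> Route:
    """Choose the cheapest route per decision among those meeting the accuracy floor."""
    eligible = [r for r in routes if r.accuracy >= min_accuracy]
    if not eligible:
        raise ValueError("no route meets the accuracy floor")
    return min(eligible, key=lambda r: cost_per_decision(r, error_cost))


ROUTES = [
    Route("cpu_small_model", compute_cost=0.002, accuracy=0.95),
    Route("gpu_large_model", compute_cost=0.020, accuracy=0.995),
]

# Low-stakes workflow: a mistake costs $0.10 to fix.
low = pick_route(ROUTES, min_accuracy=0.90, error_cost=0.10)
# High-stakes workflow: a mistake costs $25 to fix.
high = pick_route(ROUTES, min_accuracy=0.90, error_cost=25.0)
print(low.name, high.name)
```

With these made-up numbers, the cheaper CPU route wins when errors are cheap to fix, while the more expensive GPU route wins when errors are costly, even though its compute cost is ten times higher. That is the sense in which the cheapest compute is not necessarily the cheapest decision.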

As Andrew Joiner, CEO of Hyperscience, frames the company’s strategic purpose:

“The winners of the AI era won’t be those with the biggest models, but those who best operationalize their proprietary data through a trusted, industrial-grade inference pipeline.”

In effect, Hyperscience is building the “on-ramp” to AI factories by delivering a trusted inference layer for agentic AI, ensuring that what flows into agentic systems is accurate, contextualized, and governed. Without this layer, enterprises risk scaling flawed decisions. This is critical as digital labor moves from isolated automation to consequential decision-making, where trust becomes the gating factor for ROI and adoption.

AnalystANGLE – Our Take

Hyperscience occupies a strategically important position in the evolving enterprise AI stack: the inference-control layer that transforms unstructured enterprise data into decision-ready inputs. That positioning is becoming more critical, and it must stand out in a crowded enterprise AI marketplace. The rise of AI factories has triggered an explosion of vendors across every layer of the stack. The result is a noisy landscape where capability claims are abundant, but proven, production-grade outcomes remain scarce.

From an enterprise investment perspective, our Agentic AI Futures Index survey (n=625) highlights the importance of this space, with 73% of respondents planning to increase spending on trust and governance over the next 18 months. Furthermore, Gartner has warned that more than 40% of agentic AI projects could be canceled by 2027, with escalating costs among the reasons.

In addition, as AI-mediated buyer journeys become standard, market positioning will increasingly depend on the linkage between claims and proof. Enterprises are now asking a more direct question: Where is the evidence that this works at scale, in my environment, with my constraints?

Hyperscience appears well-positioned to provide credible ROI proof points. Its work with the U.S. Department of Veterans Affairs, reducing claims processing timelines from months to days, represents a clear ROI signal. Its traction in public-sector programs such as SNAP further reinforces its focus on high-volume, high-stakes workflows where accuracy, auditability, and throughput directly translate into measurable economic outcomes.

Equally important is how Hyperscience differentiates within the broader AI factory narrative. Hyper-scalers and infrastructure providers such as Google Cloud, AWS, and NVIDIA are defining the AI factory as a system of compute, models, and orchestration designed to scale token generation and agent execution. Their value is foundational: enabling enterprises to build and run AI systems at scale. Hyperscience operates one layer up the stack, and, critically, one layer earlier in the value chain. It is not the factory. It is the trusted on-ramp into the factory. Its focus is on ensuring that what enters these factories is accurate, contextualized, and governed.

This distinction is consequential. AI factories optimize how fast and cheaply inference can run; Hyperscience optimizes whether that inference is correct, auditable, and safe to act on. The emphasis shifts along three lines:

- From model performance to decision integrity

- From token economics to decision economics

- From automation at scale to trusted execution at scale

The bottom line is that Hyperscience’s differentiation lies not in competing with AI factories, but in extracting greater ROI from them in real-world, high-stakes deployments. For enterprises, the implication is clear: without a governed inference layer, scaling AI may simply mean scaling risk.
