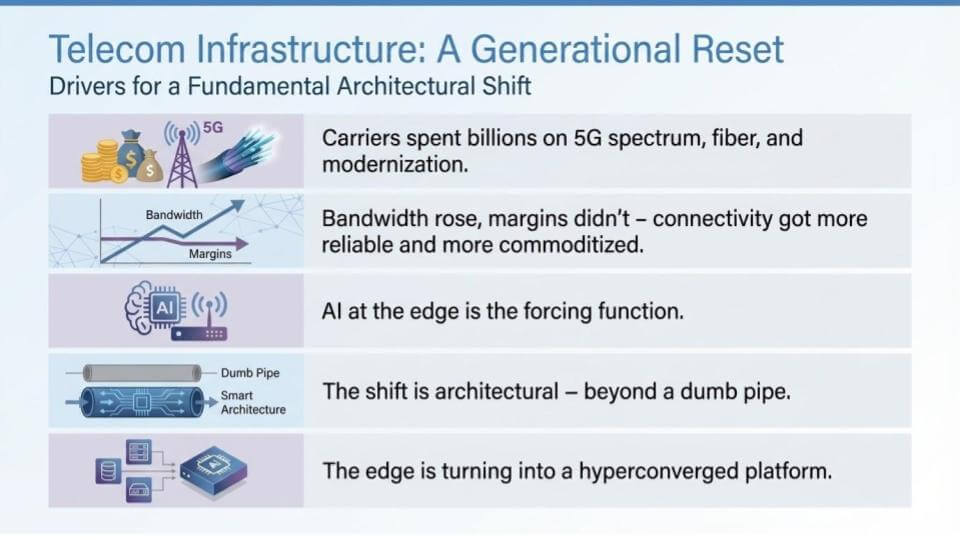

Our main thesis coming out of MWC 2026 is that telecom is staring at a once-in-a-generation infrastructure reset. Carriers poured billions into 5G spectrum, fiber expansion, and network modernization on the promise that faster networks would unlock new enterprise revenue. Bandwidth rose, margins didn’t. Connectivity got more reliable, but at the same time, it commoditized. Now, AI at the edge changes the economics of remote computing. A simple infrastructure refresh cycle won’t cut it. We’re talking about an architectural shift where the edge becomes more intelligent and goes beyond just moving packets around. We see the edge as the place where AI workloads run natively. This means security and policy are enforced, compute is managed, and systems are orchestrated at the edge, outside of the traditional data center.

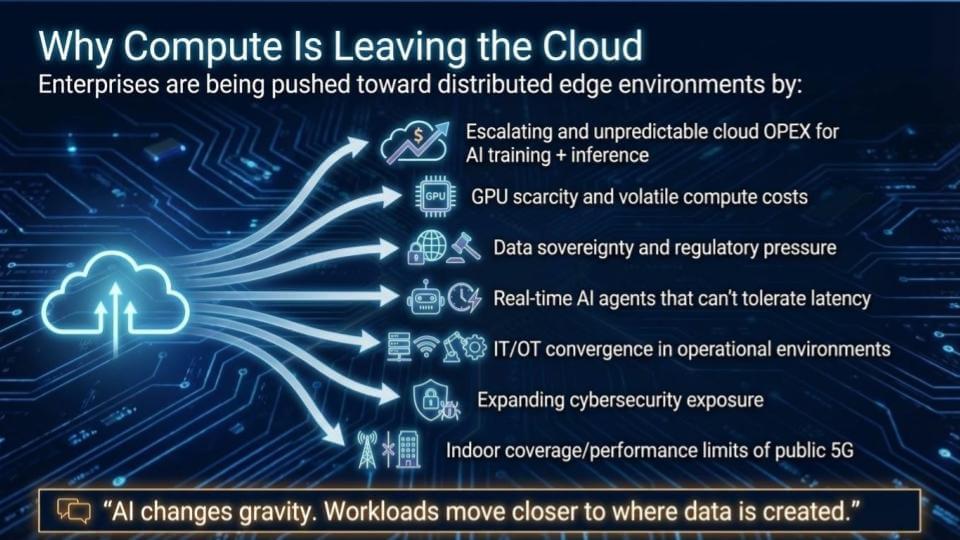

Further, our view is that compute moves closer to where data is created. Think factories, hospitals, warehouses, retail stores, campuses. Why is this? We cite the following contributors to this trend: 1) Cloud OpEx is rising; 2) GPUs are in short supply; 3) Sovereignty edicts are on the upswing; and 4) Latency is a constraint because AI agents need real-time responsiveness.

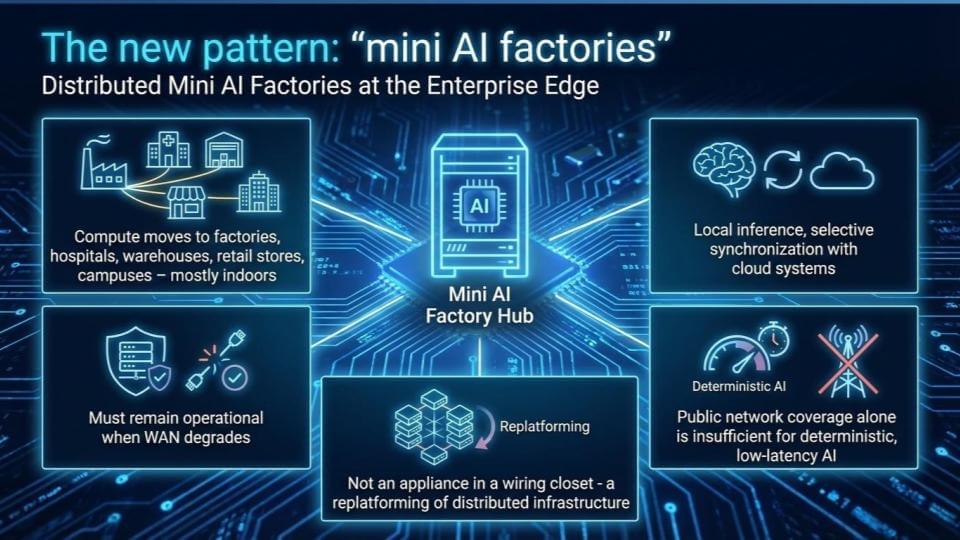

The result, in our view, is a new vision, where distributed “mini AI factories” operate (often indoors) at the enterprise edge. We believe this demands an entirely new platform model that we call the hyperconverged edge, which is governed through unified control planes.

In this Breaking Analysis, we unpack why today’s legacy telco monetization model breaks, what a hyperconverged edge stack actually looks like, and how carriers can move from dumb pipe providers to platform operators before the window of leadership closes. We do so by going deeper into a recent research report from John Furrier on the Hyperconverged Edge.

A generational reset for telecom infrastructure

Telecom is entering a generational reset. Carriers spent billions on 5G spectrum, fiber, and modernization. Bandwidth rose, cost per bit fell, and connectivity became more reliable and more commoditized. The edge is now the forcing function because the economics are changing.

We emphasize that this is a platform shift. The edge is turning into a hyperconverged environment where compute, storage, networking, and orchestration collapse into a single operational model – and telecom adds a unique ingredient – i.e., radio. Recall that data center virtualization unified compute, storage, and networking and set up the cloud era. The same dynamic is now playing out at the edge, but with multi-access connectivity and wireless as native building blocks.

Our premise is that putting intelligence at the edge unlocks new capabilities that show up over time: local inference, agent-driven operations, real-time optimization, and a tighter loop between the physical network and the software that manages it. Multi-access connectivity and seamless meshing across Wi-Fi, 4G, 5G, 6G, and fiber become fundamental. Virtualized radio expands what can be orchestrated, automated, and optimized.

The strategic point is that carriers already have the facilities and the footprint. Central offices, towers, and distributed edge locations represent latent capacity – i.e., power, real estate, and operational reach – that can be converted into GPU-enabled “mini AI factories” over time. This opens up a new business model, moving telcos from bandwidth providers into platform economics. And the scale is already showing up in production. As an example from our CUBE interview this week at MWC, AT&T is generating 27 billion tokens a day.

Why compute is moving closer to the edge

AI is shifting where compute has to live because it is pulling workloads closer to where data is created. There’s data in the cloud, data on-prem, and a growing amount of data at the edge. When the goal is real-time inference and low-latency action, round trips back to centralized clouds stop working.

We note a set of six forces pushing enterprises toward distributed edge environments:

- Cloud OpEx for AI training and inference is escalating and unpredictable, and the complaints about cloud cost aren’t slowing down.

- GPU supply is improving, but demand still exceeds supply, which keeps compute costs volatile and unpredictable. And with memory prices rising, expect cloud vendors to hold or increase pricing to preserve margin.

- Data sovereignty edicts are rising fast, with regulatory pressure and national agendas pushing “sovereign AI.”

- Agents need real-time access, and latency becomes a fundamental constraint.

- IT and OT are converging, which expands the threat matrix and increases security exposure.

- Public 5G has indoor coverage and performance limits, which matters because much of enterprise work happens indoors.

Despite the provocative title of the slide above, compute isn’t so much leaving the cloud as it is expanding everywhere. The cloud remains, but it’s becoming distributed. That’s what hybrid really means in this era – distributed hyperscale capability, deployed across edge, metro, and core data centers.

Recent events – e.g., AWS outages due to the Middle East war – have reinforced why this is so important. When centralized regions go down, the impact is immediate, and the blast radius is broad. It strengthens the case for a converged edge where workloads can keep running even when the WAN performance degrades.

The other key point is that radio becomes the interface. Wireless connectivity is needed for robotics, manufacturing, and systems that require always-on behavior. Radio frequency can mesh and create new networking dynamics, and when that mesh connects into a factory and the backbone, it creates an advantage for placing models where they belong and doing real-time analysis. That’s where the control plane and GPUs (and CPUs for orchestration) have to sit. The edge can be tiny, small, medium, or large. The requirement is similar in that local intelligence needs to be close to the physical environment, with distributed coordination across the network.

Mini AI factories: The edge becomes a production environment

The edge is turning into a production environment. It’s no longer a branch office with a device in a closet. The mini AI factory idea is that compute moves into retail stores, warehouses, hospitals, campuses – mostly indoor locations – and those sites have to stay up when WAN performance degrades. Local inference must often run in real time. Cloud synchronization will happen when it can, but the production work can’t depend on it.
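
To make the "local first, sync when possible" idea concrete, here is a minimal Python sketch. It is an illustration under our own assumptions, not a reference design: the `EdgeNode` class, the `threshold_model` stub, and the 80.0 cutoff are all hypothetical names and values. The point it shows is that the decision path never waits on the WAN, while results queue up locally and drain once the link returns.

```python
from collections import deque
from typing import Callable, Deque, Dict, List


class EdgeNode:
    """Hypothetical edge node: decides locally, syncs to the cloud opportunistically."""

    def __init__(self, infer: Callable[[float], str]) -> None:
        self.infer = infer                    # local model, supplied by the caller
        self.pending: Deque[Dict] = deque()   # results not yet uploaded

    def handle(self, reading: float) -> str:
        # The decision is made on-site and never waits on the WAN.
        decision = self.infer(reading)
        self.pending.append({"reading": reading, "decision": decision})
        return decision

    def sync(self, wan_up: bool, upload: Callable[[Dict], None]) -> int:
        # Drain the backlog only when the link is available; otherwise keep it.
        if not wan_up:
            return 0
        sent = 0
        while self.pending:
            upload(self.pending[0])   # upload first ...
            self.pending.popleft()    # ... and drop the record only after it succeeds
            sent += 1
        return sent


def threshold_model(reading: float) -> str:
    # Stand-in for a real local model; the 80.0 cutoff is arbitrary.
    return "alert" if reading > 80.0 else "ok"


node = EdgeNode(threshold_model)
cloud: List[Dict] = []  # stand-in for a cloud endpoint

decisions = [node.handle(r) for r in (72.5, 91.0, 64.2)]
sent_while_down = node.sync(wan_up=False, upload=cloud.append)
sent_after_recovery = node.sync(wan_up=True, upload=cloud.append)
```

In this run the three decisions are produced while the WAN is down, nothing is uploaded during the outage, and all three records reach the stand-in cloud once the link recovers. A production system would add durable storage and retry logic, which this sketch omits.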

A mini AI factory is a connected compute node on the network. If the central AI factories are the multi-billion-dollar data centers Nvidia and others are building, the edge becomes a distributed node that can participate in that network. The footprint of that node is constrained by power, space, and connectivity. A tower might have a cabinet, a shed, or a small building at the base – a place where a rack can fit, or a space where a smaller form factor device can sit. The node can be tiny, or it can be more substantial. Jetson-class embedded systems are a good mental model for what “mini” looks like, and a 1U-class device is an obvious next step as these footprints get productized.

Once intelligence is placed there, the edge can make decisions locally. It can identify who the user is, what network the traffic is coming from, whether it’s Wi-Fi or fiber, and it can reason, learn, and coordinate with other nodes. The interesting part is not just the local work, it’s coordination across a distributed fabric – one node can talk to others and pull what it needs when it needs it.

Determinism is a driving function. The old approach – moving packets from point A to point B using static rules – doesn’t scale in a world with massive device counts, mixed access types, and real-time AI requirements. Only AI can manage the complexity because there are too many variables and too many endpoints for humans to adjudicate and optimize. That’s why the mini AI factory becomes the key ingredient. The edge doesn’t work at scale without it.

The carrier angle is the opportunity. Telcos already have the footprint – towers, central offices, and dense access networks – and they can turn that into differentiated services. If the edge is hyperconverged – compute plus networking plus orchestration tied together – carriers can sell higher-value services that sit next to connectivity. Think consumer, enterprise, public sector, and specialized use cases with different trust and policy requirements.

The bottom line is that once intelligence is deployed as a distributed set of nodes, networking becomes a force multiplier. The radios, the access layer, and the backhaul stop being just transport. They become the fabric that makes the mini AI factories usable and monetizable at scale.

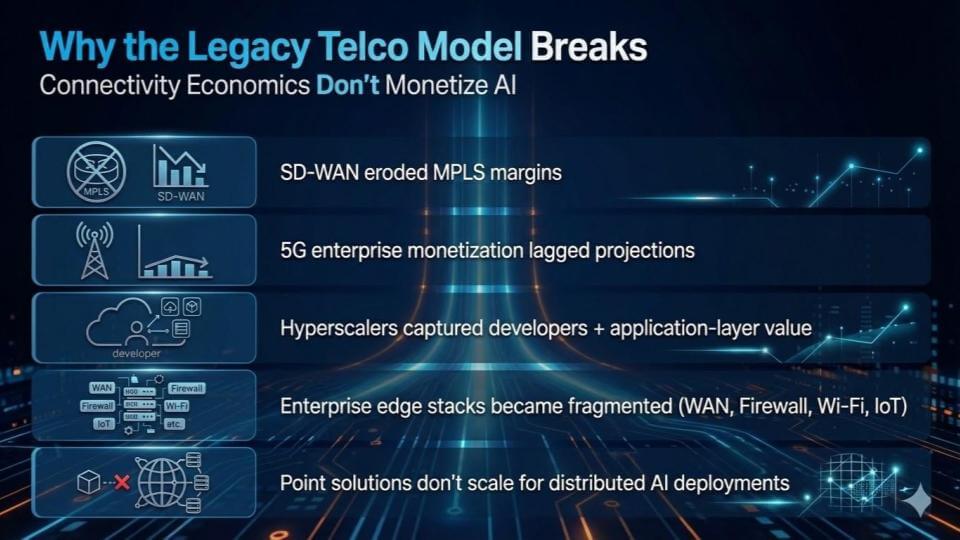

Why the legacy telco model breaks

The legacy telco monetization model sells access. Telcos are great at connectivity. That model ran into a wall in the last cycle. SD-WAN took a direct shot at MPLS margins by making pathing smarter and cheaper with rules-based control. Then, 5G arrived with better performance and lower latency, but the monetization never showed up at the scale carriers forecast. People didn’t pay more for 5G. In many cases, they paid less than they did for 4G. Meanwhile, over-the-top (OTT) players such as Netflix continued to win the monetization game.

The core issue for telcos has been lack of applications. 5G was a good spec, but there weren’t enough new applications that made customers willing to pay up. And while carriers were trying to monetize access, hyperscalers captured developers and the application layer where the real value sits.

Meanwhile, enterprise edge stacks turned into a mess – WAN devices, firewalls, Wi-Fi, IoT gateways, servers, etc. – all managed separately. Point solutions don’t scale when AI workloads are distributed and need consistent policy, security, and lifecycle management across thousands of sites.

The framework we see is a new monetization model that sells outcomes through novel services. That requires a mindset change inside carriers. The business can’t be run like a connectivity company that thinks in revenue per user and lives off upgrading subscribers to new plans. It has to be run like a managed platform that sells a growing set of services, priced by usage, with a buyer audience that understands what it’s paying for.

That’s where the edge platform becomes a potential economic flywheel. Once services show up at the edge – agent workloads, identity and security, policy enforcement, deterministic performance – carriers can start charging for more than transport. The opportunity is tied to what users and enterprises will actually consume. If agent marketplaces take off, those agents have to run somewhere. If users want a secure experience at a sports event, on a train, or in a factory, the network has to deliver it locally and reliably. That can be monetized – but only if carriers move from “connectivity seller” to “platform operator” and start packaging and selling services, not just access.

What is the “hyperconverged edge,” really?

The simplest way to understand the hyperconverged edge is compute, networking, storage, and Wi-Fi (and more) brought together at the edge in a small container system, operated through a unified control plane. The requirement is turning site-level complexity into centrally managed, governed policy so it works the same way across thousands of locations.

The components are critical, but the outcome is what’s really important here. The compute is heterogeneous because different jobs need different silicon – CPUs, GPUs, other accelerators, lower power profiles. Storage is local and distributed. Connectivity is multi-access – licensed spectrum, Wi-Fi, fiber nodes that connect to radios, and backhaul links. Security and governance are built in, not bolted on. Kubernetes-class orchestration is in the mix as shown above because the runtime has to handle distributed workloads. Fleet lifecycle management becomes compulsory because no one is going to manage this site by site.

The iPhone analogy is relevant here because it’s a hyperconverged device. People don’t care what’s inside their iPhone. It works. The iPhone wasn’t an incremental improvement to the iPod. It was an architectural reset. That’s the point here. The edge has lived through years of incremental upgrades and point solutions. This shift is architectural.

Security is one of the big drivers. Distributed intelligence reduces the “one location takes it down” risk, and cybersecurity can be built directly into the node. The push toward distributed resilience is getting reinforced by current events, along with the steady drumbeat of ransomware and attack surface expansion.

The “brain” at the edge is the AI factory. A brain belongs at the edge because decisions have to be made locally, under tight latency and reliability constraints. That’s why embedded systems are relevant. Nvidia’s Jetson is already a developer platform for this direction, and it points to where the market is going. The next step is factory-to-factory distributed computing across AI factories (scale across).

And it doesn’t stop at the edge. Metro comes into play as well. Cities will have their own factory footprints because the topology goes where network access lives – metros, edge, home. The wireless side becomes critical because it’s the main interface. If the radios can mesh and the complexity gets abstracted away, the platform model kicks in. It’s a big project, but major advantages accrue to the winners.

Telcos’ “last chance” at a new monetization opportunity

The telco business model has been trapped in a familiar loop. Massive investment goes into fiber and spectrum. Connectivity improves. Cost per bit keeps falling. Over-the-top players capture the relationship with the user and most of the upside. Some carriers tried to move up the stack into content, and it didn’t work. 5G was supposed to be the monetization lever – it wasn’t. Carriers built a better network, and the market forced prices down anyway.

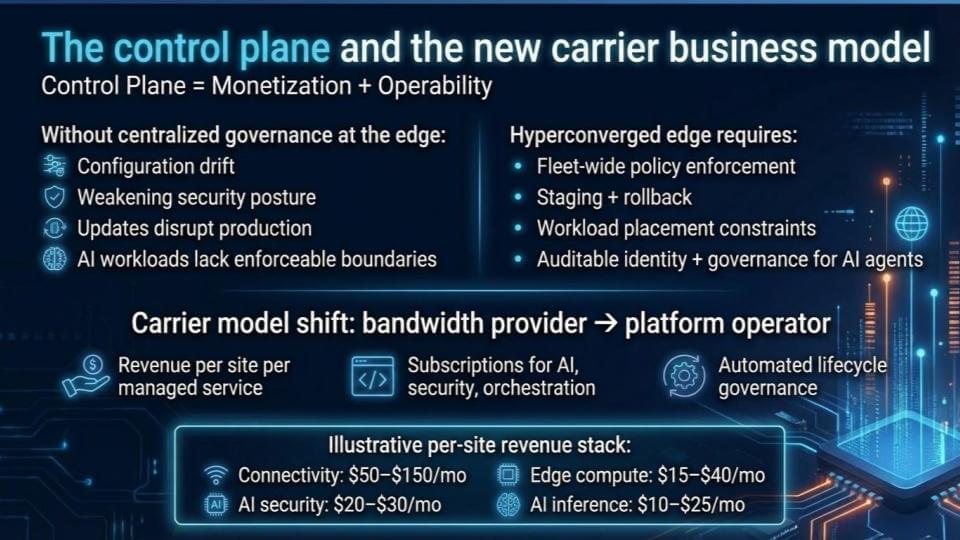

The path out of this endless cycle, in our view, is a carrier model that shifts from bandwidth provider to platform operator. The control plane is the linchpin because it connects monetization to operability and quality.

Without centralized governance at the edge, distributed deployments turn into a tax. As we cite in the graphic above, you get configuration drift, weaker security posture, updates that are disruptive, and AI workloads that “float” without enforceable boundaries. A hyperconverged edge requires estate-wide policy enforcement, staging and rollback recovery, workload placement constraints, and auditable identity and governance for AI agents. That’s what makes the edge operable at scale.
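
As a rough illustration of two of those requirements, drift detection and workload placement constraints, consider the Python sketch below. Everything in it is hypothetical: the `BASELINE` keys, the site names, and the rule that a workload may only land on a compliant site in its own region are assumptions chosen for the example, not a description of any vendor's control plane.

```python
from typing import Dict, List

# Hypothetical estate-wide baseline; the keys and values are illustrative.
BASELINE: Dict[str, str] = {
    "firmware": "2.4.1",
    "tls_min": "1.3",
    "agent_identity": "required",
}


def find_drift(site_config: Dict[str, str]) -> Dict[str, str]:
    """Return the settings where a site differs from the baseline."""
    return {
        key: site_config.get(key, "<missing>")
        for key, expected in BASELINE.items()
        if site_config.get(key) != expected
    }


def allowed_sites(workload: Dict[str, str], sites: Dict[str, Dict[str, str]]) -> List[str]:
    """Assumed placement rule: same region as the workload and no drift."""
    return sorted(
        name
        for name, cfg in sites.items()
        if cfg.get("region") == workload["region"] and not find_drift(cfg)
    )


# Made-up fleet of three sites; store-042 is running stale firmware.
sites = {
    "store-017": {"region": "eu", "firmware": "2.4.1", "tls_min": "1.3", "agent_identity": "required"},
    "store-042": {"region": "eu", "firmware": "2.3.9", "tls_min": "1.3", "agent_identity": "required"},
    "clinic-003": {"region": "us", "firmware": "2.4.1", "tls_min": "1.3", "agent_identity": "required"},
}

drift_report = {name: find_drift(cfg) for name, cfg in sites.items() if find_drift(cfg)}
eligible = allowed_sites({"name": "vision-inference", "region": "eu"}, sites)
```

Here `drift_report` flags only `store-042` for its firmware version, and `eligible` contains only `store-017`, because the other EU site has drifted and the clinic sits in a different region. The design choice worth noting is that placement consults the same policy check as the drift audit, so a workload cannot "float" onto a site that fails governance.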

Once that foundation is in place, the revenue model opens up. The unit becomes the site that can drive revenue. The monetization becomes managed services, delivered as subscriptions – connectivity, AI security, orchestration, lifecycle governance, and eventually, inference. The per-site revenue stack shown above is illustrative but makes the point. Connectivity in the $50–$150 per month range; AI security $20–$30; edge compute $15–$40; inference $10–$25. The exact numbers will vary, but the logic is the same – more services per site, priced and managed as a platform.
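
The arithmetic behind that stack is simple enough to sketch in a few lines of Python. The ranges come from the illustrative figures above; treating every range as a monthly per-site price is our assumption, and the 1,000-site fleet is a made-up number used only to show how the total scales.

```python
from typing import Dict, Tuple

# Illustrative per-site ranges in USD, taken from the figures above.
# Assumption: every range is a monthly price, like the connectivity range.
STACK: Dict[str, Tuple[int, int]] = {
    "connectivity": (50, 150),
    "ai_security": (20, 30),
    "edge_compute": (15, 40),
    "inference": (10, 25),
}


def stack_total(stack: Dict[str, Tuple[int, int]]) -> Tuple[int, int]:
    """Sum the low ends and the high ends of each service range."""
    low = sum(lo for lo, _ in stack.values())
    high = sum(hi for _, hi in stack.values())
    return low, high


total_low, total_high = stack_total(STACK)

# Revenue layered on top of connectivity alone.
added = {name: rng for name, rng in STACK.items() if name != "connectivity"}
added_low, added_high = stack_total(added)

SITES = 1_000  # hypothetical fleet size, for scale only
annual_low = total_low * SITES * 12
annual_high = total_high * SITES * 12

print(f"Per site per month: ${total_low}-${total_high}")
print(f"Added on top of connectivity: ${added_low}-${added_high}")
print(f"Annual, {SITES} sites: ${annual_low:,}-${annual_high:,}")
```

Under these assumptions the full stack comes to $95 to $245 per site per month, of which $45 to $95 is layered on top of connectivity. Across the hypothetical 1,000 sites, that works out to roughly $1.14 million to $2.94 million a year.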

The service offerings have to be multi-dimensional. It starts with network services and expands into business services – employee services, virtual desktop-style capabilities, and new offerings that emerge once network intelligence can slice and dice demand, dial up and dial down, and share the underlying infrastructure across use cases. The telcos are in a prime position to offer these services.

The importance of price-performance doesn’t disappear. The network will keep getting better and cheaper over time. That was the 5G lesson. The way to win is to bundle and package services so customers get a better product that replaces multiple point solutions, ideally at a lower total cost than the sum of the parts. Bundling works when the product does more and is simpler to consume.

In our view, adoption will occur where urgency is highest. Specifically, small and mid-sized enterprises are underserved. Cybersecurity is a key factor. Mission-critical environments are obvious early targets – public safety and first responders, hospitals, homeland security, critical infrastructure. Those deployments demand reliability and control, and they create the first wave of willingness to pay for managed services.

The payback for carriers is twofold: 1) The network becomes better because intelligence is applied to operations and configuration; and 2) The revenue model shifts toward recurring platform economics as carriers sell a growing stack of services per site, rather than trying to squeeze margin from access alone.

Competitive analysis: Who wins – and how telcos avoid losing

The edge opportunity is attracting competitors from every direction. Hyperscalers are pushing cloud outward. Networking vendors are embedding compute. Hardware vendors are pushing accelerators. Satellite services are coming. The market is converging because the TAM is large and the old boundaries are dissolving.

As described above, the winning architecture supports hardware heterogeneity. It has unified policy frameworks. It stays open but governed, which requires standards. It has consistent edge-to-cloud control models. That’s the standard table stakes checklist.

The more interesting way to look at it is the losing path – because that’s the approach most operators fall into by default. Below we lay out what that path looks like:

Stage one is margin erosion. CapEx rises, ARPU declines, margins get thin, and the numbers start to look unsustainable. That is the 5G monetization dynamic.

Stage two is commoditization. Hyperscalers lease “AI services” to telcos. The telco becomes the transport layer while the hyperscaler owns the value and the customer relationship. That’s the point where the fire alarm should be pulled.

Stage three is irrelevance. AI agents bypass telco services entirely for transactions. The reference point is what’s already happening in crypto rails and payment settlement – new rails emerge, the relationship shifts, and the telco gets pushed further into the background. Stage three ends with consolidation, acquisition, and bankruptcy, or a default back to selling “dumb pipes.”

Avoiding that path requires a deliberate strategy and better execution. In our view, telcos must increase services and fight the erosion with differentiated offerings built on network and data. They have an opportunity to refresh central offices and towers as platform assets, and to use the hyperconverged edge as the intelligent node that brings AI capability to physical assets. That’s why “physical AI” matters to telcos – because it directly ties intelligence to the footprint they already own.

Our advice to telcos is that once the edge is in place, fill it in across metro and regional. That’s where sovereignty becomes tangible. If a telco wants sovereign cloud, the hyperconverged edge roadmap is the best path to get there, and it’s the most direct way to stay out of the three-stage losing sequence that we laid out above.

Telcos’ last chance: A three- to five-year window for real momentum, five to seven years for full convergence

In our view, this shift is inevitable. The question is speed and sequence.

Our view is that five to seven years is the realistic timeline for full convergence across the edge, metro, and the broader carrier footprint. Too many things have to move at once – architecture, hardware form factors, radio virtualization, multi-access meshing, operational tooling, and commercial packaging. None of that flips overnight, and the industry doesn’t live in an ideal world.

At the same time, real-world events are compressing the conversation. When large cloud regions get disrupted, and there is no clean failover outside a provider’s own internal architecture, it reinforces why intelligent distributed infrastructure is so critical. If the system can roll workloads to another area, you reduce the blast radius. That kind of stress makes the “do nothing” path a losing strategy, because the expected loss will be higher than it is for competitors that lean into the hyperconverged edge.

The way this builds is uneven, in our view. Hyperconvergence wins first where the need is urgent, and the buyer is motivated.

- Small and mid-sized enterprises are underserved and have limited in-house IT capacity. They are largely reliant on the public cloud and are locked into that model. A turnkey security-led offering can deliver value quickly because it solves an immediate problem and doesn’t require a multi-year transformation plan. In addition, if telcos make it simple, businesses will adopt it.

- Sovereignty and regulated deployments are another early factor. They’re already forcing changes in architecture and procurement, and carriers can move here without betting the entire company in one shot.

- Mission-critical environments accelerate this trend, too – public safety, hospitals, critical infrastructure. They will pay up for reliability, control, and recovery, and they will tolerate less fragility.

The technical gates are already in play. Radios have to mesh. The reference architecture has to be agreed upon and implemented. That requires standards and a consortium of leaders who can put the initial architecture on paper and then run it in production.

Hardware is not the limiting factor, in our view. The supply chain is moving fast. Mini AI factories can be productized. A DGX box can fit into a cabinet. Towers and central offices can be retrofitted. The CapEx wave in the big AI factories sets a precedent for what’s coming next at the edge. The bigger challenge is execution – i.e., getting the architecture right, aligning incentives, packaging services, and operating at scale.

Our best estimate is a three- to five-year window to get meaningful traction if the industry gets aligned, with full convergence taking longer. The pressures that accelerate the timeline are economics and security. If those two forces collide, we think the adoption curve steepens, but this market is historically slow to move.

And that’s the “last chance” theme. Carriers have the assets – towers, central offices, spectrum, fiber, and access. The edge buildout is the opportunity to move from connectivity economics to platform economics. If they don’t take it, stage two is hyperscalers leasing them back their own relevance, and stage three is consolidation. The window is not infinite. The next move has to be decisive.

What do you think about the telcos’ last chance? Will they take back what’s rightfully theirs or cede the future to more nimble players?