Executive Summary

At Nutanix.NEXT 2026, several panel sessions collectively outlined a broader strategic shift underway in enterprise infrastructure and application platforms. Across discussions on AI infrastructure, Kubernetes strategy, service-provider enablement, and pragmatic modernization, Nutanix presented a consistent message: the market is moving away from single-purpose infrastructure decisions and toward governed, multi-environment platforms that can support virtual machines, containers, data services, and AI workloads together.

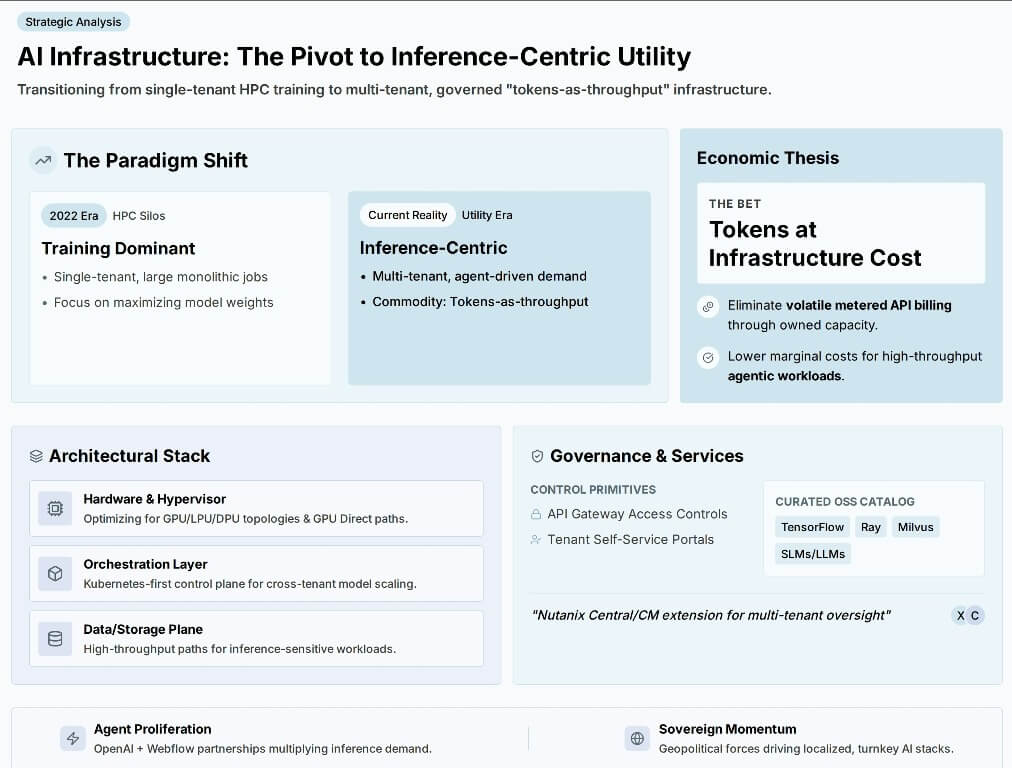

What stood out was not simply product messaging, but the framing of the market itself. Nutanix repeatedly positioned the industry as entering an inference-first phase of AI adoption, where token economics, governance, multi-tenancy, and operational consistency matter more than raw training scale. At the same time, the company made clear that Kubernetes is central to its long-term platform strategy, particularly as the control point for net-new application workloads that will increasingly define where platform share moves next.

The inclusion of Kelsey Hightower added an important counterweight to the vendor narrative. His emphasis on trust, fundamentals, and transparency reinforced a reality many enterprises are already confronting: modernization is not just a technology adoption problem. It is also an operational discipline problem, a cultural problem, and, increasingly, an economic one.

Market Context

The Nutanix panels landed in a market that is already under pressure to reconcile AI ambition with operational reality. According to theCUBE Research data, 74.3% of organizations identify AI/ML as a top spending priority over the next 12 months, 60.7% prioritize cloud infrastructure, and 43.6% prioritize DevOps automation. At the same time, 61.8% of organizations primarily operate in hybrid environments, and 46.5% report that required deployment speed has increased by 50% to 100% compared with three years ago.

Those numbers matter because they explain why vendors are increasingly converging around platform-level narratives. Enterprises are not just trying to deploy more software. They are trying to accelerate delivery, contain operational complexity, secure increasingly distributed environments, and now layer AI capabilities into the same systems without losing control of cost or governance.

This is especially relevant in observability and security. Research shows 60.5% of organizations prioritize real-time insights to meet SLAs and performance goals, while 51.3% prioritize tracing and root-cause analysis. On the security side, 41.3% say faster CI/CD cycles increase vulnerability exposure, and 47.2% report breaches tied to cloud-native applications. In that context, Nutanix’s message about consistent control planes, shared governance, and operational simplification should be viewed less as branding and more as a response to a real market need.

Key Takeaways from the Sessions

AI is shifting from model creation to infrastructure economics

One of the clearest themes from the event was Nutanix’s view that AI has entered a fundamentally different phase. Rather than focusing on training large frontier models, the market is moving toward inference-centric consumption, where many applications, agents, and enterprise services require access to models at predictable cost and under governed conditions.

The shift toward inference-centric AI is also reshaping how infrastructure is architected and consumed. Rather than optimizing for large, single-tenant training jobs, organizations are increasingly prioritizing multi-tenant environments that can support continuous, agent-driven inference demand. In this model, tokens effectively become the unit of throughput, introducing a more utility-like consumption pattern that aligns cost more closely with usage.

This shift has direct implications for platform design. Supporting high-throughput inference requires coordination across the full stack, from GPU-aware infrastructure and data pipelines to Kubernetes-based orchestration and API-level governance. As a result, AI is becoming less of a discrete workload category and more of an integrated platform capability, where access control, tenancy, and cost visibility are as critical as raw performance.

That is a notable shift because it reframes AI from a prestige infrastructure conversation into a platform efficiency conversation. Nutanix argued that enterprises will increasingly care about receiving “tokens at the right price point,” and that this requires coordinated optimization across hardware, hypervisors, Kubernetes, model governance, and self-service access. This is a more enterprise-grounded position than the earlier AI market emphasis on sheer model size and training scale.
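To make the "tokens at the right price point" idea concrete, the arithmetic can be sketched roughly as follows. This is an illustrative calculation only, not a Nutanix formula: the function name, the GPU hourly cost, the throughput figure, and the utilization values are all hypothetical assumptions chosen to show how utilization drives unit cost.

```python
def cost_per_million_tokens(
    gpu_hourly_cost: float,
    tokens_per_second: float,
    utilization: float,
) -> float:
    """Return infrastructure cost (in currency units) per one million tokens.

    All inputs are hypothetical planning figures:
      gpu_hourly_cost   - fully loaded hourly cost of the serving capacity
      tokens_per_second - peak sustained token throughput of that capacity
      utilization       - fraction of the hour spent serving requests (0 < u <= 1)
    """
    if gpu_hourly_cost < 0:
        raise ValueError("gpu_hourly_cost must be non-negative")
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in the range (0, 1]")

    # Tokens actually served in one hour at the given utilization.
    tokens_per_hour = tokens_per_second * 3600 * utilization
    return gpu_hourly_cost / tokens_per_hour * 1_000_000


if __name__ == "__main__":
    # Same hypothetical hardware, two utilization levels.
    for u in (0.5, 1.0):
        cost = cost_per_million_tokens(4.0, 2000.0, u)
        print(f"utilization={u:.0%}: {cost:.2f} per million tokens")
```

Under these assumed numbers, doubling utilization from 50% to 100% halves the cost per million tokens, which is one way to read the argument for shared, multi-tenant inference capacity over dedicated single-tenant deployments.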

From an analyst standpoint, this matters because it aligns with how application teams are likely to consume AI. Developers are far less likely to be building frontier models than they are to be integrating model-backed services into internal applications, workflows, copilots, and emerging agent-driven systems. The platform that wins in that environment may not be the one with the most raw compute, but the one that can expose AI capabilities with the right balance of governance, cost predictability, and deployment flexibility.

Kubernetes is now central to the platform capture strategy

The Kubernetes discussions were equally revealing. Nutanix’s NKP positioning suggests the company sees Kubernetes not merely as a product adjacency, but as the mechanism through which future platform share will be won. In one panel, the argument was made explicitly: the “platform battle” begins with containers, because net-new workloads will increasingly be built there rather than on traditional virtualization stacks.

This is an important distinction. Much of the Kubernetes market has historically focused on developer enablement, cloud-native purity, or ecosystem breadth. Nutanix’s differentiation appears more grounded in enterprise operational fit. The company emphasized bringing IT administrators and platform teams together, supporting both containers and VMs, and preserving consistent security, data services, and infrastructure management across deployment environments.

That approach may resonate in a market where mainstream Kubernetes adoption is still shaped by operational readiness rather than enthusiasm alone. Data from theCUBE Research shows that 76.8% of organizations have adopted GitOps, 76.8% integrate IaC into pipelines, and 92.3% provide cloud-native training, which suggests the issue is no longer basic awareness. The bigger barriers remain skills, cost, and complexity, which favors vendors able to simplify production adoption rather than merely expand ecosystem reach.

The enterprise opportunity is in governed coexistence, not forced replacement

Another important message across the sessions was that most enterprises are not looking for a clean break from existing environments. They are looking for a manageable coexistence model. Nutanix repeatedly stressed optionality: VMs where appropriate, containers where appropriate, bare metal where needed, and consistent management across them all.

That is a more pragmatic position than some of the market’s all-in cloud-native narratives. It also reflects enterprise reality. Large organizations rarely move through clean architectural transitions. They add new platforms beside old ones, migrate selectively, and prioritize operational continuity over ideological purity. Nutanix appears to be designing for that reality, particularly in the way it talks about supporting both traditional workloads and next-generation AI or containerized applications within one broader control model.

For developers, this matters because the surrounding platform environment often determines how quickly modern application patterns can move into production. Where modernization requires a full organizational or operational reset, adoption typically slows. Where teams can incrementally adopt new runtimes and governance models without abandoning existing infrastructure, the path tends to be more realistic.

Strategic Implications

For application developers

These sessions reinforce that the platform decisions made around developers are becoming more consequential than the tools directly in front of them. AI, observability, Kubernetes, and data services are increasingly intersecting in the runtime environment, which means developer productivity will be shaped as much by platform access, operational consistency, and governance as by IDEs or frameworks alone.

That shift could be especially important as AI-backed applications move from pilot to production. If enterprises accept the premise that future apps increasingly embed agentic or model-driven behavior, then developers will need internal platforms that expose those capabilities in a reusable, policy-aware way rather than through isolated experiments. Nutanix’s emphasis on open-source AI tooling catalogs, self-service access, and governed endpoint control is one attempt to address that shift.

For infrastructure and platform teams

For platform and infrastructure leaders, the deeper implication is that the control plane is expanding. It is no longer enough to manage VMs, containers, or storage separately. The emerging requirement is to manage AI access, tenancy, security, model consumption, and runtime portability through a common operational framework.
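As a rough illustration of what managing AI access, tenancy, and model consumption through one framework could look like, the sketch below models a per-tenant policy check in front of model endpoints. It is a minimal, hypothetical example and does not represent any Nutanix API: the class names `TenantPolicy` and `InferenceGateway`, the tenant and model names, and the budget figures are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class TenantPolicy:
    """Hypothetical per-tenant governance policy."""
    allowed_models: set[str]       # models this tenant is sanctioned to call
    monthly_token_budget: int      # token ceiling for the billing period
    tokens_used: int = 0           # running consumption counter


class InferenceGateway:
    """Hypothetical policy check placed in front of model endpoints."""

    def __init__(self) -> None:
        self._policies: dict[str, TenantPolicy] = {}

    def register(self, tenant: str, policy: TenantPolicy) -> None:
        self._policies[tenant] = policy

    def authorize(self, tenant: str, model: str, requested_tokens: int) -> tuple[bool, str]:
        """Approve or deny a request; approved requests count against the budget."""
        policy = self._policies.get(tenant)
        if policy is None:
            return False, "unknown tenant"
        if model not in policy.allowed_models:
            return False, "model not sanctioned for tenant"
        if policy.tokens_used + requested_tokens > policy.monthly_token_budget:
            return False, "token budget exceeded"
        policy.tokens_used += requested_tokens
        return True, "ok"


if __name__ == "__main__":
    gateway = InferenceGateway()
    gateway.register("team-a", TenantPolicy({"small-model"}, monthly_token_budget=1000))
    print(gateway.authorize("team-a", "small-model", 600))
    print(gateway.authorize("team-a", "small-model", 600))
    print(gateway.authorize("team-a", "large-model", 10))
```

The point of the sketch is that access control, tenancy, and cost visibility sit in the same decision path. A production system would also need authentication, persistence, and concurrency handling, none of which are shown here.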

This is where Nutanix is trying to reposition itself. The company is effectively arguing that its long-standing strengths in virtualization, hybrid operations, and centralized management can be extended into Kubernetes and AI service delivery. Whether that becomes a durable competitive advantage remains to be seen, but the logic is understandable. Enterprises do not want an AI strategy that creates a second, disconnected infrastructure universe.

For the broader market

The broader market implication is that platform competition is becoming less about isolated feature leadership and more about reducing the friction between old and new operating models. Hyperscalers still have enormous strength, and Red Hat remains a significant Kubernetes force. But Nutanix is clearly trying to occupy a middle ground: one that offers more operational continuity than cloud-native greenfield models and more future-facing application relevance than legacy virtualization-centric approaches.

That middle ground may become increasingly important as enterprises sort through where AI should actually live, how sovereignty and governance concerns affect architecture, and how much complexity their teams can absorb before delivery slows.

Trust, Transparency, and the Reality Check on AI Narratives

The session featuring Kelsey Hightower stood out as a grounding moment within a broader set of forward-looking discussions, offering a more pragmatic lens on the current state of AI and infrastructure adoption. Rather than reinforcing prevailing narratives, the conversation challenged them by emphasizing the importance of trust, transparency, and a return to foundational principles in evaluating emerging technologies.

One of the more notable insights centered on “shadow AI.” Instead of positioning it as a novel risk category, Hightower framed it as a continuation of a long-standing enterprise pattern: when centralized systems fail to meet demand with sufficient speed or flexibility, teams will find alternative paths to execution. This framing shifts the conversation from containment to enablement. It suggests that governance strategies rooted purely in restriction may inadvertently accelerate fragmentation, while those designed to provide accessible, sanctioned pathways are more likely to be adopted and sustained.

More broadly, the discussion highlighted a recurring dynamic in the technology industry: many so-called “new” paradigms are, in practice, reinterpretations of established concepts under different abstractions. This is particularly evident in the current AI cycle, where enthusiasm is often outpacing operational maturity. For developers and platform teams, this perspective is instructive. It reframes decision-making away from chasing emerging trends and toward evaluating whether a given approach meaningfully improves outcomes in production environments.

This perspective also aligns closely with John Furrier’s broader framing of Nutanix.NEXT 2026 as a “control plane moment” for the industry, where the real competition is shifting away from infrastructure features and toward operational ownership and platform outcomes. Hightower’s emphasis on fundamentals reinforces that this shift is not just architectural; it is operational and cultural, requiring discipline in how platforms are adopted and governed.

Bottom Line

Nutanix.NEXT 2026 presented a company trying to evolve from infrastructure supplier into a broader enterprise platform provider for hybrid operations, Kubernetes production, and governed AI services. The panels did not just push product news. They outlined a theory of the market: AI is becoming inference-centric, Kubernetes is becoming central to future platform control, and enterprises need operational coexistence more than architectural absolutism.

That theory is credible because it maps to what enterprises are already experiencing. AI adoption is rising, but so are pressures around governance, cost, and operational discipline. Kubernetes continues to expand, but the real challenge remains production simplicity, not developer enthusiasm. And hybrid environments remain dominant, which means the next generation of platforms must bridge rather than erase the past.

For Nutanix, the opportunity is real. The challenge now is execution. If the company can translate this strategic framing into a genuinely simpler and more governable operating model for AI and modern applications, it could strengthen its relevance well beyond HCI. If it cannot, the market will continue to favor vendors that better align platform ambition with operational reality.