Premise. The challenge of optimally running applications and infrastructure has grown geometrically as each new application generation adds more moving parts. Managing applications that span generations and platforms requires a broad approach to application performance and operations intelligence (AP&OI). “Breadth-first” management applications foster collaboration among administrators of specialized technology domains. That collaboration process can make broad management applications gradually smarter about the topology and operation of customers’ applications and infrastructure. And it’s that intelligence that ultimately helps find and resolve the root cause of problems.

This report is the second in a series of four reports on applying machine learning to IT Operations Management. As mainstream enterprises put in place the foundations for digital business, it’s becoming clear that the demands of managing the new infrastructure are growing exponentially. The first report summarizes the approaches. The following three reports go into greater depth:

Applying Machine Learning to IT Operations Management – Part 1

Applying Machine Learning to IT Operations Management for High-Fidelity Root Cause Analysis – Part 3

Information technology is being applied to an increasingly complex array of digital business problems. The systems that address these problems are more distributed, less deterministic, and more central to brand experience. Demands on application performance and operations are growing dramatically, but new management platforms that include big data and machine learning capabilities are arriving to help reduce operational noise, surface issues earlier, and better automate responses.

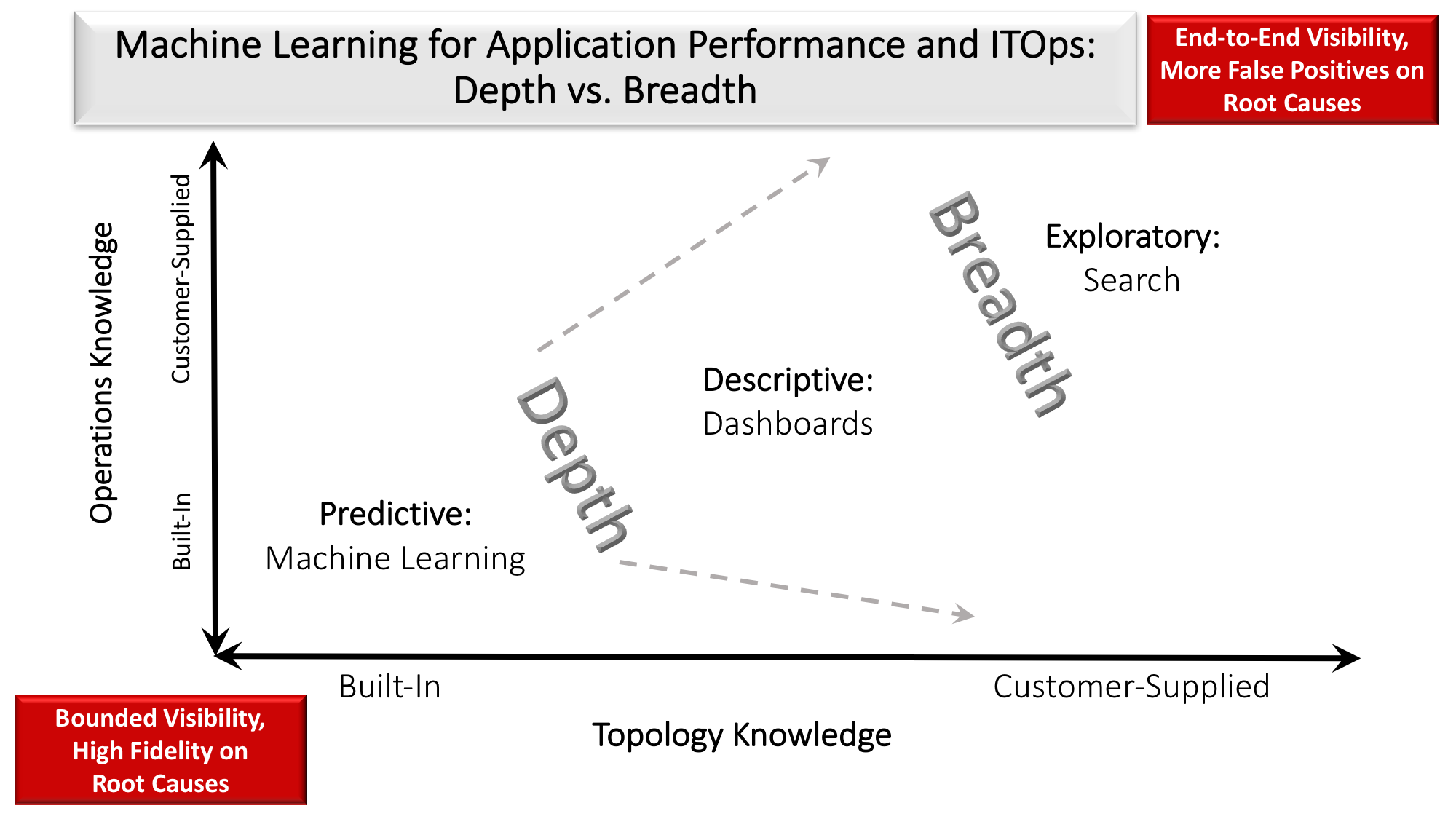

The challenge facing IT professionals is that these new tools typically fall into one of two camps: they are good at handling either system breadth or system depth, but not both. “Breadth-first” tools facilitate cross-role collaboration, enabling management system intelligence to emerge out of human collaboration in the highly bespoke operational domains that typify any larger enterprise. “Depth-first” tools ship with digital twin-class intelligence included, but the scope of capability is limited to a given class of resources or applications, such as intrusion detection or big data applications. As larger portions of a firm’s application portfolio are directly connected, enhanced with big data, or integrated with SaaS and other third-party applications (e.g., customer or partner systems), breadth-first tools are becoming essential, whether or not they feature the type of depth typically desired in IT organizations (see Table 1). The guiding principles for successfully adopting these complex AP&OI tools are:

- Breadth-first applications deliver end-to-end visibility at the expense of built-in high fidelity root cause diagnostics. Breadth-first tools and services are designed to support end-to-end IT Operations Management (ITOM), not deliver resource-specific optimization (see Figure 1). When looking at all these systems, AP&OI can treat business transactions, customer interactions, and operational data about infrastructure as one integrated dataset, making it possible to manage this amalgamation of applications and infrastructure as one end-to-end system.

- Breadth-first applications can foster collaboration to break silos, gradually add intelligence. The IT applications and infrastructure portfolio of every enterprise is complex and unique. No AP&OI vendor can deliver a comprehensively correct and unified set of digital twins out of the box. Instead, users should evaluate AP&OI tools on the basis of (1) fitness of what is available to their specific portfolio; and (2) capabilities for accelerating training of the platform in order to rapidly increase the breadth-first tool’s fitness.

- Breadth-first products designed as platforms can host specialized depth-first AP&OI apps. Breadth-first products have the repository that collects the telemetry needed for end-to-end visibility. That repository can be the foundation for depth-first products, but the combination does not provide both depth and breadth. Without digital twin-class intelligence that extends to the relationships between depth-first products, each product still has deep analytics only within its own resource pool or domain.
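
As a rough illustration of how a repository might hold business transactions, customer interactions, and infrastructure telemetry as one dataset, the sketch below normalizes records from two sources into a common shape and merges them into a single timeline. The `Event` fields, the two adapters, and the sample records are all hypothetical; they are not taken from any product named in this report.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class Event:
    """Common record shape; every field name here is illustrative."""
    ts: float      # seconds since epoch
    source: str    # which system emitted the record
    entity: str    # host, service, or customer the record is about
    kind: str      # e.g. "transaction", "interaction", "infra"
    payload: dict  # original fields, kept for drill-down


# Hypothetical adapters: each maps one source's native format to Event.
def from_web_log(raw: dict) -> Event:
    return Event(raw["time"], "web", raw["host"], "interaction", raw)


def from_db_metric(raw: dict) -> Event:
    # This source reports milliseconds, so convert to seconds.
    return Event(raw["timestamp_ms"] / 1000.0, "db", raw["instance"], "infra", raw)


ADAPTERS: Dict[str, Callable[[dict], Event]] = {
    "web": from_web_log,
    "db": from_db_metric,
}


def ingest(records: List[Tuple[str, dict]]) -> List[Event]:
    """Normalize (source, raw) pairs and return one time-ordered dataset."""
    events = [ADAPTERS[source](raw) for source, raw in records]
    return sorted(events, key=lambda e: e.ts)


timeline = ingest([
    ("db", {"timestamp_ms": 2500, "instance": "orders-db", "cpu": 0.97}),
    ("web", {"time": 1.0, "host": "web-01", "status": 200}),
])
```

Once every source lands in the same shape, administrators from different specialties can query one timeline rather than several tool-specific ones. The sketch says nothing about how the entities relate to each other, which is the depth that the principle above notes is still missing.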

| Scope of coverage | Breadth-first | Depth-first |
| --- | --- | --- |
| Built-in knowledge of topology and operation of apps and ITOps | | |
| Examples built on machine learning | Splunk Enterprise, Rocana, BMC TrueSight | Unravel Data, Extrahop, Pepper Data, AWS Macie, Splunk UBA |
| Examples built without machine learning | VMware vCloud Suite, New Relic, AppDynamics, Datadog, SignalFX | CA Network Operations & Analytics |
| Searchable | Yes | No |
| BI-style exploration | Yes | Limited |
| Pre-trained machine learning models | No. Train on customer events, metrics | Yes |
| Customer extensibility | | |
| Infinite hybrid cloud platform configurations | Yes | No |
| New apps, services, infrastructure: systems of record, Web/mobile | Yes | No |
| New events and metrics | Anomaly detection for new time series of events, metrics | No |
| New visualizations, dashboards | Yes | No |
| New complex event processing rules | Yes. Based on customer’s domain expertise | Limited |
| New machine learning models | Some vendors (e.g., Splunk) | No |
| Root cause analysis | | |
| Source of domain knowledge | Customer | Vendor |
| Alert quantity vs. quality | Quantity: What happened. | Quality: Why things happened. |
| Scope of contextual knowledge | Identifies failed entities | Identifies failures within and between entities |
| Likelihood of false positives | High | Low |
| Machine learning maturity | | |
| Already trained | No | Yes. Using data from design partners |
| Data science knowledge required | Yes | Minimal |

Table 1: Breadth-first and depth-first tools serve distinct, and sometimes tangential, application performance and IT operations management needs.

Breadth-First Delivers End-to-End Visibility at Expense of Built-in High Fidelity Root Cause Diagnostics

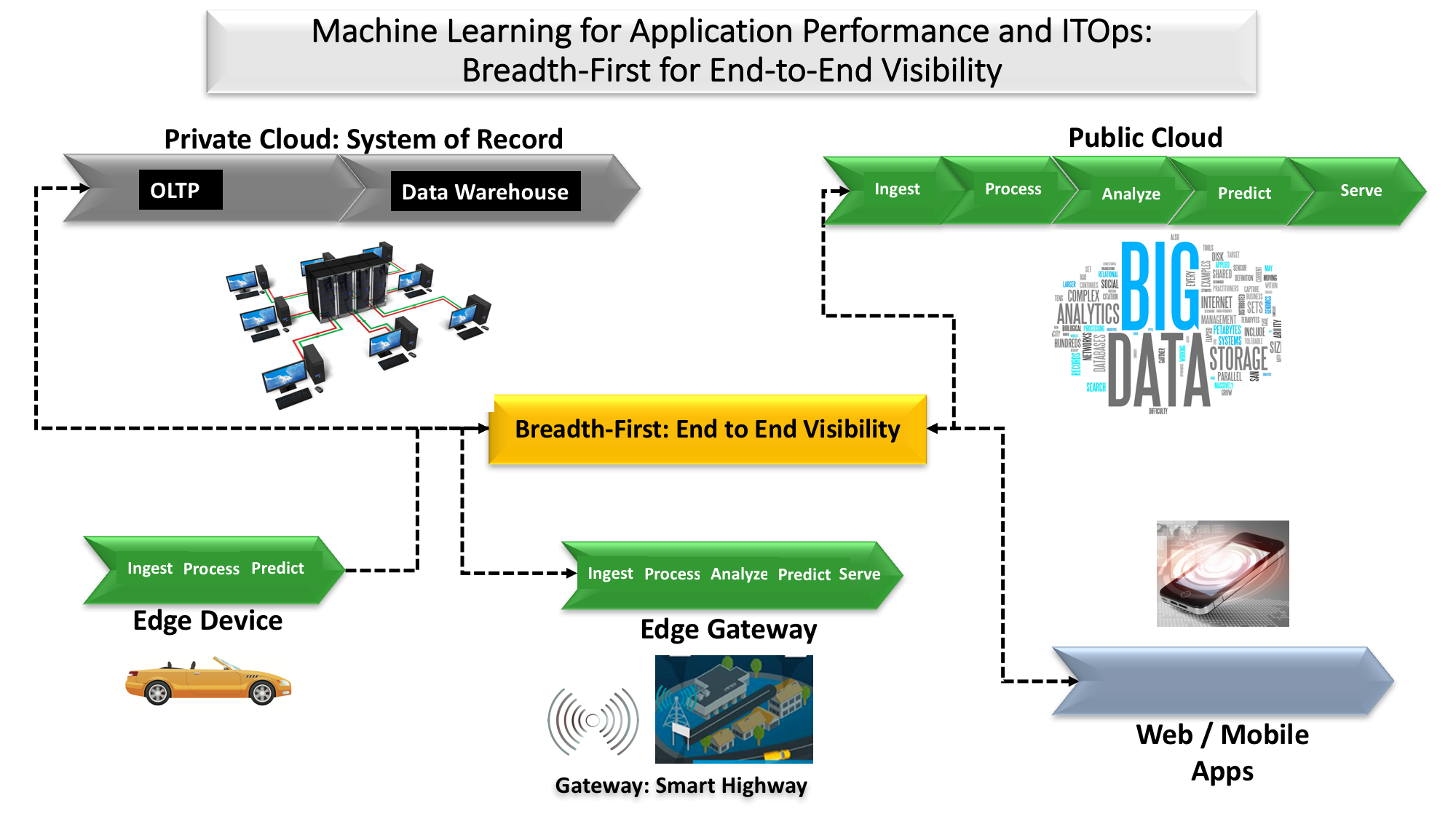

As applications increasingly span geographies and cloud platforms from different vendors, the ability to manage system heterogeneity will become more critical. That’s what “breadth-first” AP&OI tools are designed to provide. The ability to point the firehose of distributed events at different locations enables easier distributed operation (see Figure 2) and, when logically centralized, can feed additional, specialized ITOM services. Breadth-first products take a number of shapes, but all share two key features:

- Breadth-first products emphasize 360-degree vision. The sheer scope of end-to-end topologies and operational theatres makes it too difficult for AP&OI apps to manage infrastructure landscapes autonomously with only out-of-the-box function. Because breadth-first products lack built-in knowledge of the topology and operation of the end-to-end landscape, they cannot get at the root cause of problems automatically; deep root cause analysis depends on administrators’ knowledge to put the right remediation together manually. Hybrid cloud deployments are especially challenging. Each platform is defined by its own data gravity, API ecosystem, and application design patterns. Within each platform, the entities may emit telemetry in completely different ways from those on other clouds or on-prem platforms.

- Breadth-first products require big data and big planning. The first challenge of putting together a breadth-first AP&OI solution is similar to the challenges facing other big data workloads: breadth-first products must gather data from a potentially unlimited set of disparate entities. Collecting such heterogeneous data requires something equivalent to an adapter for every entity. And it takes big data to manage big data, potentially hundreds of TB per day. Moreover, administrators may need access to several years of data so they can compare how things worked over time. But storing big data can be very costly, despite the use of commodity storage. Data collection must be well designed and meticulously planned, or shops can anticipate data storage service bills in the millions of dollars per year just for storing end-to-end ITOM data.
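
To make the storage arithmetic concrete, here is a back-of-the-envelope sketch. The ingest rate echoes the "hundreds of TB per day" scale mentioned above; the retention period, the price per terabyte-month, and the compression ratio are illustrative assumptions, not quoted vendor figures.

```python
def annual_storage_cost(tb_per_day: float,
                        retention_days: int,
                        usd_per_tb_month: float,
                        compression_ratio: float = 1.0) -> float:
    """Estimate the yearly cost of holding a rolling retention window.

    Assumes constant ingest and a retention window that is already full,
    so the stored volume is flat across the year.
    """
    stored_tb = tb_per_day * retention_days / compression_ratio
    return stored_tb * usd_per_tb_month * 12


# Assumed inputs: 100 TB/day, one year retained, $20 per TB-month, 5:1 compression.
cost = annual_storage_cost(100, 365, 20.0, 5.0)
print(f"${cost:,.0f} per year")  # $1,752,000 per year
```

Even with generous compression and a single year of retention, the assumed inputs land in seven figures. Keeping several years of history, as the text suggests administrators may want, scales the figure roughly linearly, which is why collection scope and retention tiers deserve planning up front.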

Breadth-First Can Foster Collaboration to Break Silos, Gradually Add Intelligence

IT operations management (ITOM) has evolved in response to advances in products and product-specific automation tools. An “iron triangle” has emerged in ITOM, typified by balkanized product-defined roles, product-based automation, and product-specific vendor support. As a result, IT organizations often deliver excellent resource uptime, but mediocre end-to-end service. As application and infrastructure portfolios become more dependent on third-party services, incorporate resources that are more reactive than programmatic, and are more deeply embedded within brand-defining operations, mediocre end-to-end ITOM service becomes a prescription for business failure. Breadth-first tooling is improving end-to-end service in leading organizations, but these platforms and services require significant care and feeding; bespoke application and infrastructure portfolio complexity makes delivering out-of-the-box solutions impossible. Instead, plan for the following:

- Tools should be open enough to serve as a single source of truth. Not all administrative domains or specializations have depth-first products. And even if all areas were covered by depth-first products, there would still be a need for a repository that can serve as a clearinghouse of crucial ITOM data. Having a single repository for data about all business transactions, customer interactions, and operational events enables different roles, such as sysadmins, DBAs, SREs, devops, netops, secops, and others, to collaborate and look at contextually relevant data across specializations and time periods. The collaboration process yields customer-added domain expertise that starts to make the application smarter over time in finding the root cause of problems and suggesting remediation.

- Expect lots of “false-positive” alerts initially. Breadth-first products will always issue a lot more false positives about root causes than depth-first products. A key challenge of a breadth-first product is that it will be more demanding on the expertise and collaboration of administrators to find root causes via triaging through false positives. Reducing false positives over time requires putting the right information together from several perspectives, which administrators with different specialties can use collaboratively. The process of collaborating across roles allows admins to imbue the AP&OI application with more and more domain expertise over time. Superior breadth-first tools provide capabilities to translate manually-created, side-by-side comparisons and correlations across time into complex event-processing logic to create custom rules that get at root causes of problems. To be fair, however, that collaboratively-added expertise will likely never match the built-in knowledge that comes with specialized, depth-first management apps.

- Evaluate breadth-first tools on speed-to-train. Can’t figure out what to measure in order to anticipate problems? Measure everything and the AP&OI app will gradually get smarter. The best breadth-first products and services can “observe” the application and infrastructure landscape and build baseline models of how the different elements perform. Even though these models don’t show how all the elements should work together prescriptively, they can reduce the need for the customer to have data science expertise. In addition, rather than just using a static baseline for finding anomalies, models can continually learn as entities’ behaviors evolve over time.
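
The difference between a static baseline and one that keeps learning can be sketched in a few lines. The example below uses an exponentially weighted running mean and variance, chosen here only for brevity; it is an assumption for illustration, not the method of any product discussed in this report, and the class name, parameters, and sample series are hypothetical.

```python
import math


class AdaptiveBaseline:
    """Exponentially weighted running mean and variance for one metric."""

    def __init__(self, alpha: float = 0.1, threshold: float = 3.0, warmup: int = 10):
        self.alpha = alpha          # how quickly the baseline follows new data
        self.threshold = threshold  # deviation, in standard deviations, that counts as anomalous
        self.warmup = warmup        # observations to see before flagging anything
        self.mean = 0.0
        self.var = 0.0
        self.count = 0

    def observe(self, value: float) -> bool:
        """Score value against the current baseline, then fold it into the baseline."""
        self.count += 1
        if self.count == 1:
            self.mean = value
            return False
        deviation = value - self.mean
        std = math.sqrt(self.var)
        anomalous = (self.count > self.warmup and std > 0
                     and abs(deviation) > self.threshold * std)
        # Update after scoring so the baseline keeps tracking evolving behavior.
        self.mean += self.alpha * deviation
        self.var = (1 - self.alpha) * (self.var + self.alpha * deviation ** 2)
        return anomalous


baseline = AdaptiveBaseline()
series = [100.0, 102.0] * 10 + [500.0]  # steady metric, then a spike
flags = [baseline.observe(v) for v in series]
```

Because each observation also updates the model, a sustained shift in an entity's behavior gradually becomes the new normal instead of alerting forever. A per-metric model of this kind still knows nothing about how entities relate to one another, which is the limitation the bullet above describes.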

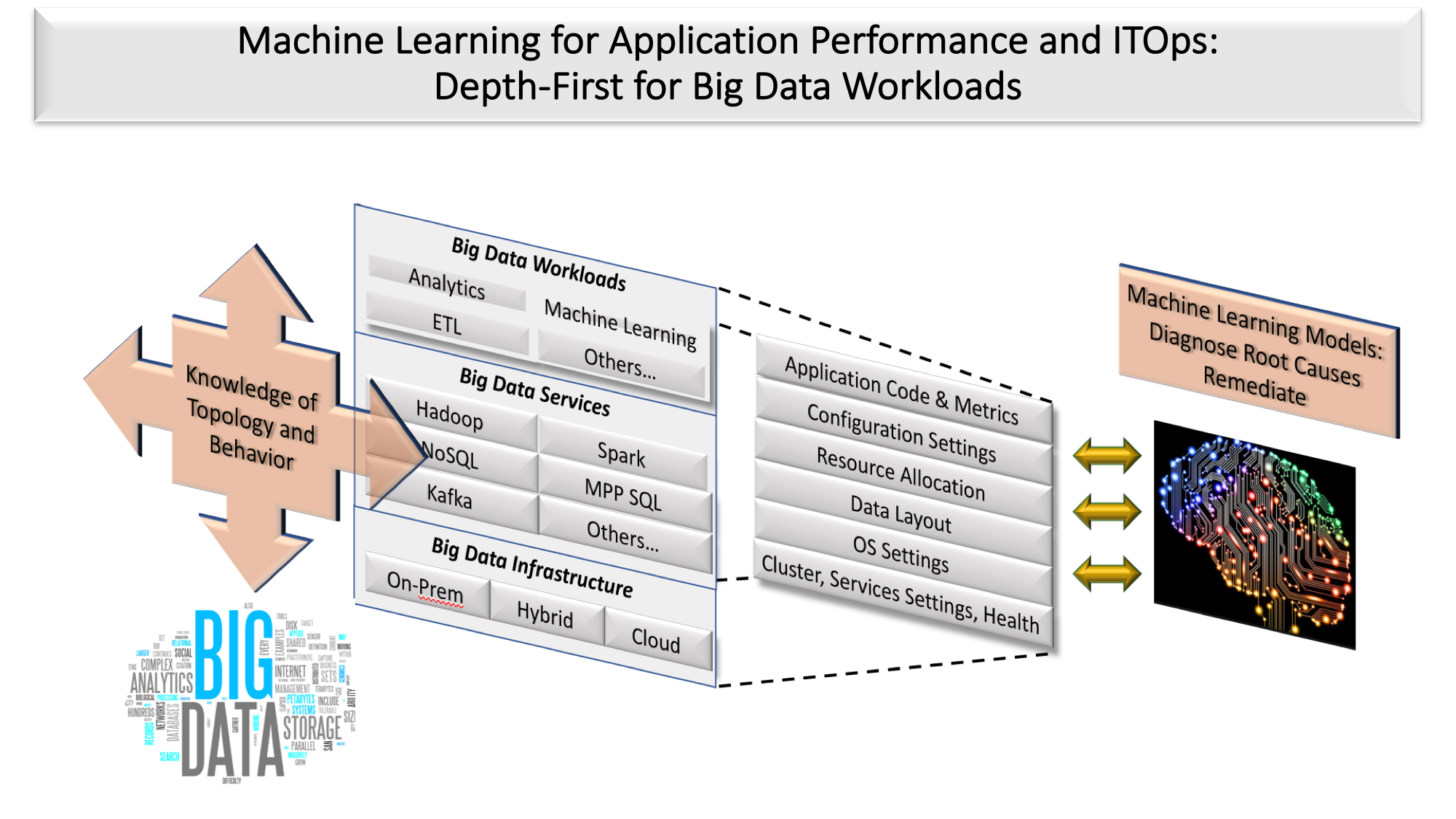

Breadth-First Products Designed as Platforms Can Host Specialized Depth-First AP&OI Applications

Time-to-value is crucial in any business investment. It’s especially crucial in ITOM, with enterprises struggling to keep up with the ever-growing sprawl in their application and infrastructure portfolios. Buying a breadth-first product that can learn over time and grow into a platform for depth-first products is one tactic for accelerating time-to-value. Critical issues that should form the basis of product evaluations are:

- Breadth-first products have to learn “on the job”. Machine learning models in breadth-first products can’t have built-in knowledge of the landscape. There are too many degrees of freedom relative to depth-first products. Depth-first products get their knowledge from pre-trained models and then augment that knowledge from ongoing operations.

- Breadth-first platforms can grow deeper over time. With this approach, a breadth-first product would serve as a unified repository that other vendors could leverage, using the broader data set to add more contextually relevant data to their specialized products. Vendors explicitly recognize the need to add more out-of-the-box expertise to their products.

- Even if multiple depth-first products build on a breadth-first product, the depth-first products are still somewhat siloed. While a breadth-first product could be an ideal platform to store data for specialized products, that configuration won’t get rid of silos. The entire design center of depth-first products is about limiting the scope of visibility and analysis. That limited scope enables depth-first products to have built-in knowledge of topology and operations for specific domains, such as security information and event management, user behavior analytics, network management, and others. This built-in knowledge is equivalent to “digital twins” in IoT applications (see Figure 3). Until there are higher-level digital twins that incorporate individual digital twins or domains, it won’t be possible to provide cross-domain knowledge out-of-the-box. In other words, a depth-first product that’s looking at suspicious behavior might not realize that the root cause is really related to a performance problem elsewhere.
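
The cross-domain gap described above is the kind of thing a customer-written correlation rule on a shared repository could address. The sketch below is a minimal, hypothetical example: given an alert from one domain, it looks for recent alerts from other domains on entities the affected entity depends on. The `Alert` shape, the `depends_on` map (domain knowledge the customer would have to supply), and the five-minute window are all assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass(frozen=True)
class Alert:
    ts: float     # seconds since epoch
    domain: str   # e.g. "security" or "performance"
    entity: str   # resource the alert is about
    message: str


def related_upstream_alerts(alert: Alert,
                            history: List[Alert],
                            depends_on: Dict[str, Set[str]],
                            window_s: float = 300.0) -> List[Alert]:
    """Return other-domain alerts on upstream entities shortly before `alert`."""
    upstream = depends_on.get(alert.entity, set())
    return [
        a for a in history
        if a.domain != alert.domain
        and a.entity in upstream
        and 0 <= alert.ts - a.ts <= window_s
    ]


depends_on = {"login-service": {"auth-db"}}
history = [
    Alert(100.0, "performance", "auth-db", "old latency blip"),
    Alert(1000.0, "performance", "auth-db", "query latency high"),
]
suspicious = Alert(1120.0, "security", "login-service", "burst of failed logins")
candidates = related_upstream_alerts(suspicious, history, depends_on)
```

Here the failed-login burst is paired with the database latency alert from two minutes earlier, while the older alert falls outside the window. The rule only works because someone supplied the dependency map, which reflects the report's point that in breadth-first tools the domain knowledge comes from the customer rather than the vendor.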

Action Item. Customers who need end-to-end visibility across generations of applications and infrastructure should evaluate the new generation of breadth-first AP&OI products and services. However, CIOs must be aware that these tools presume significant investments in training-by-collaboration. They will improve ITOM productivity, but they also will engender significant organizational and process changes. Finally, don’t let powerful groups of administrators balkanize domain control by procuring depth-first tools when breadth-first is required.