Premise. CIOs remain focused on costs, but digital business is shining a brighter light on the revenue contributions of technology. Elastic infrastructure provided the approach to optimize costs, but a revenue focus requires an evolved approach, which we call “plastic infrastructure.”

Elasticity solves the problem of known workload, any scale. It’s an important problem to solve, given the historic IT focus on cost control. Elastic infrastructure technologies and disciplines ensured that IT organizations generally could avoid having to invest in hardware, system software, and administrative personnel to handle workload peaks, leaving significant portions of resources idle – while still paying for them – during normal times. CIOs could employ elastic infrastructure technologies and concepts to take significant chunks of cost out of IT operations. One obvious option: Rent more cloud-based capacity for workloads that are fit for the cloud and pay for only what is used.

However, as customers increasingly demand digital engagement, the IT focus starts to shift from known workloads, any scale, to unknown workloads, any scale. Why? Because system development shifts focus from:

- known process, internal-facing, and employee-oriented applications, to

- data-driven, external-facing, and customer-oriented applications.

Many IT functions already are well down the path of supporting this change, like app dev’s adoption of agile development methods. But infrastructure and operations still lag; DevOps, for example, is not taking the world by storm. The technology foundation for digital business must be plastic: mutable and rapidly responsive, but able to sustain new steady-state, operationally sound configurations to ensure reliable digital business behaviors and performance. Plastic infrastructure requires CIOs to start thinking about:

- Prioritizing hardware that enhances local plasticity. Faster and cheaper are not the only infrastructure goals. Better still matters, and all-flash storage systems do a better job of providing local infrastructure plasticity.

- Moving data-at-a-distance to enhance global plasticity. Not all data is local, which creates a multitude of problems that many hope will magically disappear by moving all data to the cloud. But that’s just not viable.

- Bridging the last mile between DevOps and AppDev. Digital business must feature high-quality IT operations, even as more computing takes place in the cloud. The DevOps movement is grinding forward, but new concepts and tools are emerging that can accelerate DevOps adoption.

Prioritize Hardware That Enhances Local Plasticity

Elastic infrastructure ensured that infrastructure configurations and costs scaled to match process-oriented workloads. The customer-facing systems being conceived and built today are data-driven. The infrastructure required to support them must be architected and built with data scope in mind. What do we mean by data scope? It’s the use of consistent copies of the same data applied to any workload at any point in the lifecycle to achieve lower costs and higher-quality application production. Improving data scope is the key to increasing the value of an enterprise’s data capital: the data assets available to drive digital business.

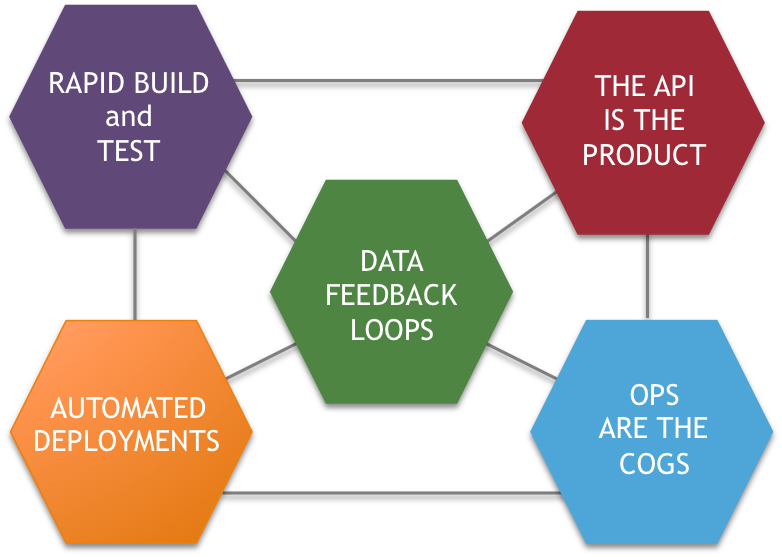

Software-defined infrastructure (SDI) has introduced a plethora of important advances across all hardware domains. Without SDI, elastic infrastructure would be very difficult to achieve. Moreover, SDI makes it much easier to configure or reconfigure hardware assets to add new applications to existing portfolios. However, SDI also makes it easier to spin up sub-optimal infrastructure. Hardware still matters to the challenge of increasing data scope, and no type of hardware is having a bigger impact on infrastructure plasticity than all-flash storage systems. Provided by newer firms like Pure Storage and Tegile Systems, as well as incumbents like Dell EMC, Oracle, and HPE, these systems can support unprecedented data sharing among workloads while improving IOPS and reducing data access latency. Our research shows conclusively that the benefits of all-flash storage systems are translating into explosive market growth (see Figure 1).

Source: © Wikibon 2016

Move Data-At-A-Distance To Enhance Global Plasticity

While all-flash storage systems are helping to solve the problems of local data scope, or “local plasticity,” dealing with data-at-a-distance is an increasing challenge. There are five reasons for this:

- More data is being generated by IoT&P (the internet of things and people) at the edge and on the move. Moreover, much of this data is the customer, service, operation, and maintenance data that has the broadest, natural scope: it is especially in demand across multiple functions and applications. IoT&P is a key catalyst for infrastructure plasticity.

- Moving data in bulk from private-to-public infrastructure is required to take full advantage of the cloud. However, while traditional batch and replication middleware have roles to play, they may introduce latency and integrity issues.

- Machine learning, AI, and other big data-driven applications are evolving into essential business system elements; these apps tend to pull huge amounts of data from a distance, whether or not it’s needed.

- As a business’s value proposition becomes more digital – based on data – perimeter-based security fails. Increasingly, security must move with the data, and security policies must evolve to accommodate that fact.

- Physics is the same always and everywhere in the universe. Not even the greatest marketing material in this world can abrogate basic physical laws: Nothing moves faster than the speed of light and entropy increases randomness – the two banes of computing and communications.
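The speed-of-light constraint in the last point can be made concrete with a little arithmetic. The sketch below is illustrative only: the function name, the example distances, and the assumption that signals in optical fiber travel at roughly two-thirds of the vacuum speed of light are ours, not figures from the research. It computes a floor on round-trip time that no networking product can beat; real networks add routing, queuing, and protocol overhead on top.

```python
# Lower bound on network round-trip time imposed by the speed of light.
# Illustrative sketch: names, distances, and the fiber factor are assumptions.

C_VACUUM_KM_PER_S = 299_792.458  # speed of light in vacuum (exact by SI definition)
FIBER_FACTOR = 2 / 3             # assumption: light in fiber moves at roughly 2/3 of c


def min_round_trip_ms(distance_km: float, medium_factor: float = FIBER_FACTOR) -> float:
    """Return the physical minimum round-trip time, in milliseconds."""
    if distance_km < 0:
        raise ValueError("distance_km must be non-negative")
    one_way_seconds = distance_km / (C_VACUUM_KM_PER_S * medium_factor)
    return 2 * one_way_seconds * 1000


if __name__ == "__main__":
    # Hypothetical distances chosen only to show how the floor grows.
    for label, km in [("same metro", 50), ("cross-continent", 4_000), ("intercontinental", 10_000)]:
        print(f"{label:>16}: {min_round_trip_ms(km):7.2f} ms minimum round trip")
```

Under these assumptions, a 4,000 km path carries a floor of about 40 ms per round trip, before any processing. An application that makes many sequential requests to distant data pays that floor each time, which is one reason to move processing toward the data rather than the reverse.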

Four technologies are emerging to improve infrastructure plasticity for data-at-a-distance. First is lower-cost, more flexible networking using architectures like leaf and spine. Companies like Mellanox are introducing networking equipment that provides fast and flexible ways to support high-speed networking among data center end points. Second, new data movement software from companies like WANdisco offers novel approaches to moving bulk data while minimizing latency and integrity costs. Third is blockchain, which promises an approach to move security with data. And fourth, new infrastructure design best practices (which we loosely call a technology) are slowly diffusing in the market as IT shops and others fully consider the impossibility of moving all data to a single, master location. Increasingly, the data-at-a-distance challenge will encourage less data movement from the edge to the center, and more extension of the cloud to the edge.

Bridge The Last Mile Between DevOps and AppDev

Container-oriented application development tools from sources like Docker, Kubernetes, Chef, and Puppet simplify the conversation between developer and operations personnel and help bridge the remaining technology gap between software-defined infrastructure and application development. While still unproven in many hardcore OLTP environments, these and related tools are more likely than previous technologies, like SOA, to result in development of highly shareable, repeatable, and infrastructure-agnostic application components.

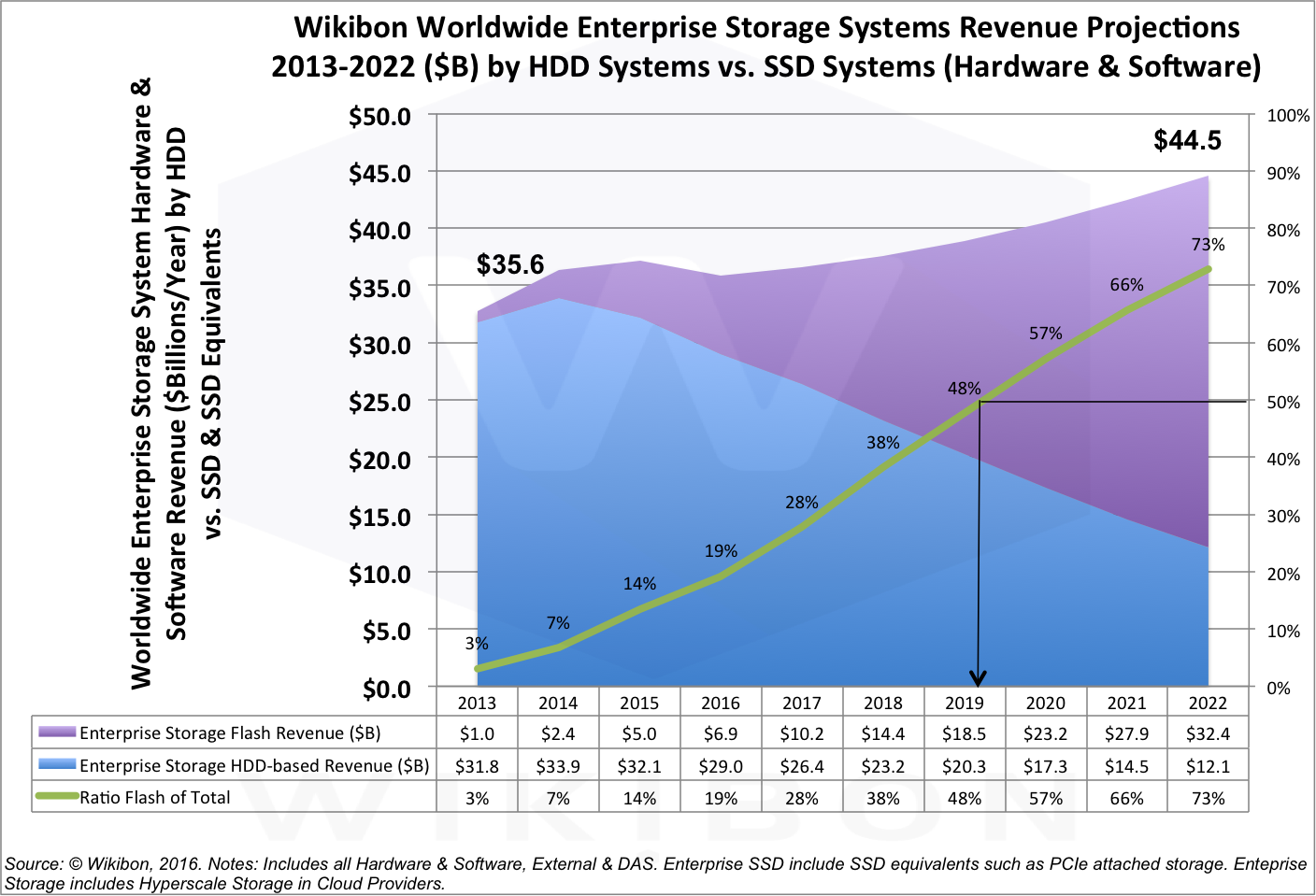

However, application development practices must evolve to ensure that mechanisms like microservices are not employed to, essentially, create monolithic applications. Wikibon’s research shows that a Digital Business Platform (DBP) should be conceived and deployed to enact modern plasticity practices, including DevOps (see Figure 2). This effort will be enhanced by the evolution of three technologies. The first is modeling tools for operations developers. Powerful modeling tools exist for app developers, but emerging tools like Canonical’s Juju can help operations developers model, manage, and deploy reusable templates comprising any class of application and infrastructure component. Second, as software “eats the world,” digital operations gain complexity. Big data analytics can be applied to IT problems, too. Splunk and Rocana, in particular, have established sound foundations for analyzing and acting on log file data; Rocana especially is pushing the state of the art in this space with Rocana 2.0. The third is data catalogs that can better record and track data assets independently of application silos. While data catalog tools are still relatively new, companies like Alation are putting significant engineering and go-to-market resources behind establishing the technology and social foundations required to deliver real solutions for a key data asset management problem.

Figure 2. The Five Pillars of a Modern Digital Business Platform