Contributing Author: David Floyer

The database is the heart of enterprise computing. The market is both growing rapidly and evolving. Major forces transforming the space include cloud and data, of course, but also new workloads, advanced memory and IO capabilities, new processor types, a massive push toward simplicity, new data sharing and governance models, and a spate of venture investment.

Snowflake stands out as the gold standard for operational excellence and go-to-market execution. The company has attracted the attention of customers, investors and competitors, and everyone from entrenched players to upstarts wants in on the act.

In this Breaking Analysis we’ll share our most current thinking on the database marketplace and dig into Snowflake’s execution and some of its challenges. We’ll also look at how others are making moves to solve customer problems and angling to get their piece of the growing database pie.

What’s Driving Database Market Momentum?

Customers want to lower license costs, avoid database sprawl, run anywhere and manage new data types. These needs are often divergent and challenging for any one platform to accommodate.

The market is large and growing. Gartner has it at around $65B with a solid CAGR.

But the market as we know it is being redefined. Traditionally, databases served two broad use cases: transactions and reporting (e.g. data warehouses). But a diversity of workloads, new architectures and innovation have given rise to many types of databases to accommodate these diverse customer needs.

Billions have been invested in this market over the last several years and venture money continues to pour in. Just to give you some examples: Snowflake raised around $1.4B before its IPO. Redis Labs has raised more than half a billion so far. Cockroach Labs has raised more than $350M, Couchbase $250M, SingleStore $238M and Yellowbrick Data $173M. If you stretch the definition to no-code/low-code, Airtable has raised more than $600M. And that’s by no means a complete list.

Why all this investment? In large part because the total available market (TAM) is large, growing and being re-defined.

Just How Big is This Market?

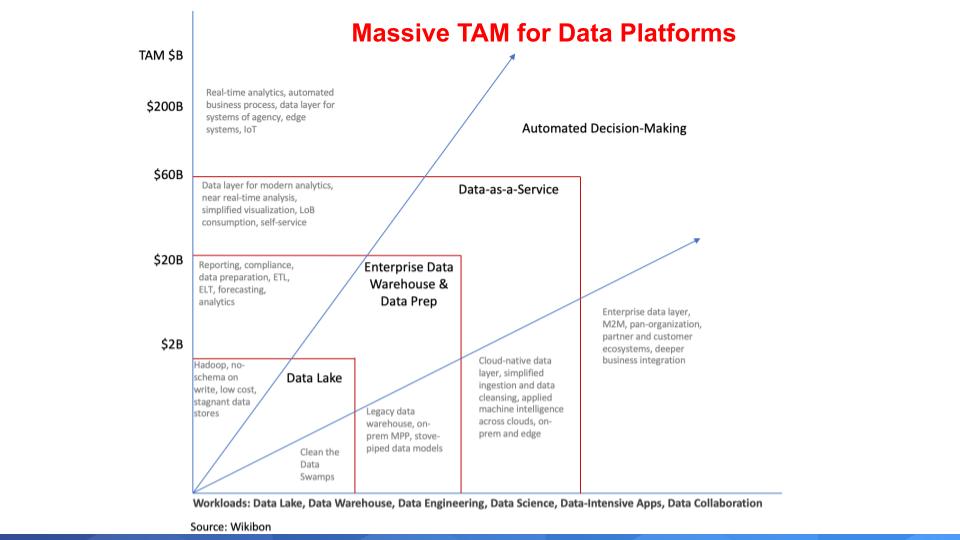

We no longer look at the TAM in terms of database products. Rather we think of the market in terms of the potential for data platforms. Platform is an overused term but in the context of digital, it allows organizations to build integrated solutions by combining data from ecosystems, or specialized and targeted offerings within industries. The accompanying chart, which we’ve shown previously, describes the potential market for data platforms.

Cloud and cloud native technologies have changed the way we think about databases. Virtually 100% of the database players have pivoted to a cloud-first strategy and many, like Snowflake, have a cloud-only strategy. Databases historically have been very difficult to manage and highly sensitive to latency, requiring lots of tuning. Cloud allows you to throw virtually unlimited resources, on demand, at performance problems and to scale very quickly.

Data-as-a-Service is becoming a staple of digital transformations and a layer is forming that supports data sharing across ecosystems. This is a fundamental value proposition of Snowflake and one of the most important aspects of its offering. Snowflake tracks a metric called edges which are external connections in its data cloud. It claims that 15% of its total shared connections are edges and edges are growing 33% quarter on quarter.

The notion of sharing is changing the way people think about data. We use terms like data as an asset. This is the language of the 2010s. We don’t share our assets with others, do we? No, we protect them, secure them, even hide them. But we absolutely want to share our data. In a recent conversation with Forrester analyst Michele Goetz, we agreed to scrub data as an asset from our phraseology. Increasingly people are looking at sharing as a way to create data products and data services which can be monetized. This is an underpinning of Zhamak Dehghani’s concept of a data mesh: make data discoverable, shareable and securely governed so that we can build data products and data services.

This is where the TAM just explodes and the database market is redefined. We believe the potential is in the hundreds of billions of dollars and transcends point database products.

Database Offerings are Diverse

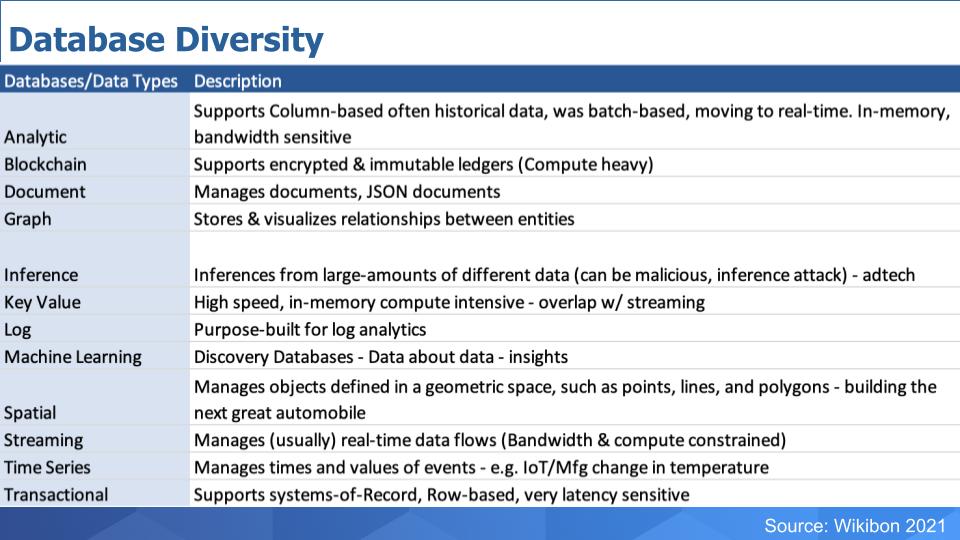

Historically, databases supported either transactional or analytic workloads, the bottom and top lines on this table.

But the types of databases have mushroomed. Just look at this list. Blockchain is a specialized type of database in and of itself and is now being incorporated into platforms; Oracle is notable for incorporating blockchain into its offering.

Document databases support JSON, and graph data stores assist in visualizing data. Inference from multiple different sources has been popular in adtech. Key value stores have emerged to support workloads such as recommendation engines. Log databases are purpose-built. Machine learning is finding its way into databases and data lakes to enhance insights (think BigQuery and Databricks). Spatial databases help build the next generation of products like advanced autos. Streaming databases manage real-time data flows, and there are time series databases as well.

Perhaps we’ve even missed a few, but you get the point.

Diversity of Workloads has Spawned Innovative Data Companies

The above logo slide is by no means exhaustive. Many companies want in on the action, including firms that have been around forever like Oracle, IBM, Teradata and Microsoft. These are the tier 1 relational databases that have matured over the years with properties like atomicity, consistency, isolation and durability, known as the ACID properties.

There are others that you may or may not be familiar with. Yellowbrick Data is going after the best price/performance in analytics and optimizing to take advantage of both hybrid installations and the latest hardware innovations.

SingleStore, formerly known as MemSQL, targets high-end analytics, transactions and mixed workloads at very high speed; we’re talking trillions of rows per second ingested and queried.

Couchbase offers hybrid transactions and analytics.

Redis Labs, with open source NoSQL, is doing very well, as is Cockroach with distributed SQL. There’s MariaDB with managed MySQL, Mongo in document databases and EDB supporting Postgres. And if you stretch the definition a bit, there’s Splunk for log databases and ChaosSearch, a really interesting startup that leaves data in S3 and is going after simplifying the ELK stack.

New Relic has a purpose-built database for application performance management. We could even put Workday in the mix as it developed a purpose-built database for its apps.

And we can’t forget SAP with HANA trying to pry customers off of Oracle and of course the big 3 cloud players, AWS, Microsoft and Google with extremely large portfolios of database offerings.

The spectrum of products is wide, ranging from AWS, which has somewhere around 16 database offerings, all the way to Oracle, which has one database to do everything, notwithstanding MySQL and its recent HeatWave announcement. But generally Oracle is investing to make its database run any workload while AWS has a right-tool-for-the-right-job approach.

There are lots of ways to skin a cat in this enormous and strategic enterprise market.

Which Companies are Leading the Data Pack?

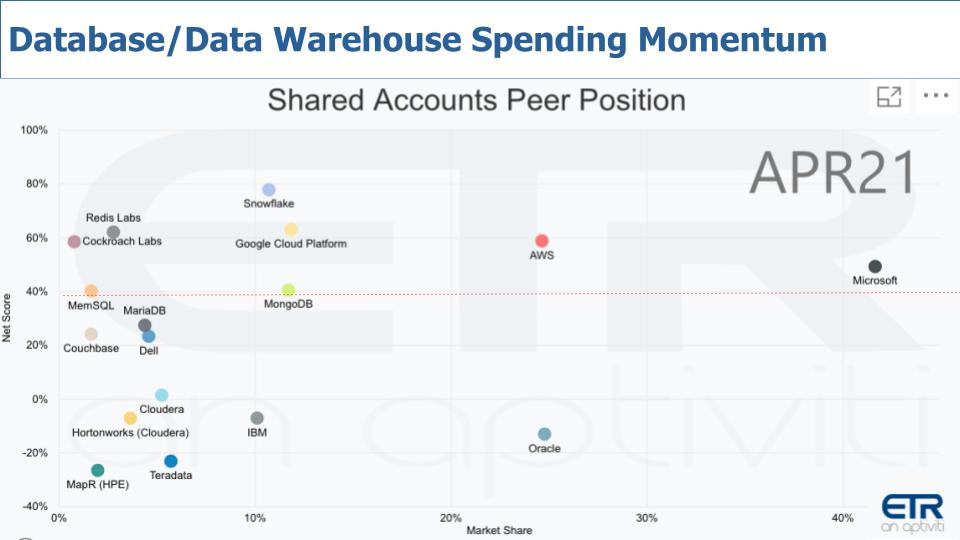

This chart is one of the views we like to share quite often from the ETR data set. It shows the database players across 1,500 respondents and plots Net Score, which measures spending momentum, on the Y axis and Market Share, which measures pervasiveness in the survey, on the X axis.

Snowflake is notable because it’s been hovering around 80% Net Score since the survey started tracking the company. We consider anything above 40% (the red line) to be elevated.

Microsoft and AWS are especially notable as well because they have both market presence and spending velocity with their platforms.

Oracle is prominent on the X axis but it doesn’t have the spending velocity in the survey because nearly 30% of Oracle installations are spending less whereas only 22% are spending more. Now as a caution, this survey doesn’t measure dollars spent and Oracle will be skewed toward large customers with big budgets – so consider that caveat when evaluating this data.

IBM is in a similar position to Oracle, although its Market Share is not keeping pace with the market leader.

Google has great tech, especially with BigQuery and it has elevated momentum – so not a bad spot although we suspect it would like to be closer to AWS and Microsoft on the horizontal axis.

And some of the others we mentioned earlier, like MemSQL (now SingleStore), Couchbase, Redis, Mongo and MariaDB, all have very solid scores on the vertical axis.

Cloudera just announced it was selling to private equity and that will hopefully give it time to invest in its platform and get off the quarterly shot clock. MapR was acquired by HPE and is part of HPE’s Ezmeral platform, which doesn’t yet have market presence in the survey.

Snowflake & AWS are Even Stronger in Larger Organizations

Now something that is interesting in looking at Snowflake’s earnings last quarter is its “laser focus” on large customers. This is a hallmark of Frank Slootman and Mike Scarpelli as they certainly know how to go whale hunting.

The chart above isolates the data to the Global 1000. Note that both AWS and Snowflake go up on the Y-Axis – meaning large customers are spending at a faster rate for these two companies.

The previous data view had an N of 161 for Snowflake and a 77% Net Score. This chart shows data from 48 G1000 accounts and the Net Score jumps to 85%. When you isolate on the 59 F1000 accounts in the ETR data set, Snowflake jumps another 100 basis points. When you cut the data by F500 there are 40 Snowflake accounts and their Net Score jumps another 200 basis points to 88%. Finally, when you isolate on the 18 F100 accounts Snowflake’s Net Score jumps to 89%. So it is strong confirmation in the ETR data that Snowflake’s large account strategy is working.

And because we think Snowflake is sticky, this is probably a good sign for the future.

Isolating on Snowflake’s Spending KPIs

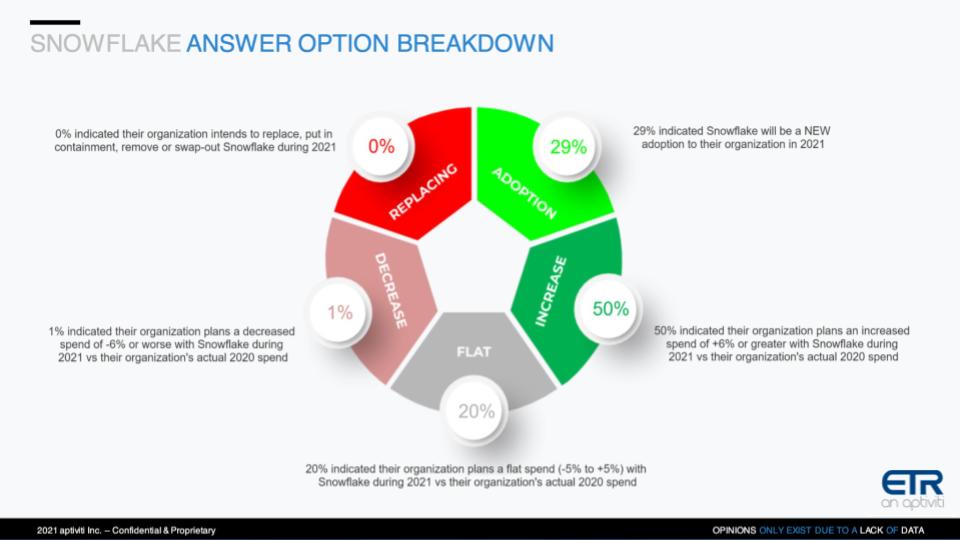

We talk about Net Score a lot as it’s a key measure in the ETR data, so we’d like to quickly remind you what it is, using Snowflake as the example.

This wheel chart above shows the components of Net Score. The lime green is new adoptions – 29% of the Snowflake customers in the ETR data set are new to Snowflake. That is pretty impressive. Fifty percent (50%) of the customers are spending more – that’s the forest green. Twenty percent (20%) are flat from a spending perspective – that’s the gray area. Only 1% – the pink – are spending less and 0% are replacing Snowflake. Subtract the red from the green and you get a Net Score of 78%…which is tremendous and is a figure that has been steady for many many quarters.
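The arithmetic behind that figure can be sketched in a few lines of Python. This is only an illustration of the subtraction described above, not ETR's actual tooling; the function name `net_score` and its parameter names are our own assumptions, and the inputs are the rounded percentages quoted for Snowflake.

```python
def net_score(new_adoption, spending_more, flat, spending_less, replacing):
    """Return Net Score in percentage points.

    Hypothetical helper (not an ETR API): adds the two "green" segments
    (new adoptions and spending more) and subtracts the two "red" segments
    (spending less and replacing). Flat spending does not affect the result.
    """
    total = new_adoption + spending_more + flat + spending_less + replacing
    if total != 100:
        # Guard against inputs that do not describe the full wheel chart.
        raise ValueError(f"segments should sum to 100, got {total}")
    return (new_adoption + spending_more) - (spending_less + replacing)


# Snowflake's segments as quoted in the text: 29% new, 50% more,
# 20% flat, 1% less, 0% replacing.
snowflake = net_score(29, 50, 20, 1, 0)
print(f"Snowflake Net Score: {snowflake}%")
```

Note that the flat segment drops out of the calculation entirely, which is why a platform can post a high Net Score even when a meaningful share of customers are holding spending steady.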

Remember as well, it typically takes Snowflake customers many months – like 6-9 months – to start consuming its services at the contracted rate. So those 29% new adoptions won’t kick into high gear until next year.

This bodes well for Snowflake’s future revenue.

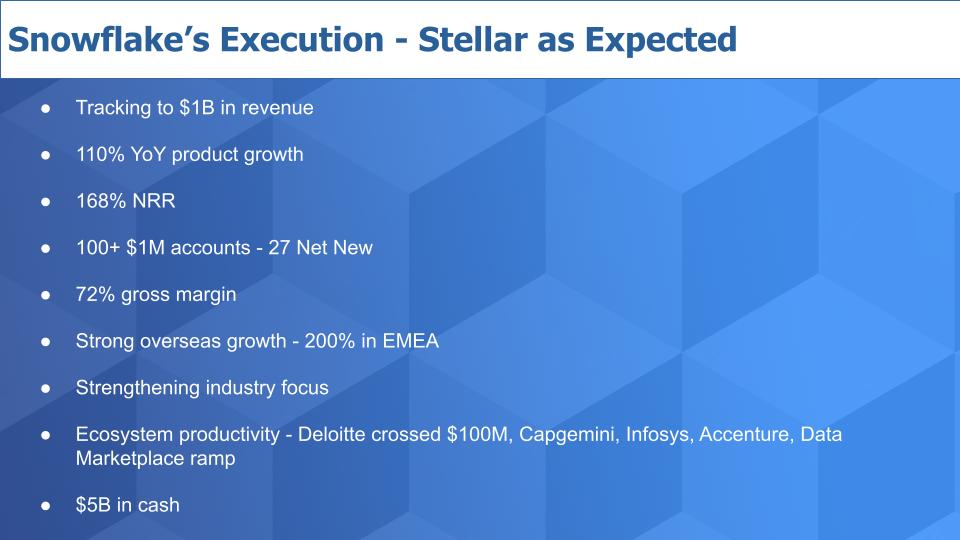

A Snapshot of Snowflake’s Most Recent Quarter

Let’s just do a quick rundown of Snowflake’s numbers. The company’s product revenue run rate is now at $856M and they’ll pass $1B on a run rate basis this year. The growth is off the charts with a very high Net Revenue Retention (NRR). We’ve explained previously that Snowflake’s consumption pricing model means they must account for retention differently than a SaaS company would.

Snowflake added 27 net new $1M accounts in the quarter and claims to have more than 100 now. It is also just getting its groove going overseas. Slootman says he’s personally going to spend more time in Europe given his belief that the market is large and, of course, he’s from the continent. And gross margins expanded due in large part to a renegotiation of its cloud costs. We’ll come back to that.

They’re also moving from a product-led growth company to one that’s focused on core industries – with media and entertainment being one of the largest along with financial services. To us, this is really interesting because Disney is an example Snowflake often puts forth as a reference and we believe it’s a good example of using data and analytics to both target customers and build so-called data products through data sharing.

Snowflake must grow its ecosystem to live up to its lofty expectations and indications are the large SIs are leaning in big time – with Deloitte crossing $100M in deal flow.

And the balance sheet is looking good. The snarks will focus on the losses but this story is all about growth, customer acquisition, adoption, loyalty and lifetime value.

Charting Snowflake’s Performance Since IPO

We said at IPO – and always say this – there almost always will be better buying opportunities ahead for stocks than on day 1.

Above is a chart of Snowflake’s performance since IPO. We have to admit, it’s held up pretty well and is trading above its first day close. As predicted, there were better opportunities than day one, but you have to make the call from here. Don’t take our stock picking advice; do your research. Snowflake is priced to perfection so any disappointment will be met with selling, but it seems investors have supported the stock pretty well when it dips.

You saw a pullback the day after Snowflake crushed its most recent earnings because their guidance for revenue growth for next quarter wasn’t in the triple digits, it moderated into the 80s.

And Snowflake pointed to a new storage compression feature that will lower customer costs and consequently revenue. While this makes sense in the near term, it may be another creative way for Snowflake CFO Scarpelli to dampen enthusiasm and keep expectations from getting out of control. Regardless, over the long term, we believe a drop in storage costs will catalyze more buying. And Snowflake alluded to that on its earnings call.

Is the “Cloud Tax” a Drag on SaaS Company Valuations?

There’s an interesting conversation going on in Silicon Valley right now around the cloud paradox. What is that?

Martin Casado of Andreessen Horowitz wrote an article with Sarah Wang calling into question the merits of SaaS companies sticking with the cloud at scale. The basic premise is that, for startups and in the early stages of growth, the cloud is a no-brainer for SaaS companies. But at scale, the cost of cloud approaches 50% of the cost of revenue and becomes an albatross that stifles operating leverage. Their conclusion is that as much as $500B in market cap is being vacuumed away by the hyperscalers, and that cloud repatriation is an inevitable path for large SaaS companies.

We were particularly interested in this as we had recently put out a post on the cloud repatriation myth. But we think in this instance there’s some merit to their conclusions. We don’t think it necessarily bleeds into traditional enterprise settings, but for SaaS companies, maybe ServiceNow has it right, running its own data centers. Or maybe a hybrid cloud approach to hedge bets and save money down the road is prudent.

What caught our attention in reading through some of the Snowflake docs like the S-1 and its most recent 10-K were comments regarding long term purchase commitments and non-cancellable contracts with cloud companies. In the company’s S-1, there was a disclosure of $247M in purchase commitments over a five plus year period. In the company’s latest 10-K report that same line item jumped to $1.8B.

So Snowflake is clearly managing these costs, as it alluded to on its earnings call, but one wonders: at some point, will Snowflake follow the example of Dropbox and start managing its own IT, or will it stick with the cloud and negotiate hard?

It certainly has the leverage. Snowflake has to be one of Amazon’s best partners/customers, even though it competes aggressively with Redshift. But on the earnings call, CFO Scarpelli said that Snowflake was working on a new chip technology to dramatically increase performance. Hmmm. Not sure what that means. Is that taking advantage of AWS Graviton or is there some other deep-in-the-weeds work going on inside of Snowflake?

We’re going to leave it there for now and keep following this trend.

In Summary

It’s clear that Snowflake is the pacesetter in this new exciting world of data. But there’s plenty of room for others. And Snowflake still has lots to prove to live up to its lofty valuation. One customer in an ETR CTO roundtable expressed skepticism that Snowflake will live up to the hype because its success will lead to more competition from well established players.

This is a common theme and an easy to reach conclusion. But our guess is it’s the exact type of narrative that fuels Slootman and sucked him back into this game of thrones.

Ways to Keep in Touch

Remember these episodes are all available as podcasts wherever you listen.

Email david.vellante@siliconangle.com | DM @dvellante on Twitter | Comment on our LinkedIn posts.

Also, check out this ETR Tutorial we created, which explains the spending methodology in more detail.
