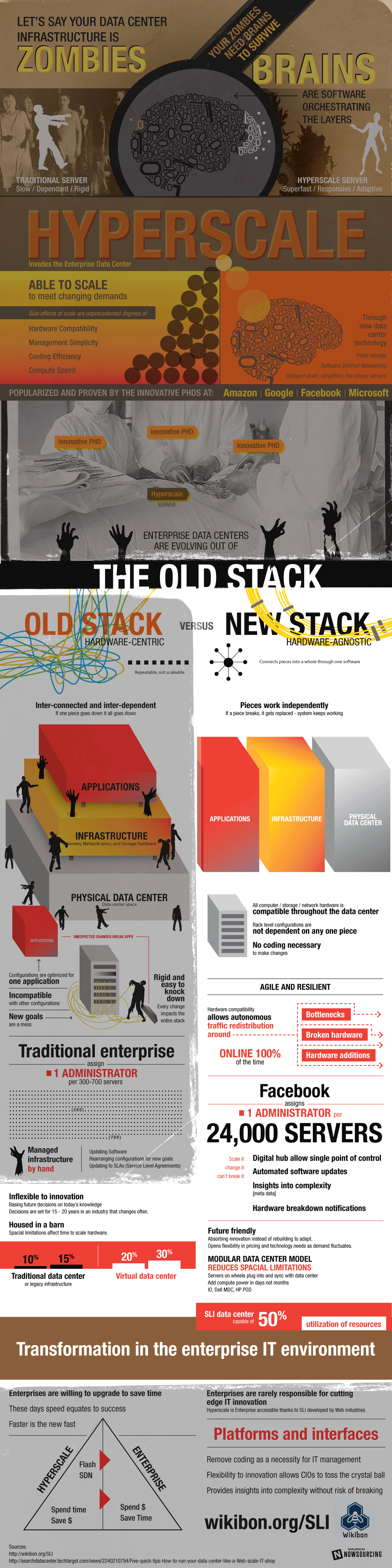

It isn’t the zombie apocalypse, but for too long, IT administrators have been shackled to infrastructure that was as friendly and stable as the stumbling undead. Coordination between application, infrastructure and physical data center was poor, leading to over 70% of resources being spent on adjusting configurations and trying to keep the lights on. Hyperscale cloud providers were built for scalability from day 1, so they had to be able to manage orders of magnitude more gear with a smaller IT staff. While cloud providers can customize new applications, enterprise users are burdened with a portfolio of legacy applications. The transformation to a scalable, agile and fast methodology isn’t simple, but there are lessons and technologies that the enterprise can learn from the largest IT shops.

Everyone is talking about the software-defined data center, but they are ignoring the physical data center itself. Amazon doesn’t even want to build new data centers; as Wikibon CTO David Floyer describes, Mega Data Centers are the Future. Building a data center is typically a 25+ year commitment that brings inefficiencies in power and cooling, no flexibility in cabling and no mobility within or between data centers. The software of the data center must go beyond the infrastructure stacks and include the surrounding support systems. Through the use of hybrid clouds and PODS, the data center can be managed independently of physical location.

Moving up the stack to infrastructure, the new “zombies” of infrastructure need to be fast and loosely coordinated; rather than an ambling mob, they are a swarming horde that can climb walls and not pause if pieces fall apart. A balance must be struck between standardization and flexibility. In the cloud community, a common analogy is that infrastructure should be treated as cattle rather than pets (or, extended further, as mayflies rather than dinosaurs). The new mindset is to buy less expensive infrastructure, refresh it more often, and let the application or automation software manage performance and failures so that administrators can focus on more innovation and less break/fix. While server virtualization helped increase server efficiency, it did little to improve overall operational efficiency. Adopting a new stack that coordinates from the application, through the infrastructure, to the data center itself can improve server efficiency yet again and finally move the needle on operational efficiency. Large enterprises can stop being envious of hyperscale IT and start looking at ways to close the gap.