
Premise

Avere Systems technology allows NAS filers (supporting NFS & SMB) to guarantee hybrid cloud data consistency. By acquiring and integrating this Avere technology, Microsoft can provide its ISV and enterprise developer communities with support for guaranteed data consistency across a rich hybrid cloud topology of Azure Cloud, Azure Stack, and other public clouds. The cloud giant can now deliver a true hybrid cloud filer.

For ISVs and enterprise developers this can mean increased value for Microsoft-based SMB file-based applications, without the need to rewrite any application code. For enterprise IT, this means much greater flexibility in placing compute and data, enabling them to balance end-user productivity and IT costs for their Microsoft-based application portfolios.

Introduction to Data Consistency

When Guaranteed Data Consistency is Important

Data consistency and state are important for many applications. Cloud data storage systems usually focus on low cost distributed scale-out storage with high availability (e.g., Amazon S3 or Google File System). These systems are usually “eventually consistent”. While this is adequate for many cloud applications, there are many others where guaranteed data consistency is required.

As Data Consistency is explicitly not guaranteed in most low-cost object-based cloud storage solutions, the Data Consistency model is often complex. The Amazon S3 Data Consistency model is shown in the Footnotes at the end of this research. If guaranteed data consistency is required, a programmer will need to take responsibility for providing it, and proving it is guaranteed. This is not trivial coding, and is nuanced work to write and test, as the footnotes illustrate. The challenge is greater still when coding guaranteed data consistency across different private and public cloud instances. And this code will need maintenance, even after the programmer has left.
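To give a feel for the kind of code a programmer ends up owning, the following is a minimal sketch in Python. It assumes a toy, in-memory stand-in for an eventually consistent object store (`EventuallyConsistentStore`) and a hypothetical helper (`read_expected_version`) that polls until the version it expects becomes visible. Neither name corresponds to a real cloud API, and the "lag" is simulated by counting reads rather than by real propagation delay.

```python
import time


class EventuallyConsistentStore:
    """Toy stand-in for an object store whose overwrites become visible late."""

    def __init__(self, lag_reads=2):
        self._visible = {}   # what readers currently see
        self._pending = {}   # key -> [new value, stale reads still to serve]
        self._lag_reads = lag_reads

    def put(self, key, value):
        if key in self._visible:
            # Overwrite: readers keep seeing the old value for a while.
            self._pending[key] = [value, self._lag_reads]
        else:
            # New object: visible immediately (read-after-write).
            self._visible[key] = value

    def get(self, key):
        if key in self._pending:
            value, remaining = self._pending[key]
            if remaining == 0:
                # Propagation finished: the overwrite becomes visible.
                self._visible[key] = value
                del self._pending[key]
            else:
                self._pending[key][1] = remaining - 1
        return self._visible[key]


def read_expected_version(store, key, version, attempts=5, delay=0.0):
    """Poll until the record carries the expected version, or give up."""
    for attempt in range(1, attempts + 1):
        record = store.get(key)
        if record["version"] == version:
            return record, attempt
        time.sleep(delay)
    raise TimeoutError(f"{key} did not reach version {version}")


store = EventuallyConsistentStore(lag_reads=2)
store.put("frame-001", {"version": 1, "data": "draft"})
store.put("frame-001", {"version": 2, "data": "final"})
record, attempts = read_expected_version(store, "frame-001", version=2)
print(record["data"], "after", attempts, "reads")
```

Even this toy version has to decide how long to retry, what to do on timeout, and how versions are assigned. Those decisions multiply when several writers and several clouds are involved.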

Enabling Guaranteed Data Consistency

A much cheaper, quicker and lower risk solution is to allow the application access to a hybrid cloud data store with data consistency. This allows flexibility of data placement, and can minimize the problems of data gravity. For example, it can support workflows where the data is constantly changed for a time (e.g., working on video rendering), and allows this data to be close to the users or applications. When the work is over, the data continues to remain in a lower cost public cloud solution, without additional data transfer.

The Avere solution acquired by Microsoft, if integrated into Microsoft Azure, can provide a solution to the problem of data consistency for filers. The solution can also work with Amazon S3 and Google GFS to provide true hybrid inter-cloud solutions.

Data Consistency, Availability & Partition Tolerance

CAP Theorem

Source: © Wikibon 2018. Based on the theoretical work of Eric Brewer, who said “Of three properties of shared-data systems (Consistency, Availability and tolerance to network Partitions) only two can be achieved at any given moment in time.” PODC conference keynote, 2000.

Co-founder Ron Bianchini is president and chief executive officer of Avere Systems. He first came to prominence as the CEO and co-founder of Spinnaker, which was bought by NetApp. Ron was on theCUBE at Google Next 2017. In the two clips from the interview below, he explains the Avere technology, and how it differs from Google File System (GFS) and others.

In the first clip, Ron explains the flexibility of local Edge filers running completely integrated with either an on-premises central repository or a cloud alternative on (say) Amazon S3 or GFS.

In the second clip, Ron discusses the importance of data consistency. Ron explains the CAP theorem (also called Brewer’s theorem). This states that it is impossible for a distributed data storage system to simultaneously provide more than two out of three guarantees:

- Data Consistency (the answer received is always correct)

- Availability (you always get an answer, although it may not always be the most recent)

- Partition Tolerance (the system is distributed, and will continue to operate despite some loss of messages between the nodes)

Figure 1 shows that there are three basic topologies for data storage systems, if only two guarantees can be picked. These are shown as AP, CP, and CA.

Applying CAP Theorem to Applications

- AP – Supports applications needing Availability and Partition Tolerance (scale out). Used for very large distributed file-systems, such as Amazon S3 and Google File System.

- CP – Supports applications, databases, and other middleware needing Consistency and Partition Tolerance. Avere’s technology is one example.

- CA – Supports applications needing Data Consistency and Availability, the hallmarks of traditional scale-up architectures that are often deployed for systems of record. This topology supports traditional and important low-latency relational databases with ACID properties.

Storage systems, databases, and application types can also all be placed using the same topology as Figure 1.
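The trade-off between the CP and AP topologies can be illustrated with a small sketch. The Python below is a deliberately simplified toy, not a description of how Avere, Amazon S3, or any real system is built: it assumes just two replicas of a single value, a manually toggled `partitioned` flag, and a hypothetical `TwoNodeRegister` class whose `mode` decides what is given up while the network is split.

```python
class Replica:
    def __init__(self):
        self.value = None


class TwoNodeRegister:
    """Two replicas of one value; `mode` picks the behaviour under partition."""

    def __init__(self, mode):
        if mode not in ("CP", "AP"):
            raise ValueError("mode must be 'CP' or 'AP'")
        self.mode = mode
        self.nodes = [Replica(), Replica()]
        self.partitioned = False

    def write(self, node, value):
        if self.partitioned:
            if self.mode == "CP":
                # The peer is unreachable: refuse rather than diverge.
                raise RuntimeError("unavailable during partition")
            # AP: accept the write locally; the peer is now stale.
            self.nodes[node].value = value
            return
        # Healthy network: replicate to both nodes before returning.
        for replica in self.nodes:
            replica.value = value

    def read(self, node):
        if self.partitioned and self.mode == "CP":
            raise RuntimeError("unavailable during partition")
        return self.nodes[node].value


ap = TwoNodeRegister("AP")
ap.write(0, "v1")
ap.partitioned = True
ap.write(0, "v2")
print(ap.read(0), ap.read(1))  # the two nodes answer, but disagree
```

In CP mode the same sequence raises an error on the second write: the system stays consistent by declining to answer. In AP mode every request gets an answer, at the price of one node serving older data.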

Avere Systems Data Consistency Technology

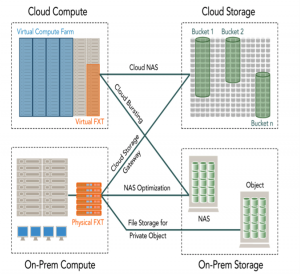

Figure 2 shows the overall components of Avere Systems FXT technology.

Source: Avere Systems 2018

Physical FXT Edge filers run the Avere OS. Virtual FXT filers can run in a public cloud environment, such as Amazon or Google. Key capabilities are:

- Assured data consistency across the global file system

- Eventually consistent cloud storage can be used as part of the topology, without affecting guaranteed data consistency

- Ability to separate capacity and performance for storage

- Use of public and private file and object-based storage as if it were a traditional NAS

- Reduced latency by caching the most active data closer to the users or other applications

- Provision of a transparent cloud gateway that exchanges protocols between NFS, SMB, and object-based APIs

- Improved performance by optimizing traditional infrastructures and reducing dependencies upon capacity-based storage

- Ability to optimize workflows, balancing the productivity of end-users and the cost of long-term storage.

Avere’s underlying global file system allows a physical file to be logically broken up into “chunks” and distributed across different nodes. Key to performance and data consistency is the metadata management and recovery architecture across the nodes.
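The general idea of chunking a file across nodes, with metadata recording where each chunk lives, can be sketched in a few lines of Python. This is an illustration only: the fixed chunk size, the round-robin placement, and the function names `place_chunks` and `read_file` are all assumptions made for the example, and say nothing about Avere’s actual on-disk layout or metadata design.

```python
def place_chunks(data, chunk_size, nodes):
    """Split data into chunks and spread them round-robin across nodes.

    Returns (metadata, storage): metadata is the ordered list of
    (chunk_index, node) pairs, storage maps node -> {chunk_index: bytes}.
    """
    storage = {node: {} for node in nodes}
    metadata = []
    for index, offset in enumerate(range(0, len(data), chunk_size)):
        node = nodes[index % len(nodes)]
        storage[node][index] = data[offset:offset + chunk_size]
        metadata.append((index, node))
    return metadata, storage


def read_file(metadata, storage):
    """Reassemble the file by following the metadata in order."""
    return b"".join(storage[node][index] for index, node in metadata)


metadata, storage = place_chunks(b"abcdefghij", chunk_size=4, nodes=["n1", "n2"])
print(metadata)
print(read_file(metadata, storage))
```

The sketch also shows why the metadata matters so much: the chunks on any single node are meaningless without the ordered map, so losing or corrupting that map loses the file.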

Limitations of Avere Technology

From the video and the discussion in the last section, it is clear that Avere’s solution is for NAS systems where data consistency and Partition Tolerance (scale-out) are the two important factors. If data consistency in an application is less important than low-cost scaling and Availability (for example, WORN (Write Once Read Never) data from the Edge), the many eventually consistent solutions would work very well. Big data images or videos that feed artificial intelligence applications would not be affected significantly by an occasional inconsistency in the data. Other workloads, such as traditional systems supporting systems of record that require low latency, guaranteed data consistency, and high-availability topologies, are also unsuitable for Avere’s approach. Another area where Avere’s approach may not be valid is applications where access to data is completely random and caching hit rates are low.
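The point about random access and low cache hit rates can be made concrete with a small simulation. The sketch below assumes a simple LRU (least recently used) cache and two synthetic workloads, one skewed toward a small set of "hot" blocks and one completely uniform. The cache size, block counts, and function names are illustrative assumptions, not measurements of any Avere product.

```python
import random
from collections import OrderedDict


def lru_hit_rate(accesses, capacity):
    """Replay a list of block ids through an LRU cache; return the hit rate."""
    cache = OrderedDict()
    hits = 0
    for block in accesses:
        if block in cache:
            hits += 1
            cache.move_to_end(block)       # mark as most recently used
        else:
            cache[block] = True
            if len(cache) > capacity:
                cache.popitem(last=False)  # evict the least recently used
    return hits / len(accesses) if accesses else 0.0


def make_workload(rng, n_accesses, n_blocks, hot_blocks, hot_fraction):
    """With probability hot_fraction pick a hot block, else any block."""
    accesses = []
    for _ in range(n_accesses):
        if rng.random() < hot_fraction:
            accesses.append(rng.randrange(hot_blocks))
        else:
            accesses.append(rng.randrange(n_blocks))
    return accesses


rng = random.Random(0)
skewed = make_workload(rng, 20000, n_blocks=1000, hot_blocks=50, hot_fraction=0.9)
uniform = make_workload(rng, 20000, n_blocks=1000, hot_blocks=50, hot_fraction=0.0)
print("skewed workload hit rate: ", round(lru_hit_rate(skewed, capacity=100), 2))
print("uniform workload hit rate:", round(lru_hit_rate(uniform, capacity=100), 2))
```

With a cache holding a tenth of the blocks, the skewed workload is served mostly from cache, while the uniform workload mostly misses and pays the latency of the back-end store on nearly every access.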

Wikibon is a great believer in horses for courses, the right tool for the job. Trying to use a hammer for everything should be limited to hammer salesmen and people who can only use hammers.

Conclusions

Developers

For Microsoft-based ISVs, this can mean an increase in value for existing applications, and improved hybrid cloud installation alternatives for existing and future applications. Enterprise developers of applications requiring data consistency will be happy about the potential for increased enterprise value for their SMB file-based applications, without the need to rewrite any application code. For enterprise IT this allows greater flexibility in placing compute and data to balance end-user productivity and IT costs for their Microsoft-based application portfolios.

Strategic Questions for Microsoft

The key strategic questions for ISVs and enterprise executives center on Microsoft’s Avere investment plans:

- Can Microsoft include support for this technology in its portfolio of SaaS software and enterprise applications?

- Will Microsoft sell the Edge Filer, in competition with NetApp, Dell and others?

- Will Microsoft include this filer technology in an Edge True Private Cloud as part of the Azure Stack?

- Can this hybrid cloud technology together with Microsoft’s cloud marketing and SaaS software allow Microsoft to deliver a true private cloud version of the NetApp Filer?

- Will Microsoft share this technology with its hardware partners for the Microsoft Azure Stack?

- Will Microsoft take a customer view, and enable the technology to run well on Amazon and Google storage clouds to maximize the reach of the hybrid cloud offering?

Enterprise & ISV executives should question Microsoft’s plans to invest in this newly acquired technology. They should ask Microsoft to address the questions above, and to give the implementation timescales.

Competitive Positioning

If Microsoft executes, Wikibon believes that the resulting capabilities will have a positive influence on Microsoft’s competitive positioning. It should improve both time-to-market and TAM (Total Available Market) for Microsoft’s Azure Stack strategy. It will also significantly separate Microsoft from Amazon’s strategic focus on centralized solutions, and give Microsoft’s enterprise customers a broader range of data placement topologies.

Traditional system vendors should take note of the fact that hybrid clouds need new architectures and design. For example, traditional file systems and metadata are not adequate for this environment. In addition, cloud vendors who focus entirely on the cost reductions that centralization brings should take note that improved local response times can improve productivity significantly, and can more than offset additional costs. Edge devices, Edge filers, and Edge systems all have a role to play, and will work better with integrated architectures and componentry.

ACTION ITEM

Wikibon believes the acquisition of Avere Systems by Microsoft is a positive for ISV and enterprise IT executives with responsibility for significant Microsoft application portfolios. Also, Wikibon strongly believes Edge devices are key to a successful hybrid cloud strategy, as well as common architectures and componentry across all hybrid cloud instantiations. We recommend that senior executives ask for a written plan of Microsoft’s investment projects and timelines for the Avere technology.

Assuming that the outcome of the Microsoft discussions is positive, Wikibon would recommend including Azure and the Azure Stack as a key component of a hybrid cloud and multi-cloud strategy to support any portfolio of Microsoft applications. This will provide a broader set of tools to IT professionals.

___________________________________________________________________

Footnotes: Amazon S3 Data Consistency Model

- S3 achieves high availability by replicating data across multiple servers within Amazon’s data centers.

- S3 provides read-after-write consistency for PUTs of new objects.

  - For a PUT request, S3 synchronously stores data across multiple facilities before returning SUCCESS.

  - A process that writes a new object to S3 will be immediately able to read the object.

  - If a process writes a new object to S3 and immediately lists keys within its bucket, the object might not appear in the list until the change is fully propagated.

- S3 provides eventual consistency for overwrite PUTs and DELETEs in all regions.

  - For updates and deletes to objects, the changes are eventually reflected and not available immediately.

  - If a process replaces an existing object and immediately attempts to read it, S3 might return the prior data until the change is fully propagated.

  - If a process deletes an existing object and immediately attempts to read it, S3 might return the deleted data until the deletion is fully propagated.

  - If a process deletes an existing object and immediately lists keys within its bucket, S3 might list the deleted object until the deletion is fully propagated.

- Updates to a single key are atomic. For example, if you PUT to an existing key, a subsequent read might return the old data or the updated data, but it will never return corrupted or partial data.

- S3 does not currently support object locking. For example, if two PUT requests are simultaneously made to the same key, the request with the latest time stamp wins. If this is an issue, you will need to build an object-locking mechanism into your application.

- Updates are key-based; there is no way to make atomic updates across keys. For example, you cannot make the update of one key dependent on the update of another key unless you design this functionality into your application.

Source for Amazon S3 Data Consistency Model above: Jayendrapatil’s Blog
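The footnote on object locking can be illustrated with a short Python sketch. It assumes a toy in-memory store (`LastWriterWinsStore`) that mimics only the "latest time stamp wins" rule, and a hypothetical `LockedCounter` wrapper that serializes read-modify-write cycles with an in-process lock. Neither is an Amazon S3 API. A real application-level lock would also have to work across processes and machines, which is considerably harder than what is shown here.

```python
import threading


class LastWriterWinsStore:
    """Toy store: for each key, the PUT with the latest timestamp wins."""

    def __init__(self):
        self._data = {}  # key -> (timestamp, value)

    def put(self, key, value, timestamp):
        current = self._data.get(key)
        if current is None or timestamp >= current[0]:
            self._data[key] = (timestamp, value)

    def get(self, key):
        return self._data[key][1]


class LockedCounter:
    """Application-level lock around read-modify-write on a single key."""

    def __init__(self, store, key):
        self.store = store
        self.key = key
        self._lock = threading.Lock()
        self._clock = 0
        store.put(key, 0, self._clock)

    def increment(self):
        with self._lock:  # only one writer may read and update at a time
            self._clock += 1
            value = self.store.get(self.key) + 1
            self.store.put(self.key, value, self._clock)
            return value


# Without a lock: both writers read 0, so one increment is silently lost.
store = LastWriterWinsStore()
store.put("views", 0, timestamp=0)
seen_by_a = store.get("views")
seen_by_b = store.get("views")
store.put("views", seen_by_a + 1, timestamp=1)
store.put("views", seen_by_b + 1, timestamp=2)
print("unlocked result:", store.get("views"))

# With the lock: every increment is applied.
counter = LockedCounter(LastWriterWinsStore(), "views")
threads = [threading.Thread(target=counter.increment) for _ in range(8)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print("locked result:", counter.store.get("views"))
```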