Networking Industry Gearing Up for Changes

Ethernet is the plumbing that runs every data center and connects every company to the Internet. Changes in the networking world take a lot of time; even the relatively mundane adoption of the next speed bump takes over a decade to make its way through standards and customer adoption. As companies deal with the impact of increasing growth paired with shrinking budgets, IT staffs require automated infrastructure that can simplify operations. The buzz in the networking community is about new models of architecture ranging from Ethernet Fabrics to Software Defined Networking (SDN) and Network Virtualization. As networking has been dominated by Cisco for so long, the question of the day is: Are these new technologies disruptive, and do customers have a strong enough desire to adopt these technologies that they will move to a new vendor to get them?

While Ethernet Fabrics are easily defined as scalable, high-bandwidth architectures that replace Spanning Tree Protocol (STP), SDN is more of an architectural model than a product set. Juniper and Arista have offered programmability as part of their solutions for years. Let’s first look at how SDN differs from traditional networking (the next two sections and their graphics are by Joe Onisick, cross-posted with permission from his article):

Traditional Network Architecture

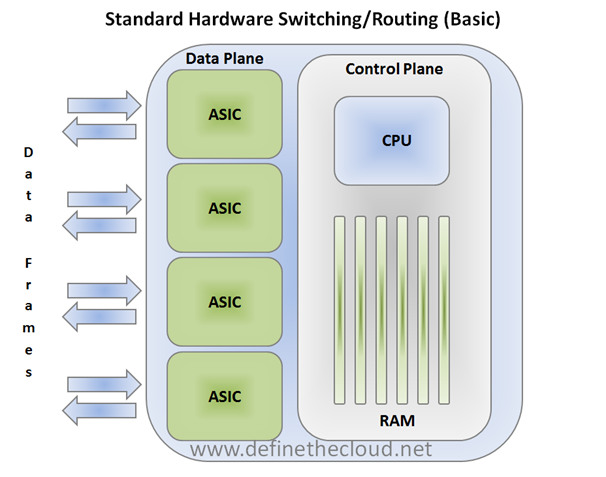

The most important thing to notice in the graphic above is the separate control and data planes. Each plane has separate tasks that together provide the overall switching/routing functionality. The control plane is responsible for configuration of the device and programming the paths that will be used for data flows. When you are managing a switch, you are interacting with the control plane. Things like route tables and Spanning Tree Protocol (STP) topologies are calculated in the control plane. This is done by accepting information frames such as BPDUs or Hello messages and processing them to determine available paths. Once these paths have been determined, they are pushed down to the data plane and typically stored in hardware. The data plane then makes path decisions in hardware based on the latest information provided by the control plane. This has traditionally been a very effective method: the hardware decision-making process is very fast, which reduces overall latency, while the control plane handles the heavier processing and configuration requirements.
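The split described above can be sketched in a few lines of Python. This is a toy model, not any vendor's implementation: the class names `ControlPlane` and `DataPlane`, the port names, and the cost-based path selection are all assumptions made for illustration. The point it shows is that path computation happens in one place and the forwarding path is only a table lookup.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class DataPlane:
    """Stands in for the hardware forwarding table; it only performs lookups."""
    forwarding_table: Dict[str, str] = field(default_factory=dict)

    def forward(self, destination: str) -> Optional[str]:
        # Fast path: a plain lookup, no path computation happens here.
        return self.forwarding_table.get(destination)


@dataclass
class ControlPlane:
    """Collects advertisements, picks a best path, and programs the data plane."""
    data_plane: DataPlane
    # destination -> {egress port: advertised cost} (hypothetical structure)
    advertisements: Dict[str, Dict[str, int]] = field(default_factory=dict)

    def receive_advertisement(self, destination: str, port: str, cost: int) -> None:
        self.advertisements.setdefault(destination, {})[port] = cost
        self._program_data_plane(destination)

    def _program_data_plane(self, destination: str) -> None:
        ports = self.advertisements[destination]
        best_port = min(ports, key=ports.get)  # lowest advertised cost wins
        self.data_plane.forwarding_table[destination] = best_port


data_plane = DataPlane()
control_plane = ControlPlane(data_plane)
control_plane.receive_advertisement("10.0.0.0/24", "eth1", cost=20)
control_plane.receive_advertisement("10.0.0.0/24", "eth2", cost=10)
print(data_plane.forward("10.0.0.0/24"))  # eth2
```

The data plane never sees the costs; it only holds the result the control plane pushed down, which is what lets real hardware make the decision at line rate.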

This method is not without problems; the one we will focus on is scalability. To demonstrate the scalability issue, let’s use quality of service (QoS) as an example. QoS allows forwarding priority to be given to specific frames for scheduling purposes based on characteristics in those frames. This allows network traffic to receive appropriate treatment in times of congestion. For instance, latency-sensitive voice and video traffic is typically engineered for high priority to ensure the best user experience. Traffic prioritization is typically based on tags in the frame known as “class of service” (CoS) and/or “differentiated services code point” (DSCP). These tags must be marked consistently for frames entering the network, and rules must then be applied consistently for their treatment on the network. This becomes cumbersome in a traditional multi-switch network, because the configuration must be duplicated in some fashion on each individual switching device.

To illustrate the current administrative challenges, consider each port in the network as a management point, meaning each port must be individually configured, which is both time consuming and cumbersome.
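A rough sketch of why this scales poorly: one logical QoS policy has to be copied onto every port of every switch. The switch names, port counts, and queue numbers below are hypothetical; the DSCP values are the standard code points for Expedited Forwarding (46), AF41 (34), and default (0).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QosRule:
    traffic_class: str
    dscp: int   # 46 = EF, 34 = AF41, 0 = default
    queue: int  # hypothetical egress queue number


# One intended policy for the whole network.
POLICY = (
    QosRule("voice", dscp=46, queue=7),
    QosRule("video", dscp=34, queue=5),
    QosRule("best-effort", dscp=0, queue=0),
)


def expand_policy(switches, policy):
    """Copy the policy onto every port of every switch, as a per-device model requires."""
    return {
        (switch, port): policy
        for switch, port_count in switches.items()
        for port in range(1, port_count + 1)
    }


# Hypothetical inventory: switch name -> number of ports.
switches = {"access-1": 48, "access-2": 48, "core-1": 32}
configs = expand_policy(switches, POLICY)
print(len(configs))  # 128 management points for one logical policy
```

Every entry in `configs` is a place where the policy can drift out of sync, which is the administrative burden the paragraph above describes.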

Additional challenges exist in properly classifying data and routing traffic. Consider two different traffic types, such as iSCSI and voice. iSCSI is storage traffic and typically uses a full-size packet or even a jumbo frame, while voice data is typically transmitted in very small packets. They also have different requirements: voice is very latency sensitive in order to maintain call quality, while iSCSI is less latency sensitive but benefits from more bandwidth. Traditional networks have few if any tools to differentiate these traffic types and send each down a separate path suited to its needs. These types of issues are what SDN looks to solve.
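As a minimal sketch of the kind of differentiation meant here, the classifier below tells voice from iSCSI and maps each to a different path. It assumes voice is marked with DSCP 46 (Expedited Forwarding) and that iSCSI uses its registered TCP port 3260; the `Packet` type and the path names are hypothetical.

```python
from dataclasses import dataclass

ISCSI_TCP_PORT = 3260  # IANA-registered iSCSI target port
DSCP_EF = 46           # Expedited Forwarding, commonly used to mark voice


@dataclass(frozen=True)
class Packet:
    protocol: str  # "tcp" or "udp"
    dst_port: int
    dscp: int
    length: int    # bytes


def classify(packet: Packet) -> str:
    """Return a traffic class based on the packet's markings and destination port."""
    if packet.dscp == DSCP_EF:
        return "voice"
    if packet.protocol == "tcp" and packet.dst_port == ISCSI_TCP_PORT:
        return "iscsi"
    return "default"


# Hypothetical path names: each class gets the path that suits its needs.
PATH_FOR_CLASS = {
    "voice": "low-latency",
    "iscsi": "high-bandwidth",
    "default": "best-effort",
}


def select_path(packet: Packet) -> str:
    return PATH_FOR_CLASS[classify(packet)]


voice = Packet(protocol="udp", dst_port=16384, dscp=DSCP_EF, length=200)
storage = Packet(protocol="tcp", dst_port=ISCSI_TCP_PORT, dscp=0, length=9000)
print(select_path(voice))    # low-latency
print(select_path(storage))  # high-bandwidth
```

In a traditional network this logic would have to be expressed separately on each device; the SDN model described next lets it live in one place.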

The Three Key Elements of SDN:

- Ability to manage the forwarding of frames/packets and apply policy;

- Ability to perform this at scale in a dynamic fashion;

- Ability to be programmed.

Note: In order to qualify as SDN, an architecture does not have to be open, standard, interoperable, etc. A proprietary architecture can meet the definition and provide the same benefits.

An SDN architecture must be able to manipulate frame and packet flows through the network at large scale in a programmable fashion. The hardware plumbing of an SDN will typically be designed as a converged (capable of carrying all data types including desired forms of storage traffic) mesh of large, lower latency pipes commonly called a fabric. The SDN architecture itself will in turn provide a network-wide view and the ability to manage the network and network flows centrally.

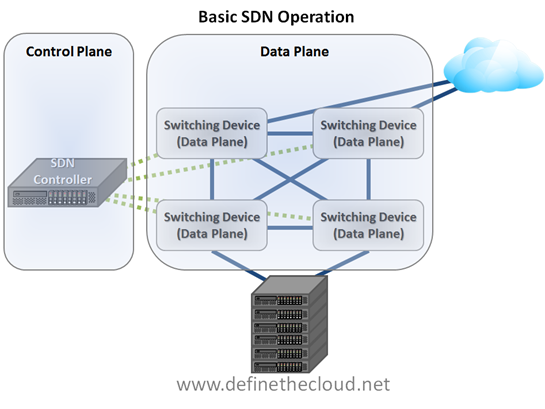

This architecture is accomplished by separating the control plane from the data plane devices and providing a programmable interface for that separated control plane. The data plane devices receive forwarding rules from the separated control plane and apply those rules in hardware ASICs. These ASICs can be either commodity switching ASICs or customized silicon, depending on the functionality and performance required. The diagram below depicts this relationship:

In this model, the SDN controller provides the control plane, and the data plane consists of hardware switching devices. These devices can be either new hardware or existing hardware with specialized firmware, depending on the vendor and deployment model.

One major advantage that is clearly shown in this example is the visibility provided to the control plane. Rather than each individual data plane device relying on advertisements from other devices to build its view of the network topology, a single control plane device has a view of the entire network. This provides a platform from which advanced routing, security, and quality decisions can be made, hence the need for programmability.
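To make the benefit of a network-wide view concrete, here is a small sketch in which a controller holds the whole topology, computes a lowest-cost path with Dijkstra's algorithm, and derives the per-switch next-hop rules it would push down. The topology, link costs, and function names are assumptions for illustration; real controllers use their own data models and southbound protocols.

```python
import heapq

# Hypothetical global view held by the controller: node -> {neighbor: link cost}.
TOPOLOGY = {
    "leaf1": {"spine1": 1, "spine2": 5},
    "leaf2": {"spine1": 1, "spine2": 1},
    "spine1": {"leaf1": 1, "leaf2": 1},
    "spine2": {"leaf1": 5, "leaf2": 1},
}


def shortest_path(topology, source, target):
    """Dijkstra over the controller's global view; returns the node list or None."""
    queue = [(0, source, [source])]
    visited = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == target:
            return path
        if node in visited:
            continue
        visited.add(node)
        for neighbor, link_cost in topology.get(node, {}).items():
            if neighbor not in visited:
                heapq.heappush(queue, (cost + link_cost, neighbor, path + [neighbor]))
    return None


def rules_for_path(path):
    """Per-switch forwarding rules: each node on the path maps to its next hop."""
    return dict(zip(path, path[1:]))


path = shortest_path(TOPOLOGY, "leaf1", "leaf2")
print(path)                  # ['leaf1', 'spine1', 'leaf2']
print(rules_for_path(path))  # {'leaf1': 'spine1', 'spine1': 'leaf2'}
```

Because the computation is ordinary code running against a complete topology, swapping the cost function for one based on latency, load, or security policy is a software change rather than a protocol change.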

Another major capability that can be drawn from this centralized control is visibility. With a centralized controller device it is much easier to gain usable data about real time flows on the network, and make decisions (automated or manual) based on that data.

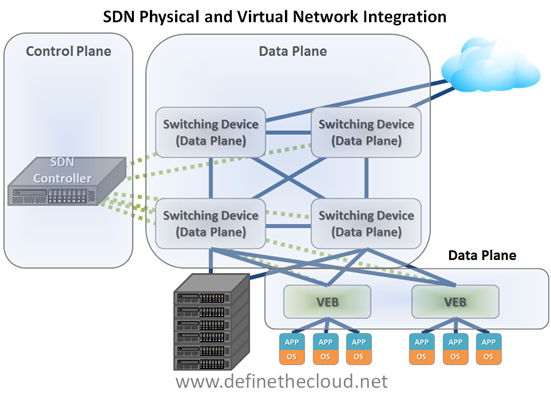

This diagram only shows a portion of the picture, as it is focused on physical infrastructure and servers. Another major benefit is the integration of virtual server environments into SDN networks. This allows centralized management of consistent policies for both virtual and physical resources. Integrating a virtual network is done by having a Virtual Ethernet Bridge (VEB) in the hypervisor that can be controlled by an SDN controller. The diagram below depicts this:

This diagram more clearly depicts the integration between virtual and physical networking systems to provide cohesive consistent control of the network. This plays a more important role as virtual workloads migrate. Because both the virtual and physical data planes are managed centrally by the control plane, when a VM migration happens its network configuration can move with it regardless of destination in the fabric. This is a key benefit for policy enforcement in virtualized environments, because more granular controls can be placed on the VM itself as an individual port and those controls stick with the VM throughout the environment.
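The idea that policy follows the VM can be sketched as a controller that keys policy by VM rather than by physical port. Everything named here (`Controller`, `PortPolicy`, the host and VM names, the ACL string) is hypothetical and stands in for whatever a given SDN product actually exposes.

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple


@dataclass(frozen=True)
class PortPolicy:
    vlan: int
    dscp: int
    acl: Tuple[str, ...]


@dataclass
class Controller:
    policies: Dict[str, PortPolicy] = field(default_factory=dict)  # VM -> policy
    locations: Dict[str, str] = field(default_factory=dict)        # VM -> host
    # host -> {VM: policy currently installed on that host's virtual switch}
    installed: Dict[str, Dict[str, PortPolicy]] = field(default_factory=dict)

    def attach(self, vm: str, host: str, policy: PortPolicy) -> None:
        self.policies[vm] = policy
        self.locations[vm] = host
        self.installed.setdefault(host, {})[vm] = policy

    def migrate(self, vm: str, new_host: str) -> None:
        """Move the VM's policy along with the VM; nothing is re-entered by hand."""
        old_host = self.locations[vm]
        self.installed[old_host].pop(vm)
        self.installed.setdefault(new_host, {})[vm] = self.policies[vm]
        self.locations[vm] = new_host


controller = Controller()
web_policy = PortPolicy(vlan=100, dscp=0, acl=("permit tcp any any eq 443",))
controller.attach("web01", "host-a", web_policy)
controller.migrate("web01", "host-b")
print(controller.installed["host-b"]["web01"] == web_policy)  # True
print("web01" in controller.installed["host-a"])              # False
```

The policy is defined once in `attach` and the controller re-installs it wherever the VM lands, which is the "controls stick with the VM" behavior described above.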

Note: These diagrams are a generalized depiction of an SDN architecture. Methods other than a single separated controller could be used, but this is the most common concept.

With the system in place for centralized command and control of the network through SDN and a programmable interface, more intelligent processes can be added to handle complex systems. Real-time decisions can be made for traffic optimization, security, outage, or maintenance. Separate traffic types can be run side-by-side while receiving different paths and forwarding that can respond dynamically to network changes.

SDN Vendor Roundup

Just as many equate a “post-PC” future with the downfall of Microsoft, the SDN world is hyped as the post-Cisco world.

- Cisco recently made a big strategic announcement with its Open Network Environment (ONE) – see this write-up from Network Computing’s Mike Fratto – and also has the new spin-in Insieme in the SDN space.

- SDN also highlights the battle between custom and merchant silicon. Cisco primarily uses its own chips, while most of the other Ethernet switch makers use chips from Broadcom or others. At the recent Dell Storage Forum conference, Broadcom’s CTO of Infrastructure and Networking, Nick Ilyadis, discussed the role of networking software (including SDN). Watch the full video here.

- Brocade shares on its SDN landing page plans for SDN, support for OpenFlow and how Ethernet Fabrics fit into the equation.

- HP supports OpenFlow across its switching portfolio, and in a video discussion at HP Discover, HP Networking CTO, Saar Gillai, discussed the emerging SDN ecosystem and hinted at HP’s plans for developing an OpenFlow controller. Watch the full video here.

- Dell has expanded its efforts in the networking marketplace through acquisitions. In this video interview, Dell Networking GM Dario Zamarian explains where Dell fits into SDN. Watch the full video here.

- Juniper Networks presents its latest vision for SDN in this blog post.

- Arista Networks has an SDN landing page.

- IBM has a whitepaper on SDN and is adding more information on the IBM networking microsite.

Network Virtualization

In discussions on the future of networking, too many vendors pitch SDN as the end goal. Protocols are rarely the answer that customers need; improving IT requires a holistic approach of technology, training, and organizational change. Wikibon got its first look at Nicira, and the vision is compelling (see Network Virtualization – Transition or Transformation?). Nicira is often called an SDN startup since its founders were instrumental in OpenFlow’s creation; but while Nicira’s solution is focused on the control plane, it is more akin to server virtualization. Server virtualization put a layer of abstraction between the application and the physical world. With the addition of mobility features like VMware VMotion and Microsoft Live Migration, it also allowed for the creation of a pool of servers where VMs could be deployed and reallocated dynamically. Network virtualization holds the promise of extending this agility to multi-hypervisor environments, so that any workload can run anywhere. This has the potential to cause significant disruption to the network vendor landscape, not by making the hardware irrelevant but through realignment toward solutions that can take advantage of new architectures (similar to how the server market realigned around larger boxes and blade servers for virtualization). Nicira’s goals for network virtualization are complementary to SDN:

- Decouple software and hardware so that Layer 2/3 can be done on any switch,

- Reproduce the physical in virtual, and,

- Automate networking, allowing for operational improvements.

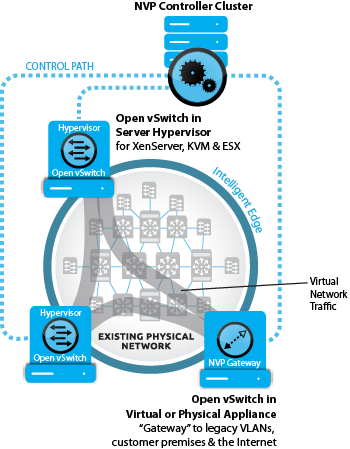

While most SDN solutions are still in the development phase, Nicira is already engaged with product at 24 customers, including some of the largest Web, telecommunications, and cloud service providers (it publicly lists AT&T, eBay, Fidelity, NTT, and Rackspace as customers). The Nicira solution is currently targeted only at very large-scale deployments, a significantly different use case from the universities that served as OpenFlow’s early test beds. Nicira’s DVNI (Distributed Virtual Network Infrastructure) architecture builds a network virtualization platform (NVP) using Open vSwitch to decouple VMs from the physical network, a needed solution that Edge Virtual Bridging has failed to deliver. Network virtualization also uses tunneling such as VXLAN, NVGRE, or STT (Stateless Transport Tunneling, which Nicira uses today and which is an IETF draft). While the SDN crowd talks of transforming networking, Nicira’s vision is to enable the next generation of hyper-scale distributed systems, which ripples far beyond the plumbing of data centers.
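To give a feel for what the tunneling mentioned above involves, the sketch below builds and parses the 8-byte VXLAN header as later standardized in RFC 7348: a flags byte with the "VNI valid" bit set, 24 reserved bits, a 24-bit virtual network identifier (VNI), and 8 more reserved bits. It covers VXLAN only, not NVGRE or STT (which is what Nicira is described as using), and it omits the outer Ethernet/IP/UDP headers. The function names are illustrative.

```python
import struct

VXLAN_UDP_PORT = 4789        # IANA-assigned destination port for VXLAN
VXLAN_FLAG_VNI_VALID = 0x08  # "I" flag: the VNI field carries a valid value


def build_vxlan_header(vni: int) -> bytes:
    """Pack the 8-byte VXLAN header for a 24-bit virtual network identifier."""
    if not 0 <= vni < 2 ** 24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: flags (8 bits) + reserved (24 bits). Word 2: VNI (24 bits) + reserved (8 bits).
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def parse_vni(header: bytes) -> int:
    """Extract the VNI from a VXLAN header, checking that the valid flag is set."""
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI-valid flag is not set")
    return vni_word >> 8


def encapsulate(inner_frame: bytes, vni: int) -> bytes:
    """Prefix an inner Ethernet frame with a VXLAN header (outer headers omitted)."""
    return build_vxlan_header(vni) + inner_frame


header = build_vxlan_header(5000)
print(header.hex())       # 0800000000138800
print(parse_vni(header))  # 5000
```

The 24-bit VNI is what lets an overlay carry roughly 16 million isolated virtual networks over a shared physical network, independent of the VLAN configuration on the switches underneath.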

Preparing for the Future of Networking

Action Item: While it is early days for SDN and network virtualization, CIOs need to take these waves of disruption into consideration as they plan for the future. Within the next couple of years, these solutions have the potential to help CIOs transform the business through new operational models. It’s never too soon to engage the IT staff in learning about these new technologies, so they can be excited and help lead the transformation rather than fear that it will displace them. See “Will Engineers Hold Networking Back” on the Packet Pushers site for a good discussion of how network administrators see transformation. As Bill Gates has said about technology change, “We always overestimate the change that will occur in the next two years and underestimate the change that will occur in the next ten.”

Footnotes: As noted above, Joe Onisick wrote the Traditional Network Architecture and The Three Key Elements of SDN sections; Stu Miniman wrote the rest.

Stu Miniman is a Principal Research Contributor for Wikibon. He focuses on networking, virtualization, Infrastructure 2.0, and cloud technologies. Stu can be reached via email (stu@wikibon.org) or Twitter (@stu).