Premise – Internet of Things requires Edge and Cloud Computing

AWS announced its initial architecture for managing the Internet of Things (IoT) at AWS re:Invent in October 2015. Not surprisingly, it is cloud-centric: all management and processing of data from the sensors is performed in the AWS cloud. It is well designed, and there are IoT spaces (especially initial deployments) where this approach will be appropriate. However, the AWS architecture is built around the premise that the cloud should provide all the management, compute and storage centrally, and manage the sensors on its own.

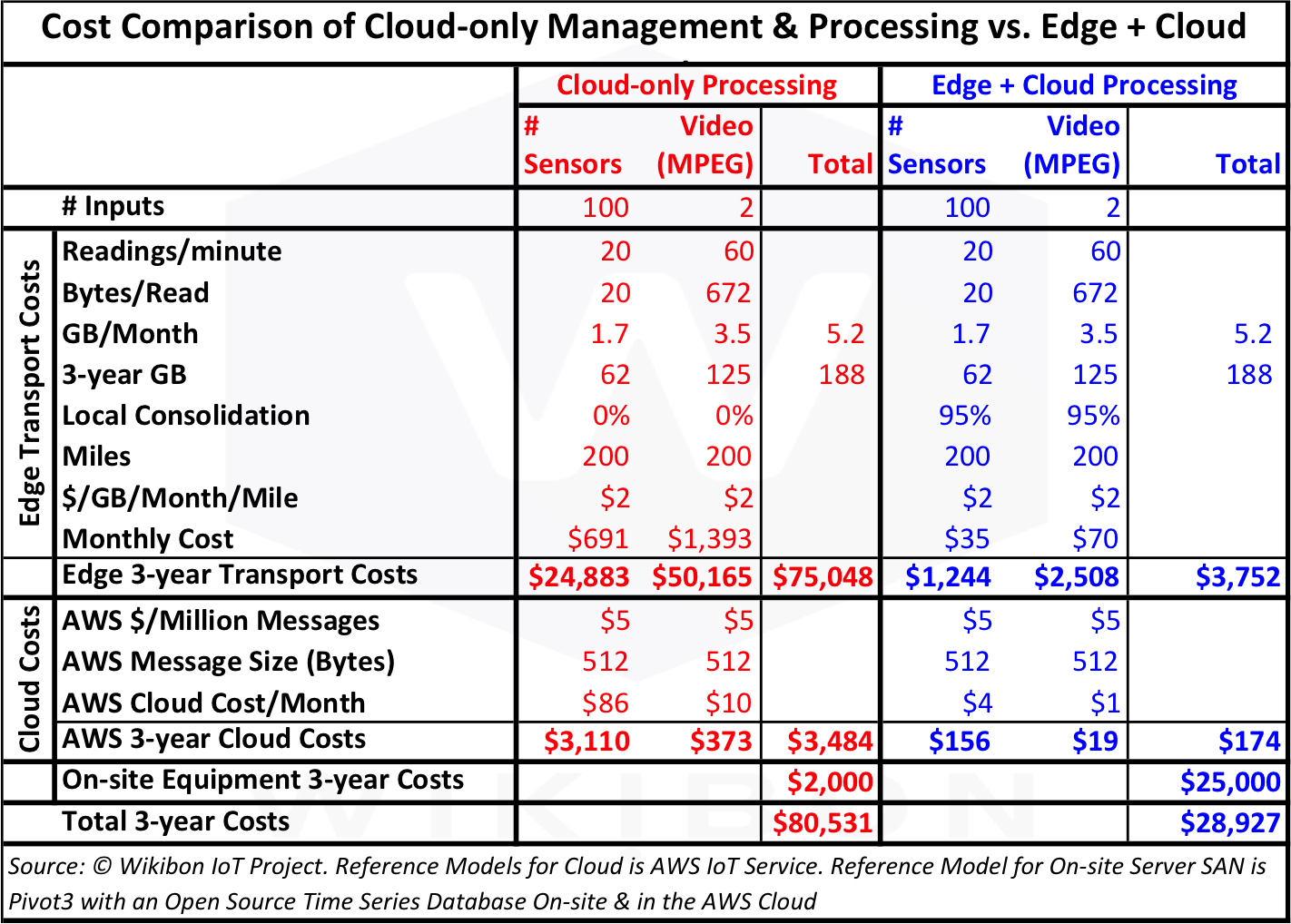

This research is designed to compare this cloud-only architecture with a low-cost converged “edge computing” approach working in concert with cloud computing. The two equipment reference models in this research are the AWS IoT cloud service and the Pivot3 Server SAN with a time-series database. Both are cost leaders in their field.

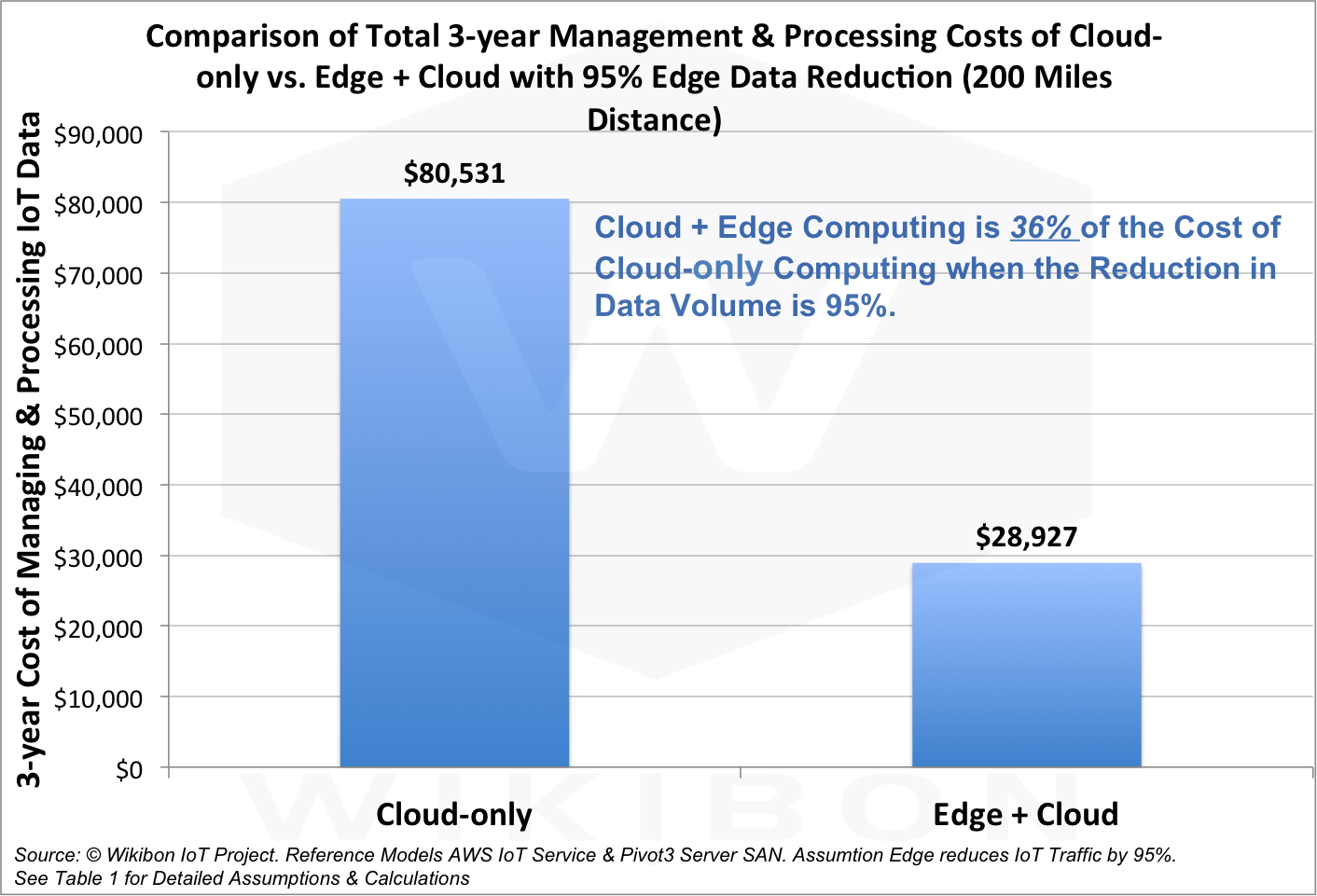

The reference case study for this research is a remote wind-farm with security cameras and other sensors. Figure 1 summarizes the findings. It compares the 3-year management & processing costs of a cloud-only solution using AWS’s IoT services with those of an Edge + cloud solution using a Pivot3 Server SAN with an Open Source Time-series Database together with AWS IoT services. With a distance of 200 miles between the wind-farm and the cloud, and with an assumed 95% reduction in traffic from using the edge computing capabilities, the total cost is reduced from about $81,000 to $29,000 over 3 years. The cost of Edge + Cloud is about 1/3 the cost of a Cloud-only approach.

Source: © Wikibon IoT Project. Reference Models AWS IoT Service & Pivot3 Server SAN. Assumption: Edge reduces IoT Traffic by 95%. See Table 1 for Detailed Assumptions & Calculations.

As a result of this research and other work, Wikibon believes IoT systems will be safer, more reliable, lower cost and more functional using an Edge computing plus Cloud (private or public) approach.

Reference Model – AWS IoT Services

This section is about the technical details of the AWS IoT services, and can be skipped.

AWS IoT supplies an SDK to connect your hardware sensor devices or mobile applications. This SDK allows devices to connect, authenticate, and exchange messages with AWS IoT. MQTT (a lightweight publish/subscribe messaging protocol) and the standard internet HTTP 1.1 protocol are supported. The SDK supports C, JavaScript, and Arduino. AWS IoT supports SigV4 and X.509 certificate-based authentication and encryption. A device registry tracks metadata, such as device attributes and capabilities.

AWS IoT supports the creation of a persistent, virtual version, or “shadow,” of each device. This shadow includes the device’s last known state and desired future state, so that applications or other devices can read messages and interact with the device. This enables devices to be controlled by the AWS cloud even when the connection is intermittent.
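The shadow idea can be illustrated with a minimal sketch in plain Python. It assumes a shadow document with `desired` and `reported` sections under `state`; the turbine field names (`yaw_deg`, `brake`) and the `shadow_delta` helper are hypothetical and are not part of any AWS SDK.

```python
import json

# Illustrative shadow document; the turbine field names are hypothetical.
shadow = {
    "state": {
        "desired": {"yaw_deg": 180, "brake": "off"},
        "reported": {"yaw_deg": 175, "brake": "off"},
    }
}


def shadow_delta(doc):
    """Return the desired settings that differ from the last reported state."""
    desired = doc["state"].get("desired", {})
    reported = doc["state"].get("reported", {})
    return {key: value for key, value in desired.items()
            if reported.get(key) != value}


# When the device reconnects, it only needs to act on the difference.
pending = shadow_delta(shadow)
print(json.dumps(pending))
```

Because the desired state is stored in the cloud, an application can request a change while the device is offline, and the device can pick up the difference when it next connects.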

An IoT rules engine can gather and act on data, and can route messages to AWS services such as Lambda, Kinesis, S3, Amazon Machine Learning, and DynamoDB. Rules can trigger the execution of Java, Node.js or Python code in AWS Lambda.
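The routing behaviour described above can be sketched in a few lines of Python. This is an illustration of the general pattern only, not the AWS rules engine or its rule syntax; the `Rule` class, the `route` function, the topic name and the vibration threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    """A hypothetical rule: a topic, a condition, and a target action."""
    topic: str
    condition: Callable[[dict], bool]
    action: Callable[[dict], None]


def route(rules, topic, message):
    """Run every rule whose topic matches and whose condition holds."""
    fired = 0
    for rule in rules:
        if rule.topic == topic and rule.condition(message):
            rule.action(message)
            fired += 1
    return fired


alerts = []  # stands in for a downstream service such as a queue or function
rules = [
    Rule("windfarm/turbine/vibration",
         lambda m: m["rms_g"] > 0.5,
         alerts.append),
]

route(rules, "windfarm/turbine/vibration", {"turbine": 3, "rms_g": 0.8})
route(rules, "windfarm/turbine/vibration", {"turbine": 4, "rms_g": 0.1})
print(alerts)
```

Only the first message satisfies the condition, so only it is passed on to the downstream action.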

The initial AWS IoT does not support edge computing or time-series databases.

Source: © Wikibon IoT Project, 2015

Reference Model – Pivot3 Server SAN

This section is about the technical details of the Pivot3 Server SAN, and can be skipped.

Server SAN is a rapidly emerging architecture for software-led enterprise systems, moving what was previously done in storage arrays to the processor(s). Wikibon analysis shows that the adoption of Server SAN will be driven by lower cost and improved performance. For IoT Edge processing, Server SAN is lower cost and much more efficient than traditional systems.

Pivot3 is a major contributor to the migration of video surveillance from the analog to the digital world. In Figure 2, Wikibon projects surveillance to be about 46% of the data created in 2020 (62 Zettabytes). As the IoT develops, the majority of IoT Edge sites will combine sensor data with surveillance data.

Pivot3 has developed its vSTAC operating system using its own implementation of erasure coding, bypassing the hypervisor for storage processing. The OS optimizes processors for both storage and application processing. As a result, Wikibon rates Pivot3 as a highly efficient Server SAN, and the leading low-cost provider. With a deep understanding of processing surveillance data and a very efficient platform, Wikibon believes Pivot3 is well positioned to provide Edge devices for the IoT.

Wind Farm Case Study

The reference case study in this research is a small fairly remote wind-farm with a combination of security cameras, separate security sensors, sensors on the wind-turbines and access sensors for all employee physical access points. This environment is a pilot configuration. By no means is this a model of the complete long-term sensor coverage for the wind farm. The number of sensors and video surveillance cameras would be expected to grow to be many times larger than the pilot system. The details of the initial case study are in Table 1 below.

Source: © Wikibon IoT Project. Reference Models AWS IoT Service & Pivot3 Server SAN. Assumption: Edge reduces IoT Traffic by 95%.

The model in Table 1 assumes a 200-mile distance from the wind-farm to the datacenter, and calculates the transport cost from the edge to the datacenter. The MPEG video is assumed to be compressed by about 100:1, and the quality assumptions are black/white (only 8 bits/pixel), 1 frame/sec, and 240 x 120 pixels in size. This is the minimum security quality, and would not provide an effective basis for advanced functions such as face recognition. The sensor data is a small amount of data (20 bytes) gathered every three seconds from each of 100 sensors. The amount of sensor data is about half the low-quality MPEG data coming from the 2 video feeds.
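As a rough check on the scale of the video assumptions, the stated resolution, bit depth, frame rate and compression ratio can be turned into a payload rate. This is a sketch of the arithmetic only; the function name is hypothetical, and Table 1 may include protocol overhead or other factors that are not modeled here.

```python
def video_bytes_per_sec(width, height, bits_per_pixel, fps, compression_ratio):
    """Compressed payload rate for one camera, in bytes per second."""
    raw_bits_per_sec = width * height * bits_per_pixel * fps
    return raw_bits_per_sec / 8 / compression_ratio


# Assumptions quoted in the text: 240 x 120 pixels, 8 bits/pixel,
# 1 frame/sec, about 100:1 compression, 2 video feeds.
per_camera = video_bytes_per_sec(240, 120, 8, 1, 100)
two_feeds = 2 * per_camera
per_day_mb = two_feeds * 86_400 / 1e6  # 86,400 seconds in a day

print(f"per camera: {per_camera:.0f} bytes/sec")
print(f"two feeds:  {two_feeds:.0f} bytes/sec")
print(f"per day:    {per_day_mb:.1f} MB")
```

Even at this minimal quality the feeds run continuously, which is why the volume transmitted drives the transport cost in the model.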

The key difference between the red and blue sections in Table 1 is the percentage of local consolidation. The red section assumes there is no local consolidation or time-series database, and all the data is managed and processed in the cloud. The blue section assumes an Edge computing capability, with a 20:1 reduction in the data required to be transmitted. This is easily achieved in practice, as are higher data reductions of 100:1 or more.
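One way local consolidation might work is windowed aggregation, where the Edge keeps the raw readings locally and forwards only a summary per window. The sketch below is an illustrative assumption, not the method used in Table 1; the `consolidate` function and the sample readings are hypothetical, and the 20:1 figure here refers to the number of records sent rather than bytes.

```python
from statistics import mean


def consolidate(readings, window):
    """Collapse each run of `window` raw readings into one summary record."""
    summaries = []
    for start in range(0, len(readings), window):
        chunk = readings[start:start + window]
        summaries.append({
            "min": min(chunk),
            "max": max(chunk),
            "mean": mean(chunk),
            "count": len(chunk),
        })
    return summaries


# 60 readings from one hypothetical sensor (3 minutes at one per 3 seconds).
raw = [20.0 + (i % 5) * 0.1 for i in range(60)]
summaries = consolidate(raw, window=20)

print(len(raw), "raw readings ->", len(summaries), "summary records")
```

A window of 20 readings gives a 20:1 reduction in records; a larger window, or sending only on significant change, would reduce traffic further.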

The data in Table 1 is the basis for Figure 1 above, and shows that providing Edge computing with a 20:1 reduction in data transmitted would reduce the costs to about 1/3 the cost of doing all the processing in the cloud.
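The relationship between the N:1 ratios and the percentage reductions used in this research, and the "about 1/3" cost comparison, can be checked with a short calculation. The function names are hypothetical; the dollar figures are the rounded totals quoted for Figure 1.

```python
def reduction_percent(ratio):
    """Convert an N:1 data reduction ratio to a percentage reduction."""
    return (1 - 1 / ratio) * 100


def cost_fraction(edge_plus_cloud, cloud_only):
    """Edge + cloud cost as a fraction of the cloud-only cost."""
    return edge_plus_cloud / cloud_only


print(f"20:1  -> {reduction_percent(20):.0f}% reduction")
print(f"100:1 -> {reduction_percent(100):.0f}% reduction")
print(f"cost fraction: {cost_fraction(29_000, 81_000):.2f}")
```

A 20:1 ratio corresponds to the 95% reduction assumed in Figure 1, and the quoted 3-year totals give a fraction a little over one third.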

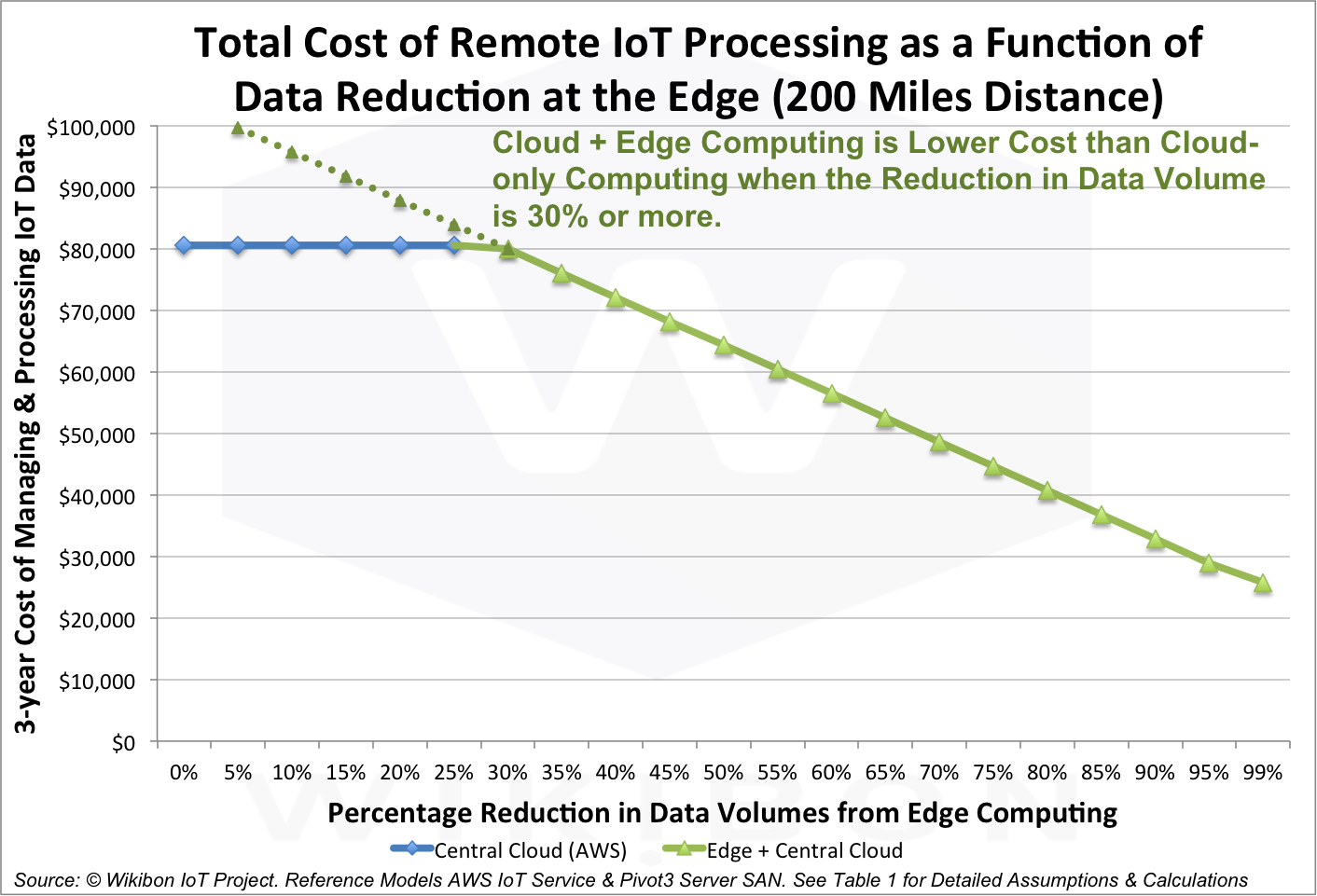

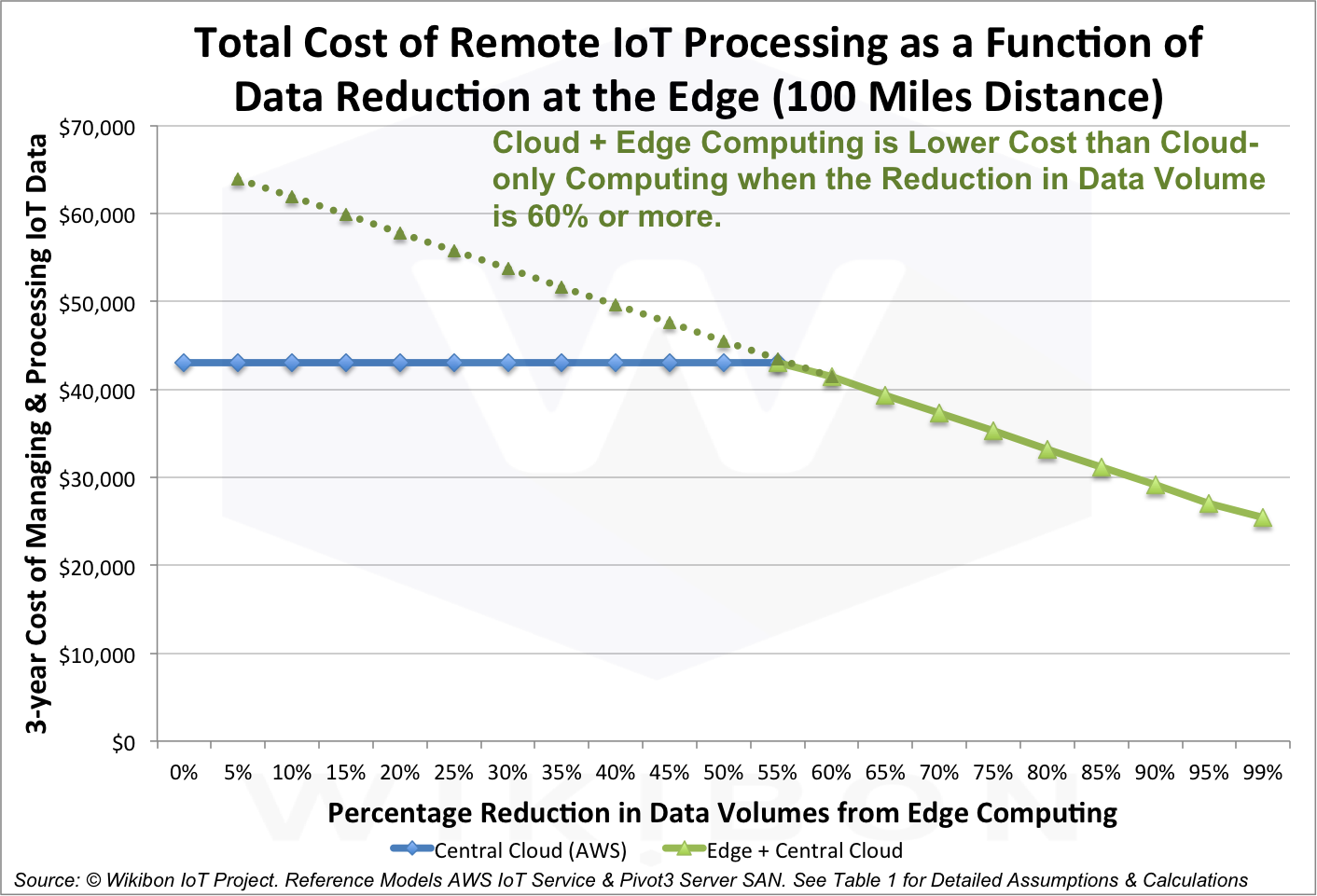

Figure 3 takes the data in Table 1 and shows the cost results for Edge reduction percentages from 0% to 99%. The cross-over point is a 30% reduction. Figure 4 in the Footnotes shows the calculations for a 100-mile distance between the Edge and the datacenter. Even with much shorter distances, the advantage of Edge computing is likely to be overwhelming.

Source: © Wikibon IoT Project. Reference Models AWS IoT Service & Pivot3 Server SAN. One assumption is a distance of 200 miles between the Edge computing and the Datacenter. See Table 1 for additional Detailed Assumptions & Calculations.

This model is only an initial deployment, with minimal data generated. The Edge computing at higher reduction levels of 100:1 or higher would allow much richer data to be gathered and processed at the Edge, and provide a safer, more reliable and lower cost solution.
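The cross-over behaviour in Figure 3 can be approximated with a simple linear model. This is a sketch under loud assumptions: it treats the whole cloud-only cost as proportional to traffic, treats the Edge system as a fixed cost, and backs that fixed cost out of the quoted 30% cross-over rather than taking it from Table 1. The names `EDGE_FIXED_COST` and `edge_plus_cloud_cost` are hypothetical.

```python
CLOUD_ONLY_COST = 81_000  # 3-year cloud-only cost quoted for Figure 1 (USD)
CROSS_OVER = 0.30         # cross-over reduction quoted for 200 miles

# Assumption: the fixed Edge cost is whatever makes the two approaches
# cost the same at the quoted cross-over. It is NOT taken from Table 1.
EDGE_FIXED_COST = CROSS_OVER * CLOUD_ONLY_COST


def edge_plus_cloud_cost(reduction):
    """Linear model: fixed Edge cost plus cloud cost on the remaining traffic."""
    return EDGE_FIXED_COST + (1 - reduction) * CLOUD_ONLY_COST


for reduction in (0.0, 0.30, 0.95, 0.99):
    cost = edge_plus_cloud_cost(reduction)
    print(f"{reduction:.0%} reduction: ${cost:,.0f}")
```

Under these assumptions the 95% case comes to a little over $28,000, in the same range as the figure of about $29,000 quoted earlier, which suggests the published results are roughly linear in the reduction percentage.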

Advantages of the Cloud-only and Edge + Cloud Models

The advantages for managing the sensors and video streams using a cloud-only model include:

- Faster initial programming

- Faster initial testing

- Lower hardware acquisition cost in the cloud

- No maintenance of local “Edge Computing”

- Better integration of data with other non-connected data streams (e.g., comparison of faces with “suspect” database in the same cloud)

- Better initial availability of data about sensors across different sites (value to sensor manufacturers).

This cloud-only model works well for single sensor systems in multiple different locations, where there are low data rates and already existing communication capabilities. An example is the Google NEST system for managing home heating.

The advantages for managing the sensors and video streams using an Edge computing plus cloud model include:

- Much lower bandwidth requirements

- Significantly lower overall costs

- Greater availability from local automation and local autonomy

- Better advanced real-time functionality from integration of local sensors

- Easier communication with multiple clouds (e.g., comparison of faces using a different SaaS cloud or clouds)

- Ability to use a lower-cost consumer commodity ecosystem with sensors based on current consumer mobile management of sensors

- Earlier adoption of new sensors from the consumer mobile commodity ecosystem

- Earlier adoption of new sensors with much higher data rates

- Less complex and real-time local management of sensors (resetting, managing drift, etc.)

- Less complex ability to test and manage local sensors

- Higher M2M functionality based on lower latencies

The bottom line is that the cloud-only approach is likely to allow faster initial deployment of sensors with limited data rates. However, moving on from this approach would require replacing most of the cloud-only application programming with a cloud service that supports Edge computing.

Conclusions

Wikibon concludes that the Internet of Things will develop with an Edge + cloud computing architecture for the vast majority of sensor implementations. The local Edge computing will almost always include a time-series database to assist in data reduction and local and cloud processing. The majority of the IoT processing will be machine to machine (M2M), without the slow end-user response times present in most client/server systems. M2M interaction will demand very low response times from all the compute system elements, which will not be possible over long distances.

Wikibon believes that AWS is philosophically opposed to Edge computing, but will eventually support Edge models. Wikibon believes that other cloud services (Microsoft Azure, Google, Apple, General Electric and many others) are likely to provide superior Edge computing + cloud solutions initially.

Wikibon believes that Edge computing will be a converged software-led model. There are two distinct types of solution in this space:

- Edge systems based on Intel processors and a new sensor ecosystem.

- Edge systems based on ARM processors, utilizing the existing mobile sensor ecosystem. Major potential suppliers are Android systems from Google and Samsung, and iOS systems from Apple.

It is unclear which model will dominate. There is certainly room for each solution type to be dominant in different segments of the market, with (say) ARM being dominant in consumer “set-top box” type home systems and (say) Intel being dominant in industrial sites. Wikibon believes there will be very fast reduction in the cost of Edge compute systems, with set-top box type home systems coming in at well below $1,000 in the near future.

Wikibon believes that projections based on all sensor traffic going over the internet are unlikely to be realized in the long run. Edge IoT computing is very likely to significantly reduce the amount of internet traffic required.

Wikibon believes there is enormous potential for higher level analytics and automation to be processed in the cloud, with the ability to analyze and optimize IoT inputs from many different sources.

Action Item:

As a result of this research and other work, Wikibon believes IoT systems will be safer, more reliable, lower cost and more functional using an Edge computing plus Cloud (private or public) approach. Wikibon advises all senior management responsible for IoT implementations to assume that an Edge plus cloud architecture will be required, and to ensure that IoT RFPs mandate vendors to provide a robust Edge/Cloud architecture for private and public clouds.

Footnotes:

Figure 4 shows a break-even of 60% data reduction when the distance between the Edge and datacenter is 100 miles. The normal reduction achievable with Edge computing is 95% to 99% or higher (20:1, 100:1 or higher).

Source: © Wikibon IoT Project. Reference Models AWS IoT Service & Pivot3 Server SAN. One assumption is a distance of 100 miles between the Edge computing and the Datacenter. See Table 1 for additional Detailed Assumptions & Calculations.
