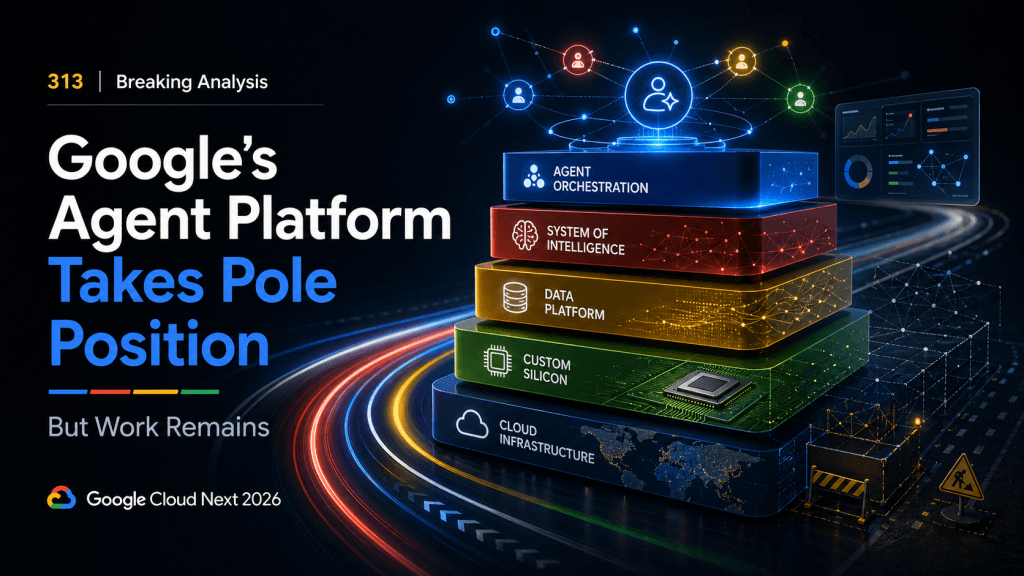

313 | Breaking Analysis | Google’s Agent Platform Takes Pole Position but Work Remains

Enterprises are rapidly moving from an AI that answers questions and generates content to one that performs tasks and takes actions. According to Thomas Kurian, CEO of Google Cloud, this shift requires a fundamentally different approach to infrastructure and software. Google’s view is that only a tightly integrated portfolio – spanning silicon to applications and everything in between – can effectively support this transition.

From Answers to Actions: Why Google’s Full-Stack AI Bet Is Gaining Momentum – And What Still Needs to Happen – Reflections from an AMA with Thomas Kurian


Autonomous Workspaces Push AI Into the Operational Core of Enterprise IT

AI-driven autonomous workspaces shift IT from management to operations, improving security, lifecycle control, and user experience.

312 | Breaking Analysis | As AI Powers Google, What’s Next for Google Cloud

The agentic era is forcing a reset in enterprise architecture. Agents taking action go far beyond just analyzing data living in lakehouses. Agents acting on behalf of humans, continuously and at machine scale, bring new architectural requirements to the enterprise. The so-called “modern data stack,” as most organizations know it, has become a sort of “new legacy.” Organizations can no longer rely on stitched-together systems, fragmented governance, batch pipelines, and historical security boundaries. As we move from human-scale dashboards to agent-scale execution, fragmentation becomes an operational and compliance risk.

This is where we believe Google has an underappreciated advantage. Our research indicates the winning architectures in the agentic era will be the ones that operate as a coherent, end-to-end system, where the model, the cognitive engine, and the infrastructure are tightly integrated and share a single trusted boundary, consistent security controls, and an efficient cost structure that can generate tokens in volume but doesn’t collapse under thousands of agent interactions per minute. This is the premise behind our Google thesis. We believe Google is in a strong position to build on decades of infrastructure and data excellence and push toward an AI-powered cloud that goes beyond a reactive system of intelligence to one that takes action at scale. Essentially, we see Google as one of the companies best positioned to execute on our vision of delivering a real-time digital representation of an enterprise: one that blends the power of generative AI with trusted and consistent determinism to deliver real-time actions that leverage both structured and unstructured data and can execute transactions at scale.

Non-Human Identity and AI Automation Are Redefining Enterprise Security Models

AI, automation, and non-human identities are reshaping enterprise security, shifting focus to identity governance and continuous control.

AI Infrastructure Breaks Away From Cloud-Native Models as Enterprises Chase Production Scale

AI adoption grows, but production lags. Explore how AI infrastructure, GPU efficiency, and unified platforms enable enterprise scale.

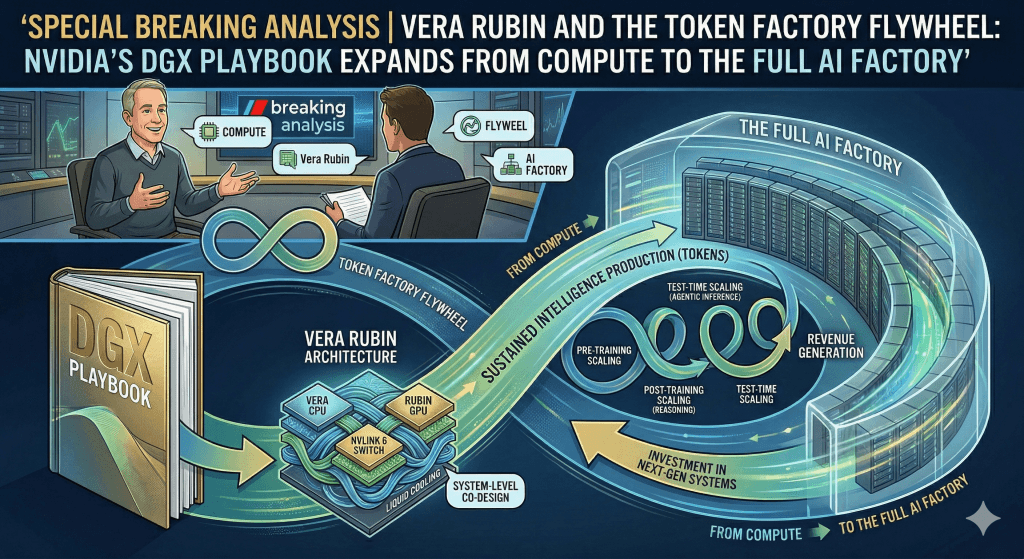

Special Breaking Analysis | Vera Rubin and the Token Factory Flywheel: Nvidia’s DGX Playbook Expands from Compute to the Full AI Factory – In Conversation with Nvidia’s Charlie Boyle

We believe GTC ’26 marked another step-change in the industrialization of AI. Nvidia is moving well beyond “faster GPUs” toward a full-stack AI factory model designed to lower the cost of tokens and expand what customers can build and monetize. We sat down at GTC with Charlie Boyle, VP of DGX at NVIDIA, who tied nearly every major announcement back to a laser-like focus on more tokens at lower cost, delivered through a rack-scale architecture that integrates compute, networking, storage, and power controls.

AI Software Development Shifts From Code Generation to Governed Application Delivery

AI shifts from code generation to governed delivery as enterprises prioritize security, observability, and production readiness.

311 | Breaking Analysis | The Agentic Gap: Vendors Sprint, Enterprises Crawl

Geopolitical dislocations are ripping through the stock market and are filtering down to IT budgets in the form of increased uncertainty. It seems that every quarter of budget optimism is followed by some external event that causes organizations to tighten their belts. Specifically, we’ve seen the increased momentum in January CIO spending sentiment pull back as war, oil prices, the threat of inflation, and even the prospect of Fed tightening now loom larger. While big tech players continue to spend massively on CAPEX, and the genuine enthusiasm from this month’s Nvidia GTC and RSAC events is still being felt, mainstream enterprises are once again expressing caution in their spending intentions. In addition to economic and world affairs, AI success still eludes most mainstream organizations. Our observation is that the tech industry is in the third inning of the AI wave, which started in earnest in the middle of the last decade with DeepMind and other significant research milestones that led to the ChatGPT moment and subsequent ones like Claude Code and OpenClaw. Yet organizations are still in the first inning. The data suggests that while virtually all firms are leaning into AI, those realizing ROI at scale remain the minority. While leading thinkers like Jensen Huang advise not focusing on ROI and letting innovation flourish irrespective of hard dollar returns, the reality is that in the land of enterprise customers, tangible returns and risk management remain key governors of spending.