Introduction

Eighteen months ago Wikibon published research on virtualizing Oracle entitled Damn the Torpedoes: Virtualize Oracle as Fast as Possible. Wikibon recommended that organizations not “…wait for Oracle to announce support for virtualization but to take an aggressive yet pragmatic approach, moving towards virtualization as the standard for all production systems.” [Note: The research discusses virtualization generally and non-Oracle hypervisors specifically.]

Wikibon has checked in again with its community, conducting more than 15 hours of in-depth interviews to find out whether any practitioners had been torpedoed by virtualizing Oracle. Wikibon found widespread virtualization of test, development, and production systems, with Oracle taking a more pragmatic approach to support. Often users had a small skirmish while the Oracle sales teams tried to suggest Oracle VM and/or Exadata, followed by resigned acceptance. The reaction to Exadata is summed up by a senior IT executive:

“…if we go down the Exadata path, now my DBA becomes my storage and operating system engineer. And that is a difficult pill to swallow. What we have done, however, is to take the Exadata concept – the x86 server, the storage layer and the high-speed interconnect integration – and we have built our own architectural model along those lines.”

Levels Of Oracle Database Services

Our research shows that Oracle database customers are implementing virtualization as a means of transforming their IT infrastructure to offer an “as-a-service” capability. Wikibon found three good service approaches to virtualizing Oracle:

- Hypervisor-only Service: Using hypervisor services to recover an Oracle instance on another VM if necessary (e.g., VMware vSphere SRM to another server in the event of a server failure);

- This service is suitable for test and development and for production applications with few users who can tolerate short outages (99.9% availability).

- Hypervisor with Oracle Clusterware or other cluster software:

- This service is suitable for business-critical production applications with high-availability requirements (99.95% availability).

- Hypervisor with full Oracle RAC:

- This approach is suitable for mission critical production applications, which cannot be down for any length of time (99.99+% availability).

[Note: Availability figures cited are from an application/user point of view and NOT a reflection of infrastructure availability (i.e., the server or storage array).]
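To put the three availability targets in concrete terms, the percentages can be converted into the maximum downtime they allow per year. The sketch below is illustrative only: the function name and the 365-day year are assumptions, and the service labels are taken from the list above.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years (assumption)


def annual_downtime_hours(availability_pct: float) -> float:
    """Maximum downtime per year implied by an availability percentage."""
    return (1 - availability_pct / 100) * HOURS_PER_YEAR


# Availability targets quoted for the three service levels.
service_levels = {
    "Hypervisor-only": 99.9,
    "Hypervisor with Oracle Clusterware": 99.95,
    "Hypervisor with full Oracle RAC": 99.99,
}

for name, pct in service_levels.items():
    hours = annual_downtime_hours(pct)
    print(f"{name}: {pct}% -> {hours:.2f} hours/year")
# Hypervisor-only: 99.9% -> 8.76 hours/year
# Hypervisor with Oracle Clusterware: 99.95% -> 4.38 hours/year
# Hypervisor with full Oracle RAC: 99.99% -> 0.88 hours/year
```

Each step up the service ladder roughly halves, and then cuts by a factor of five, the outage time that users can be exposed to in a year.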

The three approaches allow greater flexibility in choosing the appropriate hardware and software to meet the necessary recovery objectives for Oracle applications. Some installations used all three approaches, others only the first and/or second approach. For larger organizations, Wikibon recommends that all three approaches be offered as Oracle services.

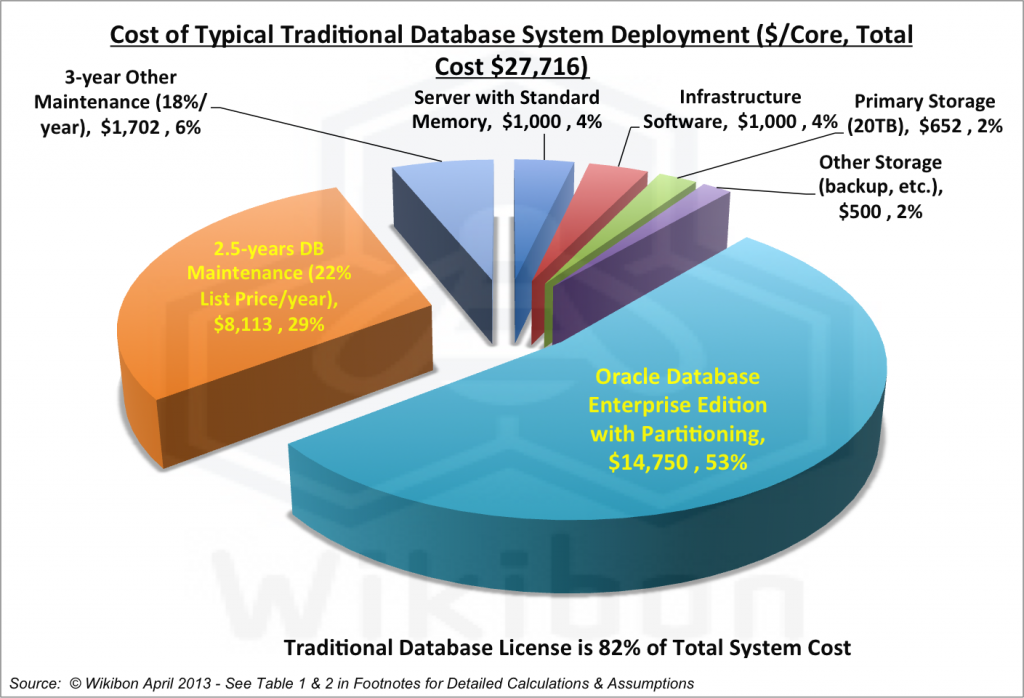

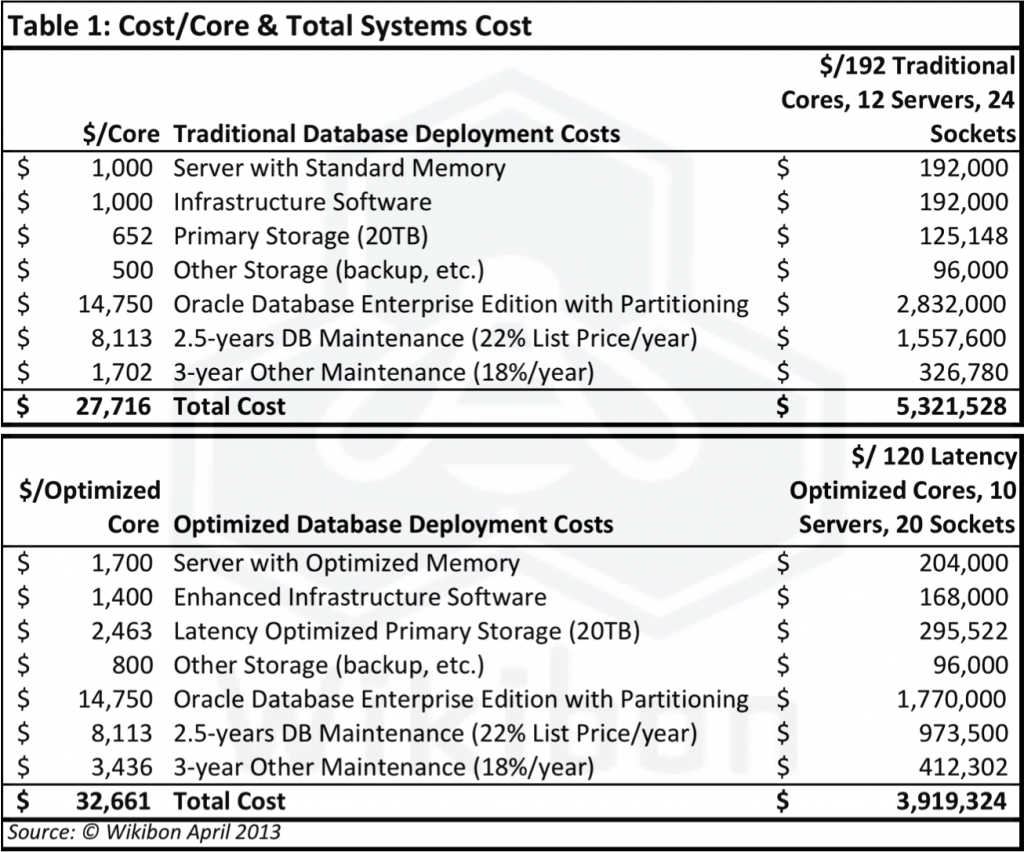

The Cost Of Deploying An Oracle Traditional “Core”

Figure 1 shows the cost of deploying a single traditional “core” of processing, including the cost of the processors, storage, infrastructure software, and database software (Oracle Enterprise Edition). This would be typical of database services 1 & 2 above. Service 3 is more expensive because of the increased cost of the Oracle RAC feature. The key conclusion from Figure 1 is that the three-year cost to deploy a core is $27,716 and the cost of Oracle database software and maintenance is 82% of the total ($22,863).

Wikibon’s research found that smaller organizations understood this well. While the principle is understood by larger organizations, in practice the budget for Oracle database products is usually held within the application development department. Operations management is responsible for the remaining 18% of the cost and often believes that its job is to optimize that 18%. So, there is little incentive to tackle this problem. As a result, we consider optimization to be both a technical and an organizational challenge.
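The cost split reported for Figure 1 can be checked with a few lines of arithmetic. This sketch uses only the two dollar figures quoted above; the variable and function names are illustrative.

```python
# Three-year figures quoted for one traditional "core" (Figure 1).
TOTAL_COST_PER_CORE = 27_716
ORACLE_SOFTWARE_COST = 22_863  # Oracle database software and maintenance


def share_pct(part: float, total: float) -> float:
    """Return `part` as a percentage of `total`."""
    return 100 * part / total


oracle_share = share_pct(ORACLE_SOFTWARE_COST, TOTAL_COST_PER_CORE)

# Everything that is not Oracle software: processors, storage,
# and infrastructure software.
non_oracle_cost = TOTAL_COST_PER_CORE - ORACLE_SOFTWARE_COST
non_oracle_share = share_pct(non_oracle_cost, TOTAL_COST_PER_CORE)

print(f"Oracle software: ${ORACLE_SOFTWARE_COST:,} ({oracle_share:.1f}%)")
print(f"Everything else: ${non_oracle_cost:,} ({non_oracle_share:.1f}%)")
# Oracle software: $22,863 (82.5%)
# Everything else: $4,853 (17.5%)
```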

But as we will see below, our model shows that by optimizing “core” components for performance, there is a path for organizations to minimize the overall cost of deploying Oracle database applications.

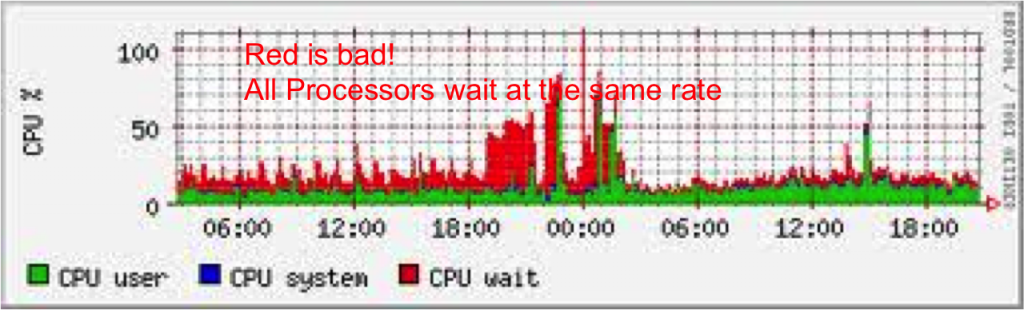

Optimizing For CPU IO Wait Time

Wikibon’s deep discussions with database and infrastructure professionals led to our creating a technical and financial model to illustrate the correct virtualized configuration that would optimize the total cost rather than just the infrastructure cost. One of the largest impacts on virtualized server cost is CPU IO wait time, as shown in Figure 2. The red area in the chart shows the impact of CPU IO wait time, which is the time waiting for IOs to complete. This goes up significantly in a virtualized environment because of the “blending” of IO from different virtual machines, which makes the IOs look more random.

The two most effective ways of reducing CPU IO wait time are:

- Reducing the number of IOs that need to access persistent storage (i.e., disk) by increasing the server RAM. The size of RAM, however, is limited by two issues:

- Recovery time in the case of server failure becomes longer the larger the memory;

- The server L1 & L2 cache hit rates can be reduced if the RAM is too large, which can reduce the throughput of the server.

- Reducing the IO latency and variance by improving the IO subsystem. This is especially important in database applications with high write loads and complex schemas. The ultimate throughput constraint on databases is the locking rate, and throughput can be significantly increased by improving:

- IO latency, the average response time,

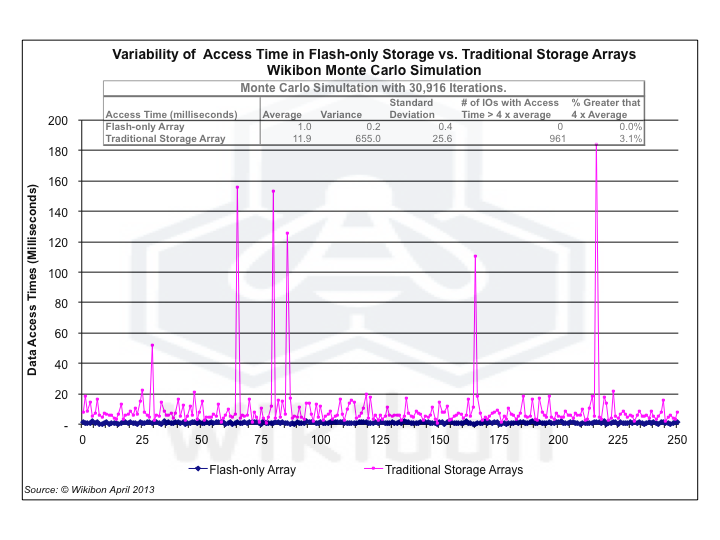

- IO variance. Long occasional IO times (e.g., a cache miss to disk, or a disk recovery) can have a significant impact on locking, as all the IOs waiting for locks to resolve will wait as well. Figure 5 in the footnotes illustrates the difference in variability between flash and traditional arrays.

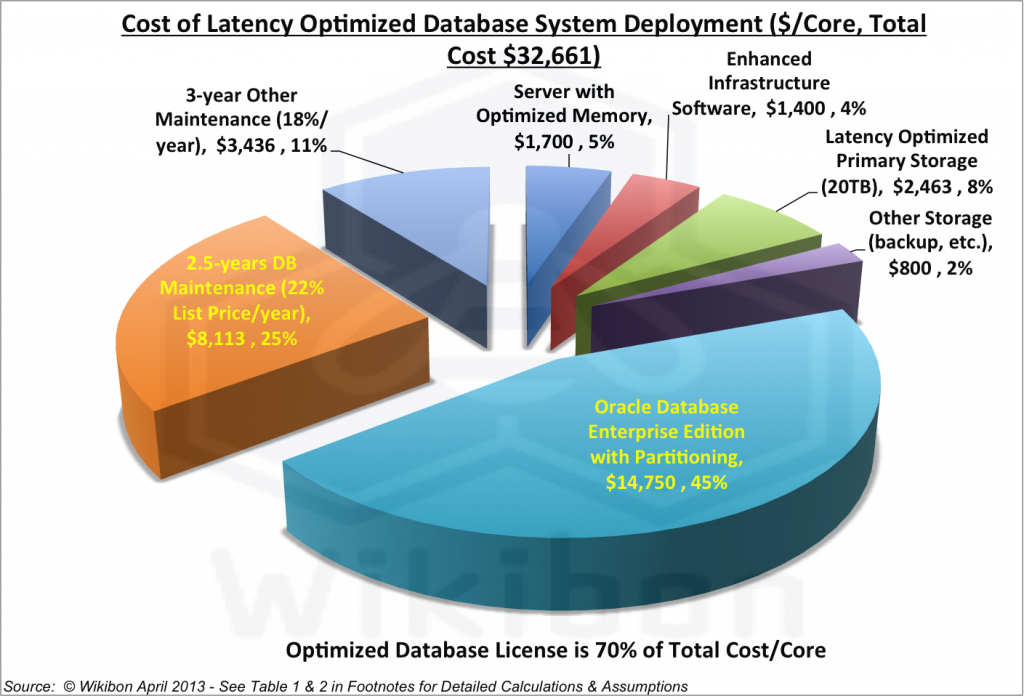

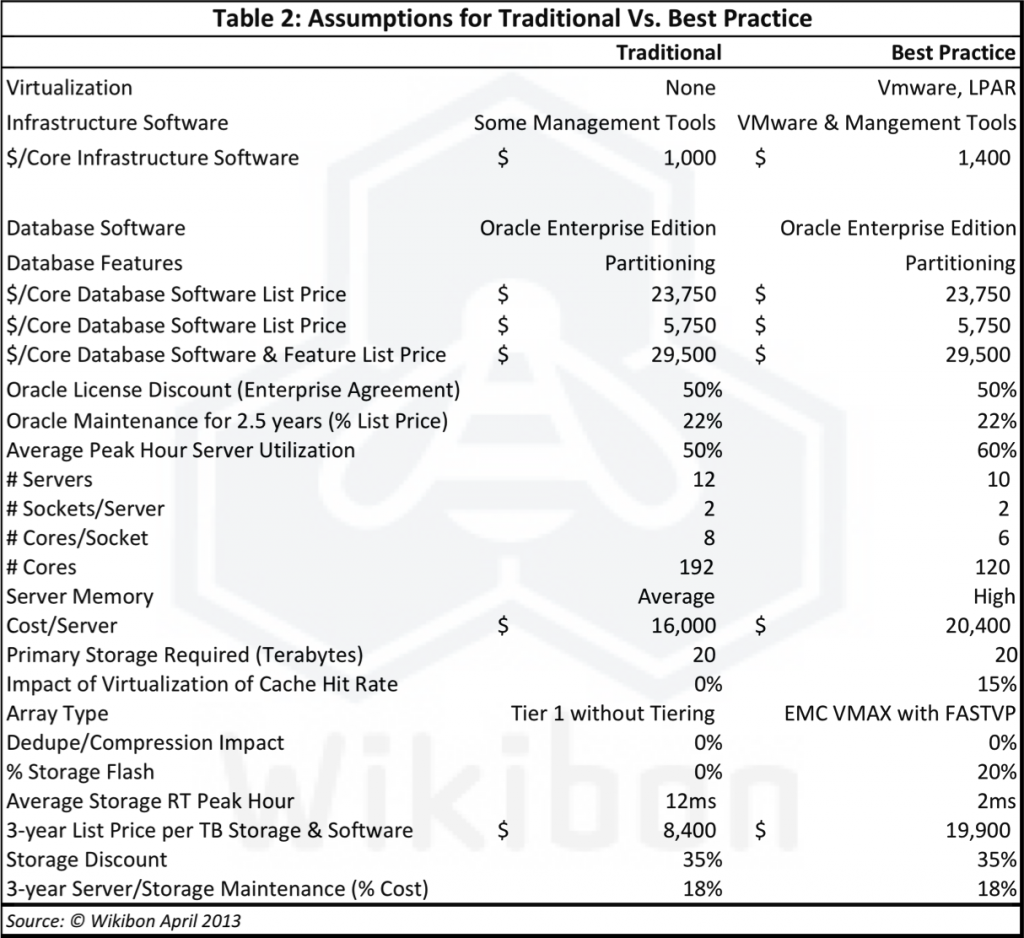

Figure 3 shows the “core” cost when the system is optimized for overall cost and performance. The processor cost is increased to include more RAM and to improve the balance between cores and clock speed. Database processing is almost always applied to mission- or business-critical applications, so our model assumes that a Tier 1 storage array is deployed in both the traditional core and the optimized core. Traditional storage IO times are about 10-15ms. The cost of primary storage has been doubled to reduce the latency to under 2ms and to reduce variance. The reduction is achieved by ensuring that the important IO is in flash memory in the primary storage.

Because IO variance is very important for database IO (see Impact of Flash-only Arrays on Storage Access Time Variability for details of the Wikibon research), caching approaches to reducing IO latency are not sufficient: caching increases IO variance significantly and applies only to read IOs, not to the write IOs critical to database performance. The model assumes that a tiered storage approach is taken, where critical data can be pinned in flash storage if required.

EMC’s VMAX was used as the Tier 1 reference model because it has the highest share of mission-critical Oracle storage. In addition, FastVP was assumed in the optimized configuration to enable data to be pinned to flash. Overall, the percentage of flash in optimized primary storage is assumed to be about 20%. Tables 1 & 2 in the footnotes show the detailed costings and assumptions behind Figures 1 & 3.

The additional cost of server and storage enhancements increased the cost/core from $27,716/core to $32,661/core, an increase of 17.8%. However, as shown in Figure 3, the overall cost is significantly reduced because the number of cores can be reduced from 192 to 120, a decrease of 37.5%.
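The two percentages above, and the reason the second outweighs the first, can be reproduced as follows. This is a minimal sketch: the helper name is illustrative, and the combined figure assumes that total cost scales as cost per core times number of cores.

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change from `old` to `new` (negative means a decrease)."""
    return 100 * (new - old) / old


cost_per_core_change = pct_change(27_716, 32_661)  # cost/core goes up
core_count_change = pct_change(192, 120)           # core count goes down

# Assumption: total cost = cost per core x number of cores, so the two
# effects multiply rather than add.
combined_factor = (1 + cost_per_core_change / 100) * (1 + core_count_change / 100)
net_change = 100 * (combined_factor - 1)

print(f"Cost per core: {cost_per_core_change:+.1f}%")
print(f"Core count:    {core_count_change:+.1f}%")
print(f"Net effect:    {net_change:+.1f}%")
# Cost per core: +17.8%
# Core count:    -37.5%
# Net effect:    -26.3%
```

Because the Oracle license cost is carried per core, a smaller number of more expensive cores still lowers the total.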

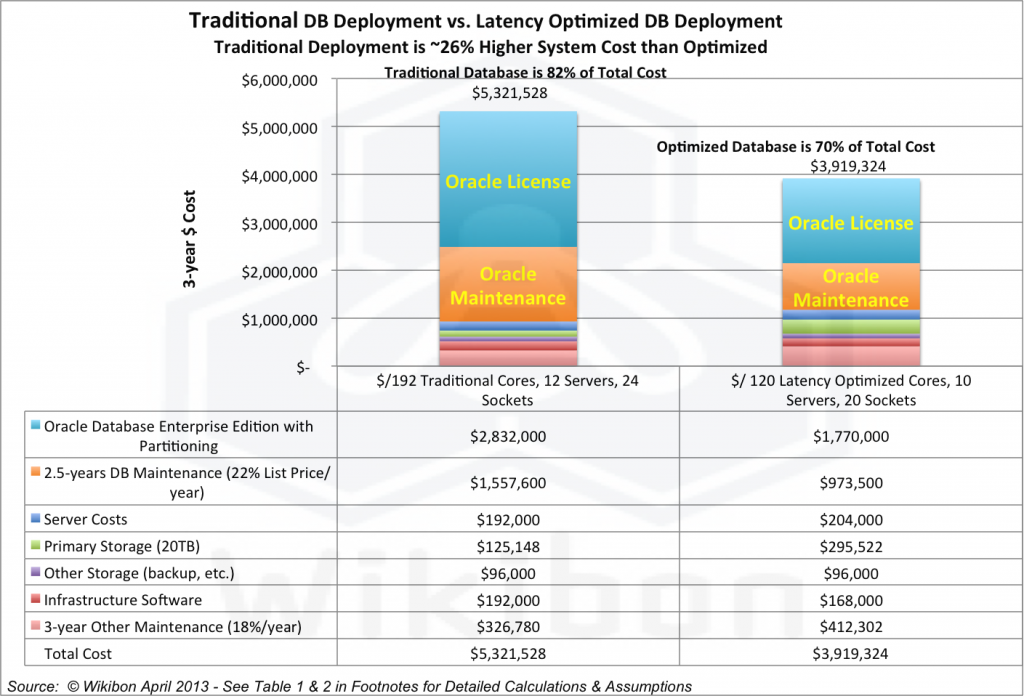

The Cost Impact Of Optimizing Database Infrastructure

The financial and technical model compares the cost of deploying a traditional infrastructure with the cost of deploying an optimized infrastructure for a level 2 database service, i.e., a hypervisor with cluster software. Our model assumes that the hypervisor is extended-function VMware and that the cluster software is Oracle Clusterware.

Our model shows that the reduction in the number of cores required by optimizing the infrastructure is significant. The traditional approach assumes the need for 192 cores (12 servers with 2 sockets per server and 8 cores per socket). By reducing the IO response time to <2ms and ensuring the IO variance is as low as possible, the IO wait time is drastically reduced, and the impact of occasional IO storms on database locking is significantly less. Figure 5 in the footnotes shows the difference in variability between flash and traditional disk-based arrays. The optimized structure would require 120 cores (10 servers, 2 sockets per server and 6 cores per socket).

Figure 4 shows the financial impact of this approach: the traditional deployment of 192 cores is about $1.4 million more expensive over 3 years than an optimized configuration. The $1.4 million difference is about 26% of the traditional three-year cost.
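The headline comparison can be rebuilt from the server configurations and per-core costs given earlier. The sketch below is not Wikibon's model: the `Deployment` class is hypothetical, and it assumes the three-year total is simply the number of cores multiplied by the three-year cost per core.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    """Hypothetical container for one configuration in the comparison."""
    name: str
    servers: int
    sockets_per_server: int
    cores_per_socket: int
    cost_per_core: int  # three-year cost per core, USD

    @property
    def cores(self) -> int:
        return self.servers * self.sockets_per_server * self.cores_per_socket

    @property
    def three_year_cost(self) -> int:
        # Assumption: total cost scales linearly with the number of cores.
        return self.cores * self.cost_per_core


traditional = Deployment("traditional", 12, 2, 8, 27_716)
optimized = Deployment("optimized", 10, 2, 6, 32_661)

extra_cost = traditional.three_year_cost - optimized.three_year_cost
saving_pct = 100 * extra_cost / traditional.three_year_cost

for d in (traditional, optimized):
    print(f"{d.name}: {d.cores} cores, ${d.three_year_cost:,} over 3 years")
print(f"Difference: ${extra_cost:,} ({saving_pct:.1f}% of the traditional cost)")
# traditional: 192 cores, $5,321,472 over 3 years
# optimized: 120 cores, $3,919,320 over 3 years
# Difference: $1,402,152 (26.3% of the traditional cost)
```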

Organizational Challenges To Optimizing Infrastructure Costs

The organizational challenges to deploying a virtualized and optimized database service are significant. The traditional approach is to take each application as a project, driven by the application group and justified by the line of business. The estimates for database selection, software licenses and infrastructure required are made and signed off for each project. IT Operations is often only peripherally involved until testing and implementation. The database administrators are tasked with ensuring that these critical applications continue to run.

However, this approach is very costly and provides an environment which is more difficult to change and less available. Best practice is now to provide a virtualized database service or services to support the databases. The benefits of this type of approach include:

- Ability to optimize the use of very expensive software resources such as Oracle databases, and ensure that licenses are utilized or redeployed efficiently.

- Ability to use hypervisor services such as vMotion, Storage vMotion and SRM to provide lower-cost, high-availability environments (99.95%) for applications that can tolerate a few short outages per year.

- Ability to test systems before purchasing new licenses and ensure that only what is required is purchased “just-in-time”.

- Easier enablement of testing and change to the infrastructure, because of separation of virtual machines from physical hardware.

The deployment of 10 physical servers with 120 cores could be accomplished as three groups of three servers (with one backup server), with Oracle instances running under VMware on each. A primary VM on one group would have a secondary VM on another group.

Conclusions And Recommendations

Wikibon concludes that servers have become powerful enough and the virtualization management software stable enough that best practice for deploying x86 Oracle databases is to create an optimized and virtualized server and storage infrastructure for each level of Oracle service required. Pragmatically, IT organizations should start at the simplest service but have a clear objective that all future applications requiring Oracle services should be deployed on the Oracle database service. CIOs will need to adjust the budget and responsibilities within IT for operational database systems, so that there are clear responsibilities for optimizing the infrastructure and utilization of Oracle licenses, and for meeting the availability and performance service level agreements. CIOs should put pressure on ISVs to ensure that their applications are fully supported running in a virtualized database environment and that all future applications or application upgrades are fully supported. The time to ensure that Oracle agrees to support virtualization for its applications is at the time of selection, not deployment.

IT operations should ensure that the best quality servers are used for the database services and that flash is aggressively used to minimize IO response times and variance. DBAs and application managers should have access to the database performance monitoring tools and reports, especially IO performance.

Many of the organizations surveyed by Wikibon did not have tools to determine where Oracle products were deployed. CIOs should put a priority on ensuring they have a complete picture of which Oracle licenses are deployed where and which applications can be switched to other databases; this will assist organizations in their negotiations with Oracle. Software from iQuate and others can support this initiative and will more than pay for itself when negotiating with Oracle.

CIOs should either take individual responsibility for establishing an end-to-end strategy for negotiating with Oracle or assign an experienced senior manager to manage the progress and the outcome. Wikibon is working on updating its advice on negotiating with Oracle.

Action Item: The single most difficult change required to achieve Oracle high availability optimization is aligning budget responsibilities for virtualized Oracle Database Services. CIOs should assign clear responsibility at a senior level for:

- Determining the different Oracle service offerings required by the applications, including levels of high availability, development and test, the recovery objective SLAs met by each level of service, and compliance objectives;

- Determining the impact of Oracle application unavailability;

- Defining the business cases for levels of Oracle database services and defining the recovery objectives in line with the costs and benefits by application;

- Determining the overall budget for each Oracle database service, including licensing, local and remote infrastructure hardware & software, & overall administration costs;

- Separating out the server infrastructure for Oracle database services to minimize Oracle license costs.

Establishing the Oracle database service as the default and putting in place a process for Oracle license negotiation will lead to very significant savings for IT and the organization.

Footnotes:

Source: Wikibon, April 2013


Source: Wikibon 2013. The results in the table are based on a Monte Carlo model of storage variability. The chart is a representative snapshot illustration of the first 250 points in a simulation of 30,915 iterations; the lines connecting the points are there to help differentiate the shape of the point distribution, and the area underneath the line has no statistical meaning.

Figure 5 shows a Monte Carlo simulation of IO response time and variability for flash storage vs traditional HDD storage. The “snapshot” chart of the first 250 points in a simulation of 30,916 iterations shows the increase in variability of IO with HDD. Because of the high degree of serialization in most database applications, high variability in IO leads to much higher and much more variable lock release times, increased timeouts, and a significantly degraded user experience and productivity. For databases in general, variability (in this case, measured as the percentage of IOs completing outside 4 times the average response time) is an important metric. In the case of flash, the percentage of IOs with access times greater than 4ms (4 x average of 1ms) is 0%. Performance is unlikely to be an issue in such an environment.

In the case of traditional hard disk, the percentage is about 3%. Database performance becomes compromised with a high percentage of long IO requests (e.g., over 48ms), because pauses of this type will hang up threads in the database, as well as pause an application server waiting for responses. High IO variance, together with high database complexity and high locking rates, leads to erratic performance and failure of threads, processes and applications. This in turn leads to higher operational and maintenance costs for database and application software, as well as longer function upgrade cycles.
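The variability metric described above (the share of IOs slower than 4 times the average response time) is straightforward to compute from simulated samples. The sketch below is not Wikibon's Monte Carlo model and will not reproduce its figures. The response-time distributions are assumptions chosen only for illustration: a narrow uniform spread around 1ms for flash and an exponential distribution with a 12ms mean for HDD.

```python
import random
from statistics import fmean


def tail_fraction(samples, multiple=4.0):
    """Fraction of samples greater than `multiple` times the sample mean."""
    threshold = multiple * fmean(samples)
    return sum(1 for s in samples if s > threshold) / len(samples)


def simulate(n=30_000, seed=1):
    """Return (flash_tail, hdd_tail) for assumed response-time distributions."""
    rng = random.Random(seed)
    # Assumption: flash response times fall between 0.5 ms and 1.5 ms.
    flash_ms = [rng.uniform(0.5, 1.5) for _ in range(n)]
    # Assumption: HDD response times are exponential with a 12 ms mean,
    # which gives a long tail of occasional slow IOs.
    hdd_ms = [rng.expovariate(1 / 12.0) for _ in range(n)]
    return tail_fraction(flash_ms), tail_fraction(hdd_ms)


flash_tail, hdd_tail = simulate()
print(f"Flash IOs slower than 4x average: {100 * flash_tail:.1f}%")
print(f"HDD IOs slower than 4x average:   {100 * hdd_tail:.1f}%")
```

Under these assumptions no flash IO can exceed the threshold, while a small but non-zero share of HDD IOs does. It is that tail, rather than the average, that holds up lock releases.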