Quantum computing is not arriving as a magic box that obsoletes classical computing. This is the key takeaway from the work we’ve done recently at theCUBE Research culminating at World Quantum Day, where we held conversations with leading national labs. The main message to our community is that quantum’s first role will be as a specialized accelerator inside a broader hybrid compute fabric. Moreover, while most mainstream organizations should not dilute their AI initiatives with “quantum fever,” post-quantum cryptography (PQC) should be top of mind and part of the planning process in 2026.

The enterprise narrative around quantum has been somewhat distorted by extremes and perhaps not well understood. On one side are the dismissers who see quantum as a perpetual science project. On the other side are the optimists who imply quantum will quickly break everything from encryption to drug discovery to AI training. In our opinion, both views miss the point.

Our data suggests quantum will usher in a novel architectural approach that reshapes computing, but in a hybrid fashion. CPUs did not disappear as GPUs became central to AI, although the enterprise platform will change dramatically, as we outlined in this week’s Breaking Analysis. On-prem installations did not disappear when cloud emerged, but cloud became the dominant operating model, which bled into on-prem systems. HPC is not disappearing as AI factories become the center of infrastructure spending. Instead, the stack is becoming more heterogeneous, more specialized and more dependent on software orchestration.

Quantum will follow a similar pattern, but unlike our prediction that AI factories will “absorb” x86 functional elements, we see quantum’s role as different.

Quantum will be a complement to existing systems

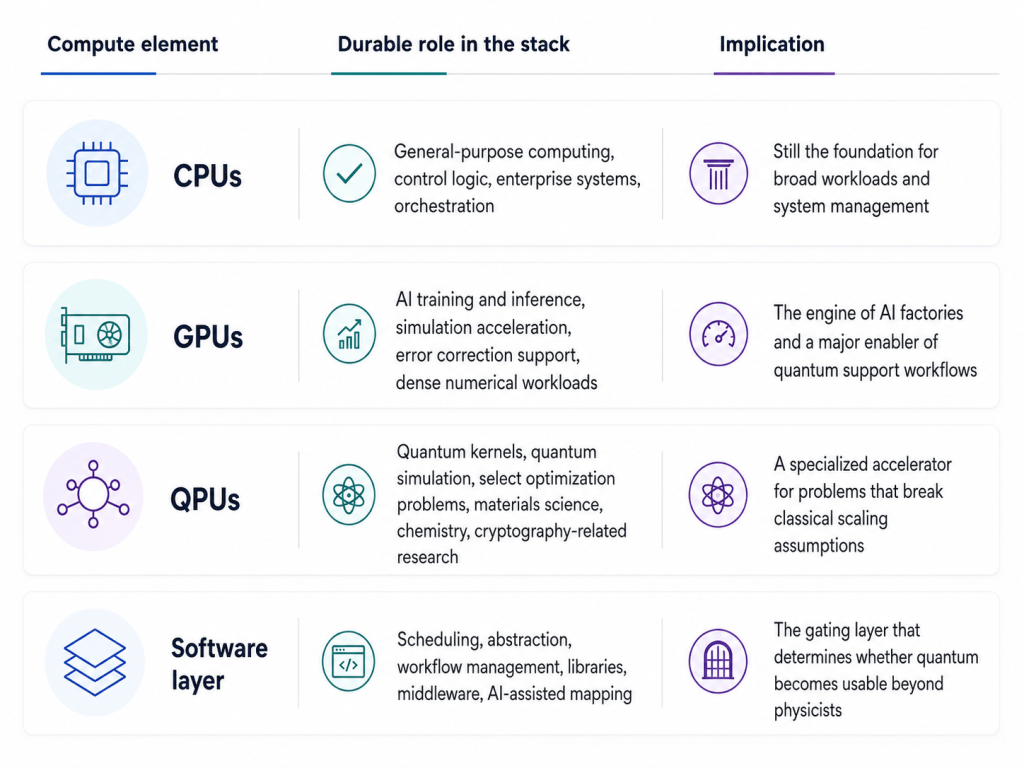

The practical model is not quantum versus classical. It is CPUs plus GPUs plus QPUs, coordinated through software, data movement, tooling, scheduling, AI-assisted control systems and domain-specific algorithms. Quantum will be used where it has structural advantage. Classical systems will continue to run the overwhelming majority of enterprise, scientific and AI workloads. The near-term opportunity is not to replace the stack. It is to make the stack quantum-aware.

The big picture: Quantum is becoming another tool in the heterogeneous computing toolbox

The most consistent theme across the World Quantum Day conversations is that quantum is not a zero-sum game. We framed the question directly in conversation with Paul Gillin – i.e. the industry often assumes one architecture will replace another, but the more likely reality is that CPUs, GPUs and QPUs come together to solve problems that were previously out of reach. Researchers are no longer looking at quantum as a replacement for traditional computer architectures, but as a complement to supercomputers and GPUs.

That view was reinforced in our visit to Oak Ridge National Laboratory. Tom Beck, section head for science engagement and acting group leader for Quantum HPC, described quantum as “the next frontier in high performance computing,” but not as a general-purpose substitute. Rather, he sees quantum initially as a specialized accelerator for certain computational workloads, with classical HPC handling some parts of the calculation and the quantum device handling the hardest quantum portion.

The implications are shown in the graphic below:

This framing is important because it changes the buyer question. The right question is not, “When should we buy a quantum computer?” The better question is, “Which parts of our workflows, security posture, R&D pipeline and data strategy should become quantum-aware?”

The market has moved from hype to workflow realism

Quantum has suffered from a timing problem. For years, credible people said the technology was “tantalizingly close,” only for the field to remain largely confined to labs, cloud experiments and specialized research settings. That has created fatigue in some parts of the market. But our research indicates the field has not stalled. It has shifted from grand claims about replacement to more pragmatic engineering around integration.

The national labs are the best place to see that shift because they are not treating quantum as a standalone miracle. They are asking a more practical question: Where does quantum belong in a real scientific workflow?

Oak Ridge provides a useful example. Frontier, the lab’s flagship supercomputing platform, is not being displaced by quantum. Instead, the discussion is about how quantum connects to systems such as Frontier. Beck described the challenge as a “game of transfer of information” — moving the right part of the calculation between the classical machine and the quantum device, and doing the hardest quantum portion on the QPU.

That sounds mundane, but it is profound. The hard problem is no longer just building a bigger quantum machine. It is deciding how to decompose a problem, route the right computational kernels to the right substrate, manage the data movement and then integrate the answer back into a larger workflow.
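To make the decomposition idea concrete, here is a minimal sketch of how a workflow might route its pieces to CPUs, GPUs or a QPU. Everything in it is an assumption for illustration: the `Kernel` fields, the `ROUTING` table and the `QPU_MAX_PAYLOAD_BYTES` cap are hypothetical and do not describe how ORNL or any scheduler actually works.

```python
from dataclasses import dataclass
from enum import Enum


class Substrate(Enum):
    CPU = "cpu"
    GPU = "gpu"
    QPU = "qpu"


@dataclass(frozen=True)
class Kernel:
    """One piece of a decomposed workflow (all fields are illustrative)."""
    name: str
    kind: str           # e.g. "io", "dense_linear_algebra", "quantum_simulation"
    payload_bytes: int  # data that must move to the chosen substrate


# Hypothetical routing table: which substrate suits which kind of kernel.
ROUTING = {
    "io": Substrate.CPU,
    "dense_linear_algebra": Substrate.GPU,
    "quantum_simulation": Substrate.QPU,
}

# Hypothetical cap standing in for the narrow data path into a QPU.
QPU_MAX_PAYLOAD_BYTES = 1_000_000


def route(kernel: Kernel) -> Substrate:
    """Pick a substrate, falling back to classical hardware when unsure."""
    target = ROUTING.get(kernel.kind, Substrate.CPU)
    if target is Substrate.QPU and kernel.payload_bytes > QPU_MAX_PAYLOAD_BYTES:
        # Too much data to push through the QPU interface; keep it classical.
        return Substrate.GPU
    return target


def plan(kernels: list[Kernel]) -> dict[Substrate, list[str]]:
    """Group kernel names by the substrate they were routed to."""
    schedule: dict[Substrate, list[str]] = {s: [] for s in Substrate}
    for kernel in kernels:
        schedule[route(kernel)].append(kernel.name)
    return schedule


if __name__ == "__main__":
    workflow = [
        Kernel("load_geometry", "io", 50_000_000),
        Kernel("mean_field_step", "dense_linear_algebra", 2_000_000_000),
        Kernel("active_space_energy", "quantum_simulation", 4_096),
    ]
    for substrate, names in plan(workflow).items():
        print(substrate.value, names)
```

The point of the sketch is that only the small, hardest kernel goes to the QPU, while the bulk of the data and compute stays on classical hardware.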

This is why we believe the quantum market will be shaped less by standalone quantum benchmarks and more by hybrid system performance. A QPU that cannot be integrated into real workflows will remain a lab instrument. A QPU that can be called by classical applications, orchestrated by HPC schedulers, assisted by AI and embedded in domain-specific libraries becomes part of the next computing platform.

There probably will not be a ChatGPT moment for quantum

One of the more important takeaways from the World Quantum Day setup conversation is that experts do not expect quantum to have a sudden consumer-facing “ChatGPT moment.” That is a critical distinction.

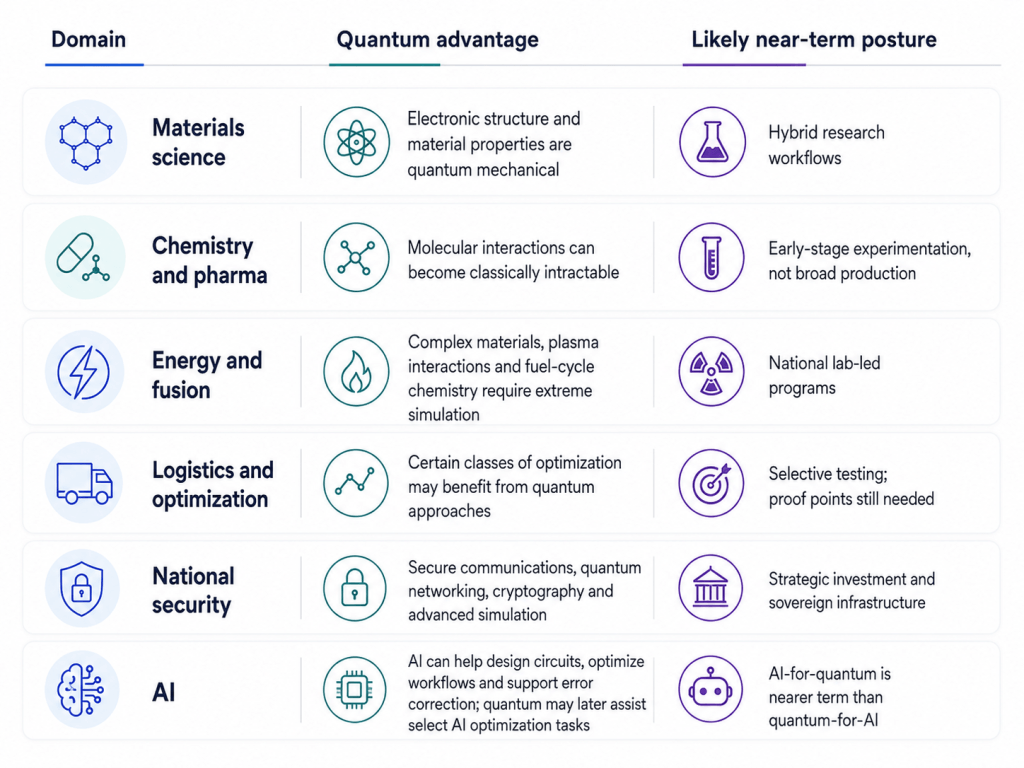

Generative AI became visible because anyone could type a prompt and see an answer. Quantum will not arrive that way. Its early value will be buried inside scientific workflows, materials simulations, chemistry models, logistics optimizations, national security applications, cryptographic transitions and possibly AI control systems. In many cases, the end user will not know or care that quantum was used. They will care whether the workflow delivered a better answer, faster insight, lower cost, lower energy intensity or a result that was previously infeasible.

This has implications for how the market should measure progress. Qubit count will matter, but it will not be sufficient. Error rates will matter, but they will not tell the whole story. The more important indicators will be logical qubit efficiency, I/O, workflow integration, algorithmic advantage, developer abstraction and the ability to reproduce useful results in real domains.

Quantum’s strongest early use case is modeling quantum nature

The best argument for quantum computing remains the one associated with Richard Feynman – i.e. use quantum systems to model quantum systems.

ORNL’s Tom Beck made this point. As a theoretical chemist and physicist, he sees a core application in modeling quantum nature – e.g. materials science, molecular behavior, electronic properties, time evolution of quantum systems and complex physical interactions that scale poorly on classical machines. He noted that classical supercomputers can realistically simulate only about 50 qubits before the state space becomes overwhelming, because the number of states grows as two to the nth power.

That underscores the essence of the opportunity in our view. Many of the world’s hardest scientific problems are not hard because we lack clever software. They are hard because the underlying state space is so huge it becomes impossible to model with classical computers. Quantum computing is attractive because it may eventually represent and manipulate certain kinds of quantum state spaces more naturally than classical machines.
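The exponential growth Beck describes can be made concrete with simple arithmetic. The sketch below estimates the memory needed to hold a full n-qubit state vector, assuming one double-precision complex amplitude (16 bytes) per basis state. That storage format is our assumption, and the function names are ours.

```python
# Memory needed to hold a full n-qubit state vector on a classical machine.
# Assumption: one complex128 amplitude (16 bytes) per basis state.

BYTES_PER_AMPLITUDE = 16  # complex128: two 64-bit floats


def state_vector_bytes(num_qubits: int) -> int:
    """Return the bytes needed to store all 2**n amplitudes."""
    if num_qubits < 0:
        raise ValueError("num_qubits must be non-negative")
    return (2 ** num_qubits) * BYTES_PER_AMPLITUDE


def human_readable(num_bytes: int) -> str:
    """Format a byte count using binary (1024-based) units."""
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB"]
    value = float(num_bytes)
    for unit in units:
        if value < 1024 or unit == units[-1]:
            return f"{value:.0f} {unit}"
        value /= 1024


if __name__ == "__main__":
    for n in (30, 40, 50, 60):
        print(f"{n} qubits: {human_readable(state_vector_bytes(n))}")
```

Under this assumption, 30 qubits fit in 16 GiB, while 50 qubits need 16 PiB, and every additional qubit doubles the requirement. That is why the practical ceiling for brute-force classical simulation sits in the neighborhood Beck cites.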

Some of the early domains that are a good strategic fit for quantum are shown below:

We believe the most credible near-term thinking is not “quantum will transform every industry.” It is more likely that quantum will first make a difference in domains where the physics, math or data set sizes make classical approaches increasingly inefficient or cost prohibitive.

AI will make quantum more usable; it will not make quantum irrelevant

One of the more interesting themes from the ORNL conversation is the two-way relationship between AI and quantum. Beck described AI being used to design more efficient quantum circuits. Classical AI can help adapt quantum circuits to run more efficiently on quantum devices. There may also be future quantum uses for AI, such as accelerating convergence toward optimal neural network models, but the nearer-term value appears to be AI helping make quantum systems more practical.

The implication is that the software gap in quantum is huge. Quantum software is immature and can be credibly described as primitive – perhaps comparable to where mainframe programming was in the 1950s. The field needs a Python analog for quantum – i.e. a higher-level abstraction that allows developers to call quantum capabilities without programming at the qubit level.

In our view, AI becomes part of the quantum abstraction layer. It can help with circuit design, error correction, noise modeling, hardware mapping, code translation across architectures and workflow decomposition. It can also help domain experts use quantum without becoming quantum physicists.

Specifically, quantum’s adoption problem can’t be solved without hardware becoming more stable. But human readiness must follow as well. If only a narrow group of physicists can program the machines, quantum will remain constrained. If AI-assisted tools, libraries and middleware allow chemists, materials scientists, energy researchers and eventually enterprise developers to call quantum subroutines from familiar environments, the field can broaden and the TAM will expand.

The biggest constraints are error correction, I/O and software – not just qubit count

Popular thinking often focuses on qubit counts. That is understandable, but it’s insufficient in our view. Our research indicates three constraints deserve equal or greater attention:

- Error correction. Qubits are fragile. Noise can disturb quantum states, and small errors can ripple into meaningless results. Beck explained that current thinking may require hundreds of physical qubits to create one logical qubit. That ratio is a major barrier because it changes the economics and scaling path of quantum systems.

- Input/output. Beck offered one of the most useful analogies saying quantum systems operate in a vast Hilbert space, but the pipes going in and out are small. In other words, the computational possibility space is enormous, but accessing it, feeding it, extracting from it and integrating it into classical workflows remains constrained.

- Software abstraction. The lack of a stable, accessible software stack means quantum remains too close to the hardware. The industry needs libraries, middleware, workflow orchestration, developer tools, domain-specific templates and integration with existing HPC environments.

This is why we believe the next phase of innovation will not rely on qubit count alone. Rather the industry must combine hardware progress with usable software, error correction, workflow integration and ecosystem openness.
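A quick calculation shows why the physical-to-logical ratio matters as much as raw qubit count. The sketch below is simple arithmetic under stated assumptions: the ratios of 1,000, 500 and 100 physical qubits per logical qubit are placeholders we chose to span the “hundreds” Beck mentioned, not measured figures for any device.

```python
def logical_qubits(physical_qubits: int, physical_per_logical: int) -> int:
    """Logical qubits available from a given physical qubit budget."""
    if physical_qubits < 0 or physical_per_logical <= 0:
        raise ValueError("need physical_qubits >= 0 and physical_per_logical > 0")
    return physical_qubits // physical_per_logical


def physical_qubits_needed(logical_target: int, physical_per_logical: int) -> int:
    """Physical qubits required to reach a target number of logical qubits."""
    if logical_target < 0 or physical_per_logical <= 0:
        raise ValueError("need logical_target >= 0 and physical_per_logical > 0")
    return logical_target * physical_per_logical


if __name__ == "__main__":
    # Placeholder overhead ratios, not vendor data.
    for ratio in (1000, 500, 100):
        needed = physical_qubits_needed(100, ratio)
        print(f"{ratio}:1 overhead -> {needed:,} physical qubits for 100 logical")
```

At a 500:1 overhead, a machine with 10,000 physical qubits yields only 20 logical qubits. Cutting the ratio helps as much as adding hardware, which is why we weight error-correction progress so heavily.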

Multiple quantum architectures will coexist

Another key point from the World Quantum Day data is that there is no single quantum architecture that has clearly won. Superconducting qubits, ion traps, neutral atoms, spin-based systems, diamond-based systems and other approaches all have different tradeoffs. Beck described superconducting systems as having faster turnaround times, while ion traps tend to have higher fidelities. The choice depends on the workload, the fidelity requirement, the scale requirement and the practical deployment model.

This is a classic early-market pattern. The 1970s minicomputer era had many architectures, software stacks and ecosystems before standardization and abstraction layers helped the industry scale. Quantum may follow a similar path, with one important difference: we may never see one architecture dominate all use cases.

The better analogy may be modern accelerated computing. CPUs, GPUs, FPGAs, ASICs and AI accelerators coexist because workloads differ. Quantum may add another branch to that heterogeneous architecture. A materials science workload may favor one QPU type. A chemistry problem may favor another. A quantum networking application may evolve differently. A room-temperature or diamond-based approach could matter for deployment models even if it does not win the benchmark wars.

This notion underscores the importance of open ecosystems, portability and abstraction. Customers should not have to bet their entire quantum strategy on one physical implementation. The history of the computing industry does suggest de facto standards will emerge and dominate. Quantum may break that trend.
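One way to picture portability is a thin interface that application code targets, with the physical implementation chosen behind it. The sketch below is hypothetical throughout: `QPUBackend`, the two stub classes and the priority names are ours, and the stubs return placeholder counts and do not talk to real hardware. The only detail taken from the conversations is Beck’s observation that superconducting systems offer faster turnaround while ion traps tend toward higher fidelity.

```python
from typing import Protocol


class QPUBackend(Protocol):
    """Minimal interface a workflow needs from any QPU type (hypothetical)."""
    name: str

    def run(self, circuit: str, shots: int) -> dict[str, int]: ...


class SuperconductingStub:
    """Stand-in for a fast-turnaround device; returns placeholder counts."""
    name = "superconducting-stub"

    def run(self, circuit: str, shots: int) -> dict[str, int]:
        return {"0": shots}


class IonTrapStub:
    """Stand-in for a higher-fidelity device; returns placeholder counts."""
    name = "ion-trap-stub"

    def run(self, circuit: str, shots: int) -> dict[str, int]:
        return {"0": shots}


# Illustrative mapping from what the workload cares about to a backend.
BACKENDS_BY_PRIORITY: dict[str, QPUBackend] = {
    "turnaround": SuperconductingStub(),
    "fidelity": IonTrapStub(),
}


def choose_backend(priority: str) -> QPUBackend:
    """Select a backend by workload priority instead of by vendor or physics."""
    try:
        return BACKENDS_BY_PRIORITY[priority]
    except KeyError:
        raise ValueError(f"unknown priority: {priority!r}") from None


def execute(circuit: str, priority: str, shots: int = 100) -> dict[str, int]:
    """Run a circuit on whichever backend matches the stated priority."""
    backend = choose_backend(priority)
    counts = backend.run(circuit, shots)
    if sum(counts.values()) != shots:
        raise RuntimeError(f"{backend.name} returned an inconsistent shot count")
    return counts
```

Application code calls `execute("my_circuit", priority="fidelity")` and never names the hardware, so swapping or adding a QPU type changes one table entry and leaves the workflow untouched.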

National labs are becoming the proving ground for quantum-centric supercomputing

The global nature of the World Quantum Day conversations should not be lost on our community. Oak Ridge, Argonne, Lawrence Livermore, CSC in Finland, Pawsey in Australia and Leibniz in Germany all point to the same conclusions. Countries and regions view advanced computing infrastructure as strategic capability. Sovereignty is the new mandate and HPC, AI and quantum stacks are pivotal.

This is not just about national pride. Compute is becoming sovereign infrastructure. AI models, HPC capacity, quantum access, scientific workflows, cryptographic resilience and data governance are all converging. Nations want control over critical technologies because these technologies increasingly determine scientific leadership, defense capability, industrial competitiveness and energy innovation.

But the tone from World Quantum Day is more collaborative than adversarial. The labs appear to be competing and cooperating at the same time. That is healthy in our view. Quantum is hard enough that no single institution, vendor or country is likely to solve the entire stack alone.

The sovereign angle also affects enterprise buyers. Regulated industries, government agencies and critical infrastructure operators will increasingly care where quantum services run, who controls the stack, how cryptographic migration is governed and whether quantum-enabled workflows comply with national or regional edicts on data residency, privacy and other regulations.

Energy is both a use case and a forcing function

What we learned from the ORNL conversations adds an important dimension that should be included in the analysis – i.e. energy.

AI infrastructure has put power back at the center of the technology conversation. Data centers, AI factories, GPU clusters and national competitiveness are now tied directly to electricity availability. Quantum is part of that conversation in two ways:

- Quantum should eventually provide more energy-efficient ways to solve certain specialized problems. It is not a universal energy solution, and quantum systems themselves can require sophisticated cooling and infrastructure. But for some workloads, especially those involving quantum simulation, the alternative may be throwing ever-larger classical systems at problems that do not scale efficiently.

- Quantum, AI and HPC may help solve energy problems. Beck described a fusion energy project at ORNL that spans AI, quantum and HPC. The project is aimed at designing better molten salt materials to support tritium production and extraction in fusion reactors – a complicated chemistry and materials problem that could benefit from the combination of classical simulation, AI and quantum methods.

This is the kind of example that makes the quantum discussion intriguing. It is not about quantum changing everything. It is a discussion about how quantum may help solve a very specific materials and chemistry problem inside a much larger energy system.

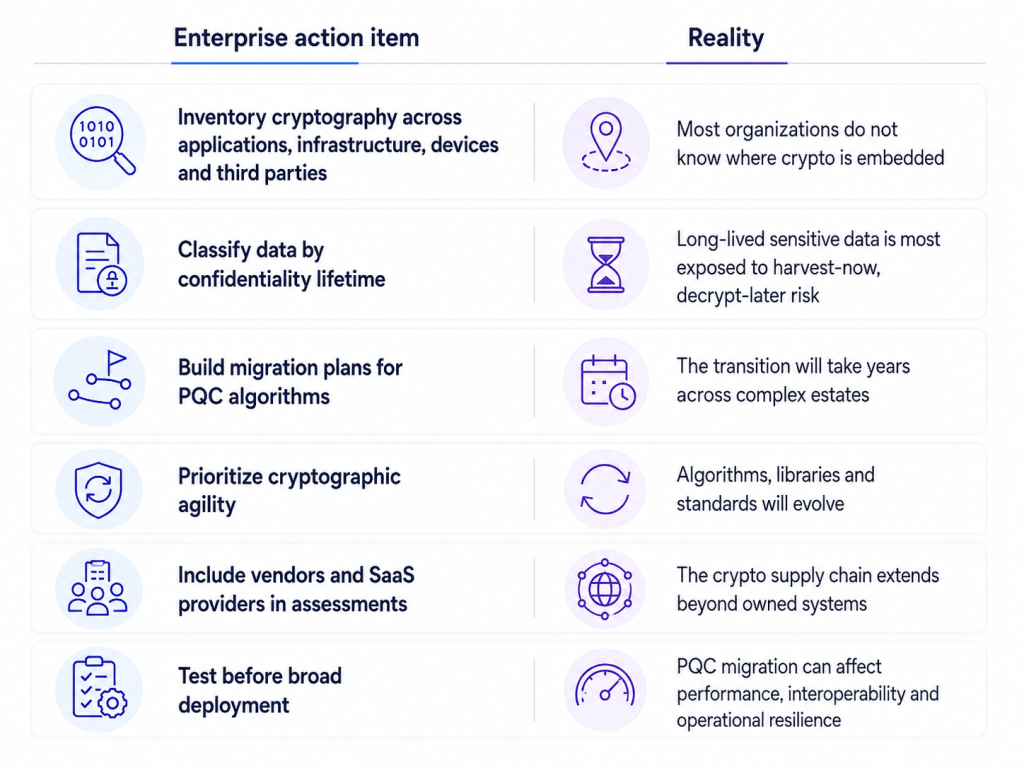

Post-quantum cryptography is the near-term enterprise mandate

For most enterprises, the most urgent quantum action is not building quantum applications. It is preparing for post-quantum cryptography.

The security issue is becoming more important each day. If sufficiently capable quantum computers can eventually break widely used public-key cryptographic systems, then encrypted data stolen today may become readable later. That is the “harvest now, decrypt later” problem discussed at World Quantum Day and other forums like RSAC.

This is why we believe post-quantum cryptography should be treated as an enterprise risk program, not a science project. The right model in our view is cryptographic agility. Organizations need to know where encryption lives, how long protected data must remain confidential, which systems depend on vulnerable algorithms, where certificates and keys are managed, and how third-party software, SaaS platforms, devices, APIs and storage systems will migrate.
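A cryptographic inventory can start as something very simple. The sketch below classifies assets by algorithm family and orders the vulnerable ones by how long their data must stay confidential. The asset fields and example systems are hypothetical. The vulnerable set lists common public-key families exposed to Shor’s algorithm, and the post-quantum set names the algorithms standardized in NIST FIPS 203, 204 and 205. Neither set is exhaustive, and anything unrecognized (including symmetric ciphers in this sketch) is flagged for manual review.

```python
from dataclasses import dataclass

# Public-key families generally considered vulnerable to Shor's algorithm.
# Not exhaustive; extend for your environment.
QUANTUM_VULNERABLE = {"RSA", "DH", "ECDH", "ECDSA", "DSA"}

# Algorithms standardized by NIST in FIPS 203, 204 and 205.
POST_QUANTUM = {"ML-KEM", "ML-DSA", "SLH-DSA"}


@dataclass(frozen=True)
class CryptoAsset:
    """One place where cryptography lives (fields are illustrative)."""
    system: str
    algorithm: str
    confidentiality_years: int  # how long the protected data must stay secret


def classify(asset: CryptoAsset) -> str:
    """Return 'migrate', 'ok' or 'review' for a single asset."""
    algorithm = asset.algorithm.upper()
    if algorithm in QUANTUM_VULNERABLE:
        return "migrate"
    if algorithm in POST_QUANTUM:
        return "ok"
    return "review"  # unknown or symmetric: needs a human decision


def migration_queue(assets: list[CryptoAsset]) -> list[CryptoAsset]:
    """Vulnerable assets, longest confidentiality requirement first."""
    vulnerable = [a for a in assets if classify(a) == "migrate"]
    return sorted(vulnerable, key=lambda a: a.confidentiality_years, reverse=True)


if __name__ == "__main__":
    inventory = [
        CryptoAsset("vpn-gateway", "RSA", 3),
        CryptoAsset("records-archive", "ECDH", 25),
        CryptoAsset("new-api", "ML-KEM", 10),
        CryptoAsset("disk-encryption", "AES-256", 7),
    ]
    for asset in migration_queue(inventory):
        print(asset.system, asset.algorithm, asset.confidentiality_years)
```

Sorting by confidentiality lifetime puts the assets most exposed to harvest now, decrypt later at the front of the queue. A real program would also track certificates, key stores and third-party dependencies.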

The graphic below describes this in more detail:

The key point is timing. Enterprises should not wait for broad quantum advantage to begin this work. By the time a cryptographically relevant quantum computer is available, the migration window may already be too short for high-risk environments.
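The timing argument is often expressed with a rule of thumb commonly attributed to Michele Mosca: if the years data must stay secret plus the years a migration takes exceed the years until a cryptographically relevant quantum computer (CRQC) exists, some data is exposed. The sketch below encodes that inequality. The inputs in the example are placeholders for illustration, not forecasts.

```python
def exposed_years(shelf_life_years: float,
                  migration_years: float,
                  years_to_crqc: float) -> float:
    """Years of exposure under the rule of thumb: shelf life + migration - CRQC."""
    if min(shelf_life_years, migration_years, years_to_crqc) < 0:
        raise ValueError("all inputs must be non-negative")
    return max(0.0, shelf_life_years + migration_years - years_to_crqc)


def is_at_risk(shelf_life_years: float,
               migration_years: float,
               years_to_crqc: float) -> bool:
    """True when data would still need protection after a CRQC arrives."""
    return exposed_years(shelf_life_years, migration_years, years_to_crqc) > 0


if __name__ == "__main__":
    # Placeholder inputs: 10-year shelf life, 5-year migration, CRQC in 12 years.
    print(exposed_years(10, 5, 12))
    print(is_at_risk(2, 3, 12))
```

With those placeholder inputs the first case leaves three years of exposure, while short-lived data with a fast migration path is not at risk. The exercise is useful mainly because it forces an organization to write down its own three numbers.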

What this means for vendors

The vendor opportunity is shifting. Quantum hardware remains essential, but hardware alone will not define the market. We believe the more valuable platforms will be those that package quantum into simpler, repeatable workflows.

That means vendors should focus on integration with HPC systems, cloud environments, AI tooling, security platforms and developer ecosystems. The market needs less focus on qubit counts and more emphasis on how a researcher, developer or CISO gets value without becoming a quantum physicist.

The market will reward vendors that meet customers where they are. Today, customers are overwhelmed with AI. They are modernizing infrastructure. They are dealing with data governance, power constraints, security exposure and software complexity. Quantum must attach to those priorities rather than asking customers to create a separate quantum agenda.

What this means for enterprise buyers

For enterprise technology leaders, the right angle in our view is active preparation without over-rotation.

Our research suggests most organizations do not need to think about building quantum applications in the near term. But they should develop quantum literacy, identify potential domain-specific use cases, track vendor ecosystems and begin PQC readiness work now.

CIOs and CTOs should be asking where quantum could eventually intersect with simulation, optimization, risk modeling, supply chain, R&D, drug discovery, materials science or AI infrastructure. CISOs should be asking where vulnerable cryptography lives and how long sensitive data must remain protected. Boards should be asking whether quantum is being monitored as both a strategic opportunity and a security risk.

The organizations that benefit first will be those that understand their workflows well enough to know where a quantum accelerator could make a difference.

What to watch through the end of the decade

By the end of the decade, we believe the industry should judge quantum progress against practical milestones. The most important indicators in our view will be whether:

- Error correction reduces the number of physical qubits required for each logical qubit;

- Hybrid workflows can move data efficiently between HPC, AI and QPU resources;

- Software abstraction improves enough for non-physicists to use quantum capabilities;

- Domain-specific applications in chemistry, materials, energy, pharma or logistics demonstrate repeatable advantage;

- PQC migration becomes a standard enterprise security program;

- Multiple quantum architectures can be orchestrated through common software layers.

That last point is important. The future may not be one quantum architecture to rule them all. It may be a portfolio of QPU types, each suited to different workloads and accessed through abstraction layers that hide much of the underlying complexity. But ultimately economics and applicability will determine the outcome and it’s just too early to predict.

Bottom line

Quantum computing is entering a new phase. It is still early. It is still technically constrained. Error correction, I/O, software abstraction, architecture fragmentation and workflow integration remain major barriers. But the tone of the market is changing, and it’s time to pay attention to quantum.

We believe quantum’s first major role will be as a specialized accelerator inside a hybrid HPC-AI-quantum architecture. The near-term enterprise action is post-quantum cryptography readiness. The medium-term opportunity is hybrid workflow experimentation in domains where classical scaling can’t compete. The long-term opportunity is a new compute fabric in which CPUs, GPUs and QPUs are orchestrated together to solve problems that no single architecture can handle alone.

The most successful players will be those that build the software layers, abstractions, workflows, security readiness and domain expertise required to make quantum useful before it becomes obvious.

Quantum will not replace the stack. It will become part of the stack. And that may be the most important realization the market can have right now.

Check out all the quantum-related discussions with major research labs around the world:

Watch the full interview with Tom Beck of ORNL:

Disclosure: Our coverage of World Quantum Day was made possible by Hewlett Packard Enterprise. The content of this research note was written independently and no third party had any editorial control or influence over the material.